MCAI Lex Vision: Comment of MindCast AI on Potential US DOJ | FTC Updated Guidance Regarding Collaborations Among Competitors

Docket ATR-2026-0001 | A Nash–Stigler Measurement Architecture for Dynamic Coordination Analysis

Public comment on Docket ATR-2026-0001. MindCast AI LLC is a predictive law and behavioral economics consultancy. MindCast AI produces institutional foresight simulations using Cognitive Digital Twin methodology grounded in Nash equilibrium game theory, Stigler information economics, and the integrated Chicago School of Law and Behavioral Economics. Recent validated foresight predictions include Super Bowl LX, DOJ GPU export enforcement corridors, NVIDIA NVQLink technical specifications, and Live Nation antitrust enforcement trajectory.

Executive Summary

Modern competitor collaborations operate as dynamic coordination systems rather than static contractual arrangements. Digital infrastructure, shared algorithms, data exchanges, and joint platforms alter incentive gradients, compress equilibrium formation speed, and reshape the architecture through which competition unfolds. Updated guidance should reflect that structural shift.

Prior guidance focused primarily on price, output, and market power effects at a point in time. Contemporary collaborations instead modify coordination capacity across markets over time. Guidance that incorporates coordination-cost analysis, incentive-gradient realignment, and principled inquiry sufficiency thresholds will promote both predictability and effective enforcement.

Three MindCast AI publications provide the analytical foundation for this comment. Together they support four modernization proposals: (1) coordination-capacity analysis that distinguishes collaborations building market-wide coordination infrastructure from those capturing it; (2) algorithmic-era incentive analysis structured around equilibrium acceleration, behavioral drift, and convergence velocity; (3) an equilibrium classification taxonomy for joint ventures that predicts competitive effects more reliably than subject-matter classification; and (4) falsifiable enforcement standards that specify termination conditions for both behavioral analysis and investigative sufficiency.

Three process safeguards round out the comment. Updated guidance should include adversarial information architecture to address the participation asymmetries that regulatory economics literature identifies in notice-and-comment proceedings, sunset provisions to prevent guidance from calcifying into institutional grammar, and a structural audit requirement to document the interest alignment behind proposed safe harbor boundaries.

I. Analytical Foundations

Three MindCast AI publications ground the proposals in this comment. Each publication is briefly summarized below; readers need not consult the originals.

A. The Dual Nash–Stigler Equilibrium Architecture

Standard enforcement analysis lacks principled stopping rules. Agencies investigate until resources run out, timelines expire, or political pressure mounts. None of those is a principled criterion for analytical completeness. The Dual Nash–Stigler Equilibrium Architecture addresses the gap through two Nobel Prize-winning frameworks operating as simultaneous runtime constraints.

Nash–Stigler Equilibria: A Dual-Termination Architecture for Antitrust Enforcement (MindCast AI, Jan 2026) — mindcast-ai.com/p/nash-stigler-equilibria

Nash equilibrium governs behavioral settlement. John Nash proved that every finite game has at least one equilibrium, possibly in mixed strategies: a strategy profile in which no player can improve by changing strategy alone. MindCast AI implements Nash equilibrium as the behavioral termination condition for enforcement analysis: the investigation reaches closure when no agent (the DOJ, merging firms, state enforcers, consumers) can improve its position by unilateral deviation. Settlement predictions specify who concedes, when, and why, enabling forward modeling of litigation, regulation, and market realignment.

Stigler equilibrium governs inquiry sufficiency. George Stigler established that information search should stop when marginal accuracy gains fall below marginal cost. MindCast AI implements Stigler equilibrium as the inquiry sufficiency condition: investigation stops when additional evidence adds less verified signal than it costs. Stigler logic prevents both under-investigation (premature closure before behavioral stability is confirmed) and over-investigation (spurious depth from access-driven over-search that degrades rather than improves analytical integrity).

Neither equilibrium overrides the other. Nash decides where the system settles. Stigler decides when the system stops. Both must fire before the system commits to a prediction.
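The dual-termination logic can be illustrated with a minimal Python sketch. It assumes the analyst already has, for each agent, an estimate of the gain from unilateral deviation, and, for each round of evidence gathering, an estimate of the marginal verified signal and its cost. The function names, inputs, and the zero tolerance are hypothetical illustrations, not part of the published architecture.

```python
from typing import Optional, Sequence


def stigler_stop_index(marginal_gains: Sequence[float],
                       marginal_cost: float) -> Optional[int]:
    """Index of the first evidence round whose marginal gain falls
    below its marginal cost, or None if inquiry should continue."""
    for i, gain in enumerate(marginal_gains):
        if gain < marginal_cost:
            return i
    return None


def nash_settled(deviation_gains: Sequence[float],
                 tolerance: float = 0.0) -> bool:
    """True when no agent gains more than `tolerance` by deviating alone."""
    return all(g <= tolerance for g in deviation_gains)


def ready_to_commit(deviation_gains: Sequence[float],
                    marginal_gains: Sequence[float],
                    marginal_cost: float) -> bool:
    """Both conditions must fire: behavior has settled (Nash) and
    further search no longer pays for itself (Stigler)."""
    stigler_fired = stigler_stop_index(marginal_gains, marginal_cost) is not None
    return nash_settled(deviation_gains) and stigler_fired
```

Under these assumptions, a prediction is withheld if either condition fails: a settled behavioral profile with evidence still paying for itself keeps the inquiry open, and exhausted evidence with a profitable deviation still available leaves the behavioral analysis incomplete.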

Each output carries explicit falsification contracts—specific conditions that would prove the prediction wrong. Enforcement analysis built on this architecture is auditable, reproducible, and scientifically credible. For competitor collaboration guidance, Nash–Stigler offers what the rule of reason cannot: a measurable analytical endpoint.

B. Chicago School of Law and Behavioral Economics

The Chicago School—Coase on transaction costs, Becker on incentive response, Posner on efficient liability allocation—correctly identified that incentive architecture is determinative in market analysis. The boundary conditions of that framework have shifted. Modern markets exhibit coordination failure, algorithmic incentive exploitation, and institutional learning failure that the original Chicago frameworks do not resolve. MindCast AI’s Chicago School Accelerated extends each pillar.

Chicago School Accelerated: The Integrated, Modernized Framework of Chicago Law and Behavioral Economics (MindCast AI, Dec 2025) — mindcast-ai.com/p/chicago-school-accelerated

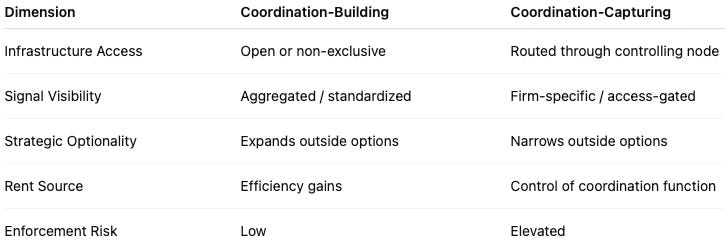

Coase extension: coordination costs are not transaction costs. Coase’s bargaining model assumed parties could find each other, understand the negotiation, and converge on agreement. Coordination was taken for granted. Behavioral economics reveals coordination costs as analytically distinct: markets can exhibit near-zero transaction costs while still failing to coordinate when shared focal points are absent, trust is degraded, or information routing is captured. Competitor collaborations can be either coordination-building (creating shared infrastructure that lowers market-wide coordination costs) or coordination-capturing (routing market signals through a controlling node that extracts coordination rents). Updated guidance must distinguish these categories.

Becker extension: incentive exploitation under degraded coordination. When coordination architecture weakens, the payoff gradient shifts. Competing on price and quality yields diminishing returns; competing on opacity, delay, and platform control yields increasing returns. Rational actors will attack coordination infrastructure when returns from fragmentation exceed returns from efficiency competition. Algorithmic collaborations that appear procompetitive at formation can shift the payoff matrix over time—making exploitation the dominant strategy without any explicit agreement. Static analysis at formation misses the dynamic.

Posner extension: why institutional feedback stalls. Posner argued that common law evolves toward efficiency because inefficient rules generate more litigation, courts hear more challenges, and doctrine improves. That mechanism requires a “kind” learning environment with timely, interpretable feedback. Modern platform and algorithmic markets are “wicked” learning environments: harm is delayed, dispersed across doctrinal domains, and strategically fragmented. Courts never observe the full causal loop. Guidance that assumes self-correction through enforcement will misread why intervention is structurally necessary.

C. The Stigler Equilibrium and Enforcement Capture

Stigler’s 1971 theory of regulatory capture is not an argument against enforcement. Stigler identified the structural conditions under which enforcement institutions face the greatest pressure: a single decisive chokepoint, concentrated beneficiaries, diffuse victims, and organizational asymmetry between them. When those conditions hold, structural economics predicts that the most motivated and well-resourced parties will shape institutional outputs regardless of personnel quality or institutional intent. The prescription Stigler’s framework implies is institutional design that distributes that pressure across multiple venues rather than concentrating it.

The Stigler Equilibrium: Regulatory Capture and the Structure of Free Markets (MindCast AI, Jan 2026) — mindcast-ai.com/p/stigler-equilibrium

MindCast AI extends Stigler’s mechanism from regulatory content to enforcement structure. Concentrated beneficiaries invest not just in favorable rules but in favorable enforcement institutions: jurisdictional monopolies, behavioral remedies that evaporate, long timelines that allow incumbents to consolidate advantage before review concludes. The Enforcement Capture Equilibrium (ECE) names that structural outcome and implies the solution: institutional competition across multiple enforcement venues that distributes organizational pressure and reduces the expected return on any single-venue influence strategy.

Three operationalized metrics track ECE dynamics across enforcement contexts: Degree of Capture measures how closely agency outputs track concentrated-beneficiary preferences versus diffuse-victim interests; Grammar Persistence Index measures whether institutional behavior survives leadership turnover; and Update Elasticity measures leadership capacity to alter information flows and remedy structures within one enforcement cycle. These metrics inform the process safeguards proposed in Section VII.

Contact mcai@mindcast-ai.com to partner with us on Law and Behavioral Economics foresight simulations. Prior public comments: Comment on Regulatory Reform on Artificial Intelligence, A Notice by the Office of Science and Technology Policy, Docket ID OSTP-TECH-2025-0067; Restoring the Stage, A DOJ Blueprint for Fair Competition and Cultural Integrity Versus Live Nation, Docket US DOJ ATR-2025-0002-0023.

II. Structural Gap in Prior Collaboration Guidance

The 2000 Collaboration Guidelines were designed for an industrial economy in which joint ventures and information exchanges evolved slowly and operated within clearly defined product markets. Contemporary collaborations increasingly function as digital coordination platforms that evolve continuously and influence strategic interaction beyond traditional market boundaries.

Shared data environments, algorithmic pricing tools, and joint technology infrastructures alter how rivals observe, predict, and respond to one another. Such collaborations can reduce transaction costs and enable innovation. They can also centralize information routing, narrow strategic optionality, and stabilize outcomes that resemble coordination rather than competition.

Two structural gaps define the inadequacy of prior guidance for modern markets.

Gap 1: No coordination-architecture analysis. The 2000 Guidelines asked whether a collaboration restricted competition. Updated guidance must also ask whether a collaboration restructures the architecture through which competition operates. Coordination-capturing collaborations restrict competition by degrading the shared infrastructure markets need to function—a harm that standard price-output analysis cannot detect.

Gap 2: No principled analytical endpoint. Rule-of-reason balancing under the 2000 Guidelines lacked explicit termination conditions: no standard for when behavioral analysis is complete, no threshold for when evidence is sufficient, no falsification conditions that would distinguish procompetitive integration from coordination capture. Guidance without termination conditions produces unpredictable enforcement and unmanageable compliance costs.

The frameworks proposed in this comment are analytical overlays compatible with existing rule-of-reason doctrine. Coordination-capacity analysis, equilibrium classification, convergence velocity measurement, and inquiry sufficiency standards do not replace the rule-of-reason framework. They supply structured inputs to rule-of-reason analysis at precisely the stages where prior guidance was silent: identifying what coordination architecture effects to measure, classifying which equilibrium type a joint venture is likely to reach, specifying what evidence suffices to distinguish procompetitive integration from coordination capture, and defining when behavioral analysis is complete. Agencies can adopt any of these tools independently and incrementally, integrating them into existing analytical practice without restructuring the doctrinal framework that governs competitor collaboration review.

III. Coordination Capacity as an Analytical Variable

Transaction cost economics examines whether collaboration reduces bargaining and contracting costs. Modern markets require an additional inquiry: whether collaboration increases or decreases market-wide coordination capacity.

Coordination capacity refers to the ability of independent rivals to adapt, innovate, and compete without relying on a shared control node. A collaboration that builds open standards, expands interoperability, or reduces search costs across the market increases coordination capacity. A collaboration that centralizes routing, limits visibility to participants, or conditions access on reciprocal data exchange captures coordination capacity.

A. Factors for Staff Analysis

Updated guidance should instruct staff to evaluate coordination capacity effects along three dimensions:

• Whether the collaboration expands independent rival adaptability outside the collaboration or narrows it.

• Whether strategic optionality meaningfully declines for non-participants—reducing their ability to reach customers, access data, or participate in market-wide signaling.

• Whether market signaling becomes concentrated through a shared infrastructure layer that the collaboration controls.

Joint licensing arrangements, shared data pools, and governance structures for listing or trading platforms present recurring contexts for coordination-capacity analysis. Clear articulation of these factors would enhance predictability while aligning enforcement with the structural harms modern collaborations can produce.

B. The Coordination-Capture Distinction

Coordination capture occurs when a collaboration routes market signals through a controlling node that extracts rents from the coordination function itself, independent of competitive performance. Standard foreclosure analysis—which asks whether a specific competitive opportunity has been foreclosed—will miss coordination-capture harm because the harm is architectural rather than transactional.

Three diagnostic questions identify coordination capture:

1. Does the collaboration alter access to coordination infrastructure (routing, visibility, data, matching) rather than merely restricting competition for the collaborating parties’ products?

2. Does the arrangement extract rents from the coordination function itself—meaning the controlling party benefits from being the node through which coordination occurs, independent of competitive performance?

3. Does the arrangement reduce the ability of market participants outside the collaboration to coordinate through alternative channels, raising coordination costs market-wide?

Arrangements satisfying all three criteria represent coordination capture and warrant analysis beyond standard foreclosure doctrine.

IV. Algorithmic Pricing and Incentive-Gradient Realignment

Algorithmic pricing and data-driven collaboration present the most urgent area for analytical clarification. Shared algorithmic infrastructure does more than transmit price information; it realigns payoff gradients among rivals. Updated guidance should focus on incentive-gradient realignment rather than solely on traditional information-exchange categories.

A. Equilibrium Acceleration

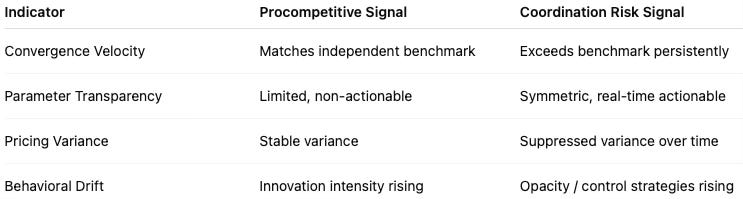

Algorithms compress feedback loops. When competitors share algorithmic pricing infrastructure—common pricing engines, shared data inputs, interoperable parameter feeds—the feedback cycle between one firm’s price decision and its competitors’ responses shrinks from weeks or months to milliseconds. Price convergence that would require extended parallel conduct under traditional analysis occurs automatically and continuously.

Compressed feedback loops accelerate equilibrium formation. Accelerated equilibrium formation produces one of two outcomes: enhanced efficiency (prices converge to competitive equilibrium faster, benefiting consumers) or entrenched coordination (prices converge to supracompetitive equilibrium and stabilize there, benefiting network participants). Standard analysis cannot distinguish these outcomes because it examines market outcomes at a point in time rather than measuring the velocity and direction of equilibrium convergence.

Updated guidance should incorporate a convergence velocity analysis: if price convergence across algorithmic collaboration participants occurs faster than independent optimization under identical market conditions would predict, and prices converge above competitive equilibrium, the collaboration has produced coordination—regardless of whether any explicit agreement occurred.
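One way the convergence velocity test might be operationalized is sketched below in Python. The comment does not define a specific metric, so the sketch assumes one: velocity is the average per-period decline in cross-firm price dispersion (population standard deviation), and the independent optimization benchmark is supplied as a simulated price path under the same market conditions. All names and the choice of dispersion measure are hypothetical.

```python
from statistics import mean, pstdev
from typing import Sequence

PricePath = Sequence[Sequence[float]]  # one list of firm prices per period


def convergence_velocity(price_path: PricePath) -> float:
    """Average per-period decline in cross-firm price dispersion
    (an assumed metric, not one defined in the comment)."""
    if len(price_path) < 2:
        raise ValueError("need at least two periods")
    dispersion = [pstdev(period) for period in price_path]
    return (dispersion[0] - dispersion[-1]) / (len(price_path) - 1)


def coordination_flag(observed: PricePath,
                      benchmark: PricePath,
                      competitive_price: float) -> bool:
    """Flag only when both prongs hold: convergence is faster than the
    independent optimization benchmark AND prices settle above the
    competitive level."""
    faster = convergence_velocity(observed) > convergence_velocity(benchmark)
    above = mean(observed[-1]) > competitive_price
    return faster and above


# Illustrative two-firm paths over three periods.
observed = [[10, 14], [12, 12.5], [12, 12]]
benchmark = [[10, 14], [10.5, 13.5], [11, 13]]
flagged = coordination_flag(observed, benchmark, competitive_price=11.0)
```

The two-prong structure matters: fast convergence alone is consistent with the efficiency outcome, so the sketch flags a collaboration only when speed and direction both point toward entrenched coordination.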

B. Behavioral Drift and Dynamic Risk

Static market structure analysis at the moment of formation cannot detect when an initially procompetitive collaboration shifts toward exploitation. Behavioral drift risk arises when the rational strategy for participants shifts from competing on price or quality to optimizing within a shared infrastructure that dampens rivalry.

Staff analysis should consider three dynamic indicators:

• Whether algorithmic tools increase the speed at which parallel conduct converges relative to independent optimization benchmarks.

• Whether parameter transparency or shared training data reduce uncertainty in ways that suppress strategic experimentation.

• Whether collaboration alters the relative payoff of innovation versus opacity or parameter manipulation over time.

Updated guidance can address behavioral drift risk without presuming illegality by articulating measurable structural indicators and specifying the conditions under which drift risk crosses the threshold for enforcement action.

C. Proposed Guidance Elements

Guidance on algorithmic pricing collaborations should provide:

1. A safe harbor for collaborations that demonstrably accelerate convergence toward competitive equilibrium, with periodic re-examination to verify that behavioral drift has not shifted the equilibrium direction.

2. A rebuttable presumption of coordination risk when convergence velocity across participants exceeds the independent optimization benchmark by a specified threshold, with the burden on participants to demonstrate consumer benefit sufficient to offset the coordination risk.

3. A structural disclosure requirement for algorithmic collaborations above a market share threshold, requiring participants to document parameter inputs, update frequencies, and convergence metrics sufficient to enable Stigler-certified inquiry.

V. Joint Ventures and Equilibrium Classification

Current guidance classifies joint ventures primarily by subject matter (research, production, marketing) and by structural indicators (market share, integration level). Neither classification reliably predicts competitive effects. Subject-matter classification tells agencies what a joint venture does; equilibrium classification tells agencies what a joint venture will do to the market over time.

A. Four Equilibrium Types

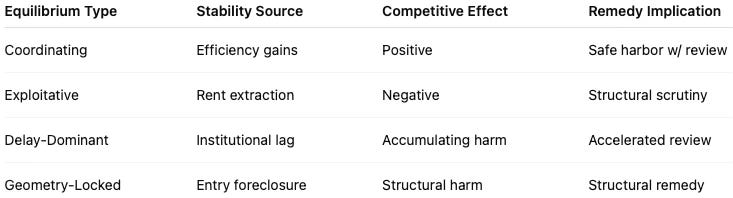

MindCast AI proposes classifying proposed joint ventures by equilibrium type across four categories:

Coordinating Equilibrium. The joint venture reaches Nash equilibrium through genuine value creation: participants converge on shared operational efficiency, cost reduction, or innovation investment, and no participant can improve by exiting. Competitive effects are positive. Safe harbor treatment is appropriate with disclosure requirements.

Exploitative Equilibrium. The joint venture reaches Nash equilibrium through market control rather than value creation: participants converge on rent extraction, coordination-architecture capture, or information asymmetry exploitation. Behavioral drift from coordinating to exploitative equilibrium is the characteristic pattern of aging joint ventures in concentrated markets. Safe harbor treatment requires periodic re-examination with drift indicators.

Delay-Dominant Equilibrium. The joint venture reaches equilibrium through temporal arbitrage: participants jointly exploit the gap between the harm they cause and the institutional capacity to respond. Behavioral remedies, multi-forum litigation, and remedy structures that are unenforceable in practice produce delay-dominant equilibria. Competitive harm accumulates during the gap.

Geometry-Locked Equilibrium. The joint venture reaches equilibrium by altering the strategic geometry of the market—foreclosing entry paths, capturing distribution infrastructure, establishing switching costs—such that market structure itself prevents competitive recovery. Standard behavioral remedies fail for geometry-locked equilibria because the harm is structural.

B. Implications for Guidance

Updated guidance should require agencies to assess equilibrium type, not just subject matter. A production joint venture can be coordinating or exploitative depending on whether the operational integration generates real efficiency gains or primarily routes market access through a shared chokepoint. Subject-matter classification cannot make that distinction.

Joint ventures that exhibit behavioral drift toward exploitative equilibrium—declining alignment between stated rationale and actual payoff structure, rising convergence velocity, increasing capacity to fragment competitive signals for non-participants—warrant re-examination regardless of how they were classified at formation.

VI. Behavioral Termination Conditions and Inquiry Sufficiency

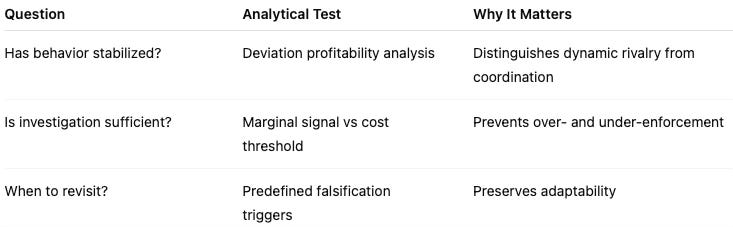

Predictability requires principled stopping rules for enforcement analysis. Rule-of-reason inquiries under the 2000 Guidelines lacked explicit standards for determining when analysis is complete. Updated guidance should specify both a behavioral termination condition and an inquiry sufficiency standard.

A. Behavioral Termination: Deviation Profitability Analysis

Behavioral equilibrium analysis asks whether unilateral deviation from the collaborative arrangement would be profitable under observed conditions. If deviation remains meaningfully attractive, the collaboration may reflect dynamic competition. If deviation is persistently unprofitable due to structural features of the collaboration, stabilization risk increases.

Staff should evaluate:

• Deviation profitability under realistic counterfactuals, including the availability of alternative infrastructure outside the collaboration.

• The durability of outcome stability absent explicit enforcement or monitoring mechanisms internal to the collaboration.

• Whether observed stability depends on information asymmetry or access restrictions embedded in the collaboration structure rather than genuine efficiency advantages.

Explicit articulation of a behavioral termination condition would enhance transparency for businesses and reduce uncertainty regarding the analytical path agencies will follow. A collaboration that cannot produce Nash-certified stability—where at least one major participant can profitably deviate—is still in dynamic competition and should receive correspondingly different treatment.
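Deviation profitability can be illustrated with a small two-participant payoff table. The Python sketch below assumes staff can estimate each participant's payoff under every strategy pair; the table layout, the `materiality` threshold, and the example numbers are hypothetical and serve only to show the mechanics.

```python
from typing import Sequence, Tuple

# payoffs[i][j] = (payoff to A, payoff to B) when A plays i and B plays j
Payoffs = Sequence[Sequence[Tuple[float, float]]]


def deviation_gains(payoffs: Payoffs,
                    profile: Tuple[int, int]) -> Tuple[float, float]:
    """Best gain each participant can obtain by changing only its own
    strategy while the other keeps playing the observed profile."""
    i_star, j_star = profile
    base_a, base_b = payoffs[i_star][j_star]
    best_a = max(payoffs[i][j_star][0] for i in range(len(payoffs)))
    best_b = max(payoffs[i_star][j][1] for j in range(len(payoffs[i_star])))
    return best_a - base_a, best_b - base_b


def still_in_dynamic_competition(payoffs: Payoffs,
                                 profile: Tuple[int, int],
                                 materiality: float = 0.0) -> bool:
    """True if at least one participant can profitably deviate."""
    return any(g > materiality for g in deviation_gains(payoffs, profile))


# Strategy 0 = hold the collaborative price, 1 = undercut (illustrative).
example = [[(10, 10), (2, 12)],
           [(12, 2), (5, 5)]]
```

In this example, holding the collaborative price at profile (0, 0) leaves each participant a gain of 2 from undercutting, so the arrangement is not Nash-stable; at profile (1, 1) no unilateral deviation pays. A realistic analysis would replace the table with counterfactual estimates, including the alternative infrastructure available outside the collaboration.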

B. Inquiry Sufficiency: Evidentiary Thresholds

Effective enforcement also requires clarity regarding investigative sufficiency. Marginal information gathering yields diminishing analytical returns. Agencies promote legitimacy and efficiency when guidance specifies the types of structural evidence required to assess collaboration risk.

Inquiry sufficiency standards should define:

• The minimum data necessary to evaluate coordination-capacity effects, including convergence velocity metrics for algorithmic collaborations.

• The counterfactual modeling expected before condemning or approving novel collaborations, including independent optimization benchmarks.

• Observable structural markers that distinguish procompetitive integration from coordination capture under each collaboration category.

Clear evidentiary thresholds benefit businesses seeking compliance certainty and agencies seeking efficient enforcement. Safe harbors should incorporate withdrawal triggers tied to measurable structural changes, preserving flexibility while providing forward-looking certainty.

C. Falsification Architecture

Updated guidance should include explicit conditions under which analytical conclusions would be revisited. Safe harbor determinations should specify observable events that would prompt renewed scrutiny—such as convergence velocity above the independent optimization benchmark for more than four consecutive quarters, material exclusion of non-participants from previously accessible coordination infrastructure, or reduction in independent pricing variance beyond modeled expectations.
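The first of these triggers is mechanical enough to sketch in Python. The sketch assumes quarterly series of observed convergence velocity and of the independent optimization benchmark are available and aligned by quarter; the function names are hypothetical, and the four-quarter limit is taken from the example above.

```python
from typing import Sequence


def longest_run_above(observed: Sequence[float],
                      benchmark: Sequence[float]) -> int:
    """Length of the longest streak of consecutive quarters in which
    observed velocity exceeds the benchmark."""
    longest = current = 0
    for obs, bench in zip(observed, benchmark):
        current = current + 1 if obs > bench else 0
        longest = max(longest, current)
    return longest


def velocity_trigger(observed: Sequence[float],
                     benchmark: Sequence[float],
                     max_quarters: int = 4) -> bool:
    """True when the streak runs for MORE than `max_quarters` quarters,
    which would prompt renewed scrutiny of the safe harbor."""
    return longest_run_above(observed, benchmark) > max_quarters
```

A streak of exactly four quarters does not fire the trigger; a fifth consecutive quarter does. Any quarter at or below the benchmark resets the count.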

Falsification architecture aligns certainty with accountability. Businesses gain clarity regarding permissible conduct. Agencies retain the ability to adapt when market structure evolves. The absence of falsification conditions in prior guidance is one reason that guidance calcifies into precedent regardless of whether market conditions have changed.

VII. Process Safeguards for Well-Structured Guidance

Updated guidance will be shaped by the comment process generating it. Regulatory economics literature consistently documents that notice-and-comment proceedings reflect participation asymmetries: parties with high per-participant stakes, dedicated legal and economic resources, and direct access to the market-specific data agencies need to write guidance participate more intensively than parties whose individual stakes are smaller. Large platform operators, algorithmic pricing network participants, and established joint venture parties typically fall in the first category; small competitors, consumers, workers, and new entrants typically fall in the second.

Stigler’s organizational asymmetry framework, along with subsequent work by Peltzman and Becker, treats this participation pattern as a predictable consequence of concentrated beneficiaries facing diffuse victims. Three structural safeguards address the asymmetry without constraining the agencies’ substantive analytical authority.

A. Adversarial Information Architecture

Agencies should commission independent economic analysis of competitor collaboration harm patterns before finalizing guidance, rather than relying exclusively on party-submitted evidence. Independence requires not merely financial independence but analytical independence from the interpretive frameworks that repeat players have established in prior proceedings.

For algorithmic pricing and data-sharing guidance specifically, agencies should require parties seeking safe harbor eligibility to submit parameter-sharing documentation, update frequencies, and convergence velocity metrics under a structured disclosure framework. Structured disclosure addresses the information asymmetry that regulatory economics literature identifies as endemic to notice-and-comment proceedings and expands what agencies can see.

B. Sunset Provisions

Updated guidance should include automatic sunset provisions requiring affirmative re-examination within five years. Long-lived guidance without reexamination triggers becomes institutional grammar: practitioners build compliance structures around safe harbor language, courts defer to agency interpretation, and the original analytical assumptions harden into precedent regardless of whether those assumptions remain accurate.

Algorithmic and data-sharing markets change faster than five-year cycles. Guidance written for 2026 competitive conditions may be structurally obsolete by 2031. A sunset requirement does not mandate withdrawal—it requires agencies to affirmatively determine whether guidance remains adequate, with a structured comment process that reopens safe harbor boundaries to challenge.

C. Structural Audit of Safe Harbor Proposals

For each proposed safe harbor, agencies should document: (1) which commenter positions the safe harbor boundary reflects; (2) what financial interest each supporting commenter holds in guidance at that boundary; and (3) whether the public-interest rationale offered for the boundary is advanced by parties with direct financial stakes in it or by independent parties.

Safe harbors where the financial beneficiary and the public-interest advocate are the same party warrant heightened analytical scrutiny before finalization. Safe harbors supported by genuinely independent public-interest advocacy are stronger candidates for adoption. The audit imposes no constraint on guidance content—it creates a process record of the interest alignment behind each safe harbor proposal.

VIII. Responses to Specific Inquiry Topics

A. Joint Licensing Arrangements

Joint licensing arrangements should be evaluated under the coordination-capacity framework. A joint licensing arrangement that creates open standards, reduces search costs across the market, or enables interoperability that benefits non-participants is coordination-building and warrants safe harbor treatment. A joint licensing arrangement that routes licensing through a controlling node, conditions access on reciprocal data disclosure, or limits the ability of non-participants to develop independent alternatives is coordination-capturing and warrants heightened scrutiny under the framework proposed in Section III.

B. Conditional Dealing with Competitors

Conditional dealing with competitors becomes analytically distinct from ordinary exclusive dealing when it degrades coordination infrastructure rather than foreclosing a specific competitive opportunity. Standard foreclosure analysis asks whether a defined competitive opportunity has been blocked. Conditional dealing analysis under the coordination-capture framework asks whether the condition routes market access through a controlling node that extracts rents from the coordination function.

Updated guidance should supply three diagnostic questions for conditional dealing with competitors, tracking the framework in Section III: whether the condition alters access to coordination infrastructure; whether it extracts rents from the coordination function itself; and whether it raises coordination costs for non-participants by reducing available alternative channels.

C. Information and Data Sharing

Updated guidance should distinguish three analytically distinct categories of information sharing that current doctrine collapses. Coordination-building transparency (standardized technical interfaces, common data formats, shared performance benchmarks) lowers market-wide coordination costs and warrants strong safe harbor protection. Stabilization-enabling signaling (prospective price announcements, capacity disclosures, demand forecasts shared among rivals) enables supracompetitive price stabilization without explicit agreement. Coordination-capturing infrastructure (routing market signals through a controlling intermediary) extracts rents from the coordination function itself.

The distinction turns on three structural features: timing (stabilization signals are prospective; coordination-building data is contemporaneous or retrospective), granularity (stabilization signals are firm-specific and actionable; coordination-building data is aggregated or anonymized), and reciprocity (stabilization networks require symmetric disclosure; coordination-building standards can be asymmetric).
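The three features can be read as a simple screening rule, sketched below in Python. The sketch is an assumption-laden simplification: it reduces each feature to a yes/no value, covers only the contrast between stabilization-enabling signaling and coordination-building transparency, and routes anything ambiguous to case-specific review. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exchange:
    prospective: bool    # timing: forward-looking rather than current or past
    firm_specific: bool  # granularity: attributable to a firm, not aggregated
    symmetric: bool      # reciprocity: every participant must disclose


def classify(exchange: Exchange) -> str:
    """Screen an information exchange using the three structural features."""
    if exchange.prospective and exchange.firm_specific and exchange.symmetric:
        return "stabilization-enabling signaling"
    # Reciprocity is not tested here because coordination-building
    # standards can be symmetric or asymmetric.
    if not exchange.prospective and not exchange.firm_specific:
        return "coordination-building transparency"
    return "mixed: needs case-specific review"
```

For example, rivals exchanging their own planned prices under a mutual disclosure rule would screen as stabilization-enabling, while a retrospective, anonymized benchmark would screen as coordination-building. Coordination-capturing infrastructure is outside this screen; it is identified by the diagnostic questions in Section III.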

D. Algorithmic Pricing

Responses to the agencies’ algorithmic pricing inquiry are developed fully in Section IV. Updated guidance should focus on convergence velocity analysis, behavioral drift indicators, and incentive-gradient realignment rather than solely on traditional information-exchange categories. The proposed safe harbor, rebuttable presumption, and structural disclosure requirement in Section IV(C) are incorporated here as the direct response to the agencies’ inquiry.

E. Labor Collaborations

Labor market collaborations are most harmful when they compress the bargaining geometry available to workers, meaning the set of credible outside options workers can invoke in wage and contract negotiations. No-poach agreements reduce bargaining geometry directly by eliminating the credible outside option that competing employers represent. Information-sharing arrangements among employers allow coordination of wage offers without explicit agreement, compressing the offer distribution in ways that standard price analysis in product markets would identify as stabilization-enabling signaling.

Updated guidance on labor collaborations should require that claimed procompetitive benefits (training investments, workforce development, retention efficiencies) flow symmetrically to both sides of the labor relationship. When claimed benefits accrue exclusively to the employer side while the costs of coordination fall exclusively on workers, the collaboration fails the symmetric benefit requirement and warrants heightened scrutiny.

IX. Falsifiable Predictions

MindCast AI registers the following forward predictions. Falsifiable predictions transform analytical claims into scientific claims subject to empirical test. Agencies that adopt the proposed measurement architecture can validate or refute these predictions and update guidance accordingly. If the predictions fail, the framework requires revision; that constraint is intentional.

A. Algorithmic Collaboration Predictions

Prediction 1. Algorithmic pricing collaborations in concentrated markets that exhibit convergence velocity above the independent optimization benchmark will produce sustained price elevation within 24 months of formation, regardless of whether any explicit agreement exists.

Falsifier: If three or more algorithmic collaborations meeting the convergence velocity threshold produce prices at or below the competitive benchmark for 24 months post-formation, Prediction 1 fails and the convergence velocity test requires recalibration.
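The falsifier for Prediction 1 can be stated as a mechanical check. The sketch below is one possible reading, not a specification: the record layout, the field names, and the use of a single scalar competitive benchmark price per collaboration are all assumptions introduced for illustration. Only the 24-month window and the three-case threshold come from the text above.

```python
from dataclasses import dataclass
from typing import Sequence

OBSERVATION_MONTHS = 24  # post-formation window stated in Prediction 1
FALSIFYING_CASES = 3     # "three or more" collaborations, per the falsifier


@dataclass(frozen=True)
class Collaboration:
    """Hypothetical observation record for one algorithmic collaboration."""
    convergence_velocity: float            # measured velocity (units are an assumption)
    independent_benchmark_velocity: float  # independent optimization benchmark
    monthly_prices: Sequence[float]        # post-formation prices, one per month
    competitive_price: float               # competitive benchmark (assumed constant)


def is_counterexample(c: Collaboration) -> bool:
    """True if c meets the velocity threshold yet never prices above the benchmark."""
    if c.convergence_velocity <= c.independent_benchmark_velocity:
        return False  # threshold not met, so the prediction does not apply
    window = c.monthly_prices[:OBSERVATION_MONTHS]
    if len(window) < OBSERVATION_MONTHS:
        return False  # observation period incomplete
    return all(price <= c.competitive_price for price in window)


def prediction_1_falsified(collaborations: Sequence[Collaboration]) -> bool:
    """Apply the falsifier: enough qualifying collaborations with no price elevation."""
    return sum(is_counterexample(c) for c in collaborations) >= FALSIFYING_CASES
```

In practice the competitive benchmark would likely vary by month and market, and the velocity comparison would carry measurement error; both are flattened here to keep the logic of the falsifier visible.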

Prediction 2. Algorithmic collaborations that begin as coordinating equilibria will exhibit measurable behavioral drift toward exploitative equilibrium within five years when market concentration increases or coordination infrastructure alternatives are reduced.

Falsifier: If behavioral drift indicators remain stable or decline in high-concentration markets with reduced infrastructure alternatives over a five-year observation period, Prediction 2 fails and the Becker extension requires revision for algorithmic markets.

B. Guidance Process Predictions

Prediction 3. Safe harbor boundaries in updated guidance will, absent structural process safeguards, align more closely with the positions of the largest horizontal collaboration participants (by comment sophistication and market capitalization) than with independent economic analysis of consumer welfare effects.

Falsifier: If a structural audit of finalized guidance reveals that safe harbor boundaries reflect positions advanced primarily by parties with no direct financial stake in those boundaries, Prediction 3 fails.

Prediction 4. Guidance finalized without sunset provisions will exhibit high doctrinal persistence within ten years: courts, practitioners, and future agency staff will treat guidance language as binding precedent regardless of changed market conditions, reducing effective enforcement flexibility.

Falsifier: If guidance without sunset provisions is affirmatively re-examined and substantially revised within ten years through agency initiative rather than enforcement crisis, Prediction 4 fails.

Conclusion

Competitor collaborations increasingly shape the infrastructure through which competition operates. Updated guidance should reflect three advances: incorporation of coordination-capacity analysis that distinguishes collaborations building market-wide infrastructure from those capturing it; structured evaluation of algorithmic incentive realignment focused on convergence velocity and behavioral drift; and explicit articulation of behavioral and evidentiary termination conditions that specify when analysis is complete.

Clear articulation of these principles will promote innovation, strengthen enforcement legitimacy, and provide the predictability both agencies and the business community seek. Modern collaboration guidance should recognize that competition depends not only on price and output effects but also on the architecture of coordination that markets rely upon.

Three process safeguards will help ensure the guidance reflects broad market welfare rather than the participation asymmetries that are well documented in the regulatory economics literature: adversarial information architecture that expands the evidence base in comment proceedings; sunset provisions that prevent guidance from calcifying into institutional grammar; and a structural audit requirement that creates a process record of the interest alignment behind safe harbor proposals.