MCAI Market Vision: Trust as AI Infrastructure

How Economists Explain the Invisible Foundation of Today’s AI Market

Prologue: Cognitive Digital Twins in Action

This vision statement demonstrates how MindCast AI’s simulations translate volatile markets into structured foresight by modeling Cognitive Digital Twins (CDTs) of leading economists. By bringing Keynes, Arrow, Coase, Shiller, Schumpeter, and Iansiti into dialogue, it shows how the hidden force of trust operates as infrastructure for the AI economy. Each economist, recreated as a CDT, applies their framework to present challenges: data center arms races, high failure rates, fragile narratives, and the tension between incremental and pioneering innovation. This reflects MindCast AI’s core capability: transforming historical insight into forward-looking guidance for investors, entrepreneurs, and policymakers navigating uncertain technological landscapes. See also: Socrates on AI, A Vision Statement for Intelligence Worth Finding (Aug 2025); Marcus Aurelius on AI, Meditations on Success, Valuation, and Market Discipline (Aug 2025); and Realpolitik for AI, How Bismarck, Kissinger, and Three Other Master Strategists Would Navigate Today’s Technology Markets (Aug 2025).

I. Why The Dialogue Matters

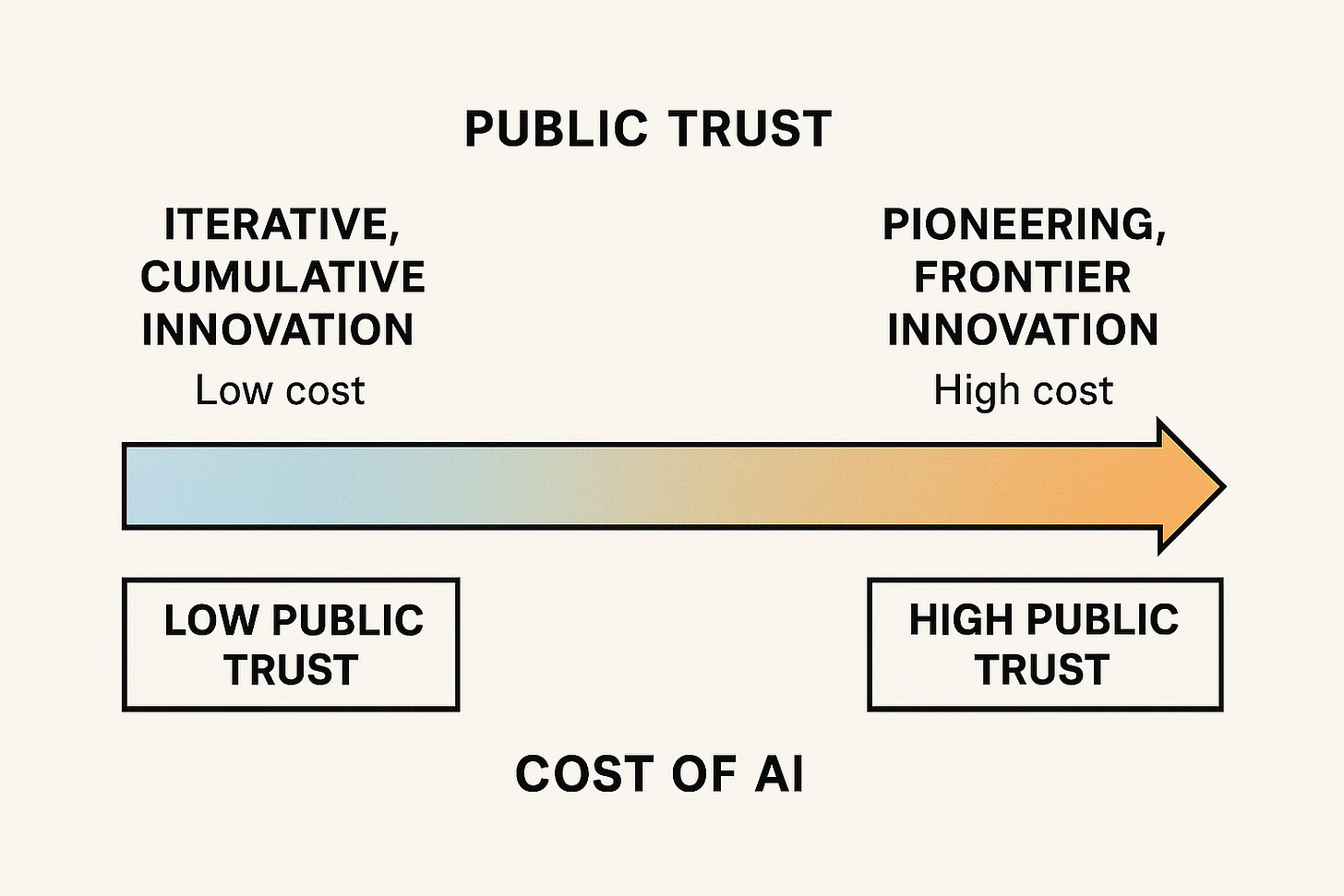

The AI market has entered a critical phase. Enterprise adoption reveals that 95% of projects fail to scale, yet billions continue to flow into GPUs, data centers, and long-term energy contracts. Public trust determines whether these heavy bets are seen as inevitable foundations or reckless overreach. Low-cost wrappers and incremental improvements capture attention, but true transformation depends on sustained confidence in high-cost, long-horizon innovation.

The dialogue convenes the Cognitive Digital Twins of six economists whose theories illuminate the role of trust in economic life. Keynes explains why confidence persists despite failure. Arrow shows how trust lowers transaction costs in colossal deals. Coase clarifies why the frontier clusters in Big Tech. Shiller demonstrates how narratives shape capital allocation. Schumpeter warns that without deep trust, innovation collapses into shallow imitation. Iansiti highlights how digital operating infrastructure requires trusted governance to scale without fragility. Together, they map the architecture of trust underpinning AI’s future.

The dialogue matters because trust is invisible but decisive. It decides whether capital funds stranded assets or enduring infrastructure, whether entrepreneurs dare to pioneer or settle for imitation, whether society sustains the narrative of inevitability or succumbs to skepticism. By applying timeless economic frameworks to today’s AI market, this vision statement reveals trust as the critical resource of the AI age.

Contact mcai@mindcast-ai.com to partner with us on predictive cognitive AI.

John Maynard Keynes (1883–1946)

Father of modern macroeconomics, Keynes explained how animal spirits—confidence, fear, and trust—drive investment beyond rational calculation. In AI, where 95% of projects fail yet billions flow into GPUs and data centers, his lens explains why confidence is the lifeblood of continued capital allocation.

Keynes on AI:

“In your market, 95% of projects fail, yet billions pour into GPUs and power contracts. This cannot be explained by reason alone. These are animal spirits—the fragile confidence that the remaining 5% will justify it all. If those spirits collapse, you’ll have data centers half-built and entrepreneurs unwilling to stake careers. Policy and leadership must therefore stabilize confidence—not by promising certainty, but by keeping alive the conviction that risk-taking is socially supported and failure survivable. Governments that treat every failure as scandal risk destroying the very conditions of progress. Better to frame failure as experiment, and to ensure public institutions cushion its fallout.”

Why Keynes Matters Now: In a market where enthusiasm collides with a 95% failure rate, policymakers and investors must understand that confidence is not irrational exuberance but structural oxygen. Without it, costly AI buildouts stall.

Maxim: Confidence is the only bridge from sunk cost to future gain.

Kenneth Arrow (1921–2017)

Nobel laureate and pioneer of information economics, Arrow famously argued that “virtually every commercial transaction has within itself an element of trust.” AI is the epitome of this: colossal data-center deals, chip contracts, and regulatory bargains hinge on confidence in counterparties, institutions, and norms.

Arrow on AI:

“Your compute market depends on contracts of staggering scale—billions in energy, chips, and cooling. No set of lawyers can write clauses for every contingency. What allows these projects to proceed is trust: in regulators to approve, in utilities to deliver, in firms not to default. When blackouts threaten, or when export controls rattle supply chains, we test that trust. A society that invests in credible, transparent institutions lowers the friction of doing business. Absent that trust, every negotiation becomes a battlefield, and the cost of capital rises until only a few can pay the toll.”

Why Arrow Matters Now: Arrow explains why AI’s infrastructure arms race is not purely technical or financial—it is institutional. Trust in governance and reliability lowers costs that would otherwise cripple large-scale projects.

Maxim: Without trust, data centers become stranded assets.

Ronald Coase (1910–2013)

Originator of transaction cost economics, Coase showed why firms exist: to minimize friction. In AI, only massive firms (Microsoft, Google, Amazon) can internalize the trust and coordination required for billion-dollar infrastructure builds. His framework reveals why the frontier clusters in Big Tech—and the risks of monopolization.

Coase on AI:

“Firms arise to minimize friction. That is why the AI frontier belongs to Microsoft, Google, and Amazon. Only they can internalize the trust needed to coordinate multi-billion-dollar builds. Start-ups cannot. But beware: when the cost of trust is borne only by giants, markets become concentrated by default. That is the hidden danger of your present path. A society that wants both innovation and scale must design institutions—shared compute platforms, trusted public-private partnerships—that distribute the burden of trust. Otherwise, you exchange one inefficiency (transaction costs) for another (monopoly stagnation).”

Why Coase Matters Now: His insight explains why AI’s infrastructure is clustering in Big Tech, and why new institutional designs are essential if the industry wants more than a monopolized frontier.

Maxim: Scale without trust breeds monopoly; scale with trust invites participation.

Robert Shiller (1946– )

Nobel laureate and author of Narrative Economics, Shiller demonstrated how stories drive markets as much as fundamentals. In AI, the tale of “inevitable transformation” competes with the reality of a 95% failure rate. Whichever story dominates determines whether investors keep funding data centers or retreat to low-cost wrappers.

Shiller on AI:

“The public hears two stories: that AI is the inevitable future, and that 95% of projects fail. Which story dominates decides the flow of capital. If the narrative of inevitability persists, data centers rise, even when profits lag. If the narrative of failure takes hold, the market contracts to gimmicks and cost-cutting. Narratives are not background noise—they are the core variable. Consider the housing bubble: belief that prices would always rise drove construction far beyond fundamentals. In AI, belief that models will dominate all industries fuels the data-center arms race. Investors must tend their narratives like gardeners tend vines: pruning hype, watering confidence, ensuring growth.”

Why Shiller Matters Now: AI’s viability rests not on quarterly earnings but on whether the inevitability story holds. If narratives collapse, capital dries up regardless of fundamentals.

Maxim: The market believes stories, not statistics; craft them wisely.

Joseph Schumpeter (1883–1950)

Theorist of creative destruction, Schumpeter argued that capitalism advances through pioneering innovation that destroys old structures. In AI, this means more than wrappers—it means new architectures, chips, and training paradigms. His framework explains why trust must go beyond tolerance for iteration, extending to support for high-risk pioneers.

Schumpeter on AI:

“Most of what I see is imitation: wrappers on models, thin software layers on APIs. This requires little trust and produces little transformation. True creative destruction—new architectures, chips, training paradigms—demands public trust on a different scale. Society must accept disruption; investors must risk long horizons; entrepreneurs must leap knowing failure is not exile. A market enthralled by convenience will stagnate. A market that trusts its pioneers will tear down obsolete structures and build anew. In AI, your greatest risk is not that 95% fail, but that the 5% with true vision are never funded because trust does not extend far enough.”

Why Schumpeter Matters Now: His framework shows the danger of shallow trust: it sustains imitation but starves the pioneers. Without deeper trust, AI devolves into cosmetic progress.

Maxim: Creative destruction dies where trust is too timid to fund pioneers.

Marco Iansiti (1961– )

Professor at Harvard Business School, co-author of Competing in the Age of AI

Iansiti studies how digital technologies reshape business models, industries, and value creation. He emphasizes that AI platforms differ from industrial-era firms because they scale with near-zero marginal cost and create systemic interdependence across entire ecosystems. His lens is vital for AI today: it explains why trust in platform governance is as important as trust in technical performance.

Iansiti on AI:

“Your industry is not building tools alone — it is building digital operating infrastructure. When a bank or a hospital adopts an AI platform, it entrusts not just data but its very operations to the system. That requires trust in the platform’s governance, its reliability, its alignment with public values. A single failure reverberates through the ecosystem, not just one firm. The paradox is that AI’s efficiency depends on interconnection, but interconnection magnifies the consequences of broken trust. Unless platforms demonstrate transparency and accountability, the scale they seek will collapse under the weight of suspicion.”

Why Iansiti Matters Now: His insights explain why governance and transparency are no longer optional add-ons for AI firms—they are preconditions for durable adoption and scale.

Maxim: In an AI economy, scale without trusted governance is fragility disguised as progress.

II. Reflections

Keynes reminds us that investment in AI depends on sustaining confidence even amid massive failure rates. Arrow warns that without institutional trust, the towering contracts for chips and power become prohibitively costly. Coase explains why Big Tech dominates the frontier, yet also why unchecked concentration corrodes innovation. Shiller shows how narratives of inevitability or collapse direct the flow of capital more than spreadsheets. Schumpeter pushes us to ask whether trust extends far enough to support true breakthroughs rather than surface-level imitation. Iansiti highlights that AI is not merely a set of tools, but a new operating infrastructure — where governance failures ripple system-wide.

III. Synthesis

Across these voices, a common thread emerges: trust is the infrastructure beneath the infrastructure. It is the hidden variable that allows billions to flow into data centers, entrepreneurs to risk careers, and society to tolerate disruption. When trust is deep and well‑anchored, capital supports both the incremental and the pioneering. When trust frays, the AI market retreats into safe wrappers and leaves the frontier to monopolists. The economists’ collective counsel is clear: nurture confidence, reinforce institutions, and sustain credible narratives — because only then can AI’s costly, uncertain, transformative potential endure.

Final Maxim: In the AI age, trust is the power plant behind the data center — unseen, but generating all the energy that fuels progress.

IV. AI Economics: Toward a New Discipline

The dialogue among these thinkers reveals the contours of a distinct field: AI Economics. Unlike classical industrial cycles, AI combines high-cost infrastructure with low-cost applications, fragile public trust with expansive narratives, and platform dynamics that amplify both opportunity and risk. AI Economics studies how trust, governance, and narrative interact with capital allocation in this sector — explaining why billions are sunk into data centers despite high failure rates, and why entrepreneurs still enter knowing most will fail.

AI Economics reframes the discipline to include:

Trust as Capital: measurable as confidence sustaining high-cost investment.

Narratives as Signals: shaping expectations more strongly than fundamentals.

Governance as Infrastructure: ensuring platforms scale without fragility.

Creative Destruction as Filter: distinguishing imitation from true pioneering.

By recognizing these forces, AI Economics provides a framework for navigating the dual dynamic of trust and cost — clarifying when society sustains innovation and when it defaults to monopoly or stagnation.
