MCAI Market Vision: Nvidia's Moat vs. AI Datacenter Infrastructure-Customized Competitors

How Infrastructure Bottlenecks Could Reshape the Future of AI Compute

This study builds directly on three recent MCAI Market Vision foresight simulations. Together, these companion studies frame the context for Nvidia's current dominance and the infrastructure-driven risks to its future.

The Bottleneck Hierarchy in U.S. AI Data Centers (Aug 20, 2025) mapped how energy, networking, and cooling function as systemic, meso, and micro bottlenecks in AI infrastructure. It concluded that competitive advantage depends not on GPU counts but on securing firm power, mastering network topologies, and engineering cooling systems that earn community acceptance.

VRFB's Role in AI Energy Infrastructure: Perpetual Energy for Perpetual Intelligence (Aug 21, 2025) showed how Vanadium Redox Flow Batteries transform renewable and nuclear power into long-duration, perpetual energy flows. The study positioned VRFBs as the permanence layer for hyperscale AI clusters, enabling uninterrupted scaling.

AI Datacenter Edge Computing, Ship the Workload Not the Power (Sep 1, 2025) examined how AI datacenters evolve toward edge computing as energy, trust, economics, and orchestration converge. It showed that edge is not a marginal experiment but a structural reconfiguration, shifting workloads to where power and community acceptance align.

I. The Infrastructure-First Thesis

Nvidia is printing cash like no other chipmaker in history. Its GPUs are embedded across hyperscalers, sovereign projects, and private labs, forming the backbone of training and inference worldwide. But Nvidia's biggest risk isn't AMD or Intel: it's the grid.

AI's growth has shifted the bottleneck from algorithms to physics: power, cooling, and bandwidth. Mega-campuses like OpenAI's "Stargate" are being built to draw as much power as mid-sized cities, and hyperscalers are racing to secure power purchase agreements years in advance. Cooling requirements are pushing facilities toward direct-liquid and immersion systems, subsea siting, and experimental zero-water designs, while inter-campus networking is hitting bandwidth ceilings even at 400–800 Gb/s links.
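To make the bandwidth ceiling concrete, the sketch below computes the idealized time to move a payload across one inter-campus link at the 400 and 800 Gb/s rates cited above. The 10 TB payload is a hypothetical figure chosen for illustration (for example, a large model checkpoint), and the function assumes the link sustains its full nominal rate with no protocol overhead, so real transfers would take longer.

```python
# Idealized transfer-time arithmetic for a single inter-campus link.
# Assumption: the link sustains its full nominal rate with zero protocol
# overhead, so these figures are lower bounds on real transfer times.

def transfer_seconds(payload_bytes: float, link_gbps: float) -> float:
    """Return the idealized seconds needed to move payload_bytes over a link."""
    bits = payload_bytes * 8              # bytes -> bits
    bits_per_second = link_gbps * 1e9     # Gb/s -> b/s (decimal giga)
    return bits / bits_per_second

# Hypothetical 10 TB payload (decimal terabytes), used only for illustration.
PAYLOAD_BYTES = 10e12

for rate in (400, 800):
    secs = transfer_seconds(PAYLOAD_BYTES, rate)
    print(f"{rate} Gb/s: {secs:.0f} s ({secs / 60:.1f} min)")
```

Because transfer time scales inversely with link rate, doubling the payload erases the gain from doubling the link speed, which is why bandwidth behaves as a recurring ceiling rather than a one-time upgrade.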

These constraints create a fundamental strategic opening. When grids congest, when regulators resist water-hungry cooling, and when network fabric saturates, innovation stalls regardless of how many accelerators Nvidia ships. The contest is no longer just about compute — it's about the architecture of constraint itself.

Infrastructure is not simply a background condition; it is now the decisive battlefield. Competitors who codesign chips for low power draw, optimized thermals, and distributed bandwidth may unlock growth where general-purpose GPUs falter. In foresight terms, infrastructure is no longer just a constraint; it is a competitive wedge.

Contact mcai@mindcast-ai.com to partner with us on innovation and market foresight simulations.

II. Nvidia's Current Moat: Formidable but Vulnerable

Nvidia's strength rests on three reinforcing layers. First, CUDA lock-in: a developer ecosystem cultivated over more than 15 years that enforces loyalty by making migration painful. Second, vertical integration: the Mellanox acquisition gave Nvidia networking, NVLink gave it interconnect dominance, and Spectrum-XGS now positions it as a full-stack datacenter provider. Third, scarcity as a weapon: Nvidia monetizes shortage and commands pricing power in a GPU-constrained market.

These layers reinforce one another, making the moat appear impenetrable within today's paradigm. Yet history shows moats erode from the periphery under external stress, not from the center. Infrastructure pressure, not direct rivalry, may expose Nvidia's weakest flank.

The critical insight: Nvidia's moat — CUDA, Mellanox, NVLink, Spectrum-XGS, and scarcity premiums — is formidable within today's compute-first paradigm. But AI's bottleneck has shifted to physics, where those moats provide little protection. The question becomes: will infrastructure bend to GPUs, or will compute bend to infrastructure?

III. Competitive Pathways: Three Routes to Disruption

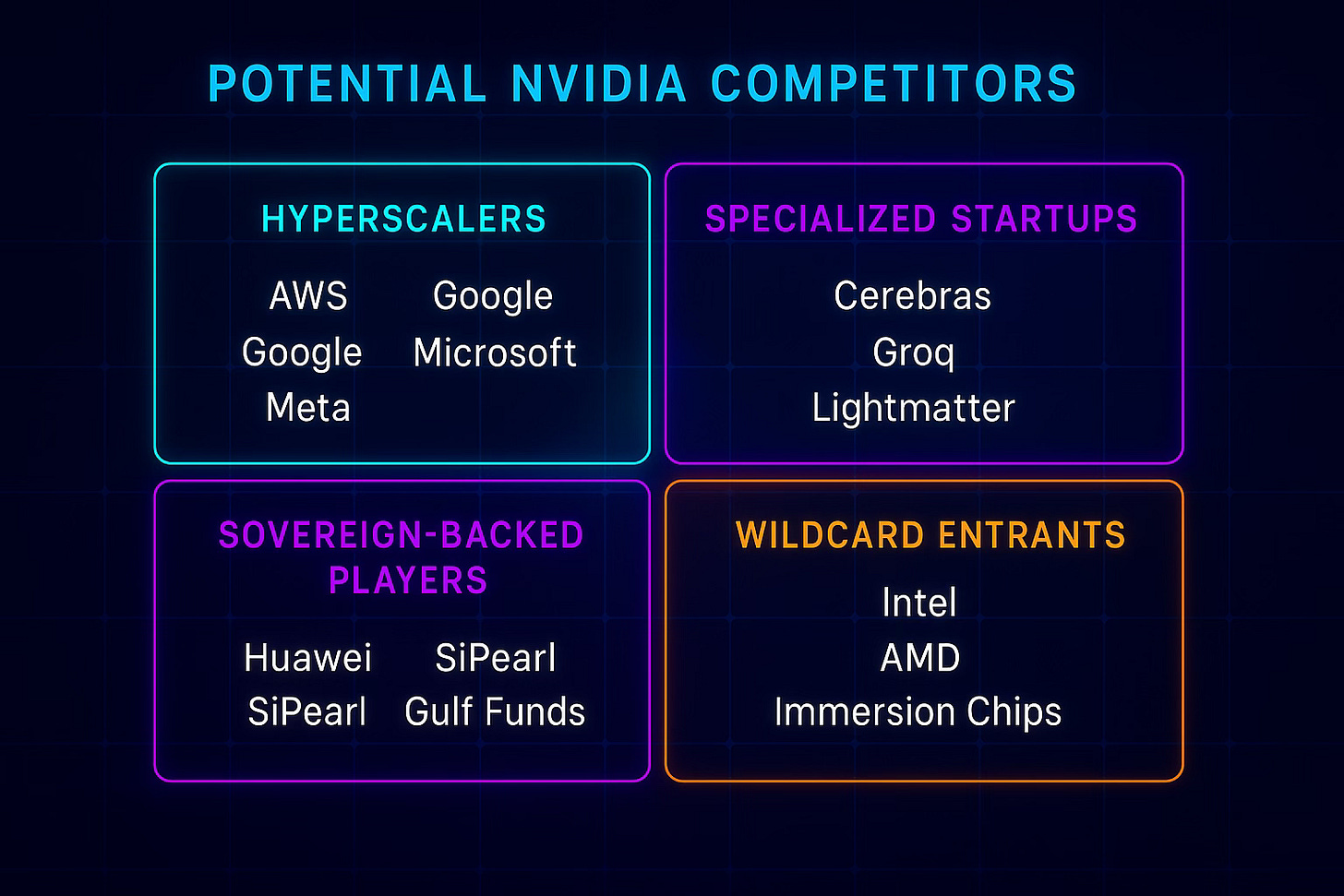

Disruption could emerge through three main routes, each leveraging infrastructure constraints as a competitive wedge.

Hyperscaler alliances represent the most immediate threat. AWS (Trainium, Inferentia), Google (TPUs), Microsoft (Maia, developed under the codename Athena), and Meta (MTIA) can deploy proprietary silicon tuned to their own infrastructure while bypassing CUDA through captive ecosystems. Because these companies control both the chips and the facilities those chips run in, they can co-optimize the two in ways a general-purpose GPU cannot match.

Specialized startups like Cerebras (wafer-scale), Groq (deterministic chips), and Lightmatter (photonic compute) exploit niches where GPUs overconsume power or overheat. Their designs target specific infrastructure pain points that Nvidia's general-purpose approach struggles to address efficiently.

National champions present the geopolitical vector: China's Huawei Ascend, Biren, and Alibaba's Hanguang chips; the EU's SiPearl; and Middle Eastern sovereign-backed ventures could drive infrastructure-customized silicon as a matter of technological independence. Even incumbents like Intel (Gaudi) and AMD (MI300) are well-positioned to tailor chips to liquid cooling or subsea architectures.

The unifying theme: the next frontier isn't faster GPUs — it's compute matched to energy and cooling limits.

IV. Foresight Timeline: The Path to Bifurcation (2025–2030)

The trajectory of Nvidia's dominance is best measured not in quarters but in inflection points. Each year between 2025 and 2030 will reveal whether infrastructure bends to Nvidia's GPUs or compute bends to new infrastructure realities.

2025–2026: The Scarcity Peak

Nvidia sustains record margins, driven by scarcity and CUDA lock-in. Competitors announce radical designs but lack production scale. Grid strain delays flagship campuses, underscoring infrastructure as the binding ceiling. This period represents peak Nvidia dominance, but also peak infrastructure stress.

2027: First Cracks Appear

The first credible hyperscaler-customized silicon arrives, tuned for liquid cooling and energy efficiency. Early adoption occurs in proprietary datacenters, not the open market. Infrastructure debates shift capital allocation toward energy-efficient compute, creating the first real alternative value proposition to Nvidia's GPUs.

2028–2029: Infrastructure-Compute Codesign Emerges

Long-duration storage such as VRFBs pairs with customized chips, creating a new hardware–infrastructure codesign loop. Networking alternatives scale for inter-campus bandwidth, challenging Spectrum-XGS. Competitors carve out 15–20% market share in energy-constrained campuses, proving the viability of infrastructure-first design.

2030: Market Bifurcation

The market splits into two distinct segments. Nvidia retains dominance in flexible, general-purpose compute, but competitors rule in infrastructure-customized environments. AI's innovation trajectory is now co-determined by energy and storage, not just silicon. The age of infrastructure-agnostic compute ends.

This timeline underscores that disruption will unfold progressively, visible first in contracts and prototypes, then in market share and deployment scale. The shift from scarcity-driven dominance to infrastructure-aligned competition represents a fundamental change in how AI compute markets operate.

V. Early Warning Signals: Detecting Disruption Before It's Obvious

The cracks in Nvidia's moat will first appear in infrastructure signals, not market share. The telltale signs will be buried in grid contracts, storage deployments, and thermal management pivots. By the time benchmark scores shift, the foundations of competition will already have moved.

Energy Domain Signals

Watch for 20–30-year grid contracts tied to fusion pilots, enhanced geothermal, or VRFB deployments. These reveal a transition from short-term stopgaps to permanence. Long-duration contracts signal confidence in infrastructure-first compute strategies and the capital backing to make them reality.

Cooling Domain Signals

Track hyperscalers acquiring immersion-cooling startups or announcing subsea datacenter pilots. Custom silicon aligned to those cooling regimes will follow within 18–24 months. The cooling infrastructure leads; the chip design follows.

Networking Domain Signals

Monitor adoption of optical circuit switching and non-Nvidia interconnects, signaling intent to scale beyond Spectrum-XGS. Networking alternatives that bypass Nvidia's stack represent fundamental architectural shifts that will eventually require different compute approaches.

Geopolitical Capital Flows

Sovereign wealth funds financing VRFB-powered campuses with indigenous accelerators, Chinese provinces subsidizing Biren or Ascend chips tied to local nuclear PPAs, or EU subsidies for SiPearl tied to exascale networking deployments would mark structural divergences from CUDA's gravity.

Why Signals Matter

Signals compress the time horizon of disruption. Once infrastructure realigns, market share shifts become inevitable. The foresight imperative is to detect these moves before they appear in earnings calls, because by then the inflection will already be locked in.

VI. Investment Implications and Strategic Recommendations

For Nvidia Investors

The infrastructure thesis doesn't doom Nvidia, but it does constrain its future growth vectors. Nvidia will likely maintain dominance in general-purpose compute, but its total addressable market may bifurcate. The company's networking moves (the Mellanox acquisition and the Spectrum-X platform built on it) show awareness of infrastructure dependencies, but may not be sufficient if the market splits along energy-efficiency lines.

For Infrastructure Investors

The greatest opportunity lies at the intersection of energy, cooling, and compute. Companies that can deliver 20–30-year energy contracts paired with advanced cooling solutions will likely capture disproportionate value as hyperscalers seek infrastructure permanence. VRFBs, subsea cooling, and distributed energy systems become strategic assets.

For Technology Investors

Focus on companies developing compute specifically for infrastructure constraints rather than general-purpose performance. The winners will be those who optimize for energy per operation rather than raw operations per second. Photonic computing, wafer-scale integration, and deterministic processing architectures deserve particular attention.

For Sovereigns and Governments

Infrastructure-customized compute represents a path to technological independence that doesn't require matching Nvidia's 15-year CUDA head start. By controlling energy, cooling, and networking infrastructure, nation-states can create competitive advantages that are difficult for external players to replicate.
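The technology-investor point about efficiency over raw speed can be made concrete with a small calculation. The sketch below assumes a campus whose binding limit is grid power rather than chip supply, and compares two hypothetical accelerators: one with higher peak throughput per chip, one with better operations per watt. The chip names, throughput and power figures, and the 100 MW envelope are all illustrative assumptions, not vendor specifications, and the model ignores cooling and networking overhead.

```python
# Sketch: when a campus is capped by grid power rather than chip supply,
# deliverable throughput depends on efficiency (ops per watt), not peak speed.
# All accelerator figures below are hypothetical, chosen only for illustration.
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    tera_ops_per_s: float  # hypothetical peak throughput per chip (TOPS)
    watts: float           # hypothetical power draw per chip

    @property
    def tops_per_watt(self) -> float:
        return self.tera_ops_per_s / self.watts


def campus_throughput(chip: Accelerator, power_budget_w: float) -> float:
    """Total TOPS a power-capped campus sustains, assuming unlimited chip supply."""
    chips = int(power_budget_w // chip.watts)  # whole chips that fit the budget
    return chips * chip.tera_ops_per_s


FAST = Accelerator("fast-general", tera_ops_per_s=2000.0, watts=1000.0)
LEAN = Accelerator("lean-custom", tera_ops_per_s=1200.0, watts=400.0)
BUDGET_W = 100e6  # hypothetical 100 MW power envelope

for chip in (FAST, LEAN):
    print(f"{chip.name}: {chip.tops_per_watt:.1f} TOPS/W, "
          f"{campus_throughput(chip, BUDGET_W):,.0f} TOPS at 100 MW")
```

Under these assumed numbers the faster chip wins a per-chip benchmark but delivers a third less total throughput once the power envelope binds (200 million versus 300 million TOPS), which is the mechanism behind the infrastructure-first thesis.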

VII. Conclusion: The Architecture of Constraint

Nvidia remains dominant but operates within a paradigm that is rapidly changing. Its moat is formidable when compute exists independently of infrastructure constraints. But as AI scales beyond current grid capacity, beyond current cooling systems, and beyond current networking limits, that paradigm breaks down.

The future of AI compute will be determined not by who builds the fastest chips, but by who best aligns compute with the architecture of constraint itself. Infrastructure is becoming the competitive wedge that could reshape the entire semiconductor landscape.

If infrastructure bends to GPUs, Nvidia rules another decade. But if compute bends to infrastructure, competitors will seize decisive ground through codesign approaches that Nvidia's general-purpose strategy cannot match.

The ultimate question is not whether Nvidia's moat is strong—it is. The question is whether that moat remains relevant when the battlefield itself transforms.

Appendix: MindCast AI Cognitive Foresight Methodology

MindCast AI uses Cognitive Digital Twins (CDTs) to simulate the behavior of companies, technologies, and markets over time. Each CDT mirrors how an institution or technology evolves under pressure, making it possible to test future scenarios before they unfold. By running these simulations, MindCast AI translates complexity into foresight, showing where strategic cracks or breakthroughs will appear.

Key Forecasts

Market Dynamics: Nvidia's moat is strong today, but competitors could capture 15–20% share by 2030 if infrastructure-optimized chips align with energy and cooling bottlenecks.

Causality: Infrastructure limits (power, cooling, networking) are not background noise; they are the primary forces that could weaken Nvidia's dominance.

Developer Ecosystem: CUDA lock-in is sticky, but not absolute. If hyperscalers back alternative ecosystems tied to their own chips, developer inertia can be overcome through captive deployment at scale.

Timeline: By 2027, the first real cracks may appear. By 2029, infrastructure-customized competitors could shift momentum. By 2030, the market may bifurcate into Nvidia-led general compute and competitor-led infrastructure-specific compute.

Geopolitical Dimensions

China and Sovereigns: State-backed efforts to build national AI hardware stacks could bypass Nvidia within controlled ecosystems, particularly when paired with domestic energy infrastructure.

U.S. and Europe: Policy incentives for clean energy and long-duration storage may accelerate adoption of VRFB-powered campuses paired with custom silicon, creating new competitive dynamics.

Summary: The intersection of geopolitics and infrastructure creates multiple vectors for challenging Nvidia's dominance outside traditional market competition.

Supporting Data Points

Energy Scale: OpenAI's Stargate project consumes energy equivalent to ~600k homes

Cooling Energy: ~40% of total datacenter energy consumption

Network Bottlenecks: Inter-campus links hitting saturation at 400–800 Gb/s

Contract Timelines: Power purchase agreements now extending 20–30 years

Market Concentration: Nvidia currently captures >80% of AI accelerator revenue
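One way to read the cooling figure is through power usage effectiveness (PUE), the standard ratio of total facility energy to IT-equipment energy. As a rough bound, assuming cooling takes a fraction c ≈ 0.40 of total energy and all other overheads (power conversion, lighting) take a non-negative fraction o, the IT share can be at most 60%:

$$
\mathrm{PUE} = \frac{E_{\text{total}}}{E_{\text{IT}}}, \qquad
E_{\text{IT}} = E_{\text{total}}\,(1 - c - o)
\quad\Longrightarrow\quad
\mathrm{PUE} = \frac{1}{1 - c - o} \;\ge\; \frac{1}{1 - 0.40} \approx 1.67
\qquad (c = 0.40,\; o \ge 0).
$$

The bound holds only where the ~40% share applies; the point it illustrates is that every reduction in c raises the fraction of each grid megawatt that reaches compute.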