MCAI Innovation Vision: Decision Modeling and Foresight Simulation

Two Phases of a Complete Predictive Cognitive AI Stack

Executive Summary

Institutions that deploy AI systems face a structural problem the field has not solved: predictive accuracy does not produce decision reliability. A system can model the world correctly and still generate decisions that fail under competitive pressure, regulatory scrutiny, or shifting market conditions. The gap between what AI predicts and what institutions can defend, audit, and act on is where risk concentrates — and where current AI architecture offers no formal resolution.

This paper introduces a two-framework architecture that closes that gap. Decision Engineering Science (DES) — formalized by Dr. Aleksandra Pinar in The Cognitive Infrastructure Stack™: A Layered Architecture for Deploying Cognition as a Service (CaaS) Systems — establishes the decision layer as an independent engineering discipline, providing formal tools to evaluate whether a decision is structurally sound before deployment. MindCast extends that evaluation into the dynamic environment where decisions actually operate: modeling how well-formed decisions behave under constraint, strategic interaction, and feedback across time.

Together, the two frameworks address what institutions actually need from AI: not just accurate models, but decisions that hold under the conditions they will encounter — adversarial competition, regulatory constraint, feedback-driven market dynamics, and the accumulated weight of prior institutional choices.

The value proposition is direct. DES gives institutions a formal basis for evaluating decision quality before deployment — replacing outcome-based assessment with structural evaluation that is auditable, traceable, and defensible to regulators, boards, and counterparties. MindCast determines whether that quality holds once a decision enters a real system — identifying which structural forces will govern outcomes before those outcomes occur. Used together, they shift institutional AI from a prediction tool to a decision infrastructure.

DES defines decision integrity. MindCast tests decision survivability. The combination produces foresight that institutions can act on.

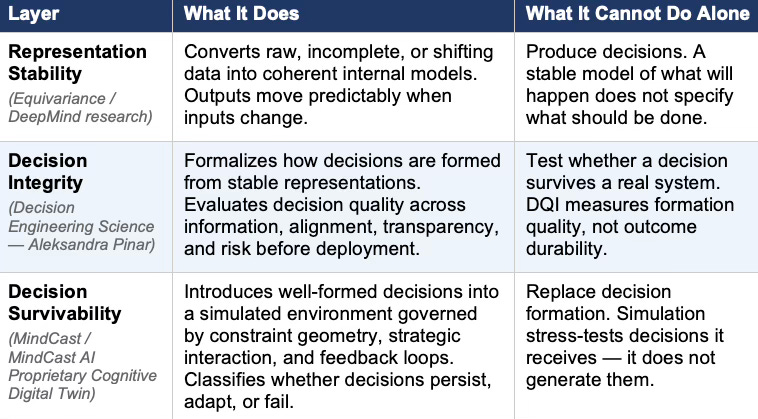

Table 1. The Three-Layer Predictive Cognitive AI Stack

Each layer is necessary. None is sufficient alone. The stack only completes when all three are present.

I. Predictive Cognitive AI Solved Stability, Not Decisions

Predictive cognitive AI advanced through a sustained emphasis on structural stability. Systems built around equivariance (a mathematical property ensuring that outputs transform predictably when inputs are transformed) preserve coherence under transformation, enabling reliable modeling across incomplete and evolving inputs. Representations remain coherent when data is reduced, permuted, or degraded.
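As a generic illustration of the property described above, the sketch below contrasts equivariance with invariance under permutation of the input. It is not the filter equivariance formulation from the DeepMind research cited in this paper; the helper names (`permute`, `double_each`, `total`, `is_equivariant`, `is_invariant`) are assumptions introduced only for this example.

```python
from typing import Callable, List, Sequence


def permute(xs: Sequence[float], perm: Sequence[int]) -> List[float]:
    """Reorder xs so that output position i holds xs[perm[i]]."""
    return [xs[i] for i in perm]


def double_each(xs: Sequence[float]) -> List[float]:
    """Elementwise map: permuting the input permutes the output the same way."""
    return [2.0 * x for x in xs]


def total(xs: Sequence[float]) -> float:
    """Sum: permuting the input leaves the output unchanged."""
    return sum(xs)


def is_equivariant(f: Callable, xs: Sequence[float], perm: Sequence[int]) -> bool:
    """Check f(g(x)) == g(f(x)) for the permutation g given by perm."""
    return f(permute(xs, perm)) == permute(f(xs), perm)


def is_invariant(f: Callable, xs: Sequence[float], perm: Sequence[int]) -> bool:
    """Check f(g(x)) == f(x) for the permutation g given by perm."""
    return f(permute(xs, perm)) == f(xs)


xs = [1.0, 2.0, 4.0]
perm = [2, 0, 1]

print(is_equivariant(double_each, xs, perm))  # True
print(is_invariant(total, xs, perm))          # True
print(is_invariant(double_each, xs, perm))    # False
```

An equivariant map changes its output in step with the input transformation, while an invariant map ignores the transformation altogether. Neither property says anything about whether acting on the output is a good decision.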

MindCast’s engagement with Google DeepMind’s filter equivariance research — documented in Google, Equivariance, and Predictive Cognitive AI — established that the field has optimized for representation consistency rather than interpretive correctness. Equivariance ensures structural fidelity. Structural fidelity does not ensure that outputs lead to aligned or effective action.

Predictive systems answer what will happen. Determining what should be done requires a different architecture entirely.

II. Decision Engineering Science Formalizes the Decision Layer

DES introduces a structural separation the field has been implicitly collapsing: prediction and decision are distinct systems requiring distinct engineering. Predictive systems estimate likely outcomes. Decision systems determine actions under constraint, risk, and competing objectives. Conflating the two produces systems that model the world accurately and act on those models poorly.

Pinar’s The Cognitive Infrastructure Stack™: A Layered Architecture for Deploying Cognition as a Service (CaaS) Systems formalizes three core elements of the decision layer. Decision Architecture maps signals to structured actions — defining how information flows, how alternatives are generated, and how final choices are selected. Formally, a decision system takes the form D = (Ω, A, F, T, U, C, Φ, Γ), where each component captures a distinct aspect of decision structure: the state space, action space, feasible actions, transition dynamics, objectives, constraints, feedback operators, and governance layers.
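One way to make the eight-component tuple concrete is a small data structure. The sketch below is an illustration under stated assumptions, not Pinar's formal definition: the field types, the `choose` and `step` methods, and the toy states and actions are all hypothetical, and the source does not specify how the feedback operator or the governance layer is applied.

```python
from dataclasses import dataclass, replace
from typing import Callable, FrozenSet, Sequence

State = str
Action = str


@dataclass(frozen=True)
class DecisionSystem:
    """Hypothetical container mirroring D = (Omega, A, F, T, U, C, Phi, Gamma)."""
    states: FrozenSet[State]                                # Omega: state space
    actions: FrozenSet[Action]                              # A: action space
    feasible: Callable[[State], FrozenSet[Action]]          # F: feasible actions
    transition: Callable[[State, Action], State]            # T: transition dynamics
    objective: Callable[[State, Action], float]             # U: objectives
    constraints: Sequence[Callable[[State, Action], bool]]  # C: constraints
    feedback: Callable[[State], State]                      # Phi: feedback operator (assumed form)
    governance: Sequence[Callable[[State, Action], bool]]   # Gamma: governance checks (assumed form)

    def choose(self, state: State) -> Action:
        """Pick the highest-objective action that passes every constraint and governance check."""
        allowed = [
            a for a in sorted(self.feasible(state))
            if all(c(state, a) for c in self.constraints)
            and all(g(state, a) for g in self.governance)
        ]
        if not allowed:
            raise ValueError(f"no admissible action in state {state!r}")
        return max(allowed, key=lambda a: self.objective(state, a))

    def step(self, state: State, action: Action) -> State:
        """Apply the transition, then the feedback operator."""
        return self.feedback(self.transition(state, action))


# Toy values, invented for illustration only.
VALUE = {"expand": 3.0, "hold": 1.0, "retreat": 0.5}

system = DecisionSystem(
    states=frozenset({"calm", "stressed"}),
    actions=frozenset(VALUE),
    feasible=lambda s: frozenset({"hold", "expand"}) if s == "calm"
    else frozenset({"hold", "retreat"}),
    transition=lambda s, a: "stressed" if a == "expand" else "calm",
    objective=lambda s, a: VALUE[a],
    constraints=(),
    feedback=lambda s: s,
    governance=(),
)

# Same system with one governance rule that vetoes expansion.
governed = replace(system, governance=(lambda s, a: a != "expand",))

print(system.choose("calm"))    # expand
print(governed.choose("calm"))  # hold
```

Keeping governance separate from constraints lets the same architecture be evaluated with and without an oversight rule, which is the kind of traceability the decision layer is meant to provide.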

The Decision Consistency Principle holds that decisions must adapt predictably under transformation rather than remain static. A system maintains consistency when changes in input signals produce decisions that remain structurally coherent, aligned with objectives, and contextually appropriate. A decision system should not behave erratically when conditions shift — it should adapt in ways that remain traceable and auditable.

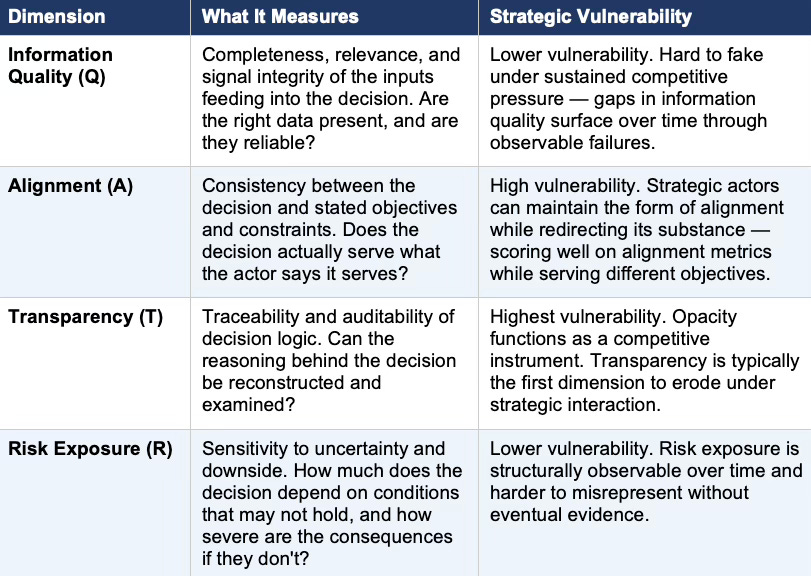

The Decision Quality Index (DQI) operationalizes decision evaluation across four dimensions: information quality (Q), alignment (A), transparency (T), and risk exposure (R). In the simplified formulation DQI = (Q × A × T) / R, the symbols A and T denote alignment and transparency, not the action space and transition dynamics of the tuple D. The formulation captures a key insight: a decision can score well on information quality and risk management while still failing on alignment and transparency, and those failures often go undetected until the decision enters a real system.
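The simplified formulation can be evaluated directly. The sketch below assumes each quality dimension is scored in (0, 1] and that risk exposure is strictly positive; the source does not state the scales, so these ranges and the sample scores are assumptions chosen for illustration.

```python
def dqi(quality: float, alignment: float, transparency: float, risk: float) -> float:
    """Simplified Decision Quality Index: (Q * A * T) / R."""
    if risk <= 0:
        raise ValueError("risk exposure must be positive")
    return (quality * alignment * transparency) / risk


# Two hypothetical decisions with identical information quality and risk exposure.
well_aligned = dqi(quality=0.9, alignment=0.9, transparency=0.9, risk=0.3)
misaligned = dqi(quality=0.9, alignment=0.2, transparency=0.9, risk=0.3)

print(well_aligned > misaligned)  # True
```

Because the numerator is a product, a weak alignment or transparency score pulls the whole index down even when information quality is high and risk exposure is low.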

Table 2. DQI Dimensions and Strategic Vulnerability

DQI evaluates decisions at the point of formation. MindCast simulation stress-tests which dimensions hold under competitive pressure.

The Decision Consistency Principle is the strongest contribution in Pinar’s paper. The distinction between invariance and consistency is critical for any system operating under real-world conditions. Systems enforcing invariance become brittle: they hold rigidly to learned patterns when conditions shift. Systems allowing unconstrained variation become unstable. Consistency defines the narrow band where adaptation remains interpretable and auditable, which is precisely the band that simulation must stress-test.

DES correctly identifies that stable representations do not guarantee coherent decisions. The failure modes Pinar names — over-sensitivity to signal variation, misalignment despite coherent model outputs, inconsistent trade-off handling, and risk amplification — are failure modes the field has been observing without formal vocabulary to name them.

III. Two Frameworks, One Problem Space

DES and MindCast operate on adjacent, non-overlapping problems. Recognizing the boundary between them is the precondition for deploying either framework correctly.

DES evaluates whether a decision is well-formed. MindCast evaluates whether that decision survives contact with a system.

DES provides the structural layer: decision formation and architecture, explicit evaluation of decision quality through DQI, and consistency under transformation. MindCast provides the dynamic layer: constraint geometry and path dependence, strategic interaction and multi-agent competition, cybernetic feedback and loop closure, and equilibrium formation and outcome classification.

Cybernetic feedback refers to the process by which a system monitors its own outputs and uses those signals to adjust future behavior — the same principle that governs a thermostat, a financial market, or an institution defending its market position. Loop closure is the condition where feedback actually reaches and alters the decision process, rather than dissipating without effect.

MindCast Constraint Geometry and Institutional Field Dynamics demonstrates that outcomes are often governed by structural constraints independent of decision intent. Field geometry — the shape of competitive and institutional forces surrounding an actor — selects outcomes. High-quality decisions operating inside a field with steep curvature and absent pathways do not produce the outcomes they were designed to produce — not because the decisions were wrong, but because the governing force was structural, not decisional.

DES clarifies the integrity of decisions. MindCast tests their durability.

IV. Decision Quality Breaks Under System Dynamics

Decision quality does not determine outcomes. System dynamics do. The distinction matters because the field has been conflating the two — treating high-quality decisions as predictors of stable outcomes and misattributing outcome failure to decision failure when the cause is environmental.

MindCast’s Consumer AI Device Series provides a clear empirical case. Apple maintains a stable institutional profile with high coherence at the representation layer. Its Cognitive Digital Twin (CDT) profile, a formal behavioral model of how an institution interprets signals and makes decisions, encodes a consistent, control-first Installed Cognitive Grammar (ICG). An ICG comprises the deep structural patterns that shape which options an institution perceives as available before any formal decision process begins. Apple’s decision outputs remain internally consistent and locally aligned with its stated objectives.

Apple’s strategy nevertheless produces drift-stable behavior: decisions that preserve local coherence while failing to capture system-level advantage in the intelligence transition.

Mapped against the DQI framework in Pinar’s The Cognitive Infrastructure Stack™: A Layered Architecture for Deploying Cognition as a Service (CaaS) Systems, the failure does not concentrate at the information quality or risk exposure dimensions — Apple holds superior hardware intelligence and manages financial risk conservatively. The failure concentrates in the alignment dimension at the system level. Apple’s decisions align internally with its installed cognitive grammar — but fail externally against the feedback requirements of the competitive environment it now operates within. Alignment holds inside the institution. Alignment breaks at the boundary between institution and system.

A system can produce high-quality decisions by every structural measure and still converge to suboptimal equilibrium. DES names the failure mode correctly. MindCast identifies which system-level force drove the convergence.

V. The MindCast Foresight Simulation as the Resolution Layer

MindCast resolves decisions through the MindCast AI Proprietary Cognitive Digital Twin (MAP CDT) foresight simulation flow. MAP CDT converts decision quality into outcome classification under competing causal domains. MAP CDT is not a descriptive pipeline; it functions as a resolution engine that introduces decisions into a simulated environment governed by competing structural forces and evaluates whether those decisions persist, adapt, or fail.

MAP CDT executes eight steps: signal intake and filtering, hypothesis formation, causal inference, Causal Signal Integrity (CSI) validation, Vision Function routing, dominance resolution, recursive foresight simulation, and equilibrium classification. Decisions function as inputs into a broader dynamic environment — not final outputs. Simulation evaluates decisions across time as systems adapt, react, and reconfigure under feedback. Outcomes are not evaluated once — they are evaluated as systems evolve.

The Runtime Causation Arbitration Directive formalizes how causal domains compete within a simulation and how dominance is determined before foresight proceeds. DES evaluates decisions at formation. MindCast evaluates decisions across time.

VI. Vision Functions: What Actually Governs Behavior

MindCast determines which structural force governs outcomes through Vision Functions — routing mechanisms that evaluate competing explanations for system behavior and direct simulation toward the dominant causal domain. Vision Functions do not describe behavior after the fact. They route causal analysis before simulation begins, enforcing analytical discipline before foresight proceeds.

Four core domains govern routing decisions:

Constraint Geometry covers structural limits, attractors, switching costs, and path dependence. An attractor is a state or outcome that a system tends to converge toward regardless of starting conditions: think of a market structure that reasserts itself after disruption, or an institution that returns to familiar competitive behavior under stress. When constraint geometry dominates, intent and decision quality become secondary, and the system moves toward structurally survivable equilibria regardless of the decision quality that produced the initial trajectory.

Strategic Interaction governs multi-agent competition, coordination failures, and adversarial exploitation. Decisions do not occur in isolation — they are observed, countered, and exploited by other agents. Outcome stability depends on interaction dynamics, not decision intent.

Cybernetic Feedback addresses loop closure, feedback latency, and reinforcement architecture. The MindCast Predictive Cybernetics Suite establishes that systems closing feedback loops faster capture control of outcomes — an operationalization of Ashby’s Law of Requisite Variety, which holds that a control system must match the complexity of the system it governs, or lose governance. A thermostat that responds too slowly cannot regulate temperature; an institution that processes competitive signals too slowly cannot adapt to the environment already changing around it.

Cognitive Grammar covers the installed decision patterns that govern how institutions interpret signals and structure choices under stress. ICG operates below the level of explicit decision architecture, shaping which decisions feel possible before formal evaluation begins.

The simulation selects the dominant domain and routes accordingly. Decisions are subjected to dominance conditions that determine whether they persist, adapt, or fail.
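The feedback-latency point made under Cybernetic Feedback can be illustrated with the thermostat example. The sketch below is a toy model, not part of the MindCast Predictive Cybernetics Suite, and it illustrates latency only, not Ashby's Law itself. The controller, the unit temperature steps, and the `max_deviation` helper are assumptions made for this example.

```python
def max_deviation(delay: int, steps: int = 200, setpoint: int = 20) -> int:
    """Largest distance from the setpoint reached by an on/off thermostat
    that only sees the temperature from `delay` steps ago."""
    history = [setpoint]  # temperature trace, starting at the setpoint
    worst = 0
    for _ in range(steps):
        # The controller acts on a stale reading when delay > 0.
        observed = history[max(0, len(history) - 1 - delay)]
        heater_on = observed < setpoint
        # The room warms by one unit with the heater on, cools by one otherwise.
        temperature = history[-1] + (1 if heater_on else -1)
        history.append(temperature)
        worst = max(worst, abs(temperature - setpoint))
    return worst


fast_loop = max_deviation(delay=0)
slow_loop = max_deviation(delay=3)

print(slow_loop > fast_loop)  # True
```

The control rule is identical in both runs. Only the age of the signal differs, and the slower loop swings further from its target before correcting.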

Simulation operates recursively rather than statically. Decisions are not evaluated at a single point in time. Systems adapt, competitors respond, constraints tighten or relax, and feedback loops alter future states. Each iteration produces a new system configuration that becomes the input for the next. Foresight therefore emerges from observing how decisions evolve across successive states rather than from evaluating them in isolation.

VII. Equilibrium and Termination Conditions

MindCast resolves simulations through dual equilibrium conditions grounded in behavioral economics and game theory.

Behavioral equilibrium occurs when agents settle into stable strategies under interaction — corresponding to Nash dynamics in which no agent can improve outcomes through unilateral deviation. In a Nash equilibrium, every actor is doing the best they can given what every other actor is doing; no one has an incentive to change course unilaterally.
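The unilateral-deviation test can be written out for a small two-player game. The sketch below enumerates pure-strategy Nash equilibria of a payoff table; the function name and the prisoner's-dilemma payoffs are standard textbook choices used for illustration and are not drawn from MindCast's simulations.

```python
from typing import List, Tuple

# payoffs[r][c] = (row player's payoff, column player's payoff)
Payoffs = List[List[Tuple[float, float]]]


def pure_nash_equilibria(payoffs: Payoffs) -> List[Tuple[int, int]]:
    """Return every (row, col) cell where neither player gains by deviating alone."""
    n_rows, n_cols = len(payoffs), len(payoffs[0])
    equilibria = []
    for r in range(n_rows):
        for c in range(n_cols):
            row_pay, col_pay = payoffs[r][c]
            # Row player holds the column fixed and considers every other row.
            row_is_best = all(payoffs[alt][c][0] <= row_pay for alt in range(n_rows))
            # Column player holds the row fixed and considers every other column.
            col_is_best = all(payoffs[r][alt][1] <= col_pay for alt in range(n_cols))
            if row_is_best and col_is_best:
                equilibria.append((r, c))
    return equilibria


# Prisoner's dilemma: strategy 0 = cooperate, strategy 1 = defect.
prisoners_dilemma: Payoffs = [
    [(3, 3), (0, 5)],
    [(5, 0), (1, 1)],
]

print(pure_nash_equilibria(prisoners_dilemma))  # [(1, 1)]
```

Mutual defection is the only stable cell even though mutual cooperation pays both players more, a compact example of a system converging to a suboptimal equilibrium.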

Cognitive sufficiency occurs when additional information no longer changes outcome classification — corresponding to the Stigler condition in which the system has reached explanatory saturation. The simulation has learned enough about the causal structure that adding more data would not change the predicted outcome.

Both conditions must be satisfied for simulation to terminate. Open feedback loops or shifting dominance conditions prevent convergence. Equilibrium emerges only when strategic interaction has stabilized and the system’s causal structure has resolved.

Outcomes appearing stable before both conditions are met represent local equilibria — temporary convergence points that later-stage dynamics will displace. Distinguishing local equilibrium from terminal equilibrium is one of the primary analytical contributions MAP CDT produces over static decision analysis, and one that DQI-based evaluation alone cannot generate.
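The dual termination rule can be sketched as a loop that stops only when both conditions hold at once. This is a simplified illustration, not MAP CDT: the two predicates, the `step` function, and the toy state (a count of agents still changing strategy and a count of signals that could still change the classification) are hypothetical stand-ins.

```python
from typing import Callable, Tuple, TypeVar

S = TypeVar("S")


def simulate_to_terminal_equilibrium(
    state: S,
    step: Callable[[S], S],
    behaviorally_stable: Callable[[S], bool],
    cognitively_sufficient: Callable[[S], bool],
    max_iters: int = 100,
) -> Tuple[S, int, int]:
    """Iterate until both conditions hold.

    Returns (final state, iterations taken, number of iterations on which
    exactly one condition held and the loop therefore kept running).
    """
    partial = 0
    for iteration in range(max_iters + 1):
        stable = behaviorally_stable(state)
        sufficient = cognitively_sufficient(state)
        if stable and sufficient:
            return state, iteration, partial
        if stable or sufficient:
            partial += 1  # one condition alone does not terminate the run
        state = step(state)
    raise RuntimeError("no terminal equilibrium within max_iters")


# Toy state: (agents still changing strategy, signals that could change the classification).
start = (3, 2)
result = simulate_to_terminal_equilibrium(
    start,
    step=lambda s: (max(0, s[0] - 1), max(0, s[1] - 2)),
    behaviorally_stable=lambda s: s[0] == 0,
    cognitively_sufficient=lambda s: s[1] == 0,
)

print(result)  # ((0, 0), 3, 2)
```

In the toy run the classification stops changing after one step, but the loop continues for two more iterations until the agents also settle, which is why the third returned value is 2.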

VIII. Falsifiable Prediction

DES introduces DQI as a measure of decision quality across four dimensions: information quality, alignment, transparency, and risk exposure. MindCast’s simulation architecture generates a testable prediction about which dimensions prove most vulnerable under real-world system dynamics.

Prediction: alignment and transparency will prove more sensitive to strategic distortion than information quality or risk exposure in multi-agent competitive systems.

Alignment and transparency are structurally exposed to adversarial exploitation in ways that information quality and risk exposure are not. Strategic actors can maintain the form of alignment while redirecting its substance — producing decisions that score well on alignment metrics while serving different objectives. Transparency is the first casualty of strategic interaction because opacity functions as a competitive instrument. Information quality and risk exposure are harder to fake under sustained competitive pressure; alignment and transparency are not.

Measurement window: 12–24 months across high-competition domains — AI platforms, regulated financial markets, and institutional governance contexts.

Confirms: systems exhibiting high information quality and managed risk exposure but low transparency show systematic outcome divergence from stated objectives; alignment scores degrade faster than risk scores under competitive pressure.

Falsifies: information quality or risk exposure consistently predicts outcome stability independent of alignment and transparency scores across the same domains and window.

IX. Conclusion

Predictive cognitive AI improved how systems model the world. DES improves how systems structure decisions. MindCast reveals how those decisions behave once deployed.

Each framework addresses a distinct phase of the same problem. Decision systems fail when decision quality is mistaken for outcome reliability — when the integrity of a decision is assumed to guarantee the stability of the outcome it produces. Foresight requires separating the two.

The field needs all three layers. Representation stability produces coherent signals. Decision quality produces well-formed actions. Simulation determines what those actions become.

DES defines what a system decides to do. MindCast determines what that decision becomes.
