MCAI Economics Vision: Cybernetic Overview of The MindCast Consumer AI Device Series

How Nine Cognitive Digital Twin Foresight Simulations Produced a Single Structural Finding: The Governing Variable Was Never Exogenous

MindCast Consumer AI Device publications: Installment I — The Intelligence Gap: Apple’s AI Strategy and the Commoditization Bet | Installment II — The Apple AI Challenger Framework: Google, Samsung, and the Intelligence Layer | Installment III — The Consumer AI Device Intelligence Layer: Value Capture Under Interface Drift | Installment IV — How Cybernetic Feedback Latency, Loop Architecture, and Ashby’s Viability Condition Resolve Consumer AI Device Competition

MindCast Predictive Cybernetics series: MindCast Predictive Cybernetics Suite | Predictive Institutional Cybernetics | The Cybernetic Foundations of Predictive Institutional Intelligence

Executive Summary

Every technology market eventually stops competing at the product layer and starts competing at the control layer. Consumer AI has reached that inflection point. The product layer — devices, models, operating systems — still generates revenue. Control of the routing layer generates governance: the durable authority to determine which intelligence executes when a user initiates intent, which behavioral defaults compound into lock-in, and which actor captures the margin that flows from both.

Layers determine position. Loops determine power. An institution that wins a layer without closing a feedback loop captures temporary leverage. An institution that closes the loop — routing user intent through its intelligence, returning output through its interface, embedding the interaction as a behavioral default that reinforces the next invocation — captures the system. The consumer AI device market has multiple layer-winners and one loop-closer. Only the loop-closer wins under both resolutions of the governing variable.

Running MindCast AI Proprietary Cognitive Digital Twin (MAP CDT) foresight simulations across Apple, Google, Samsung, OpenAI, Microsoft, Anthropic, Meta, Google DeepMind, and Mistral produced the system-level finding the individual installments approached but never foregrounded as a structural law: the governing variable is endogenous. Whether AI commoditizes or concentrates is not an external market condition waiting to resolve. Institutional behavioral grammars are the resolution mechanism. The aggregate of institutional choices determines the outcome, and the institutions making those choices do so inside a feedback system whose dynamics they collectively govern without collectively recognizing it.

Norbert Wiener’s cybernetics predicted this structure before consumer AI existed. Ashby’s Law of Requisite Variety explains why only one institution in the current system is structurally positioned to maintain governance across both outcome scenarios. The Consumer AI Device Series is the proof run. The umbrella names the architecture.

FOR INVESTORS

Standard AI platform analysis tracks device share, model capability benchmarks, and services revenue. The series establishes that invocation frequency — which routing layer fires when user intent enters the system — is the metric that predicts long-term value capture. Invocation share is not tracked in any analyst model. The institutions accumulating it silently are Microsoft, OpenAI, and Google. Apple’s quarterly Services margin is the lagging indicator of a routing contest Apple has not yet entered.
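As a rough illustration of what tracking invocation share could involve, the sketch below counts which routing layer handled each user intent in a log and reports each layer's fraction of the total. The event labels and the `invocation_share` function are hypothetical. The series does not publish a measurement method or any invocation data.

```python
from collections import Counter


def invocation_share(events):
    """Return each routing layer's fraction of all logged invocations.

    `events` is an iterable of routing-layer labels, one per user intent.
    The labels themselves are illustrative assumptions, not series data.
    """
    counts = Counter(events)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {layer: n / total for layer, n in counts.items()}


# Hypothetical log: which layer fired for four separate user intents.
events = ["os_default", "enterprise_assistant", "os_default", "consumer_app"]
shares = invocation_share(events)
```

The point of the sketch is the unit of measurement: the count is taken per invocation rather than per device sold or per subscription, which is why it can diverge from device share.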

FOR CORPORATE STRATEGY

Every platform incumbent reading the Consumer AI Device Series as a competitive analysis of Apple, Google, and Samsung should read the umbrella as a diagnostic for their own position. The routing layer question — does your institution close the loop between user intent and intelligence execution, or does another institution close it for you? — applies across every enterprise software, consumer platform, and regulated industry context where AI capability is now embedded. The behavioral grammar analysis identifies not just what competitors will do, but at what speed and under what threshold conditions. Both dimensions matter for strategic response windows.

Framework Note

The Consumer AI Device Series executes MAP CDT, MindCast AI’s Proprietary Cognitive Digital Twin Foresight Simulation architecture. MAP CDT routes raw signals through a nine-step process whose stages include signal intake and filtering, hypothesis formation, causal inference, causal signal integrity (CSI) validation, Vision Function routing, dominance resolution, and recursive foresight simulation. Each institutional subject is modeled as a Cognitive Digital Twin (CDT): a dynamic behavioral replica encoding the institution’s objective function, constraint stack, adaptation velocity, and feedback sensitivity.

Five Vision Functions appear across the series: Coase Vision (coordination authority and transaction cost architecture), Becker Vision (incentive mapping across heterogeneous agents), CSGT Vision (Chicago Strategic Game Theory — strategic delay and commitment), Field-Geometry Reasoning (FGR — switching costs, lock-in depth, structural attractor states), and ICG Analysis (Installed Cognitive Grammar — adaptation velocity, constraint rigidity, identity preservation).

The cybernetic lineage grounding the series runs from Wiener’s signal filtering theory — formalized as Causal Signal Integrity in MindCast’s architecture — through Ashby’s Law of Requisite Variety (operationalized in the Vision Function architecture’s requisite complexity matching), Beer’s Viable System Model (structural parallel to the five-layer causation stack), and Bateson’s recursive learning theory (replicated in the recursive foresight simulation structure). The full intellectual lineage appears in the MindCast Predictive Cybernetics Suite publications.

I. Loops vs. Layers: The Structural Distinction the Series Produced

The series began as a three-installment analysis of competing institutions. Running all nine CDTs through a shared governing variable produced something the individual installments were not designed to generate: a complete closed-loop model of the consumer AI device market as a cybernetic system — and with it, a structural distinction that resolves the competitive question every installment circled but never named directly.

Layers are positions. Loops are control architectures. Layers describe what an institution owns: the interface (Apple, Samsung), the operating system (Google), the intelligence capability (OpenAI, Anthropic, Google DeepMind), the enterprise infrastructure (Microsoft). Loops describe whether an institution closes the feedback circuit between user intent, intelligence execution, and behavioral default — such that each interaction reinforces the routing decision that produced it. A layer-winner without a closed loop captures margin until a better offer arrives. A loop-closer captures the behavioral default that determines whether a better offer ever gets invoked.

Wiener’s foundational insight — that adaptive systems regulate themselves through feedback, continuously comparing actual output against intended state and correcting deviation — describes precisely the mechanism that determines competitive outcome here. User intent enters the system. Intelligence processes it. Output returns through the interface. The actor that controls the correction loop sets the behavioral default for the next iteration. Each iteration strengthens the default. The loop becomes self-reinforcing.
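The self-reinforcing loop described above can be sketched as a deterministic toy model: each round, intent is routed to whichever provider is the current default, and that routing decision is reinforced. The provider names, starting weights, and `gain` parameter are illustrative assumptions, not outputs of the MAP CDT simulations.

```python
def reinforce_defaults(weights, rounds, gain=1.0):
    """Simulate default reinforcement and return the share history.

    Each round, the provider with the highest weight is treated as the
    behavioral default, receives the invocation, and gains `gain` weight.
    All values are hypothetical; this is a toy model, not a forecast.
    """
    state = dict(weights)
    history = []
    for _ in range(rounds):
        default = max(state, key=state.get)   # current behavioral default
        state[default] += gain                # the invocation reinforces it
        total = sum(state.values())
        history.append({name: w / total for name, w in state.items()})
    return history


# Two hypothetical providers separated by a small initial advantage.
start = {"provider_a": 1.1, "provider_b": 1.0}
history = reinforce_defaults(start, rounds=10)
```

In this toy setup a small initial lead compounds: the leading provider's share rises every round while the other's shrinks, which is the lock-in dynamic the paragraph describes.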

The four-layer architecture MAP CDT assembled across three installments:

The Interface Layer — Apple and Samsung control the primary surface through which users encounter AI. Apple owns the premium interface with high lock-in depth. Samsung owns global volume distribution with lower lock-in depth. Neither institution closes the intelligence loop.

The Operating System Layer — Google controls the OS running on 83 percent of global smartphones. OS-layer control is routing control: the OS determines which models fire at the default invocation point, which developer APIs are privileged, and which intelligence providers receive distribution without requiring explicit user choice.

The Intelligence Layer — OpenAI, Microsoft, Anthropic, Google DeepMind, Meta, and Mistral compete to supply the capability the interface and OS layers invoke. Value capture at the intelligence layer depends on achieving default status — being the model the system routes to without explicit user selection.

The Behavioral Default Layer — the deepest layer, and the one the series identifies as the primary competition surface. Behavioral defaults form when AI usage becomes embedded in repeated workflow patterns — enterprise software habits that carry into personal usage, consumer interaction patterns that carry into enterprise purchasing decisions, and OS-level default invocations that route user intent without surfacing the routing decision to the user at all.

Winning a layer without closing the loop produces temporary leverage. Closing the loop converts layer-position into governance. Two institutions in the current system close the loop. Seven hold layers without loops — and are therefore structurally dependent on the institutions that do.

II. The Endogenous Governing Variable: Institutional Choices Determine the Outcome

The governing variable is not exogenous. Institutional behavioral grammars are the resolution mechanism.

The causal chain runs as follows. Apple’s passive continuation — licensing external intelligence rather than internalizing capability — reduces competitive pressure on frontier providers to commoditize. Apple is the largest platform incumbent in the premium device market. Apple’s revealed preference for managed dependency signals to the market that platform incumbents will absorb dependency costs rather than contest them. That signal reduces the structural reward for open-weight releases and increases the structural reward for concentration. Apple is not responding to the governing variable. Apple is, through its behavioral grammar, casting the largest single vote in the room for the concentration scenario.

Samsung’s internalization investment runs the opposite feedback loop. Galaxy AI, Samsung Research, and Exynos chip development increase commoditization pressure by expanding the market for independent intelligence capability at the device layer. Every dollar Samsung invests in reducing its Google dependency at the intelligence layer is a vote for the commoditization scenario — not because Samsung’s capability approaches frontier quality, but because Samsung’s distribution scale means its procurement decisions shape the market’s perception of whether open-weight alternatives are viable at consumer volume.

Meta’s Llama strategy is the strongest single vote for commoditization in the system. Open-weight releases do not appear in any intelligence layer provider’s discounted cash flow model as a primary scenario. Meta’s behavioral grammar — distribution scale, advertising monetization dominance, zero marginal cost on model releases — produces the commoditization accelerant that most directly threatens the concentration architectures OpenAI and Microsoft are building. Meta functions as the system’s primary negative feedback mechanism against intelligence layer concentration.

The system implication is precise and falsifiable: if Apple internalizes capability — through acquisition, a credible frontier partnership, or proprietary model development — the signal flips. A credible Apple internalization commitment accelerates commoditization pressure by reducing frontier providers’ pricing power and expanding the market for open-weight alternatives. The governing variable is not waiting for market forces to resolve it. The governing variable resolves when enough platform incumbents change their behavioral grammar.


III. Ashby’s Law and the Requisite Variety Problem

Ross Ashby established the Law of Requisite Variety in 1956: a control system must possess at least as much variety — as many possible states — as the system it seeks to regulate. A controller with insufficient variety cannot respond to all the states the controlled system can enter. The controller loses governance.

Applied to the consumer AI device market: the institution that seeks to govern user AI experience must match the variety of the competitive system it operates within. The consumer AI device system currently produces variety across three dimensions simultaneously — interface evolution (driven by Apple and Samsung), intelligence capability evolution (driven by OpenAI, Anthropic, Google DeepMind, and Meta), and distribution evolution (driven by Google’s Android ecosystem and Microsoft’s enterprise stack). An institution operating at only one of those dimensions cannot govern the full system.

Apple Fails the Requisite Variety Test

Apple’s control-first ICG constrains adaptation to the interface dimension. Apple matches the variety of the interface environment (high) but not the intelligence environment (low) or the distribution environment (medium). The gap between Apple’s variety and the system’s variety is the mathematical expression of the capability gap established in Installment I. Apple’s behavioral grammar predicts that the gap will persist until dependency pressure exceeds identity tolerance — at which point Apple will attempt late-stage internalization under constrained optionality.

Samsung Partially Satisfies the Requisite Variety Test

Samsung matches distribution variety (high) and is building toward intelligence variety (medium, rising). Samsung’s failure is OS-layer dependency: the variety Samsung can exercise at the intelligence layer is structurally capped by Google’s control of the Android routing layer. Samsung’s internalization investment is, in Ashby’s terms, an attempt to increase Samsung’s variety to match the system’s — but the attempt runs through infrastructure a competitor controls.

Google Satisfies the Requisite Variety Test Under Both Scenario Resolutions

Google’s dual-loop position — OS-layer distribution control plus frontier Gemini capability — means Google matches the variety of the distribution environment (via Android’s 83 percent global OS share) and the variety of the intelligence environment (via Gemini’s frontier capability position) simultaneously. Under commoditization, Google’s distribution variety governs. Under concentration, Google’s capability variety governs. No other institution in the series holds this position.

Google’s dual-loop advantage is not a strategic observation about competitive positioning. Google is the only institution whose controller variety matches the system’s full variety under both scenario resolutions. All other institutions require the governing variable to resolve in a specific direction to maintain their governance position.
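The requisite variety comparison in this section can be expressed as a small check. The sketch below encodes the variety levels quoted above for the distribution and intelligence dimensions and tests which institutions meet the governing dimension under each scenario. Three things are assumptions: treating "matches the system's variety" as a HIGH rating, encoding Google as HIGH on both dimensions, and applying the rule "distribution governs under commoditization, capability governs under concentration" to every institution. Samsung's OS-layer dependency cap is not modeled.

```python
from enum import IntEnum


class Variety(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Levels as stated in Section III; Google's HIGH/HIGH is an encoding assumption.
PROFILES = {
    "Apple": {"distribution": Variety.MEDIUM, "intelligence": Variety.LOW},
    "Samsung": {"distribution": Variety.HIGH, "intelligence": Variety.MEDIUM},
    "Google": {"distribution": Variety.HIGH, "intelligence": Variety.HIGH},
}

# Which dimension governs under each resolution of the governing variable.
GOVERNING_DIMENSION = {
    "commoditization": "distribution",
    "concentration": "intelligence",
}


def governs(profile, scenario, required=Variety.HIGH):
    """True if the profile meets the required variety on the governing dimension."""
    return profile[GOVERNING_DIMENSION[scenario]] >= required


def robust_institutions(profiles):
    """Institutions that retain governance under every scenario."""
    return [
        name
        for name, profile in profiles.items()
        if all(governs(profile, scenario) for scenario in GOVERNING_DIMENSION)
    ]


robust = robust_institutions(PROFILES)
```

Under these assumptions only Google passes both scenarios, Samsung passes only under commoditization, and Apple passes under neither, which mirrors the section's conclusion.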

FALSIFIABLE PREDICTION — DUAL-LOOP PERSISTENCE

Google’s dual-loop advantage persists until one of two conditions is met: (a) antitrust enforcement severs the Android-to-Gemini routing connection, reducing Google’s effective variety to either distribution or capability but not both, or (b) Meta’s open-weight releases compress intelligence variety to the point where capability no longer differentiates, eliminating the concentration scenario Google wins under. Both conditions are observable. Neither has triggered. The dual-loop advantage is intact.

IV. The Installed Cognitive Grammar Matrix: Nine Institutions, Three Lag Structures

Running all nine ICGs against the same governing variable produces a cross-series finding the individual installments could not generate alone: institutional lag structure determines the sequence of competitive outcome, not just the direction. The institution with the longest lag loses governance first — regardless of which scenario resolves.

The series identified five distinct ICG archetypes:

1. Control-First Grammar — Apple

Observe external innovation → delay entry → integrate into ecosystem → reframe as proprietary experience. Artificial intelligence disrupts this sequence at step three because integration requires dependency on systems Apple did not build and cannot fully control — preventing the reframe at step four from being structurally honest. Apple’s lag structure is the longest in the system. Adaptation velocity is moderate to slow. Constraint rigidity is high. Apple will be the last platform incumbent to internalize, and will do so under the most constrained optionality.

2. Speed-Dominant Grammar — Google, OpenAI

Invest at the frontier first → distribute through ecosystem second → monetize through platform fees and advertising third. Google and OpenAI share this grammar despite operating from opposite positions in the stack. Speed-dominant grammars produce fast adaptation but generate antitrust exposure (Google) and infrastructure dependency exposure (OpenAI depends on Microsoft’s Azure compute architecture for the capability that constitutes its competitive position). The primary failure mode is not slow adaptation — it is governance constraint that limits what fast adaptation can capture.

3. Conglomerate-Distributed Grammar — Samsung, Microsoft

Distributed across business units with competing capital allocation priorities. Samsung’s grammar distributes across semiconductor, display, and consumer electronics divisions. Microsoft’s grammar distributes across enterprise, cloud, gaming, and consumer divisions. Distributed grammars produce slower, more fragmented adaptation than speed-dominant grammars — but also more strategic flexibility. Samsung can pursue internalization paths Apple’s brand constraint would not permit. Microsoft can embed AI into enterprise workflows without requiring consumer interface capture as the monetization mechanism. The primary failure mode is coordination cost: distributed grammars risk internal capital competition that delays commitment at threshold moments when adaptation velocity matters most.

4. Trust-Anchored Grammar — Anthropic

Capability development governed by safety-first constraint architecture. Anthropic’s ICG produces precision positioning — high-trust enterprise relationships with regulated industry clients — that is durable under concentration but time-bounded. Anthropic’s positioning produces value capture only while concentration persists long enough for enterprise trust to compound into routing authority. If Meta’s open-weight strategy accelerates commoditization faster than Anthropic’s enterprise relationships compound, Anthropic’s window closes before trust converts into distribution control.

5. Commoditization-Accelerant Grammar — Meta, Mistral

Distribution scale plus zero-marginal-cost model releases as the governing strategy. Meta does not compete for routing authority in the consumer device system. Meta competes to prevent concentration from producing pricing power that would raise the cost of Meta’s advertising monetization architecture. Mistral operates at smaller scale with sovereign and regional fragmentation as the primary mechanism. Both function as the system’s negative feedback against concentration — not as value capture strategies, but as structural constraints on the concentration loop’s terminal velocity.

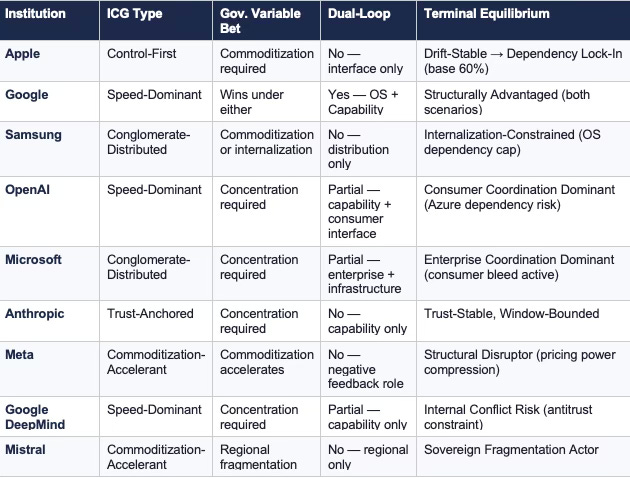

Cross-Series CDT Compression — Nine Institutions

The table produces a cross-series observation absent from any individual installment: only Google holds both a dual-loop position and a governing variable bet that does not require a specific scenario resolution. Every other institution either requires the variable to resolve in its favor or operates as a structural mechanism for pushing the variable in a specific direction. The system has no neutral actor.

V. The Behavioral Default Layer: Where the Competition Actually Resolves

The routing layer thesis names the competition surface. The behavioral default layer is where the competition resolves. The distinction matters because routing authority at the OS layer or interface layer produces temporary leverage — it can be renegotiated, circumvented, or disrupted. Behavioral default authority is structurally stickier: once a default AI system is embedded in repeated workflow patterns, switching costs compound faster than competitive alternatives can accumulate.

Installment III identified two directionally opposite bleed mechanisms through which behavioral defaults form and propagate:

Enterprise-to-Consumer Bleed — Microsoft

GitHub Copilot captures developer behavior at the code layer. Office 365 Copilot captures knowledge worker behavior at the productivity layer. Azure AI captures enterprise infrastructure decisions at the compute layer. Each captures a behavioral default that was previously neutral with respect to the consumer AI interface. Once those defaults are set at the enterprise layer, the consumer device — whether Apple, Samsung, or Android — becomes a secondary execution surface for intelligence already routed through Microsoft’s enterprise stack. The device choice becomes operationally irrelevant to the AI routing decision.

Consumer-to-Enterprise Bleed — OpenAI

ChatGPT’s consumer penetration creates familiarity and behavioral defaults that carry into enterprise purchasing decisions. Enterprises procure AI systems their employees already use personally. The procurement decision trails the behavioral default rather than setting it. OpenAI’s consumer interface position is not primarily a revenue strategy — it is a behavioral default installation strategy that produces enterprise purchasing influence as the downstream output.

Ambient Invocation — Google

Google’s architecture operates differently from both bleed mechanisms. Android’s OS-layer control enables default routing without surfacing the routing decision to the user. Gemini invocations embedded in Android search, Google Assistant interactions, and Chrome browsing behavior accumulate at a layer below explicit user choice. Silent routing dominance at the ambient invocation layer matters more than visible product competition at the interface layer — because ambient invocations produce behavioral defaults without the user ever making a conscious AI selection decision.

Anthropic’s Structural Gap

Anthropic’s safety-first grammar produces neither bleed direction. Enterprise trust relationships are high-value but transactional — Anthropic supplies intelligence capability that enterprises invoke deliberately, rather than embedding into the behavioral default pathways governing enterprise users’ daily workflow. The gap is intentional given Anthropic’s constraint architecture, but it narrows the value capture window. Precision positioning converts to routing authority only if concentration persists long enough for enterprise trust to generate the distribution depth that ambient invocation and enterprise bleed produce structurally.

The behavioral default competition produces a ranking absent from any device-layer analysis: Microsoft holds the strongest structural position to achieve default invocation status in enterprise workflows. OpenAI holds the strongest consumer behavioral default position. Google holds the strongest ambient invocation position. Apple holds the strongest hardware lock-in position in the system — and no behavioral default position at the AI routing layer whatsoever.

Hardware lock-in and routing lock-in are not the same thing. Apple has built the most valuable version of the wrong kind.

VI. System-Level CDT Foresight Predictions

The following predictions operate at the system level — derived from running all nine CDTs against the shared governing variable. Institution-specific predictions with time windows and falsification conditions appear in the individual installments. System-level predictions require the full multi-CDT architecture to generate.

PREDICTION 1: Apple initiates a credible internalization commitment within 24–36 months of Services gross margin compressing below 70 percent.

CONFIRMS

Apple announces a frontier model acquisition, a proprietary foundation model research program, or a structural partnership granting Apple co-development rights rather than licensing access.

FALSIFIES

Services gross margin remains above 70 percent at month 36 with no internalization commitment, indicating the dependency threshold has not yet exceeded Apple’s identity tolerance.

PROBABILITY

55% within 36 months; 80% within 60 months.

PREDICTION 2: Google’s dual-loop advantage generates antitrust enforcement action targeting the Android-to-Gemini routing connection within 18–30 months.

CONFIRMS

The DOJ, the European Commission, or a national competition authority opens a formal investigation into whether Google’s Android OS position, combined with Gemini default deployment, constitutes an illegal tying arrangement.

FALSIFIES

Google proactively separates the Android Gemini default architecture into an opt-in framework that regulators accept, preserving distribution without triggering the tying theory. Google’s ICG does not predict such a change in behavioral grammar.

PROBABILITY

65% formal investigation within 30 months; 35% proactive structural separation.

PREDICTION 3: Microsoft’s enterprise-to-consumer bleed produces measurable consumer AI default displacement from Apple’s interface layer within 24 months.

CONFIRMS

App Store AI revenue growth decelerates below the subscription services growth baseline while Microsoft’s consumer Copilot usage data shows acceleration in non-enterprise contexts.

FALSIFIES

App Store AI revenue continues accelerating at or above 2025 baseline growth rate, indicating consumer behavioral defaults remain set at the device interface rather than migrating to Microsoft’s enterprise-originating bleed pathway.

PROBABILITY

60% measurable displacement within 24 months.

PREDICTION 4: Meta’s open-weight releases produce a pricing compression event at the intelligence layer within 18 months, forcing at least one frontier provider to revise enterprise contract structure.

CONFIRMS

OpenAI, Anthropic, or Google DeepMind announces enterprise pricing revisions — reduced per-token rates, bundled access tiers, or modified API pricing — in direct response to Llama adoption at enterprise scale.

FALSIFIES

Enterprise frontier model pricing holds flat or increases through the 18-month window, indicating concentration has hardened faster than Meta’s commoditization acceleration can compress it.

PROBABILITY

70% pricing compression event within 18 months.

PREDICTION 5: Samsung achieves partial routing sovereignty at the intelligence layer within 36 months, but fails to convert distribution scale into behavioral default depth sufficient to challenge Google or Microsoft.

CONFIRMS

Samsung launches a Galaxy-native AI default framework routing user intent through Samsung Research models for at least one primary use case category without invoking Google’s Gemini architecture.

FALSIFIES

Samsung’s Exynos AI chip roadmap stalls, Samsung Research investment decreases as a share of total R&D, or Samsung expands Gemini integration rather than constraining it.

PROBABILITY

65% partial routing sovereignty within 36 months; 20% full routing sovereignty within 60 months.

PREDICTION 6: The governing variable begins resolving toward concentration within 18 months, confirmed by Meta’s failure to sustain enterprise Llama adoption at scale against frontier model behavioral defaults that have already hardened.

CONFIRMS

Enterprise Llama deployment growth decelerates relative to OpenAI and Microsoft Copilot enterprise adoption; open-weight alternatives fail to displace frontier defaults in productivity workflow contexts where behavioral lock-in has already compounded.

FALSIFIES

Enterprise Llama adoption accelerates to parity with frontier model enterprise contract growth, indicating Meta’s commoditization acceleration is outrunning behavioral default hardening.

PROBABILITY

55% concentration scenario beginning to resolve within 18 months; 45% governing variable remains contested.

VII. The Cybernetic Proof

The Consumer AI Device Series was designed as the applied proof run of the MindCast predictive cybernetics architecture — the same architecture that modeled Seattle’s multi-regime dominance over New England in Super Bowl LX, and the same architecture that models antitrust enforcement trajectories, legislative coalition fractures, and regulatory strategy across the MindCast publication suite.

Three features of the series distinguish it from standard competitive analysis and align it with the cybernetic proof standard:

Falsifiability by Architecture

Every CDT Foresight Simulation in the series generated predictions with observable confirmation signals, observable falsification signals, time windows, and probability weights before the outcomes resolved. The series did not describe competitive dynamics after the fact. The series modeled which behavioral grammars would produce which equilibrium paths, and specified in advance what would prove the model wrong.
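Because each prediction carries a probability weight and a time window, it can be scored once its window closes. The series does not say how its predictions are scored; the sketch below uses the Brier score, a standard measure for probabilistic forecasts, as an assumed choice. The `Prediction` record and `brier_score` function are hypothetical names, and the example takes only the 70 percent probability and 18-month window stated for Prediction 4.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    probability: float   # stated probability that the prediction confirms
    window_months: int   # time window in which the outcome must resolve


def brier_score(prediction, confirmed):
    """Squared error between the stated probability and the 0/1 outcome.

    Lower is better: 0.0 is a perfect forecast, 1.0 is maximally wrong.
    """
    outcome = 1.0 if confirmed else 0.0
    return (prediction.probability - outcome) ** 2


# Prediction 4 as stated in Section VI; the outcome is not yet known.
p4 = Prediction("Intelligence-layer pricing compression", 0.70, 18)

# What the forecast would score under each possible resolution.
score_if_confirmed = brier_score(p4, confirmed=True)    # about 0.09
score_if_falsified = brier_score(p4, confirmed=False)   # about 0.49
```

Scoring both branches in advance shows what is at stake in the probability weight: a 70 percent forecast costs little if confirmed and considerably more if falsified.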

Recursive Foresight

Installment I’s findings modified the competitive environment that Installment II analyzed. Installment II’s findings modified the intelligence layer architecture that Installment III resolved. The series evolved recursively — each simulation updated the state of the model before the next simulation ran — replicating Bateson’s higher-order learning structure in which the system revises its governing rules as new signals enter, rather than producing static forecasts.

Endogenous Variable Recovery

Standard competitive analysis treats market structure variables as background conditions. MAP CDT recovered the governing variable as an output of the institutional behavior analysis rather than an input. The discovery that the commoditization-concentration variable is determined by institutional behavioral grammars — rather than by exogenous market dynamics — is the series’ central methodological contribution. The prediction architecture that follows from it is structurally different from models that take the variable as given: it identifies the specific institutional actions that would shift the variable, the behavioral grammar conditions under which those actions are likely, and the threshold signals that would confirm a variable shift is underway.

The cybernetics architecture established the theoretical framework the Consumer AI Device Series operationalized. Wiener’s signal filtering formalized as Causal Signal Integrity. Ashby’s Requisite Variety operationalized in the dual-loop analysis distinguishing Google from every other institution in the system. Beer’s layered governance hierarchy structurally paralleled in the five-layer causation stack. Bateson’s recursive learning replicated in the foresight simulation’s recursive update architecture. The series did not apply cybernetics as a metaphor. The series executed cybernetics as a predictive methodology.

VIII. Falsification Conditions: What Would Disprove the Routing Layer Thesis

The routing layer thesis — control over the behavioral default routing decision determines durable value capture in the consumer AI device market — fails under three distinct conditions:

Condition 1: Device Manufacturers Retain AI Interface Control Without Owning the Routing Layer

Falsification mechanism: Apple sustains Services gross margin above 70 percent for 36 consecutive months while continuing to depend entirely on external model providers, with no measurable enterprise-to-consumer or consumer-to-enterprise bleed reducing App Store AI revenue. If Apple’s interface dominance sustains margin without closing the intelligence loop, the routing layer thesis is falsified at the device layer.

Condition 2: Users Override Behavioral Defaults at Scale

Falsification mechanism: Consumer research across enterprise and personal AI usage contexts shows that 40 percent or more of users actively select non-default AI systems regularly — indicating that behavioral defaults do not compound into lock-in at the rate the thesis predicts. If behavioral defaults are fragile rather than self-reinforcing, the concentration dynamic fails and the system’s feedback architecture is misspecified.

Condition 3: Open-Weight Commoditization Disrupts the Behavioral Default Formation Loop Before Defaults Harden

Falsification mechanism: Meta’s Llama adoption at enterprise scale accelerates to parity with frontier model enterprise contracts within 18 months, demonstrating that the commoditization accelerant is outrunning behavioral lock-in. If Meta’s grammar wins the governing variable contest before enterprise behavioral defaults compound into irreversibility, the concentration path that Section VI’s predictions are weighted toward fails to materialize.

None of the three falsification conditions has been triggered. Monitoring all three is the correct forward analytical posture.
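The three conditions carry explicit thresholds (70 percent for 36 consecutive months, 40 percent of users, parity within 18 months), so monitoring them can be reduced to simple checks. The sketch below is hedged: the function names, the input series, and the way "parity" and "bleed" are measured are all assumptions introduced for illustration, since the text does not specify a data source or metric definition.

```python
from typing import Sequence


def condition_1_triggered(
    monthly_margin_pct: Sequence[float],
    fully_external_models: bool,
    bleed_detected: bool,
    threshold_pct: float = 70.0,
    required_months: int = 36,
) -> bool:
    """Margin stays above the threshold for the required consecutive months
    while models remain fully external and no bleed is measured."""
    if not fully_external_models or bleed_detected:
        return False
    run = 0
    for margin in monthly_margin_pct:
        # "Above 70 percent" is read strictly; a month at or below resets the run.
        run = run + 1 if margin > threshold_pct else 0
        if run >= required_months:
            return True
    return False


def condition_2_triggered(non_default_share: float, threshold: float = 0.40) -> bool:
    """Share of users regularly selecting a non-default AI system is 40% or more."""
    return non_default_share >= threshold


def condition_3_triggered(
    adoption_pairs: Sequence[tuple[float, float]],
    window_months: int = 18,
) -> bool:
    """adoption_pairs[i] holds (open_weight, frontier) enterprise adoption in
    month i + 1, in any common unit. Parity is assumed to mean open_weight >= frontier."""
    return any(
        open_weight >= frontier
        for open_weight, frontier in adoption_pairs[:window_months]
    )


# Synthetic inputs for illustration only; none of these figures are observed data.
print(condition_1_triggered([71.0] * 36, fully_external_models=True, bleed_detected=False))
print(condition_2_triggered(0.25))
print(condition_3_triggered([(1.0, 2.0)] * 18))
```

Keeping each condition as a separate predicate matches the text's framing of three distinct failure modes: any one of them returning true would count against the routing layer thesis on its own.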

IX. Series Synthesis: The Market Resolves Around Which System Routes Intent

The Consumer AI Device Series established three findings across three installments, which this overview integrates into a single structural claim.

Installment I: Apple’s AI equilibrium is drift-stable. Surface metrics remain favorable. The internal trajectory deteriorates. The behavioral grammar that produced four decades of competitive dominance is the precise constraint that prevents Apple from adapting at the speed the intelligence transition requires.

Installment II: Google’s dual-loop position is the most consequential structural fact in the current consumer AI competitive landscape. No major analyst model correctly prices an institution that wins under both resolutions of the governing variable. Samsung’s internalization path is real but OS-layer capped — a genuine option on routing sovereignty that distribution scale alone cannot exercise.

Installment III: Value capture has migrated to the intelligence layer, but the intelligence layer is not a monolith. Three distinct capture architectures — Microsoft’s enterprise-to-consumer bleed, OpenAI’s consumer-to-enterprise bleed, and Google’s ambient invocation — are each building toward behavioral default control through different mechanisms and different user entry points. Anthropic’s precision positioning is durable under concentration but window-bounded. Meta’s open-weight strategy is the system’s primary commoditization accelerant.

The consumer AI device market is a contest for behavioral default control at the routing layer, governed by institutional behavioral grammars whose aggregate choices determine whether the governing variable resolves toward commoditization or concentration.

Winning the device, the model, or the operating system is necessary but not sufficient. Closing the loop between user intent, intelligence execution, and behavioral default is the only path to durable value capture.

Wiener built the theory. Ashby proved the structural constraint. The Consumer AI Device Series ran the proof on nine institutions in the market where the constraint matters most.

The device you hold routes your intent. The loop you never see governs your default. The institution that closed that loop before you knew the contest had started has already won.