MCAI National Innovation Vision: Access, Not Substance, Pentagon–Anthropic Foresight Simulation Reconciliation

Prediction Ledger Reconciliation, Advocacy Arbitrage Validation, and the OpenAI Paradox

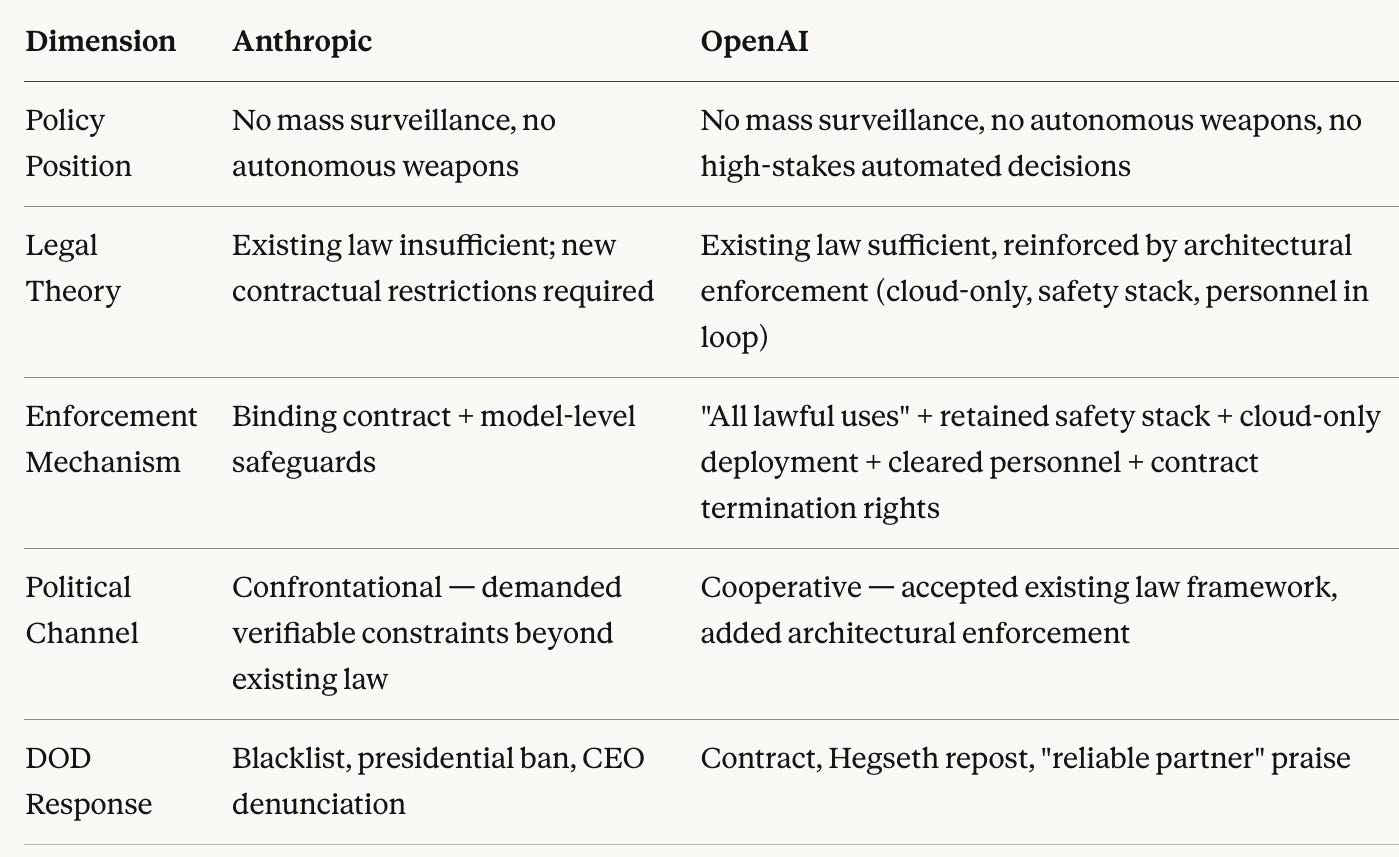

Institutional temporal mismatch, not safety disagreement, governed the Pentagon–Anthropic rupture — and the week-one resolution confirmed the governing equilibrium while falsifying one major prediction. On February 25, 2026, MindCast AI published Pentagon–Anthropic Throughput Failure and the Structural Reclassification of Safety as Ideology, pre-committing five predictions with falsification windows. Three days later, the confrontation resolved in a manner that validated the structural model, exposed two CDT calibration gaps, and produced the Tirole advocacy arbitrage framework’s most precise single-event confirmation. Honest ledger reconciliation requires documenting all three.

Analytical stack. MindCast AI's Foresight Simulations generate predictions through Cognitive Digital Twin (CDT) modeling — constructing behavioral models of institutional actors based on revealed preferences, incentive structures, and observable decision patterns, then simulating forward trajectories under competing equilibrium scenarios. The CDT architecture draws on three primary frameworks: Chicago School game theory for strategic interaction, the Tirole advocacy arbitrage framework for detecting when political access channels override technical assessment, and National Innovation Behavioral Economics (NIBE) for modeling institutional temporal mismatch across governance layers. Simulation Updates reconcile the predictive ledger with observed outcomes, diagnose structural misses, and document parameter revisions to the CDT architecture. For the complete framework index, see MindCast AI Economics Frameworks.

I. Timeline

Feb 24 DOD delivers Friday ultimatum: open Claude for all lawful purposes or lose contract

Feb 25 MindCast AI publishes Foresight Simulation (T0) — five predictions entered ledger

Feb 27 Anthropic holds firm; Trump orders government-wide ban; Hegseth designates Anthropic "supply chain risk to national security" via X post

Feb 27 Hours later: OpenAI signs classified-systems deal with identical safety restrictions; Hegseth reposts Altman's announcement endorsing the deal

Escalation compression: 72 hours from ultimatum to presidential ban, government-wide cessation, supply chain blacklisting, and CEO denunciation.

II. Prediction Ledger

Five predictions entered the MindCast AI ledger at T0 (February 25, 2026). Each committed an observable confirmation signal, an explicit falsification condition, and a time window. The ledger records outcomes against pre-committed terms — not retroactive reinterpretation.

Prediction 1: Anthropic Partial Capitulation (62% probability, 7-day window) — FALSIFIED

The simulation predicted Anthropic would modify its usage policy language to accommodate DOD demands — “surface-level compliance maintaining engineering constraints through implementation rather than policy.” Anthropic maintained its policy language unchanged and accepted contract termination. The falsification condition stated: “Anthropic maintains current policy language unchanged and accepts contract termination.” Amodei’s February 27 statement met that condition precisely.

Diagnostic: The CDT architecture modeled Anthropic through Becker incentive re-optimization, predicting that asymmetric risk across revenue ($200 million contract), reputation, talent cohesion, and incumbency loss would produce rational surface compliance. The model underweighted two factors:

First, Amodei’s revealed preference for principle over pragmatism exceeded the behavioral bounds the CDT calibrated. Autonomous targeting and mass surveillance occupied a category Amodei treated as non-negotiable regardless of commercial consequence — a lexicographic preference, not a continuous trade-off curve.

Second, industry solidarity — 330+ employees at OpenAI and Google DeepMind publishing an open letter, 100+ Google employees petitioning Chief Scientist Jeff Dean, Microsoft and Amazon employees demanding similar restrictions — created reputational incentives against capitulation that the CDT did not model. Anthropic’s holdout became an industry rallying point, inverting the incentive structure the simulation assumed.

Forward correction mechanism:

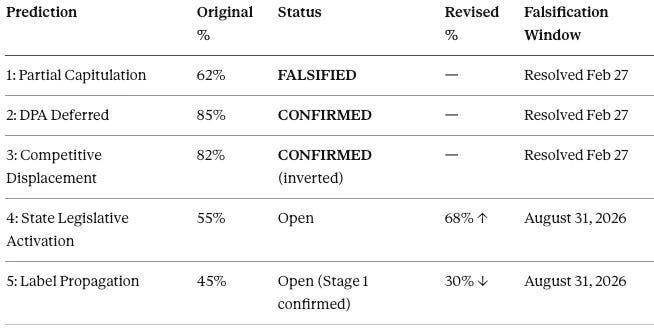

Two new CDT parameters formalize the miss:

Preference Rigidity Coefficient (PRC): Scale 0–1. Measures how resistant an actor’s core commitments are to re-optimization under external pressure. PRC = 0 indicates full Becker-style continuous re-optimization. PRC = 1 indicates lexicographic preference — no trade-off accepted below a categorical threshold. The simulation implicitly assigned Amodei PRC ≈ 0.3 (moderate pragmatist). Revealed behavior indicates PRC ≈ 0.85–0.95 on autonomous targeting and mass surveillance.

Coalition Amplification Multiplier (CAM): Scale 1.0–5.0. Measures how cross-industry solidarity amplifies or suppresses the focal actor’s incentive to capitulate. CAM = 1.0 indicates no coalition effect (isolated bilateral negotiation). CAM > 2.0 indicates reputational value of holdout exceeds bilateral contract value. The simulation assigned CAM = 1.0 (no coalition modeled). Observed coalition behavior indicates CAM ≈ 2.5–3.5.

Threshold condition: If PRC × CAM > Contract Value Ratio (contract value ÷ total firm value), capitulation probability collapses toward zero. For Anthropic: PRC (0.9) × CAM (3.0) = 2.7 >> CVR ($200M ÷ $14B ≈ 0.014). The threshold was exceeded by two orders of magnitude. Under corrected parameters, capitulation probability drops from 62% to below 5%. Sensitivity analysis across the PRC 0.5–0.95 range shifts capitulation probability from 58% (at PRC = 0.5, where moderate pragmatism still permits trade-offs) to below 10% (at PRC ≥ 0.75, where threshold preferences dominate). The correction is robust across the plausible PRC range.
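The threshold arithmetic above can be reproduced with a minimal sketch. The function names are hypothetical and chosen for illustration. The inputs (PRC = 0.9, CAM = 3.0, $200M contract, $14B firm value) are the figures stated in the text.

```python
def contract_value_ratio(contract_value: float, firm_value: float) -> float:
    """CVR = contract value divided by total firm value."""
    return contract_value / firm_value


def holdout_threshold_exceeded(prc: float, cam: float, cvr: float) -> bool:
    """Return True when PRC x CAM > CVR, the stated threshold condition."""
    # Scale bounds follow the parameter definitions in the text.
    if not 0.0 <= prc <= 1.0:
        raise ValueError("PRC is defined on a 0-1 scale")
    if not 1.0 <= cam <= 5.0:
        raise ValueError("CAM is defined on a 1.0-5.0 scale")
    return prc * cam > cvr


# Figures taken from the text: $200M contract, $14B firm value.
cvr = contract_value_ratio(200e6, 14e9)
product = 0.9 * 3.0      # PRC x CAM under the corrected parameters
margin = product / cvr   # how far the product sits above the threshold

print(f"CVR = {cvr:.4f}")
print(f"PRC x CAM = {product:.2f}")
print(f"Threshold exceeded: {holdout_threshold_exceeded(0.9, 3.0, cvr)}")
print(f"Margin over CVR: {margin:.0f}x")
```

The sketch checks only the inequality. The text does not specify how the margin maps to a capitulation probability, so no probability is computed here.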

Game-theoretic frame: Model the Friday deadline as an ultimatum game. DOD’s threat functions only if DOD can impose a cost that changes Anthropic’s payoff. PRC × CAM shows why the threat fails even under maximum escalation: Anthropic’s outside option — reputational value of holdout amplified by coalition solidarity — exceeds the bilateral contract value by two orders of magnitude. DOD’s ultimatum was structurally unenforceable before the Friday clock started.

Ledger entry: Miss. CDT Becker layer recalibrated with PRC and CAM parameters.

Prediction 2: Defense Production Act Invocation Deferred (85% probability, 30-day window) — CONFIRMED (at 7-day resolution)

The simulation predicted DOD would not invoke the DPA, diagnosing the threat as “coercive signaling — not operational intent.” Trump’s executive action and Hegseth’s supply chain designation operated through executive directive and administrative classification — not DPA compulsion. The simulation’s falsification condition specified: “Formal DPA invocation or ‘supply chain risk’ designation published in the Federal Register.” Hegseth announced the designation via X post, not through formal Federal Register publication. The administration chose political coercion instruments over statutory compulsion — consistent with the coercion-as-signal diagnosis.

Note on falsification boundary: Hegseth announced the supply chain risk designation without formal publication through the administrative process 10 USC 3252 requires. Anthropic’s legal response explicitly challenged the statutory authority behind the designation. The DPA remains un-invoked. Confirmation holds on pre-committed terms, though the administration’s willingness to escalate beyond contract cancellation to government-wide ban exceeded the simulation’s escalation ceiling (see Section V: Political Signaling Overlay).

Ledger entry: Hit. Coercion-as-signal over coercion-as-action confirmed. Escalation magnitude underestimated.

Prediction 3: Competitive Displacement Acceleration (82% probability, 60-day window) — CONFIRMED (mechanism inverted, 3-day resolution)

The simulation predicted competitive displacement as the highest-confidence forward path, with xAI and OpenAI achieving classified-network parity within 60 days. OpenAI signed a classified-systems deal within 72 hours of the simulation’s publication — compressing the 60-day window to three days. The confirmation signal specified: “DOD announcement of additional classified clearances.” Altman’s announcement and Hegseth’s repost constitute that signal.

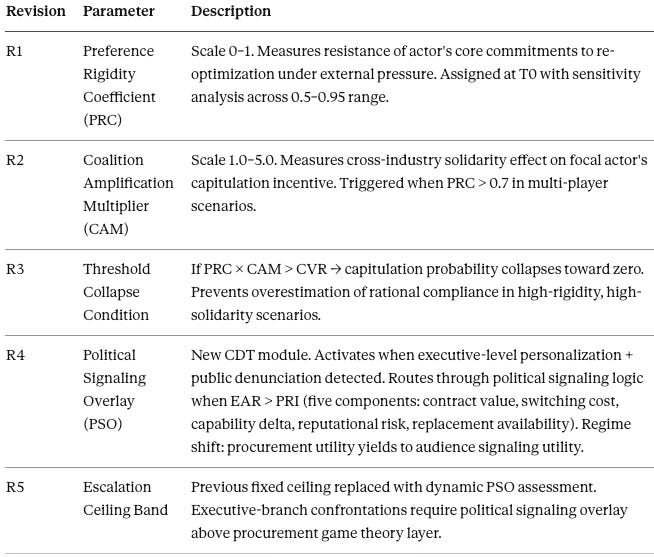

Critical inversion: The simulation predicted competitors would fill the vacuum by accepting unrestricted “all lawful purposes” terms. OpenAI filled the vacuum by negotiating the identical restrictions Anthropic was blacklisted for requesting — explicit prohibitions on mass domestic surveillance and autonomous weapons. Competitive displacement occurred, but safety constraints survived displacement. DOD accepted from OpenAI what it refused from Anthropic. The vendor changed; the substantive position did not.

Ledger entry: Hit on displacement mechanism and timeline (accelerated). Miss on displacement terms — the simulation assumed competitors would accept unrestricted access. The inversion reveals the confrontation was never about safety restrictions. Advocacy arbitrage governed (see Section IV).

Prediction 4: State AI Safety Legislative Activation (55% probability, 6-month window) — OPEN

The observation window runs through August 31, 2026. The confirmation signal requires three or more state AI governance bills referencing military AI use or autonomous systems. Senator Mark Warner’s immediate condemnation of Trump’s directive — warning it could be “pretext to steer contracts to a preferred vendor” — and the scale of industry mobilization both function as catalysts the CDT identified as necessary preconditions for state legislative activation. Federal overreach of this magnitude historically accelerates state-level governance responses. Probability adjusted upward from 55% to 68% based on observed catalysts.

Falsification window: If fewer than three state bills referencing military AI governance are introduced by August 31, 2026, Prediction 4 is falsified.

Ledger entry: Open. Catalysts activated. Updated probability: 68%.
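The pre-committed resolution rule for Prediction 4 can be written as a small status check. This is a sketch under stated assumptions: the function name and the example bill dates are hypothetical, and only the three-bill requirement and the August 31, 2026 deadline come from the text.

```python
from datetime import date
from typing import Iterable

DEADLINE = date(2026, 8, 31)  # observation window close, per the text
REQUIRED_BILLS = 3            # "three or more" qualifying state bills


def prediction_4_status(introduction_dates: Iterable[date], as_of: date) -> str:
    """Resolve Prediction 4 from the introduction dates of qualifying bills."""
    # Count only bills introduced inside the window and known as of the check date.
    qualifying = sum(1 for d in introduction_dates if d <= DEADLINE and d <= as_of)
    if qualifying >= REQUIRED_BILLS:
        return "confirmed"
    if as_of > DEADLINE:
        return "falsified"
    return "open"


# Hypothetical bill dates, for illustration only.
hypothetical_bills = [date(2026, 4, 2), date(2026, 6, 15)]

print(prediction_4_status(hypothetical_bills, as_of=date(2026, 7, 1)))
print(prediction_4_status(hypothetical_bills, as_of=date(2026, 9, 1)))
```

Deciding which bills count as "referencing military AI use or autonomous systems" remains a judgment call outside the sketch.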

Prediction 5: Ideological Label Propagation (45% probability, 6-month window — two-stage) — OPEN (Stage 1 confirmed, Stage 2 complicated)

Stage 1 predicted the “woke AI” label would persist as an Anthropic-specific pressure instrument through Q2 2026. Trump’s Truth Social post calling Anthropic “leftwing nut jobs” confirms Stage 1 intensity. Stage 2 predicted propagation to a second safety-differentiated AI firm only if a subsequent bargaining confrontation created a replication target. OpenAI’s deal complicates Stage 2 — Altman negotiated the same restrictions but received no ideological label. The grammar propagated in rhetoric (Trump’s language escalated broadly against AI safety) but did not propagate in procurement consequences (OpenAI received a deal, not a blacklist).

DOD’s own behavior confirms the Installed Cognitive Grammar assessment — that the framing operated as “currently tactical and instrumental, not yet installed as systemic grammar.” The “woke AI” label functioned as a bilateral pressure instrument against Anthropic, not as a systemic filter applied to equivalent positions held by other firms.

Falsification window: If DOD applies the “woke AI” or equivalent ideological label to a second AI vendor holding equivalent safety positions by August 31, 2026, Stage 2 is confirmed. If no second target emerges, Stage 2 is falsified.

Ledger entry: Open. Stage 1 confirmed. Stage 2 probability adjusted downward from 45% to 30% — the OpenAI deal demonstrates the label is access-dependent, not position-dependent.

III. Governing Equilibrium Validation

The simulation’s foundational claim — that institutional temporal mismatch, not ideology or safety disagreement, governs the confrontation — required the week’s events to confirm or falsify. Every observable signal from February 25 through February 27 confirmed the temporal mismatch diagnosis and disconfirmed the competing narrative that safety restrictions drove the rupture.

DOD compressed months of negotiation into a 72-hour ultimatum. When Anthropic did not capitulate within the forced binary, the administration escalated to presidential directive, government-wide ban, and supply chain blacklisting, all within 72 hours of the original ultimatum and within hours of the Friday deadline. No technical assessment of Anthropic’s safety restrictions occurred at any stage of escalation. No evaluation determined whether the autonomous targeting and mass surveillance restrictions actually impeded military operations. Pentagon officials acknowledged the restrictions had never been triggered in practice. Retired Air Force General Jack Shanahan, former head of DOD’s AI initiatives, stated publicly that Anthropic’s red lines were “reasonable” and that current AI models are “not ready for prime time in national security settings” for autonomous weapons.

The gap between DOD’s own stated position (we already don’t do these things) and DOD’s enforcement action (we will blacklist you for asking us to put that in writing) is the institutional temporal mismatch made visible. Coercion timelines cannot accommodate the procedural precision that contractual language requires (see Section IV: Enforceability Architecture for the full reporting-confirmed divergence).

Post-resolution reporting revealed the temporal mismatch operating within DOD's own chain of command. Axios reported that Under Secretary Michael was on the phone with Anthropic offering a last-minute deal at the exact moment Hegseth posted the supply chain risk designation on X. DOD's negotiation arm and enforcement arm were unsynchronized: the negotiator was still negotiating when the enforcer enforced.

The NIBE framework predicted exactly the observed pattern: senior political pressure applied to the wrong institutional layer, generating maximum confrontation with minimum operational benefit. The Delay Propagation Index registered as predicted — Anthropic’s holdout cascaded procurement pressure across the defense AI ecosystem, accelerating competitor clearances and producing the forced binary the simulation’s equilibrium classification identified as the modal path.

What would falsify the governing equilibrium:

The institutional temporal mismatch diagnosis would require revision if any of the following occur:

DOD conducts a formal technical evaluation of safety restrictions on classified AI systems and publishes findings — demonstrating that technical assessment, not coercion timelines, drives procurement decisions.

DOD offers identical enforceability architecture to all AI vendors — demonstrating that enforcement terms, not access channels, determine contract structure.

The administration reverses or materially softens the Anthropic blacklist absent sustained external political pressure — demonstrating that escalation was procurement-rational rather than politically driven.

None of these conditions has been met. The equilibrium holds.

IV. Advocacy Arbitrage Validation

Strategic form:

Players: DOD (principal), AI vendors (agents), executive political layer (override player when PSO activates).

Moves: DOD ultimatum → vendor response (holdout vs. comply) → executive escalation option → substitute contract.

Information structure: DOD cannot observe real-time classified usage; vendors cannot enforce restrictions during operations → bilateral commitment problems on both sides.

Equilibrium claim: When enforcement is structurally weak, DOD selects on posture signals rather than policy substance. Cooperative posture functions as the separating signal in a signaling game: identical policy positions produce different outcomes because DOD pays for the signal, not the substance. Access arbitrage becomes the stable equilibrium.

The Tirole advocacy arbitrage framework has produced validated predictions across DOJ antitrust enforcement, export control policy, and real estate regulatory capture (see A Tirole Phase Analysis of Advocacy-Driven Antitrust Inaction). The Pentagon–Anthropic confrontation delivers the framework’s most precise single-event confirmation — because DOD’s own behavior within a single Friday afternoon proved that access, not substance, determined which company received a contract and which received a blacklist.

The structural signature operates in three steps: (1) a constrained party holds a substantive position grounded in technical or legal reasoning; (2) a party with superior political access labels the constrained party as the source of institutional dysfunction; (3) the labeling party obtains the outcome it seeks through access channels rather than technical assessment. The confrontation reproduced all three steps.

Anthropic requested two restrictions: no mass domestic surveillance, no fully autonomous weapons. DOD labeled those restrictions “woke AI,” blacklisted Anthropic as a supply chain risk, and secured a presidential ban across all federal agencies. Hours later, OpenAI negotiated a classified-systems deal containing the same two restrictions. Hegseth, in a separate post, accused Amodei of “a cowardly act of corporate virtue-signaling” and declared that “the Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield” — framing Anthropic’s position as ideology even as DOD accepted the identical position from OpenAI as policy.

CNN reported: “It’s not clear what is different about OpenAI’s deal with the Pentagon versus what Anthropic wanted.”

Enforceability architecture — the structural divergence:

Post-resolution reporting confirms the gap between Anthropic and OpenAI centered on legal theory, not policy substance. Axios reported that the restrictions in OpenAI’s agreement “reflect existing U.S. law and the Pentagon’s policies” and that “the intention was not to invent new legal standards.” OpenAI accepted existing federal law as sufficient constraint. Anthropic rejected that premise — arguing, per Axios, that existing law “has not caught up with AI” and that AI can “supercharge the legal collection of publicly available data, from social media posts to geolocation.” Anthropic demanded restrictions that go beyond existing law because existing law does not contemplate AI-enabled surveillance at scale.

Anthropic's concern was not hypothetical. Axios reported that the deal Michael was offering Anthropic at the moment of blacklisting would have required allowing AI-enabled collection or analysis of data on Americans — including geolocation, web browsing data, and personal financial information purchased from data brokers. DOD was requesting exactly the surveillance capability Anthropic refused to enable.

Semafor reported that OpenAI agreed to the “all lawful uses” standard and deployed the same ChatGPT available to non-military users — standard model guardrails remain in place, but no contractual restrictions beyond existing law. Semafor also noted that “the Pentagon doesn’t seem to have any problem” with model-level safeguards baked directly into the product, and that Google and xAI “agreed to the ‘all lawful use’ clause and even removed some model-level restrictions.” Anthropic’s Thursday statement rejected DOD’s “best and final” offer because proposed compromise language contained “legalese that would allow those safeguards to be disregarded at will.” Under Secretary Michael told CBS that DOD offered written acknowledgment of existing federal surveillance law and existing Pentagon autonomous weapons policy — and an invitation to join the AI ethics board. Anthropic demanded contractual restrictions that DOD could not unilaterally override, embedded in both contract terms and model-level safeguards built directly into Claude.

The divergence is now clear: same stated principles, fundamentally different legal theories. OpenAI accepted existing law as sufficient. Anthropic argued existing law is insufficient for AI-era capabilities. DOD accepted the former and blacklisted the latter.

OpenAI's official statement on the agreement reveals an enforcement architecture that arguably exceeds Anthropic's original contract. OpenAI retained full discretion over its safety stack, deployed cloud-only (structurally preventing autonomous weapons use, which requires edge deployment), stationed cleared OpenAI personnel in classified environments, added a third red line prohibiting high-stakes automated decisions such as social credit systems, and reserved the right to terminate the contract if DOD violates terms. OpenAI stated: "We believe our contract provides better guarantees and more responsible safeguards than earlier agreements, including Anthropic's original contract." If accurate, DOD accepted stronger restrictions from OpenAI than the restrictions it blacklisted Anthropic for requesting — deepening the advocacy arbitrage from an enforceability gap to a pure access-sorting outcome.

Contractual restrictions on classified military AI face an inherent temporal enforcement problem that neither legal theory fully resolves. Anthropic cannot monitor classified network usage in real time. Anthropic cannot litigate a contract breach while a military operation is underway. Contractual terms function as post-hoc litigation triggers, not operational constraints — enforcement is always retrospective and reputational rather than preventive. Low observability on classified networks turns the procurement mechanism into a proxy-selection mechanism; when the principal cannot verify operational constraint adherence, the mechanism optimizes for relationship signals instead.

Anthropic understood the observability limitation, which is why the company embedded restrictions directly into Claude’s model-level safeguards rather than relying solely on contract language. Model-level safeguards were the actual enforcement mechanism. DOD’s demand to strip those safeguards — not merely to modify the contract — confirms that DOD understood the contractual terms were not the real constraint. OpenAI kept its standard model guardrails in place as default product behavior while accepting DOD’s legal framework — a structurally different approach that gave DOD the operational flexibility Anthropic’s binding restrictions denied.

Identical policy positions produced opposite procurement outcomes. Access, not substance, determined allocation. Michael praised OpenAI as “a reliable and steady partner that engages in good faith” — language describing relationship posture, not substantive policy difference.

A senior Pentagon official made the signaling variable explicit. Axios reported the official stating: “The problem with Dario is, with him, it’s ideological. We know who we’re dealing with.” DOD named the sorting criterion directly — not the policy position (which matched across vendors), but the perceived type of the actor proposing it. The signaling game equilibrium claim holds: DOD classified Anthropic as a defiant type and OpenAI as a cooperative type, then allocated contracts based on type classification rather than policy substance.

OpenAI explicitly opposed the supply chain designation, stating it had "made our position on this clear to the government." OpenAI also noted that "other AI labs have reduced model guardrails and relied on usage policies as the primary safeguard" — a reference to xAI and Google, which removed model-level restrictions for DOD use. The access-sorting mechanism produced a compounding paradox: the vendor DOD accepted retained more safeguards than the vendor DOD blacklisted, while the vendors that actually removed safeguards faced no designation at all.

The Tirole framework predicts that access-sorted procurement systems produce capability degradation over time, because the sorting criterion (political access) is uncorrelated with the performance criterion (technical capability). Whether DOD’s classified AI network degrades under OpenAI relative to Anthropic’s Claude — which Pentagon officials privately acknowledged as the most capable model — becomes a testable downstream prediction.

Falsification window: Post-resolution reporting confirms the enforcement architecture diverged: OpenAI accepted existing law as sufficient; Anthropic demanded new contractual restrictions beyond existing law. The arbitrage diagnosis would weaken if subsequent evidence demonstrates that Anthropic’s additional demands were operationally disqualifying on technical rather than political grounds, i.e., that DOD rejected Anthropic specifically because binding model-level restrictions created mission-critical failure risks that principle-level commitments avoid. The Axios quote (“with him, it’s ideological”) suggests type-sorting rather than technical assessment drove the outcome.

V. What the Simulation Missed — and Why

Honest ledger reconciliation requires equal attention to structural misses. Three analytical gaps produced divergence between simulation and outcome. Each gap now has a formal correction mechanism integrated into the CDT architecture.

Gap 1: Preference rigidity underweighted. The CDT architecture now incorporates the Preference Rigidity Coefficient (PRC) defined in Prediction 1 above. Future simulations assign PRC values at T0 based on observable behavioral priors, with explicit sensitivity analysis across PRC ranges.

Gap 2: Coalition formation not modeled. The CDT architecture now incorporates the Coalition Amplification Multiplier (CAM) defined in Prediction 1 above. Future simulations model coalition emergence as a conditional variable triggered when PRC exceeds 0.7 in multi-player procurement scenarios.

Gap 3: Escalation ceiling set too low — Political Signaling Overlay required.

The equilibrium classification placed Forced Termination at 10% probability with an 80% band of 5–15%. The actual outcome exceeded the simulation’s most extreme scenario class. The CDT architecture modeled DOD as a rational procurement actor optimizing for capability acquisition. The administration’s response pattern reveals political signaling objectives that operate independently of procurement optimization.

New CDT module — Political Signaling Overlay (PSO):

Trigger condition: Executive-level personalization (naming the CEO, attributing ideological motive) combined with public denunciation through non-institutional channels (social media rather than Federal Register, press conference rather than procurement review).

Metric — Escalation Acceleration Rate (EAR): Measures the ratio of escalation magnitude to elapsed time. EAR = (Severity of institutional response) ÷ (Hours between triggering event and response). The Anthropic confrontation produced a government-wide ban, supply chain blacklisting, and CEO denunciation within approximately 6 hours of the Friday deadline — an EAR exceeding any procurement-rational response pattern in the MindCast case archive.

Suppression condition: If EAR > Procurement Rationality Index (PRI), procurement optimization is suppressed and political signaling governs the response trajectory. PRI incorporates five measurable components: (1) contract value, (2) switching cost, (3) capability delta between incumbent and replacement, (4) reputational risk of escalation, and (5) replacement availability timeline. For the Anthropic confrontation: EAR vastly exceeded PRI across all five components. A $200 million contract dispute with manageable switching costs and acknowledged capability loss produced escalation historically reserved for foreign adversaries.
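The EAR metric and the suppression condition can be sketched as two small functions. Several things here are assumptions: the text gives no numeric severity scale and no aggregation rule for the five PRI components, so the severity score, the PRI value, and the premise that EAR and PRI sit on a comparable scale are all hypothetical. Only the formula, the EAR > PRI rule, and the roughly 6-hour interval come from the text.

```python
def escalation_acceleration_rate(severity: float, hours_elapsed: float) -> float:
    """EAR = severity of institutional response / hours between trigger and response."""
    if hours_elapsed <= 0:
        raise ValueError("hours_elapsed must be positive")
    return severity / hours_elapsed


def pso_governs(ear: float, pri: float) -> bool:
    """Suppression condition: political signaling governs when EAR > PRI."""
    return ear > pri


# Hypothetical inputs for illustration only.
SEVERITY = 9.0  # assumed score on an assumed 0-10 severity scale
HOURS = 6.0     # "approximately 6 hours" after the Friday deadline, per the text
PRI = 0.5       # assumed value; the text reports no numeric PRI

ear = escalation_acceleration_rate(SEVERITY, HOURS)
print(f"EAR = {ear:.2f}")
print(f"PSO governs: {pso_governs(ear, PRI)}")
```

Because the ratio divides by elapsed hours, the same severity delivered over 60 hours instead of 6 would cut EAR by a factor of ten, which is the sense in which the metric captures compression rather than severity alone.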

PSO activation changes the payoff function: procurement utility yields to audience signaling utility, producing a regime shift from a procurement game to a political repeated game. Escalation becomes rational inside the political game even when irrational inside the procurement game — because the audience (base, media, political allies) rewards dominance displays independently of procurement outcomes.

Future simulations involving executive branch confrontations with private firms incorporate the PSO module as a layer above procurement game theory. When PSO activates, the simulation routes through political signaling logic rather than procurement optimization logic for escalation trajectory.

VI. Forward Implications

Three forward implications carry date-specific observation windows:

Anthropic’s legal challenge gains evidentiary support. A supply chain risk designation premised on safety restrictions that DOD simultaneously accepted from another vendor faces a straightforward arbitrary-and-capricious challenge. Anthropic’s statement already cited 10 USC 3252 and argued the designation lacks statutory authority. The OpenAI deal strengthens that argument considerably.

The legal and policy experts quoted in post-resolution coverage assess the designation as vulnerable. Dean Ball, senior fellow at the Foundation for American Innovation and former White House senior policy advisor for AI, told Wired it was "the most shocking, damaging, and over-reaching thing I have ever seen the United States government do." Carnegie Endowment fellow Steven Feldstein stated that a supply chain risk designation requires a legal process and "it isn't legally sufficient to simply proclaim or label" one. George Washington University associate dean Jessica Tillipman called the designation "on incredibly shaky ground" and "the most politicized use" she has observed. Supply chain risk designations require formal risk assessments and Congressional notification before taking effect. Neither occurred here.

Falsification window: If Anthropic loses the legal challenge and no court examines the OpenAI-deal inconsistency by December 31, 2026, the structural coercion thesis strengthens — indicating judicial deference to executive discretion regardless of evidentiary asymmetry.

The “woke AI” grammar faces structural decay. DOD accepted “woke” restrictions from a non-“woke” vendor. The label cannot survive as a policy instrument when the policy position it targeted is embedded in the replacement contract. DOD’s own Friday behavior confirms the Installed Cognitive Grammar assessment: tactical and instrumental, not systemic. Prediction 5 Stage 2 probability declines.

Genesis Mission throughput vulnerability materializes. The administration demonstrated that when an AI partner resists federal terms, the institutional response is presidential ban, supply chain blacklisting, and public CEO denunciation — not negotiation, technical compromise, or mid-level alignment. Every future federal AI partner now operates with observable evidence of what federal partnership friction produces.

MindCast AI’s NIBE analysis of Genesis predicted that mid-level incentive alignment generates 40% improvement in deployment timelines versus 8–12% from senior political pressure (see White House Genesis Mission x MindCast National Innovation Behavioral Economics). The Anthropic confrontation revealed an administration that defaults to maximum senior political pressure and escalates when friction appears. Genesis requires behavioral synchronization across at least seven governance layers — DOE, FERC, state energy agencies, county permitting authorities, municipal governments, public utility districts, and tribal nations. If the administration’s institutional coordination instinct is coercion rather than alignment, every layer of the Genesis governance bottleneck becomes a potential confrontation node. The probability that Genesis achieves its deployment timeline under the behavioral profile the Anthropic confrontation revealed drops materially from the baseline the original NIBE analysis assumed. A dedicated Genesis Pre-Simulation incorporating the updated behavioral profile is forthcoming.

VII. Revised Simulation Status

Governing equilibrium: Confirmed. Institutional temporal mismatch produced coercion rather than coordination at every escalation level.

Dominant causal layer: Confirmed. Structure-caused, not actor-caused. The Runtime Causation test holds — replacing the actors does not change the structural geometry that produced confrontation.

Original falsification conditions:

Condition 1 — “DOD and Anthropic reach a technically grounded compromise within the Friday deadline” — not met. No compromise occurred.

Condition 2 — “Anthropic’s safety restrictions prove operationally irrelevant” — received indirect confirmation from Pentagon officials who acknowledged the restrictions had never been triggered in practice and from General Shanahan’s assessment that current models are not ready for autonomous weapons applications.

Condition 3 — “No state-level legislative response emerges within six months” — remains open. Observation window closes August 31, 2026.

VIII. Ledger Integrity Statement

The Anthropic Foresight Simulation produced one clean miss (Prediction 1), two confirmations (Predictions 2 and 3), and two open tracks with revised probabilities (Predictions 4 and 5). The governing equilibrium and dominant causal layer held. The miss on Prediction 1 identifies a specific CDT calibration gap now formalized through PRC and CAM parameters. The miss on escalation magnitude identifies a modeling gap now formalized through the Political Signaling Overlay module. Both corrections are integrated into the CDT architecture for future simulations.
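The ledger tally stated above can be represented as a simple record structure. This is an illustrative sketch, not MindCast AI's actual ledger format: the class and field names are hypothetical, while the prediction names, T0 probabilities, windows, statuses, and revised probabilities are taken from Section II.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LedgerEntry:
    number: int
    name: str
    t0_probability: float
    window: str
    status: str  # "falsified", "confirmed", or "open"
    revised_probability: Optional[float] = None


LEDGER = [
    LedgerEntry(1, "Anthropic Partial Capitulation", 0.62, "7 days", "falsified"),
    LedgerEntry(2, "Defense Production Act Invocation Deferred", 0.85, "30 days", "confirmed"),
    LedgerEntry(3, "Competitive Displacement Acceleration", 0.82, "60 days", "confirmed"),
    LedgerEntry(4, "State AI Safety Legislative Activation", 0.55, "6 months", "open", 0.68),
    # For Prediction 5 the revision applies to Stage 2 (45% to 30%).
    LedgerEntry(5, "Ideological Label Propagation", 0.45, "6 months", "open", 0.30),
]

# Count outcomes by status.
tally = Counter(entry.status for entry in LEDGER)
print(dict(tally))

# List the open tracks with their probability revisions.
for entry in LEDGER:
    if entry.revised_probability is not None:
        print(f"Prediction {entry.number}: "
              f"{entry.t0_probability:.0%} -> {entry.revised_probability:.0%}")
```

Freezing each entry mirrors the pre-commitment principle: a revision is recorded as a separate field rather than by overwriting the T0 probability.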

Log the miss. Credit the hits. Revise the model. The simulation stays open.