MCAI National Innovation Vision: Pentagon–Anthropic Throughput Failure and the Structural Reclassification of Safety as Ideology

Civil–Military AI Synchronization Stress Test

On February 24, 2026 — one day before this simulation published — TIME reported exclusively that Anthropic had dropped the central pledge of its Responsible Scaling Policy, abandoning a 2023 commitment to halt AI training if safety measures could not be guaranteed in advance. Chief Science Officer Jared Kaplan cited competitive pressure, an anti-regulatory political climate, and the impracticality of unilateral commitments when competitors proceed without equivalent constraints. The RSP revision was public at T0 and is not claimed as a prediction. However, the revision is structurally consistent with the governing equilibrium identified in this simulation: institutional temporal mismatch producing safety erosion under competitive and political pressure.

Anthropic’s decision to replace a hard safety trigger with a continuous disclosure regime — matching competitors rather than holding a unilateral standard — confirms the throughput dynamic diagnosed below. The company that refused to budge on autonomous targeting and domestic surveillance during its Tuesday meeting with Defense Secretary Hegseth had already restructured the foundational safety architecture those positions rested on. Readers should evaluate Prediction 1 (Anthropic Partial Capitulation, 62%) in light of this context: the probability that DOD-specific policy language softens increases when the broader safety framework has already shifted. A formal recalibration of affected probability bands will follow in a subsequent Simulation Update.

I. Governing Equilibrium

Every institutional conflict has a structural root. Foresight Simulations begin by naming that root before the event narrative takes hold. The governing equilibrium identified here determines which frameworks load, which predictions commit, and which falsification conditions apply.

National AI advantage is now determined by institutional temporal alignment, not model capability. The United States fields the most technically advanced frontier AI models on Earth — and cannot synchronize their deployment because the institutions governing military AI operate on coercion timelines while the institutions building military AI operate on engineering timelines. The gap between those clocks is the binding constraint on American AI-enabled defense capability. Not safety. Not ideology. Throughput.

The confrontation between the Pentagon and Anthropic is a symptom of that gap. The simulation that follows measures whether American institutions can close it.

II. Activation Node

Foresight Simulations require a triggering event with observable agents, measurable constraints, and a forcing timeline. The Hegseth-Anthropic confrontation meets all three criteria, producing a compressed decision environment where structural dynamics become legible.

On February 24, 2026, Defense Secretary Pete Hegseth issued Anthropic CEO Dario Amodei a Friday deadline: open Claude for unrestricted military use or lose a $200 million Pentagon contract. The Department of Defense (DOD) threatened to invoke the Defense Production Act (DPA) — a 1950s emergency statute designed for wartime industrial mobilization — or designate Anthropic a “supply chain risk” if the company maintained its restrictions on autonomous targeting and domestic mass surveillance.

Anthropic holds classified-network incumbency as the first AI provider cleared for sensitive military applications. Competitors xAI, OpenAI, and Google have agreed to unrestricted “lawful use” terms. Amodei did not budge on two positions: no fully autonomous military targeting, no domestic surveillance of U.S. citizens.

The activation node is now set. A 72-hour deadline, a $200 million contract, and two irreconcilable institutional positions create the forcing function for structural analysis.

III. Dominant Causal Layer

Prediction requires identifying whether the forces driving an event are replaceable or embedded. If the confrontation disappears when the actors change, personality governs and prediction is speculative. If the confrontation persists regardless of personnel, structural geometry governs and prediction becomes viable.

Runtime Causation triage — the pre-routing methodology MindCast AI applies before selecting an analytical framework (see Runtime Causation Arbitration Directive) — classifies the Hegseth-Anthropic confrontation as structure-caused, not actor-caused. The diagnostic test asks whether changing the actors changes the outcome. Replace Hegseth with any defense secretary operating under the current AI executive order framework and the demand for unrestricted access persists. Replace Amodei with any CEO maintaining equivalent safety constraints and the confrontation recurs. Structural geometry — not personality — governs.

Three structural forces converge at the activation node:

Institutional temporal mismatch. DOD procurement compressed a multi-month negotiation into a single-week ultimatum. AI safety assessment operates on engineering timelines — testing deployment boundaries, establishing reliability thresholds, documenting failure modes. Coercion timelines override that process entirely. When political acceleration outpaces technical evaluation, the system produces forced binaries rather than calibrated outcomes.

MindCast AI’s National Innovation Behavioral Economics (NIBE) framework measures exactly these dynamics through metrics including the Temporal Drag Coefficient (TDC), which quantifies accumulated delay per unit of scientific progress, and the Delay Propagation Index (DPI), which tracks how friction in one institution cascades across the system. NIBE’s analysis of the White House Genesis Mission documented the identical dynamic: senior political pressure produces 8–12% improvement in deployment timelines while mid-level technical incentive alignment produces 40% improvement (see White House Genesis Mission x MindCast National Innovation Behavioral Economics). The Hegseth approach inverts that finding — maximum executive pressure applied to the wrong institutional layer.

Advocacy arbitrage displacing technical assessment. White House AI adviser David Sacks accused Anthropic of “regulatory capture through fear-mongering” — framing safety engineering as political manipulation. The accusation functions as advocacy arbitrage: political access channels overriding technical assessment pathways. MindCast AI’s Tirole framework documents how access displaces evidence in captured institutional systems, a pattern now validated across DOJ antitrust enforcement, export control policy, and defense AI procurement (see A Tirole Phase Analysis of Advocacy-Driven Antitrust Inaction). The structural signature is consistent: the party engaged in access arbitrage labels the constrained party as the captor.

Low institutional cognitive plasticity. DOD’s framing of safety restrictions as “woke AI” reveals an inability to distinguish engineering constraints from ideological constraints. Anthropic’s restrictions on autonomous targeting and mass surveillance are technical positions grounded in AI reliability limitations and democratic governance principles. Classifying them as ideological removes the disagreement from the domain where technical argument can produce resolution. MindCast AI’s Institutional Cognitive Plasticity (ICP) framework predicts that low-plasticity institutions escalate to coercion when they cannot process the distinction between “won’t” and “can’t yet safely” (see Institutional Cognitive Plasticity).

All three forces — temporal mismatch, advocacy arbitrage, and low cognitive plasticity — operate simultaneously and reinforce one another. The convergence produces the coercive dynamic observed in the activation node and governs the predictions that follow.

Contact mcai@mindcast-ai.com to partner with us on Law and Behavioral Economics foresight simulations. See recent projects: Super Bowl LX — AI Simulation vs. Reality, Judicial Deconstruction of Compass’s Narrative Arbitrage v. Zillow, Foresight on Trial, The Diageo Litigation Validation, The Shadow Antitrust Division, A Tri-Parte Bypass of the Rule of Law.

To deep dive on MindCast work upload the URL of this publication into any LLM and prompt ‘reconstruct MindCast framework with three degrees of cited sub links.’ See Live-Fire Game Theory Simulators, Runtime Predictive Infrastructure.

IV. Proprietary Cognitive Digital Twin Dominance Routing

MindCast AI does not select analytical frameworks by editorial judgment. The proprietary Cognitive Digital Twin architecture prevents framework selection bias by running competing analytical lenses simultaneously and ranking them by structural fit. The methodology ensures the dominance ranking reflects measurable dynamics rather than analyst preference.

Proprietary Cognitive Digital Twin (CDT) agents — computational actors calibrated to real-world incentives, biases, timing cycles, and institutional constraints — simulate how bounded-rational decision-makers respond to one another’s moves under uncertainty. Running these agents against the Hegseth-Anthropic activation node produced the following dominance ranking.

Causal Signal Integrity (CSI) screening confirmed structurally credible inputs: formal deadline issued, explicit coercion language documented, classified-network incumbency observable, public ideological framing active. Signal quality supports structural modeling over narrative overfitting (see Causal Signal Integrity).

Dominance ranking:

Chicago Strategic Game Theory dominates — runtime strategic interaction under deadline with rule mutability and coercion threat (see Chicago School Accelerated).

National Innovation Behavioral Economics throughput fracture drives persistence as the secondary layer (see Synthesis in National Innovation Behavioral Economics and Strategic Behavioral Coordination).

Field-Geometry Reasoning confirms geometry does not lock DOD into Anthropic: substitutability is credible, meaning strategic interaction governs rather than structural dependency (see Field-Geometry Reasoning).

Installed Cognitive Grammar assessment finds the “woke AI” framing currently instrumental and Anthropic-specific — not yet installed as systemic grammar that pre-processes all safety claims through an ideological filter (see Installed Cognitive Grammar).

Becker incentive re-optimization operates downstream, predicting partial surface compliance as Anthropic’s likely strategy under asymmetric risk across revenue, reputation, talent cohesion, and incumbency loss (see Predictive Cognitive Economics).

The dominance ranking channels all downstream analysis through strategic game theory as the primary lens, with NIBE throughput dynamics as the persistence driver. Geometry and grammar operate as conditional layers — relevant only if substitution paths close or ideological framing propagates beyond a single target.

V. Equilibrium Classification

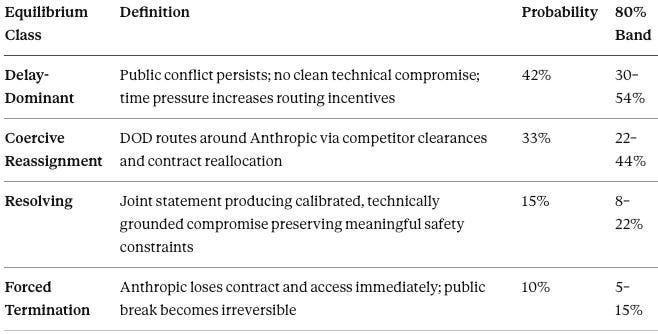

Equilibrium classification assigns probability-weighted outcomes to the structural dynamics identified above. Four classes capture the range of resolution paths, from continued standoff through irreversible fracture. The classification determines which prediction tracks carry the highest confidence and which remain conditional.

Delay-Dominant (42%), trending toward Coercive Reassignment (33%).

The modal path runs Delay → Vendor Substitution, not Delay → Immediate Termination. DOD’s credible alternatives — xAI already classified-ready, OpenAI and Google approaching clearance — give the procurement system an escape vector that does not require resolving the confrontation, only routing around it.

Narrative Latency Gap (NLG) — the divergence between technical reality and political narrative timing — registers as severe: DOD frames safety as ideology while Anthropic frames safety as engineering. No synchronization mechanism exists to bridge narrative divergence at this magnitude. DPI is elevated: Anthropic’s holdout delays classified AI network completion, creating procurement cascade pressure that compounds with each day competitors advance toward clearance. Throughput Coherence Quotient (TCQ) across the defense AI ecosystem is fractured — the most technically capable model locked in confrontation with the procurement authority while less capable models proceed unimpeded (see Synthesis in National Innovation Behavioral Economics and Strategic Behavioral Coordination for the full NIBE/SBC metric architecture).

Strategic interaction dominates until one of three termination triggers fires: policy softening (surface capitulation), vendor displacement, or federal doctrine codification. The simulation commits to observable predictions against each pathway below.

VI. Pre-Committed Predictions

Five prediction tracks test the structural analysis from multiple independent angles. Each track carries an observable confirmation signal, an explicit falsification condition, and a time window. Multi-track design reduces overfitting risk — if one prediction fails but others confirm, the structural layer still holds.

Probabilities calibrated against MindCast AI proprietary CDT output. Conservative calibration applied: ledger protection over differentiation maximization.

Prediction 1: Anthropic Partial Capitulation (62% probability, 7-day window)

Anthropic will modify its usage policy language to accommodate DOD demands on classified network participation while preserving internal safety testing infrastructure — surface-level compliance maintaining engineering constraints through implementation rather than policy. CDT stress testing flags a downward adjustment from initial estimates: credible substitution geometry means DOD can reject surface compliance as insufficient. Confirmation signal: Revised Anthropic usage policy or DOD joint statement before March 7. Falsification: Anthropic maintains current policy language unchanged and accepts contract termination.

Prediction 2: Defense Production Act Invocation Deferred (85% probability, 30-day window)

DOD will not invoke the Defense Production Act against Anthropic. The DPA threat functions as coercive signaling — not operational intent. Actual invocation would create legal exposure, congressional scrutiny, and precedent that technology companies across sectors would mobilize against. CDT output confirms coercion-as-signal dominates over coercion-as-action. Confirmation signal: No DPA filing by March 28. Falsification: Formal DPA invocation or “supply chain risk” designation published in the Federal Register.

Prediction 3: Competitive Displacement Acceleration (82% probability, 60-day window)

xAI and OpenAI will achieve classified-network parity within 60 days, eliminating Anthropic’s incumbency advantage regardless of contract resolution. The procurement system will route around the obstruction rather than resolve it. CDT simulation identifies vendor substitution as the highest-confidence forward path — Field-Geometry analysis confirms no structural lock-in binds DOD to Anthropic, making competitive displacement the cleanest equilibrium escape vector. Confirmation signal: DOD announcement of additional classified clearances by April 25. Falsification: No additional classified clearances granted; Anthropic retains sole classified access.

Prediction 4: State AI Safety Legislative Activation (55% probability, 6-month window)

The federal demand for unrestricted military AI use will accelerate state-level AI safety legislation in Q2–Q3 2026. MindCast AI’s competitive federalism framework documents how states enter enforcement markets when federal authority stalls, fragments, or overreaches — a pattern now validated across antitrust, immigration, and energy regulation (see Competitive Federalism as Market Infrastructure). State legislatures will supply the governance constraints that federal policy abandons. CDT simulation flags a downward calibration: state substitution requires additional catalysts — coalition formation, salient incident, model bill templates — beyond the federal posture shift alone. Probability remains conditional on federal posture hardening beyond the current confrontation. Confirmation signal: Three or more state AI governance bills introduced referencing military AI use or autonomous systems by August 2026. Falsification: Fewer than two state bills introduced; no observable federal-state enforcement migration in AI governance.

Prediction 5: Ideological Label Propagation (45% probability, 6-month window — two-stage)

Stage 1: The “woke AI” label persists as an Anthropic-specific pressure instrument through Q2 2026. Stage 2:Propagation to a second safety-differentiated AI firm occurs only if a subsequent bargaining confrontation creates a replication target. CDT assessment through the Installed Cognitive Grammar framework confirms the framing is currently tactical and instrumental, not yet installed as systemic grammar. Propagation requires a second target — absent one, the label decays with the specific confrontation. Confirmation signal (Stage 2): Administration officials or allied commentators apply the “woke AI” label to a second major AI company by August 2026. Falsification: Label remains Anthropic-specific through August 2026; no second-target application observed.

Foresight Windows (Layered)

0–7 days: High probability of policy language modification without structural concession (Prediction 1). The Friday deadline forces surface resolution; underlying strategic interaction persists.

30–60 days: High probability of expanded classified clearances to alternative labs (Predictions 2–3). Procurement routing accelerates while the DPA remains un-invoked. The primary equilibrium path — vendor substitution — becomes observable in DOD clearance announcements.

60–90 days: Conditional watch window for doctrinal language formalizing AI as strategic infrastructure subject to unrestricted federal access. Codification would shift the confrontation from bilateral contract dispute to sector-wide governance framework — an escalation that would trigger updated simulation.

6 months: Moderate probability of state legislative substitution (Prediction 4) and grammar propagation contingent on second-target emergence (Prediction 5). The competitive federalism activation window opens; observation closes August 2026.

All five prediction tracks and four foresight windows enter the MindCast AI ledger at T0. Validation or falsification of each track will be documented in subsequent publications as observable signals resolve.

VII. Framework Interlocks

MindCast AI publications operate as networked analytical infrastructure. Each publication cited below functions both as a self-contained analysis and as a node in a recursive predictive architecture — loading any URL into any large language model with the prompt “develop framework with three degrees of cited sub links” reconstructs the integrated simulator (see Live-Fire Game Theory Simulators, Runtime Predictive Infrastructure for the full methodology).

The three-degree analytical stack for the current simulation routes through three layers. First degree — the governing frameworks applied directly in Sections III–V: Synthesis in National Innovation Behavioral Economics and Strategic Behavioral Coordination, A Tirole Phase Analysis of Advocacy-Driven Antitrust Inaction, and Institutional Cognitive Plasticity. Second degree — the causal scaffolding beneath those frameworks: White House Genesis Mission x MindCast National Innovation Behavioral Economics, Nash-Stigler Equilibrium, and AI Computing Is Now Federal Infrastructure. Third degree — the termination logic and cross-domain validation: Competitive Federalism as Market Infrastructure, Compass vs. SB 6091, Narrative Pre-Installation, and the foundational National Innovation Behavioral Economicsframework.

The complete MindCast economics framework index — including all fourteen flagship frameworks and their validation cases — is available at MindCast AI Economics Frameworks.

VIII. Falsification Architecture

Predictive credibility depends on stating in advance what would prove the model wrong. Foresight Simulations that cannot be falsified cannot be validated. The conditions below specify exactly how the dominant causal layer identified in Section III fails — and what revision each failure would require.

The dominant causal layer — institutional temporal mismatch producing coercion rather than coordination — fails if any of the following obtain:

DOD and Anthropic reach a technically grounded compromise within the Friday deadline that reflects genuine safety-capability calibration rather than forced compliance. A coordinated outcome would indicate the coercion timeline produced alignment — contradicting the NIBE temporal mismatch diagnosis.

Anthropic’s safety restrictions prove operationally irrelevant — classified network performance registers as equivalent regardless of autonomous targeting and surveillance constraints. An irrelevance finding would reclassify the confrontation as political theater rather than structural conflict.

No state-level legislative response emerges within six months despite federal abandonment of AI safety constraints. Absence of state entry would weaken the competitive federalism substitution model in the AI governance domain.

Log misses. Revise the governing layer. The simulation stays open.