MCAI Innovation Vision: From Cybernetic Proof to Simulation Infrastructure

Edge-Domain Validation, the Super Bowl LX Experiment, and the Rise of Institutional Simulation

The MCAI Innovation Vision series develops the architecture of predictive institutional intelligence across three installments. (MindCast Predictive Cybernetics Suite)

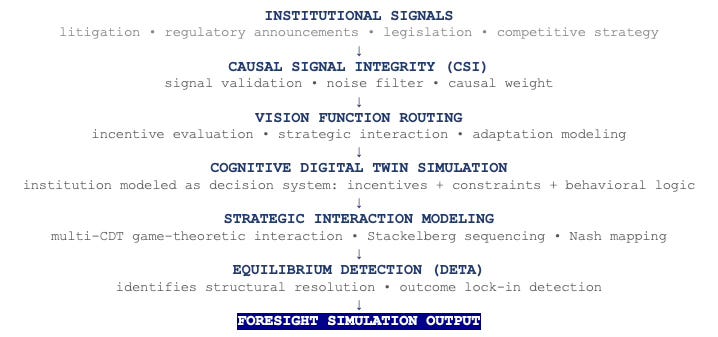

Installment I, Predictive Institutional Cybernetics, introduces the runtime architecture—Cognitive Digital Twins, Causal Signal Integrity, Vision Function routing, and equilibrium detection—showing how institutional decision systems can be modeled computationally before outcomes materialize.

Installment II, The Cybernetic Foundations of Predictive Institutional Intelligence, situates that architecture within the intellectual lineage running from Wiener’s cybernetics and the Macy Conferences through Hayek’s information theory of markets and RAND’s political-military simulation efforts.

Installment III completes the argument by examining how simulation systems move from proof environments to real-world infrastructure, documenting the NFL validation arc culminating in Super Bowl LX and outlining the structural case for institutional simulation as the next analytical infrastructure layer in law, regulatory strategy, and market analysis.

Together, the three installments form a runtime module that any LLM can use to apply MindCast AI’s cybernetic foresight simulation framework.

I. The Question Every Simulation System Must Answer

Every simulation system faces the same credibility problem. The system produces outputs before reality confirms or denies them. Audiences have no reason to trust those outputs until a track record accumulates. Building that track record requires a proof environment — an arena where predictions can be tested repeatedly, outcomes are unambiguous, and the results are publicly observable.

Installment I of this series established the runtime architecture of predictive institutional cybernetics: Cognitive Digital Twins, Causal Signal Integrity, the five-layer causation stack, and Vision Function routing. (Installment I) Installment II traced the intellectual lineage from Wiener and the Macy Conferences through Hayek and RAND. (Installment II) Neither installment addressed the question that determines whether any analytical system achieves real-world adoption: how does a simulation system move from promising architecture to institutional infrastructure?

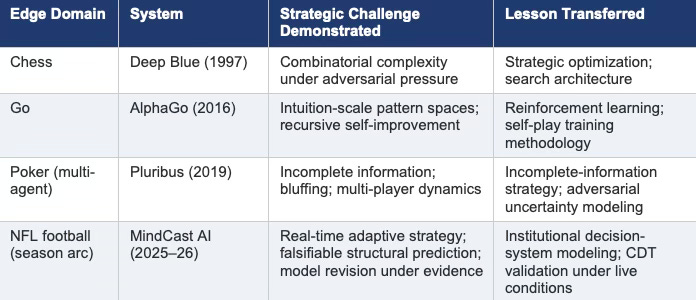

Two historical patterns answer that question. The first is the edge-domain validation arc — the path every major simulation system has followed from contained proof environment to broader credibility. The second is the infrastructure adoption curve — the trajectory through which simulation becomes indispensable rather than optional in high-stakes decision domains. MindCast AI’s NFL validation record connects both patterns in a single, publicly observable proof sequence that ran across an entire season before resolving at Super Bowl LX.

Installment III develops both patterns in full, documents the NFL arc that produced the Super Bowl prediction, and closes with the structural argument for institutional simulation as the next infrastructure layer in law, regulatory strategy, and market analysis.

CORE DEFINITION — COGNITIVE DIGITAL TWIN (CDT)

A Cognitive Digital Twin is a computational model of an institution that encodes its incentives, decision logic, information-processing behavior, constraint geometry (Constraint Geometry), and strategic interaction patterns with other institutions. Each CDT ingests structural inputs derived from an institution’s legal exposure, regulatory environment, competitive position, and behavioral tendencies inferred from historical conduct. Incoming signals update the CDT continuously. The simulation then generates projected response trajectories representing the range of institutional decisions likely to emerge under current constraints. CDTs model what the structural logic of an institution’s situation compels it to do, not what it says it will do.
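The definition describes a loop: structural inputs set incentives, constraint geometry prunes the feasible options, incoming signals update the model, and the twin emits a range of likely responses. The Python sketch below illustrates that loop under heavily simplifying assumptions. An institution is reduced to a dictionary of hypothetical action weights, constraint geometry is modeled as a set of blocked actions, and "projected response trajectories" are approximated by a softmax distribution over the remaining actions. The class and method names (`CognitiveDigitalTwin`, `ingest_signal`, `constrain`, `project_responses`) are illustrative inventions, not MindCast's operational specification.

```python
import math
from dataclasses import dataclass, field


@dataclass
class CognitiveDigitalTwin:
    """Toy Cognitive Digital Twin (hypothetical sketch, not MindCast's code)."""

    name: str
    # Incentive weight per candidate action; higher means more attractive.
    incentives: dict[str, float]
    # Actions ruled out by the institution's constraint geometry.
    blocked: set[str] = field(default_factory=set)
    # Softmax temperature: lower values concentrate probability on the top action.
    temperature: float = 1.0

    def ingest_signal(self, action: str, delta: float) -> None:
        """Shift one action's incentive weight in response to an incoming signal."""
        self.incentives[action] = self.incentives.get(action, 0.0) + delta

    def constrain(self, action: str) -> None:
        """Remove an action from the feasible set."""
        self.blocked.add(action)

    def project_responses(self) -> dict[str, float]:
        """Return a probability distribution over the still-feasible actions."""
        feasible = {a: w for a, w in self.incentives.items() if a not in self.blocked}
        if not feasible:
            return {}
        top = max(feasible.values())
        # Subtracting the max before exponentiating keeps the softmax numerically stable.
        scores = {a: math.exp((w - top) / self.temperature) for a, w in feasible.items()}
        total = sum(scores.values())
        return {a: s / total for a, s in scores.items()}


# Hypothetical usage: an agency weighing three enforcement responses.
agency = CognitiveDigitalTwin("agency", {"settle": 1.0, "litigate": 0.5, "close": 0.0})
agency.ingest_signal("litigate", 1.0)  # a new signal strengthens the litigation incentive
agency.constrain("close")              # a political constraint removes the exit option
print(agency.project_responses())
```

A softmax is only one way to turn weights into a response distribution. It is used here because it keeps every feasible action possible while favoring the strongest incentives, which loosely mirrors the idea of a range of likely decisions rather than a single point forecast.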

Every reference to a CDT throughout this paper refers to this architecture. Installment I develops the full operational specification: mindcast-ai.com/p/predictive-institutional-cybernetics

Figure 1. MindCast runtime architecture stack. Full operational specification: mindcast-ai.com/p/predictive-institutional-cybernetics

II. The Edge-Domain Arc: How Simulation Systems Earn Trust

Almost every consequential simulation system in history earned credibility by proving itself in a contained, high-frequency environment before deployment in the domains where it ultimately mattered. This series calls these environments edge domains: arenas where models face real strategic complexity, outcomes are unambiguous, and tests repeat frequently enough to distinguish genuine predictive power from luck.

Chess produced the first famous validation. Deep Blue’s 1997 defeat of Garry Kasparov did not merely demonstrate that a machine could play chess at world-class level. It demonstrated that computational systems could navigate strategic environments characterized by astronomical combinatorial complexity, adversarial adaptation, and real-time decision pressure — properties that looked qualitatively different from prior AI capabilities. The chess board was the edge domain. The lesson transferred to optimization, search, and strategic reasoning broadly.

Go extended the arc. When AlphaGo defeated Lee Sedol in 2016, the strategic space involved was orders of magnitude larger than chess. More importantly, Go had long been considered the domain where human intuition was irreplaceable — where pattern recognition and positional judgment operated beyond what explicit computation could reach. AlphaGo’s victory demonstrated that machine learning could operate in spaces where human intuition had been the only viable guide. The Go board was the edge domain. The lesson transferred to reinforcement learning architecture, self-play training, and the design of systems that improve through recursive experience.

Poker extended the arc again in a structurally different direction. Pluribus, the six-player no-limit Texas hold’em system developed at Carnegie Mellon and Facebook AI Research, demonstrated strategic capability under incomplete information, adversarial bluffing, and multi-player dynamics simultaneously. Where chess and Go involve perfect information, poker introduces hidden state: opponents hold cards you cannot see and pursue strategies you cannot directly observe. Pluribus demonstrated that simulation could navigate that additional layer of uncertainty at competitive levels. The poker table was the edge domain. The lesson transferred to negotiation modeling, adversarial strategy under uncertainty, and incomplete-information game theory.

Each edge domain compressed strategic complexity into a fast-feedback environment. Chess games end in hours. Go matches resolve in days. Poker evaluations produce thousands of hands. The compression is the point: simulation systems need repeated trials to accumulate the validation record that transfers credibility to slower-moving domains.
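Why repeated trials matter can be made concrete with a one-sided binomial test: how likely is a forecaster with no real skill to score at least as well as the observed record? The sketch assumes each prediction is an independent call that a no-skill forecaster gets right with probability 0.5. Real game picks are neither independent nor coin flips (favorites win more often), so that baseline is a simplifying assumption, and the function name is hypothetical.

```python
from math import comb


def chance_tail_probability(correct: int, trials: int, p_chance: float = 0.5) -> float:
    """P(X >= correct) for X ~ Binomial(trials, p_chance).

    The probability that a no-skill forecaster, whose calls are independent and
    each right with probability p_chance, scores at least `correct` of `trials`.
    """
    return sum(
        comb(trials, k) * p_chance**k * (1 - p_chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


# Same 80% hit rate, very different evidential weight.
print(chance_tail_probability(8, 10))    # small sample: about 0.055
print(chance_tail_probability(80, 100))  # larger sample: far below 1e-6
```

Both records show an 80% hit rate, but only the longer one makes luck an implausible explanation. That is the statistical reason fast-feedback environments, which resolve many trials quickly, can build credibility that slow domains cannot.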

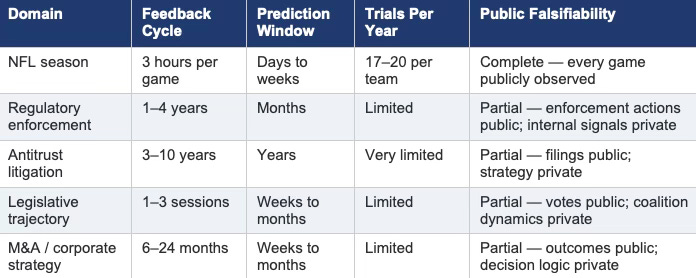

Institutional systems — regulatory enforcement, litigation outcomes, legislative trajectories — produce feedback over months or years. No simulation system can build a validation record in those domains quickly enough to establish credibility before deployment. Edge domains solve that problem. The NFL season produces seventeen regular-season games plus playoffs over five months, each with publicly observable outcomes and published pre-game predictions. MindCast’s 2025–26 NFL validation arc functioned as precisely the compressed proof environment the institutional credibility argument required.

Contact mcai@mindcast-ai.com to partner with us on Predictive Cognitive AI in Law and Behavioral Economics. For a deeper dive into MindCast’s work on Cybernetic Foresight Simulations, upload the URL of this publication into any LLM and prompt: ‘reconstruct MindCast framework with three degrees of cited sub links.’ See Live-Fire Game Theory Simulators: Runtime Predictive Infrastructure.

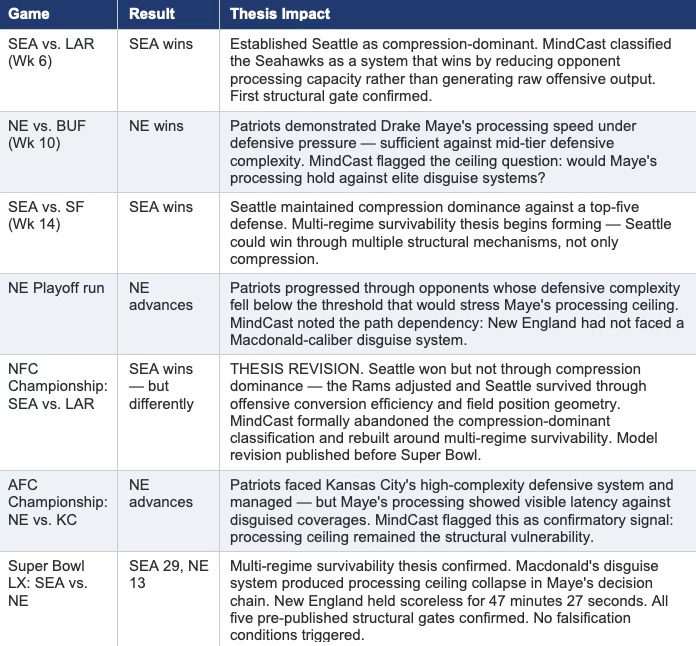

III. The NFL Arc: A Season-Long Validation Record

The MindCast NFL validation sequence ran from the 2025 regular season through Super Bowl LX. The goal was not to build a sports analytics product. The goal was to generate a public, timestamped, falsifiable prediction record across a domain with fast feedback cycles — producing evidence that the Cognitive Digital Twin architecture performs under real strategic pressure before claiming the same capability in institutional domains where outcomes take years to materialize.

The architecture applied to football is structurally identical to the architecture applied to institutional analysis. Coaching staffs function as Cognitive Digital Twins: decision systems with identifiable incentive structures, documented behavioral tendencies, strategic interaction with adversarial opponents, and adaptation patterns across a season. Defensive schemes function as constraint geometry. Play-calling sequences function as strategic interaction. Game outcomes function as equilibrium resolution. The NFL season provided seventeen regular-season trials per team, with every prediction published in advance and every outcome publicly verifiable. (Super Bowl LX pre-game analysis)

KEY GAMES IN THE MINDCAST SUPER BOWL LX THESIS DEVELOPMENT

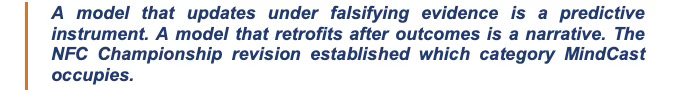

The NFC Championship model revision deserves explicit attention because it is the most analytically important moment in the sequence. MindCast had classified Seattle as compression-dominant through Week 14. The NFC Championship falsified that classification — Seattle won, but through a different mechanism than the compression thesis predicted. Rather than retrofit the original thesis to fit the outcome, MindCast published a formal revision before the Super Bowl, abandoning the compression-dominant label and rebuilding around multi-regime survivability.

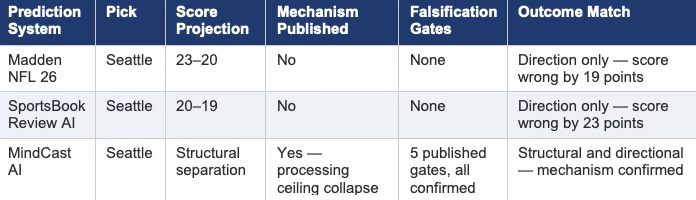

Neither Madden NFL 26 nor SportsBook Review AI published model revision records. Both produced directional picks without structural conditions, and both picked Seattle for the Super Bowl. MindCast also picked Seattle, and it published the mechanism, the gate logic, the falsification conditions, and the revised thesis in advance. The distinction between directional accuracy and structural accuracy is precisely what separates an analytical instrument from a bare directional pick.

IV. Structural Prediction vs. Directional Accuracy: What the Super Bowl Validated

Super Bowl LX produced one of the most decisive defensive performances in Super Bowl history. Seattle held New England scoreless for 47 minutes and 27 seconds. The final score — Seattle 29, New England 13 — matched MindCast’s multi-regime survivability thesis not merely directionally but structurally: the outcome resolved through the exact mechanism the pre-published thesis specified.

Three AI systems in this comparison published pre-game predictions. All three picked Seattle; only one published the mechanism. Madden NFL 26 projected a competitive 23-20 game, a thriller decided by late possessions. SportsBook Review AI projected 20-19, similarly tight. MindCast projected structural control resolving through progressive separation, driven by processing ceiling collapse in New England’s decision chain under Macdonald’s disguise system. Reality produced structural control resolving through progressive separation. The game was not close. It was structurally determined.

The five pre-published structural gates each specified a condition that would either confirm or falsify the multi-regime survivability thesis in real time. All five confirmed. None triggered the falsification contract MindCast published before kickoff. The full validation record, including the original gate logic and the outcome mapping, appears at www.mindcast-ai.com/p/mindcast-superbowllx-validation.
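Structurally, the gate logic is a pre-registered checklist: each gate is a condition on observable outcomes, fixed before kickoff, and the thesis survives only if the conditions hold. A minimal Python sketch follows. It assumes the falsification contract fires if any single gate fails (the text says no gate triggered it but does not spell out the aggregation rule). The gate names, metrics, thresholds, and observed values are invented placeholders, not MindCast's published gates or actual Super Bowl LX statistics.

```python
from dataclasses import dataclass
from typing import Callable, Mapping

Observation = Mapping[str, float]


@dataclass(frozen=True)
class Gate:
    """A pre-registered condition the thesis requires to hold."""

    name: str
    condition: Callable[[Observation], bool]


def evaluate_gates(gates: list[Gate], observed: Observation) -> tuple[dict[str, bool], bool]:
    """Check every gate against observed data.

    Returns per-gate results and whether the thesis is falsified.
    Assumption: the falsification contract fires if any single gate fails.
    """
    results = {gate.name: gate.condition(observed) for gate in gates}
    falsified = not all(results.values())
    return results, falsified


# Hypothetical gates and metrics, invented for illustration only.
gates = [
    Gate("gate_defensive_pressure", lambda o: o["pressure_rate"] >= 0.30 and o["blitz_rate"] <= 0.25),
    Gate("gate_second_half_separation", lambda o: o["second_half_margin"] > 0),
]
observed = {"pressure_rate": 0.35, "blitz_rate": 0.20, "second_half_margin": 7.0}
results, falsified = evaluate_gates(gates, observed)
print(results, "falsified" if falsified else "survived")
```

Keeping each gate as a named predicate makes the contract auditable: the conditions can be published verbatim before the event, and the same definitions evaluate them afterward with no room for reinterpretation.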

The validation matters for one reason that extends beyond football: it demonstrates that the Cognitive Digital Twin architecture can model decision systems under maximum adversarial pressure and produce structural predictions that survive real-world contact. A football game is a three-hour institutional stress test. Coaching staffs make hundreds of high-stakes decisions under time pressure, incomplete information, and adversarial adaptation. The game compresses into a single afternoon the same decision-system dynamics that unfold over months in regulatory enforcement, litigation, and legislative strategy. Proving the architecture in that environment is the fastest available evidence that it will hold in institutional domains.

V. Why Sports Work: The Compression Argument

Institutional systems — regulatory agencies, courts, legislatures, corporations — produce prediction feedback over timescales that make rapid validation impossible. A regulatory enforcement action may take three years from signal to outcome. A major antitrust case may take a decade from complaint to remedy. A legislative trajectory may require two or three sessions before structural dynamics resolve. No simulation system can build a credible validation record in those domains quickly enough to justify adoption before the record exists.

Sports solve the compression problem. An NFL season produces seventeen regular-season games per team, three playoff rounds, and a championship game — all within five months, all with publicly observable outcomes, all with pre-game prediction windows that allow timestamped publication before outcomes materialize. The strategic complexity is genuine: NFL coaching staffs operate as sophisticated decision systems with documented behavioral tendencies, adaptive schemes, and strategic interaction under adversarial pressure. The institutional analog is direct.

The compression argument does not claim that football is equivalent to institutional analysis. It claims that the decision-system architecture underlying both is structurally similar, and that proving the architecture in a fast-feedback environment produces credible evidence about its performance in slow-feedback environments. Deep Blue’s chess victory preceded broader confidence in computational search and optimization. AlphaGo’s Go victories preceded wider deployment of reinforcement learning. MindCast’s NFL arc is sequenced to precede institutional deployment in the same way, and that sequencing is deliberate, not coincidental.

One additional feature of NFL validation is worth noting. The public nature of the proof environment removes the retrofit problem entirely. Every MindCast prediction was published before the game. Every gate condition was specified before kickoff. Every outcome was publicly observable. No adjustment after the fact was possible without public detection. The validation record is not self-reported — it is externally verifiable by anyone who reads the original publications and the game logs. That level of accountability is not achievable in institutional domains where outcomes take years. Sports compress the accountability cycle to hours.
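Public, timestamped publication is what rules out retrofitting here. A standard cryptographic way to make the same guarantee checkable without trusting any platform's timestamps is a commit-reveal scheme: publish a hash of the prediction before the event, then publish the prediction itself afterward so anyone can recompute the hash. The sketch below assumes SHA-256 over a JSON serialization with a secret salt. The document does not say MindCast uses hashing, and the record fields in the example are hypothetical.

```python
import hashlib
import json


def commit(prediction: dict, salt: str) -> str:
    """Return a SHA-256 hex digest to publish before the event.

    sort_keys makes the serialization independent of dict insertion order;
    the secret salt prevents guessing a short prediction by brute force.
    """
    payload = json.dumps({"prediction": prediction, "salt": salt}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify(prediction: dict, salt: str, published_digest: str) -> bool:
    """After the event, anyone holding the revealed record can recheck the digest."""
    return commit(prediction, salt) == published_digest


# Hypothetical prediction record; field names and values are illustrative only.
SALT = "kept-secret-until-reveal"
prediction = {"pick": "SEA", "mechanism": "progressive separation"}
digest = commit(prediction, SALT)        # publish this before kickoff
print(verify(prediction, SALT, digest))  # True: the revealed record matches
edited = dict(prediction, pick="NE")     # any after-the-fact edit...
print(verify(edited, SALT, digest))      # False: ...no longer matches the commitment
```

Because any change to the revealed record changes the digest, a commitment published before kickoff makes after-the-fact adjustment detectable by anyone, which is the accountability property the paragraph describes.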

VI. The Infrastructure Adoption Pattern: Three Industries

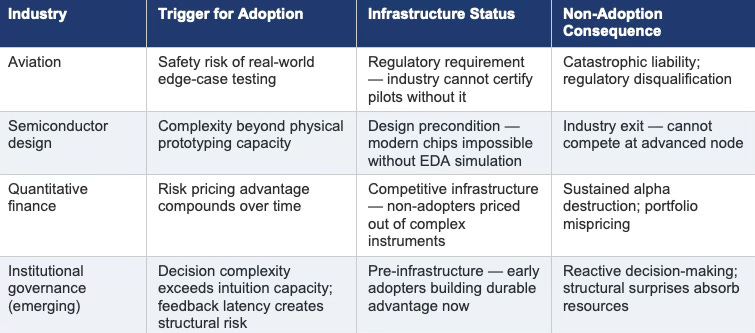

Simulation becomes infrastructure when three conditions converge: the domain involves decisions too complex or high-stakes for intuition alone, the simulation system achieves sufficient reliability to outperform alternative approaches, and early adopters gain advantages large enough to make non-adoption a competitive liability. Aviation, semiconductor design, and quantitative finance each reached that convergence point. Each industry now operates in a condition where simulation is not a tool but a precondition.

Commercial aviation adopted simulation because real-world testing of edge cases carried catastrophic risk. No airline waits for an actual engine failure to train pilots on emergency response. Simulation makes the test safe. Boeing and Airbus now run thousands of simulated flight hours per aircraft design before physical prototypes exist. The FAA mandates simulation training for specific emergency scenarios precisely because those scenarios are too rare or dangerous to encounter in practice but too consequential to leave to chance. Simulation moved from training aid to regulatory requirement — from optional to mandatory — because the cost of non-adoption, measured in lives and liability, exceeded the cost of adoption.

Semiconductor design reached the same convergence through complexity rather than safety. Modern chips contain billions of transistors operating at nanometer scale. Physical prototyping at that scale costs hundreds of millions of dollars per iteration and takes months per cycle. Electronic design automation tools from Cadence and Synopsys simulate transistor behavior, signal timing, power consumption, and fabrication constraints computationally before a single physical chip is produced. NVIDIA’s GPU architectures and Intel’s processor designs depend entirely on simulation to manage complexity that human intuition cannot navigate. The industry does not use simulation to improve chip design. Simulation is how chip design is possible at all.

Quantitative finance followed the infrastructure trajectory through risk rather than safety or complexity alone. Investment firms that adopted Monte Carlo simulation and computational risk modeling in the 1990s could price instruments, stress-test portfolios, and model market microstructure in ways that non-adopters could not. Renaissance Technologies and Two Sigma built competitive advantages measured in sustained alpha that persisted for decades. The advantage compounded because simulation-based firms made systematically better decisions under uncertainty than intuition-based competitors. Non-adoption became a structural disadvantage that worsened with each market cycle.

The pattern across all three industries is consistent enough to function as a prediction. Simulation adoption begins among early movers who perceive the advantage before the broader market recognizes it. Competitive pressure from early movers forces adoption among laggards. Regulatory frameworks eventually codify simulation requirements once the safety or systemic risk implications become visible. The window between early adoption and regulatory mandate is the period during which early movers extract the largest durable advantage.

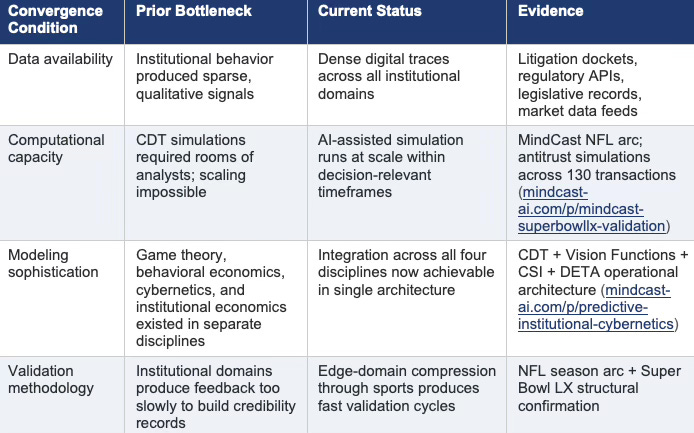

Institutional governance currently sits at the beginning of that window. Governments, regulatory agencies, law firms, and corporations make high-stakes decisions under conditions of complexity that are growing faster than the analytical tools available to manage them. The bottlenecks that prevented institutional simulation from reaching infrastructure status in prior decades — data scarcity, computational cost, modeling sophistication — have resolved. The convergence conditions are present. The adoption curve is beginning.

VII. The Four-Stage Adoption Curve

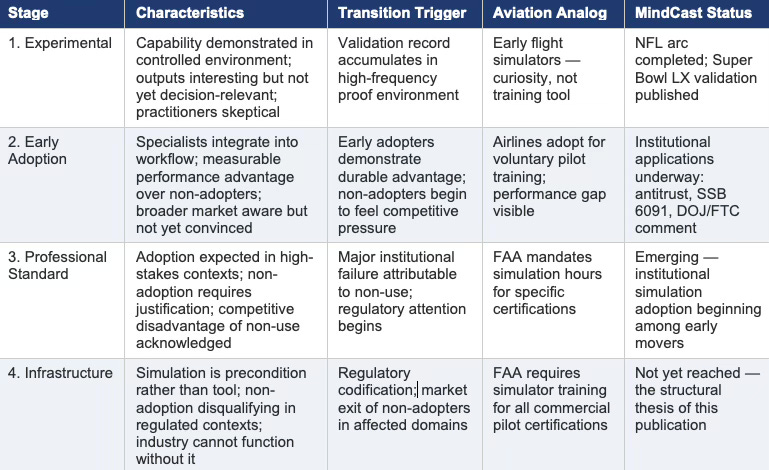

Simulation technologies follow a recognizable four-stage adoption curve from experimental capability to institutional infrastructure. Each stage has observable markers. Each transition has identifiable triggers. Mapping where MindCast sits on that curve — and what the transition to the next stage requires — produces a structural prediction about the institutional simulation market that the historical record supports.

MindCast AI has completed Stage 1 and entered Stage 2. The NFL arc — particularly the NFC Championship model revision and the Super Bowl structural confirmation — constitutes a public validation record that satisfies the transition trigger from experimental to early adoption. The institutional applications across antitrust analysis, legislative modeling, and regulatory comment demonstrate the architecture operating in the target domains. The transition to Stage 3 requires demonstrating that early adopters achieve durable advantages in institutional contexts that non-adopters cannot replicate through conventional analytical tools.

That demonstration is underway. The Compass antitrust analysis predicted the strategic implications of the Compass-Redfin-Rocket partnership before the February 26 announcement. (Compass antitrust analysis) The SSB 6091 legislative analysis modeled the passage trajectory before the Senate’s 49-0 vote. (SSB 6091 analysis) The SDNY preliminary injunction denial validated the structural prediction before the partnership announcement added a second confirmation layer. Each represents a documented instance where CDT simulation produced actionable foresight that retrospective analysis could not have provided in time.

VIII. What Institutional Infrastructure Looks Like

Aviation, semiconductor design, and quantitative finance each reached infrastructure status through different paths but arrived at the same structural condition: simulation became a precondition for participation rather than an advantage for leaders. The question for institutional governance is not whether simulation will reach that status — the convergence conditions are present and the historical pattern is consistent — but what the infrastructure layer looks like when it arrives.

Institutional simulation infrastructure will not look like a single platform. Aviation infrastructure involves multiple simulation vendors, regulatory certification frameworks, operator training programs, and aircraft-specific simulation environments. Semiconductor infrastructure involves EDA tool suites, process design kits, verification methodologies, and fabrication-specific simulation layers. Financial infrastructure involves risk modeling platforms, pricing libraries, regulatory stress-test frameworks, and proprietary alpha-generation systems. Each industry’s simulation infrastructure is layered, specialized, and deeply integrated into professional practice.

Institutional simulation infrastructure will similarly be layered. Litigation strategy will require CDT simulations of opposing counsel, judicial behavioral tendencies, and jury decision dynamics. Regulatory strategy will require CDT simulations of agency enforcement priorities, staff-level decision logic, and political constraint geometry. Legislative strategy will require CDT simulations of coalition formation, committee dynamics, and amendment sequencing. Market strategy will require CDT simulations of competitor adaptive responses, regulatory intervention thresholds, and consumer behavioral cascades.

MindCast’s current publication corpus spans all four layers. The antitrust work addresses market strategy and regulatory strategy simultaneously. (Compass antitrust corpus) The SSB 6091 work addresses legislative strategy directly. (SSB 6091 legislative analysis) The DOJ/FTC public comment addresses regulatory strategy at the federal level. (DOJ/FTC public comment) The sports validation demonstrates the decision-system modeling architecture that underlies all four. (Super Bowl LX validation) The corpus is not a collection of unrelated analyses; it is a domain-spanning demonstration that a single architectural approach generates reliable foresight across the institutional domains that matter. (Live-Fire Simulators)

IX. The Structural Case: Why the Timing Is Now

Three convergence conditions determine when simulation transitions from early adoption to infrastructure. Data availability must reach the threshold where behavioral signals are dense enough to calibrate decision-system models. Computational capacity must reach the threshold where large-scale CDT simulations run within decision-relevant timeframes. Modeling sophistication must reach the threshold where the analytical architecture can integrate feedback dynamics, strategic interaction, and behavioral economics in a single coherent system. Prior decades failed to achieve all three simultaneously. The current environment achieves all three.

Institutional behavior now produces massive digital traces. Litigation filings, regulatory dockets, legislative records, corporate disclosures, and market transaction data generate behavioral signals at a scale that prior decades could not access. Signal availability has reached the calibration threshold. Computational infrastructure has scaled past the bottleneck that prevented RAND from realizing its political-military simulation vision in the 1960s and that prevented the Macy group from implementing the unified cybernetic science it theorized in the 1940s. Modern infrastructure runs, in minutes, CDT simulations that in prior decades would have required rooms of analysts working for months.

Modeling sophistication has converged across the disciplines that institutional simulation requires. Game theory provides the strategic interaction framework. Behavioral economics provides the bounded rationality and institutional inertia modeling that classical game theory assumed away. Cybernetic feedback theory provides the signal-processing and latency analysis that system dynamics captured at the level of aggregate variables but could not model at the level of individual institutional actors. Chicago School law and economics provides the incentive architecture that connects legal rules to behavioral outcomes. Each discipline reached maturity independently. Integration is now possible in ways that prior decades could not achieve.

The window between convergence and codification is the period of maximum advantage for early adopters. Aviation simulation offered that window in the 1970s and 1980s — the airlines that built simulation infrastructure earliest trained better pilots, managed safety liability more effectively, and positioned more favorably for eventual FAA mandate. Quantitative finance offered that window in the 1990s — the firms that built computational risk infrastructure earliest extracted alpha from pricing advantages that persisted for decades. Institutional governance simulation offers that window now.

Early adopters in litigation strategy, regulatory affairs, and market analysis who integrate CDT simulation into decision workflows before the broader market recognizes the approach will build analytical advantages that compound across each decision cycle. The cases they analyze more accurately, the regulatory trajectories they anticipate further in advance, and the legislative dynamics they model before coalitions form produce outcomes that non-adopters cannot replicate through conventional tools operating in the same timeframe.

X. Infrastructure Is the Thesis

Installment I established the runtime architecture of predictive institutional cybernetics. Installment II established the intellectual lineage — from Wiener and the Macy Conferences through Hayek, RAND, and the five research traditions whose partial insights MindCast integrates. Installment III has developed the claim that completes the argument: simulation systems follow a predictable path from edge-domain validation to professional standard to institutional infrastructure, and the conditions for institutional simulation to follow that path have now converged.

The NFL validation arc was not a sports project. It was a compression strategy — a deliberate choice to build the credibility record that institutional domains cannot produce quickly enough in environments where feedback takes years. The season-long sequence, the NFC Championship model revision, and the Super Bowl structural confirmation produced a publicly observable, externally verifiable, timestamped record that answers the credibility question every new simulation system faces: does the architecture perform under real adversarial pressure, or only under conditions it was designed to handle?

The infrastructure adoption pattern is not a prediction about MindCast specifically. It is a structural observation about what happens when simulation achieves sufficient reliability in a domain characterized by high-stakes decisions under complexity. Aviation did not choose to make simulation mandatory — the safety logic compelled it. Semiconductor design did not choose simulation as a preference — the complexity made it necessary. Quantitative finance did not adopt simulation as a strategy — the competitive pressure from early adopters made non-adoption a liability. Institutional governance will follow the same logic, driven by the same forces, on a timeline that the convergence conditions now make plausible within years rather than decades.

The claim is not that institutional simulation is inevitable in any specific form. The claim is that the decision-system modeling architecture MindCast has developed — Cognitive Digital Twins, Causal Signal Integrity, Vision Function routing, Feedback Latency Index, DETA equilibrium detection — produces structural foresight in institutional domains that conventional analytical tools operating at event level cannot provide. (Runtime Causation Arbitration Directive) The Super Bowl confirmed that claim in the cleanest available proof environment. The antitrust, legislative, and regulatory publications demonstrate the same claim operating in the target domains. The infrastructure case follows from both.

Continued publication, validation, and cross-domain application aim to demonstrate that institutional foresight need not wait for outcomes: the architecture described across this series makes it available before outcomes materialize. That is the product. That is the infrastructure case. That is what predictive institutional cybernetics, at maturity, looks like.

References

External Sources

Murray Campbell et al., Deep Blue (Artificial Intelligence, 2002)

David Silver et al., Mastering the Game of Go with Deep Neural Networks and Tree Search (Nature, 2016)

Noam Brown and Tuomas Sandholm, Superhuman AI for Multiplayer Poker (Pluribus) (Science, 2019)

Donella Meadows et al., The Limits to Growth (Universe Books, 1972)

Friedrich Hayek, The Use of Knowledge in Society (American Economic Review, 1945)

Norbert Wiener, Cybernetics: Or Control and Communication in the Animal and the Machine (Technology Press and John Wiley & Sons, 1948)

MindCast AI Publications

Installment I — Predictive Institutional Cybernetics

Installment II — The Cybernetic Foundations of Predictive Institutional Intelligence

Super Bowl LX: AI Simulation vs. Reality

Super Bowl LX Validation Record

Runtime Causation Arbitration Directive

Constraint Geometry and Institutional Field Dynamics

Runtime Geometry: A Framework for Predictive Institutional Economics

MindCast Game Theory Frameworks

Washington SSB 6091 Legislative Analysis

DOJ/FTC Public Comment on Competitor Collaboration Guidance

Live-Fire Game Theory Simulators: Runtime Predictive Infrastructure