MCAI Economics Vision: The Apple AI Challenger Framework

MindCast Consumer AI Device Series, Installment II: Google, Samsung, and the Intelligence Layer

MindCast Consumer AI Device Series publications: Installment I — The Intelligence Gap: Apple’s AI Strategy and the Commoditization Bet | Installment II — The Apple AI Challenger Framework: Google, Samsung, and the Intelligence Layer | Installment III — The Consumer AI Device Intelligence Layer: Value Capture Under Interface Drift | Installment IV — How Cybernetic Feedback Latency, Loop Architecture, and Ashby’s Viability Condition Resolve Consumer AI Device Competition

Executive Summary

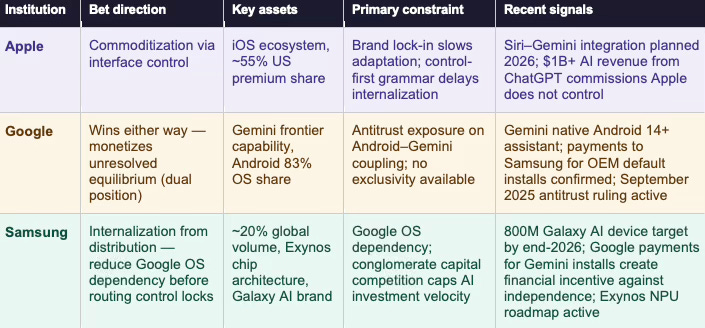

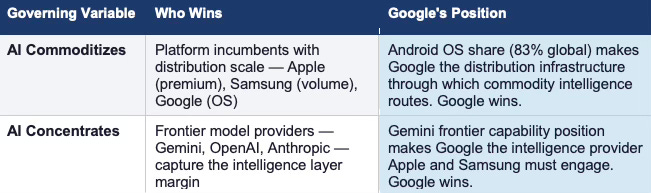

One institution in the AI platform market does not need the governing variable to resolve in its favor. Google wins under commoditization through Android distribution dominance. Google wins under concentration through Gemini capability dominance. No other institution in this analysis holds both positions simultaneously — and that structural asymmetry is the most consequential fact in the current competitive landscape. Installment II maps how that asymmetry applies pressure to Apple’s drift-stable equilibrium from both flanks, while establishing Samsung’s internalization path as the only genuine commoditization force in the current system.

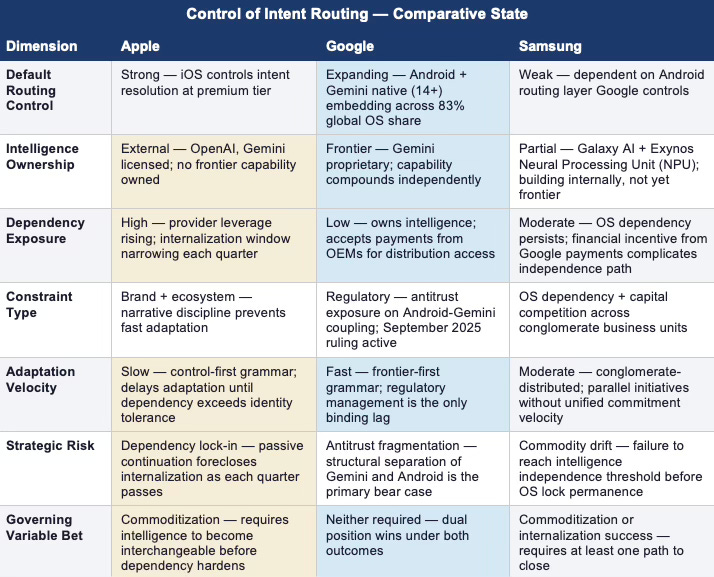

Google and Samsung are the only two institutions positioned to apply decisive pressure on Apple’s interface dominance — and they are pursuing structurally opposite strategies. Google owns the intelligence layer and is building upward toward the interface. Samsung owns global device distribution and is building inward toward intelligence. Apple owns the interface and is betting neither path succeeds before intelligence commoditizes.

The MindCast AI Consumer AI Device Series models the AI platform market as a competitive system governed by a single variable: whether artificial intelligence commoditizes or concentrates. Installment I analyzed Apple — establishing its equilibrium as drift-stable, commoditization-dependent, and behaviorally locked by a control-first grammar that prioritizes narrative over adaptation velocity. Installment II stress-tests that equilibrium from both flanks simultaneously, identifying the two institutional grammars converging on Apple’s interface position from opposite sides of the stack.

Samsung’s position is more constrained and more underestimated. Samsung Research, Galaxy AI, and independent Exynos chip architecture give Samsung a genuine bottom-up internalization path. The constraint is not capability — it is the operating system (OS) dependency that places Google between Samsung and full interface control. Samsung’s Cognitive Digital Twin (CDT) Foresight Simulation classifies the equilibrium as internalization-constrained: real optionality, structurally capped by a competitor that controls the routing layer.

The three-institution frame produces a single governing question: as AI becomes the primary interaction model, which institution controls the default pathway that captures, processes, and answers user intent? Apple holds that pathway now. Google is engineering to intercept it. Samsung is attempting to reduce its dependency on both players simultaneously.

FOR INVESTORS

Google’s dual-position asymmetry is the single most structurally significant fact in the AI platform market. No major analyst model correctly prices an institution that wins under both outcomes of the governing variable. The implication: standard risk-adjusted models for AI market structure systematically underweight Google’s option value. Investors holding concentrated AI platform exposure should model Google’s position as structurally hedged, not directionally exposed.

Samsung’s internalization trajectory carries optionality that hardware-margin framing misses. Exynos chip independence, Samsung Research investment, and the Galaxy AI branded strategy represent a real path to reduced Google dependency at the intelligence layer, one that the market values as original equipment manufacturer (OEM) execution rather than platform strategy.

Apple’s drift-stable equilibrium identified in Installment I is now subject to pressure from both directions simultaneously. The probability weights assigned in Installment I remain valid. What Installment II adds is the mechanism: Google and Samsung are not abstract competitive threats. Each represents a defined behavioral grammar converging on Apple’s interface position from opposite sides of the stack.

FOR CORPORATE STRATEGY

The platform incumbents’ AI dilemma — interface control without intelligence ownership — is not Apple-specific. Every consumer platform that distributes AI without owning it faces the same governing variable. Installment II adds the competitive dimension: the institutions that do own intelligence (Google) or are building toward it (Samsung) are not passive beneficiaries. They are active strategists applying institutional pressure at the interface boundary that platform incumbents control. The strategic implication is not symmetric. Platform incumbents can track competitor strategies. They cannot track them faster than the behavioral grammars of those competitors permit movement. Google’s speed-dominant grammar and Samsung’s distributed-conglomerate grammar each have defined adaptation velocities. Corporate strategists modeling competitive response windows should anchor those windows to the Installed Cognitive Grammar (ICG) of the relevant institution — not to the technological timeline.

Framework Note

Readers of Installment I know the MindCast AI Proprietary Cognitive Digital Twin (MAP CDT) architecture. The summary below serves as a compressed reference for Installment II. The full framework architecture, Vision Function definitions, and behavioral profile methodology appear in the Installment I publication at www.mindcast-ai.com/p/apple-ai-strategy.

MAP CDT — MindCast AI Proprietary CDT Foresight Simulation

MAP CDT is a behavioral economics and game theory simulation engine. It routes raw signals through a structured nine-step process (including signal intake, hypothesis formation, causal inference, signal integrity validation, Vision Function routing, dominance resolution, and recursive foresight simulation) and resolves institutional behavior into equilibrium-classified, falsifiable predictive outputs.

Cognitive Digital Twin (CDT)

MAP CDT models each institutional subject as a Cognitive Digital Twin (CDT): a dynamic behavioral replica encoding the institution’s objective function, constraint stack, adaptation velocity, and feedback sensitivity. The simulation stress-tests each CDT against multi-agent strategic interaction and bounded time horizons to generate forward predictions.
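As a rough illustration of what a CDT encodes, the four attributes named above can be sketched as a small data structure. This is a minimal sketch, not MAP CDT's actual implementation: the class name `CognitiveDigitalTwin`, its field names, and the `summary` helper are hypothetical. The field values are taken from the Google and Samsung behavioral profiles in Sections II and III of this installment. Feedback sensitivity is left empty because the installment does not report a value for it.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CognitiveDigitalTwin:
    """Hypothetical container for the four CDT attributes named in the text."""
    institution: str
    objective_function: str
    constraint_stack: list[str] = field(default_factory=list)
    adaptation_velocity: str = "unspecified"
    # The installment reports no feedback-sensitivity values, so this stays None.
    feedback_sensitivity: Optional[str] = None

    def summary(self) -> str:
        """One-line description built only from the populated fields."""
        constraints = ", ".join(self.constraint_stack) or "none listed"
        return (f"{self.institution}: optimizes for {self.objective_function}; "
                f"constraints: {constraints}; velocity: {self.adaptation_velocity}")


# Values paraphrased from the behavioral profiles in Sections II and III.
google = CognitiveDigitalTwin(
    institution="Google",
    objective_function="control of user intent routing",
    constraint_stack=["antitrust", "monetization tension",
                      "Android partner dependencies"],
    adaptation_velocity="high",
)
samsung = CognitiveDigitalTwin(
    institution="Samsung",
    objective_function="device volume, hardware margin, and conglomerate stability",
    constraint_stack=["OS dependency", "conglomerate capital competition",
                      "brand constraint"],
    adaptation_velocity="moderate",
)

for twin in (google, samsung):
    print(twin.summary())
```

Keeping the constraint stack as an ordered list mirrors how the profiles present constraints in sequence. A real simulation would presumably attach quantitative weights, which this sketch does not attempt.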

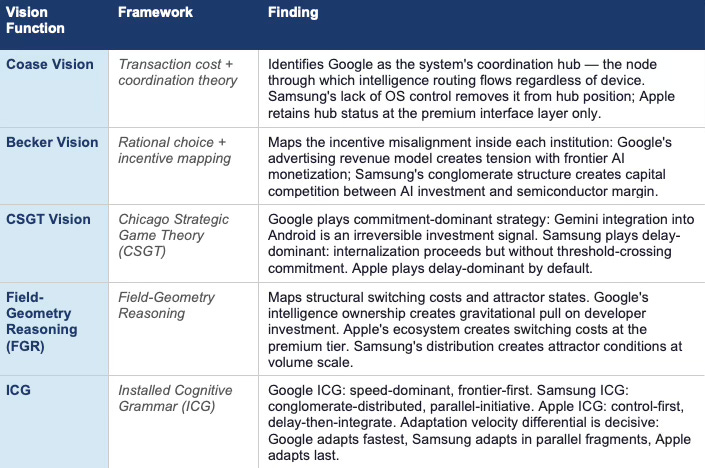

Vision Functions — Installment II Reference

I. The Competitive Position — Three Institutions, Three Bets

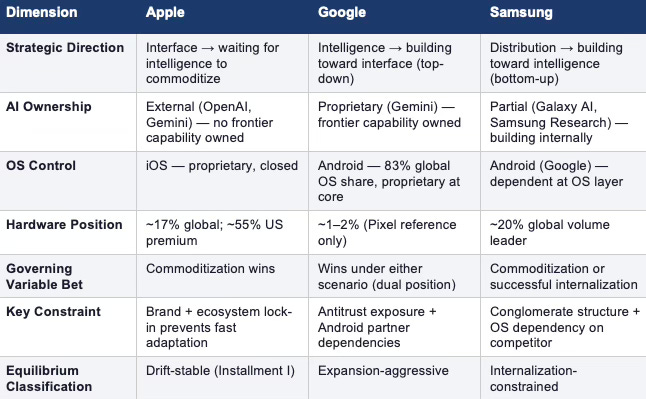

Apple, Google, and Samsung are running structurally distinct bets on the same governing variable — whether artificial intelligence commoditizes or concentrates — and the outcome of that variable determines which institutional grammar wins.

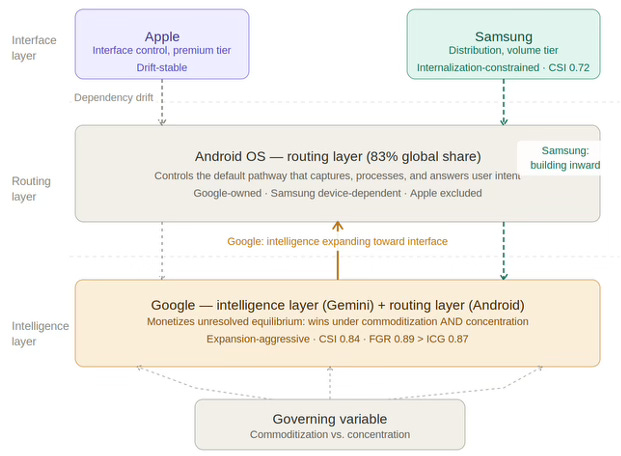

Figure 1. Consumer AI Device Series — System Dynamics. Solid arrow: commitment-dominant expansion. Dashed arrows: internalization path (teal) and dependency drift (gray). Governing variable resolves at the routing layer.

Apple’s bet: commoditization arrives before interface displacement. Apple’s grammar delays adaptation until dependency pressure exceeds identity tolerance. The bet is rational given Apple’s constraint stack: brand, margin, and ecosystem constraints prevent fast internalization, so Apple needs the governing variable to resolve in its favor before those constraints become liabilities.

Google’s bet: the governing variable does not need to resolve in a specific direction. Google holds positions on both sides — and monetizes the ambiguity between them. Under commoditization, Android’s 83% global OS share makes Google the distribution layer through which open-weight intelligence routes. Under concentration, Gemini’s frontier capability position makes Google the intelligence provider that platform incumbents depend on.

Samsung’s bet: distribution scale plus internalization velocity can reduce OS-layer dependency before Google’s routing control becomes structurally permanent. Samsung is attempting to avoid the trap Apple has already entered — interface control without intelligence ownership — by building toward the intelligence layer from the distribution foundation it controls. The constraint is that Samsung’s OS layer is Google’s, which means every step toward intelligence internalization occurs on infrastructure a competitor controls.

The three-institution system is not static. Each institution’s strategic choices alter the incentive environment for the others. Google’s Gemini integration into Android increases the cost of Samsung’s OS independence path. Samsung’s internalization investment increases commoditization pressure on the intelligence layer, which is the scenario Apple needs. Apple’s passive continuation reduces pressure on frontier providers to commoditize, a point established in Installment I and amplified by the three-institution frame.

Comparative Bets — Three Institutions, One Governing Variable

INVESTORS AND AI STRATEGY PROFESSIONALS · The three-bet frame resolves a structural confusion in standard AI platform analysis: analysts frequently model Google and Samsung as Apple competitors when the more precise framing is that each institution is running a different theory of value capture under the same governing variable. The investment implication differs by scenario. Under commoditization: Samsung’s distribution scale and Apple’s ecosystem lock-in both retain value; Google’s Android dominance retains value. Under concentration: Google’s Gemini position retains value; Apple’s and Samsung’s positions compress at the intelligence boundary. Only one institution — Google — holds a position that retains value under both resolutions.
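The scenario logic in the paragraph above reduces to a small lookup: which institutions retain value under each resolution of the governing variable, and which retain value under both. The sketch below is illustrative only. The set membership comes directly from the paragraph, while the names `RETAINS_VALUE`, `hedged_institutions`, and `exposed_institutions` are hypothetical.

```python
# Value retention by scenario, as stated in the three-bet paragraph.
RETAINS_VALUE = {
    "commoditization": {"Apple", "Google", "Samsung"},
    "concentration": {"Google"},
}


def hedged_institutions(retention):
    """Institutions that retain value under every scenario."""
    return set.intersection(*retention.values())


def exposed_institutions(retention):
    """Institutions that retain value under some scenarios but not all."""
    everyone = set.union(*retention.values())
    return everyone - hedged_institutions(retention)


print(sorted(hedged_institutions(RETAINS_VALUE)))   # ['Google']
print(sorted(exposed_institutions(RETAINS_VALUE)))  # ['Apple', 'Samsung']
```

The intersection is the structural hedge the text describes. Apple and Samsung appear only in the commoditization set, so their positions depend on how the variable resolves.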


II. Google’s Strategy — Intelligence First, Interface Second

GOOGLE — Behavioral Profile (MAP CDT Layer)

MAP CDT models Google as an intelligence-dominant, distribution-leveraged, antitrust-constrained institutional CDT. The behavioral profile below is not background. Google’s objective function, grammar, and constraint stack determine which equilibrium paths the institution will pursue, at what velocity, and under what threshold conditions.

Governing Objective Function

Google optimizes for control of user intent routing across its ecosystem — not hardware margin, not device share, not physical distribution for its own sake. Pixel exists as a reference implementation and capability proof, not a volume business. Google’s objective is to ensure that user queries — regardless of device, platform, or interface — resolve through Google-controlled intelligence. Advertising revenue defense is the financial constraint that gives this objective function its urgency: the moment user intent routes through a non-Google intelligence layer, Google’s advertising model faces structural pressure.

Constraint Stack

Antitrust Constraint. Google’s ability to leverage Android dominance for Gemini distribution is under active regulatory scrutiny globally. Every step Google takes toward coupling intelligence and distribution increases regulatory exposure. The constraint limits how aggressively Google can mandate Gemini as the default assistant layer across the Android ecosystem without triggering further U.S. Department of Justice (DOJ) or European Union (EU) action.

Monetization Tension. Google’s advertising model and frontier AI capability exist in structural tension. Advertising revenue depends on high-volume, low-latency query resolution. Frontier AI capability is expensive to serve and generates margin outside the traditional advertising auction model. Google must monetize intelligence without cannibalizing the advertising base that funds frontier investment.

Android Partner Dependencies. Android’s open-source architecture creates dependencies on OEM partners — including Samsung — that limit how aggressively Google can restructure the OS routing layer without triggering partner defection or accelerating OEM-level internalization efforts.

Behavioral Signature

Google exhibits four consistent behavioral patterns across technology transitions: frontier investment before market demand establishes the capability; ecosystem distribution to capture the user base fastest; monetization via advertising or platform leverage; and regulatory management as a trailing constraint rather than a leading one. Each pattern applies directly to the Gemini deployment strategy now underway.

Installed Cognitive Grammar — Speed-Dominant, Frontier-First

Google processes new technology domains through a sequence opposite to Apple’s: invest at the frontier first, distribute through ecosystem second, monetize through advertising and platform fees third, manage regulatory exposure fourth. The grammar produces fast adaptation and high capability accumulation but creates antitrust exposure at each step — because each domain Google enters through distribution leverage becomes a potential regulatory target.

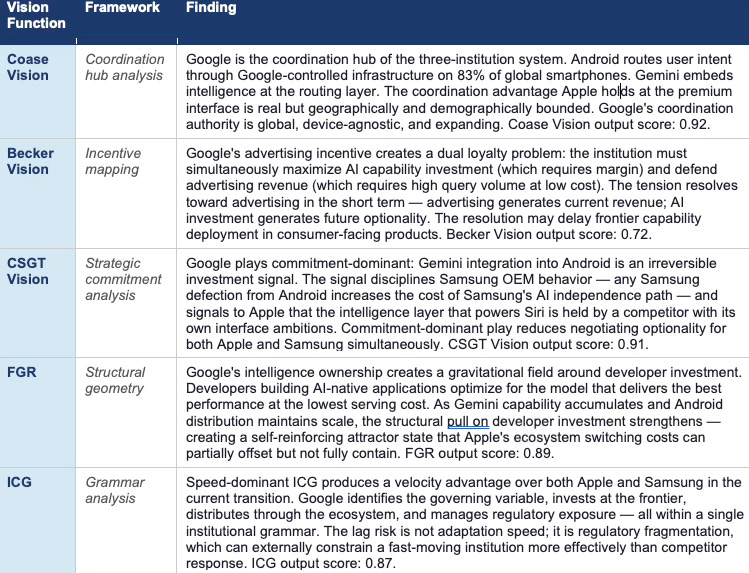

Adaptation Velocity: High. Constraint Rigidity: Moderate (antitrust). Identity Preservation Strength: Low — Google reframes institutional identity around capability, not brand. External Dependency Tolerance: Very low — Google’s grammar tolerates no dependency on external intelligence. The CDT Foresight Simulation resolves Google’s behavioral profile at a Causal Signal Integrity (CSI) score of 0.84 (High) — driven by high signal alignment (Assumption-to-Logic Integrity, ALI: 0.90), strong causal model fit (Causal Model Fit, CMF: 0.88), and reliable input signal integrity (Raw Input Stability, RIS: 0.85). Vision Function dominance resolution: FGR (0.89) over ICG (0.87) — structural position drives outcome over grammar velocity.

Failure Mode Sequence

Primary failure mode — Regulatory Fragmentation: Antitrust action forces structural separation of Android distribution and Gemini intelligence. The failure mode does not require prohibition — it requires enough regulatory uncertainty to slow Gemini’s mandatory integration timeline and allow Samsung or other OEMs to accelerate alternative intelligence strategies.

Secondary failure mode — Monetization Incoherence: Frontier AI capability and advertising revenue enter structural conflict. Google cannot simultaneously maximize AI capability investment and defend advertising margin without a monetization architecture that separates the two. Delay in resolving this tension produces capability investment without proportionate revenue capture.

Forward Behavioral Prediction

Google will deepen Gemini integration into the Android default layer within 12 months, manage antitrust exposure through voluntary interoperability commitments that preserve functional coupling, accelerate Pixel as a capability reference without committing to volume targets, and pursue enterprise AI monetization as a margin-accretive alternative to pure advertising revenue. The grammar predicts this sequence with high confidence — each step is consistent with speed-dominant, frontier-first processing.

CDT COMPRESSION — GOOGLE · Google behaves as an intelligence-dominant system that deploys capability through distribution infrastructure and monetizes unresolved equilibrium as a structural asset. Advantage persists regardless of how the governing variable resolves.

Google — Vision Function Outputs

Google — CDT Foresight Simulation: Terminal Outcome Probability Weights

INVESTORS · Google’s Dual Dominance base case at 55% reflects a structural hedge that standard AI platform models do not capture. Under commoditization, Android distribution retains value; under concentration, Gemini retains value. The risk is not directional — it is regulatory. The Antitrust Constrained scenario at 30% is not a competitor-driven risk. It is a policy-driven risk, which means it is not priced through competitive dynamics and is therefore systematically underweighted in standard AI sector analysis.

III. Samsung’s Strategy — Distribution First, Intelligence Second

SAMSUNG — Behavioral Profile (MAP CDT Layer)

MAP CDT models Samsung as a distribution-dominant, conglomerate-structured, OS-constrained institutional CDT. Samsung’s strategic position is systematically underestimated by analysis that reads the Android relationship as simple OEM dependency. Samsung Research is a serious capability investment. Galaxy AI is a real branded strategy with on-device execution. Exynos gives Samsung independent chip architecture. The constraint is not ambition — it is the OS layer that Google controls.

Governing Objective Function

Samsung optimizes for device volume, hardware margin, and conglomerate stability across semiconductor, display, and consumer electronics divisions. AI investment competes for capital against semiconductor and display business priorities that operate under different margin structures and different strategic timelines. Samsung does not optimize for a unified AI outcome — it optimizes for conglomerate stability, which means AI strategy must deliver returns within the capital envelope that hardware margin supports.

Constraint Stack

OS Dependency Constraint. Samsung does not control the operating system layer. Android mediates routing between Samsung’s hardware and the intelligence layer. Every intelligence internalization initiative Samsung pursues operates on infrastructure that Google controls and that Google has its own strategic incentives to shape.

Conglomerate Capital Competition. AI investment competes for capital against semiconductor (memory, logic, Exynos), display (Organic Light-Emitting Diode (OLED) and Quantum Dot (QD) panels), and consumer electronics divisions. Capital allocation decisions that prioritize AI reduce margin buffer in adjacent businesses. Samsung’s AI investment ceiling is set by conglomerate capital structure, not by strategic ambition alone.

Brand Constraint (Inverse of Apple’s). Samsung’s brand constraint operates differently from Apple’s. Samsung can publicly admit capability gaps, acquire external AI providers, and partner without the narrative coherence requirements that limit Apple. The absence of Apple-style brand constraint is a structural advantage at the AI internalization layer: Samsung can pursue dependency reduction strategies that Apple’s grammar would not permit.

Behavioral Signature

Samsung exhibits three consistent behavioral patterns across technology transitions: parallel-track initiative deployment across business units rather than unified strategic commitment; hardware-first framing of software and capability investments; and acquisition as a secondary internalization path when internal development velocity lags. Each pattern shapes the AI transition now underway.

Installed Cognitive Grammar — Conglomerate-Distributed

Samsung processes new technology domains through parallel business unit initiatives rather than through a unified institutional grammar. Unlike Apple’s control-first sequence or Google’s frontier-first sequence, Samsung’s grammar distributes adaptation across Samsung Research, Galaxy division, Exynos division, and consumer electronics — each moving at its own velocity with its own capital allocation. The grammar produces slower unified adaptation but more strategic flexibility: Samsung can pursue internalization paths that Apple’s brand constraint and Google’s antitrust exposure would each prevent.

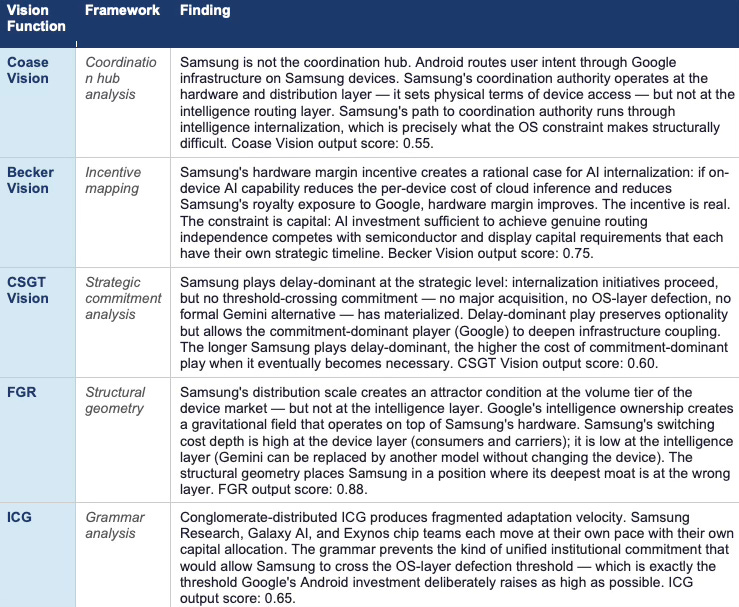

Adaptation Velocity: Moderate, distributed across units. Constraint Rigidity: High at OS layer (Google dependency), low elsewhere. Identity Preservation Strength: Low — Samsung reframes identity around hardware leadership rather than AI narrative. External Dependency Tolerance: Moderate — Samsung tolerates AI dependency at the OS layer while building toward reduction. The CDT Foresight Simulation resolves Samsung’s behavioral profile at a Causal Signal Integrity (CSI) score of 0.72 (Moderate) — driven by moderate signal alignment (ALI: 0.78), constrained causal model fit (CMF: 0.70), and reduced input signal integrity (RIS: 0.68). Vision Function dominance resolution: FGR (0.88) over ICG (0.65) — structural constraint caps outcome over grammar velocity.
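The two dominance resolutions reported in this installment can be compared side by side. The sketch below assumes that "dominance" simply means the higher-scoring Vision Function wins. The installment's phrasing ("FGR over ICG") is consistent with that reading but does not spell out the rule, so treat the function `resolve_dominance` as a hypothetical illustration. The scores are the ones reported in the Google and Samsung profiles.

```python
# Vision Function scores as reported in the Google and Samsung profiles.
SCORES = {
    "Google": {"FGR": 0.89, "ICG": 0.87},
    "Samsung": {"FGR": 0.88, "ICG": 0.65},
}


def resolve_dominance(scores):
    """Return (dominant function, margin over runner-up).

    Assumption: the highest score dominates. The source does not state the rule.
    """
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    (top, top_score), (_, second_score) = ranked[0], ranked[1]
    return top, round(top_score - second_score, 2)


for name, scores in SCORES.items():
    print(name, resolve_dominance(scores))
# Google ('FGR', 0.02)
# Samsung ('FGR', 0.23)
```

Under this reading, FGR dominates in both profiles, but the margins differ sharply: 0.02 for Google and 0.23 for Samsung. That gap is one way to see why the text treats Google's grammar as nearly as decisive as its structural position, while Samsung's outcome is capped by structure.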

Failure Mode Sequence

Primary failure mode — OS Lock Permanence: Google’s Android routing becomes structurally permanent before Samsung’s intelligence internalization reaches the threshold required to credibly threaten defection. Samsung builds Galaxy AI capability that remains subordinate to Google’s routing layer — a position structurally similar to Apple’s dependency on external models, but at a lower abstraction level.

Secondary failure mode — Capital Dilution: Conglomerate capital competition prevents AI investment from reaching the velocity needed to match Google’s Gemini deployment timeline. Samsung builds capability; Google deploys capability faster; the gap narrows slowly enough that Samsung’s internalization path never crosses the threshold that would make OS-layer defection credible.

Forward Behavioral Prediction

Samsung will increase Galaxy AI feature density within 12 months, accelerate Exynos integration with on-device inference capability within 18 months, pursue selective AI provider partnerships as alternatives to full Google dependency within 24 months, and maintain Android as the primary OS layer while building parallel intelligence infrastructure. The grammar predicts this sequence because each step is consistent with conglomerate-distributed processing: parallel initiatives, hardware-first framing, and gradual internalization without threshold-crossing commitment.

CDT COMPRESSION — SAMSUNG · Samsung behaves as a distribution-dominant conglomerate that pursues intelligence internalization through parallel business unit initiatives — structurally capable of reducing Google dependency at the intelligence layer, but constrained by OS architecture that places the routing layer on competitor infrastructure.

Samsung — Vision Function Outputs

Samsung — CDT Foresight Simulation: Terminal Outcome Probability Weights

CORPORATE STRATEGY · Samsung’s Partial Independence scenario at 30% is the most underpriced strategic outcome in this analysis. Standard OEM analysis models Samsung as a Google Android partner with AI feature differentiation. The more structurally precise framing: Samsung is a distribution-scale institution with genuine chip architecture independence, a branded AI strategy, and a research organization with real capability — running a bottom-up internalization path against a competitor whose OS infrastructure sits between Samsung and full routing control. The gap between the standard framing and the structural framing is where mispricing lives.

IV. Why Google’s Dual Position Is Asymmetric

Apple is betting on commoditization. Samsung is betting on internalization velocity. Google does not need to bet. The difference between those three positions is not a matter of degree — it is a structural asymmetry that reshapes the competitive geometry of the entire AI platform market, and understanding it precisely is the central analytical task of this section.

Under commoditization, Android distribution dominates. If frontier AI models become interchangeable infrastructure — if performance gaps compress and distribution becomes the primary bottleneck for value capture — Google’s 83% global OS share makes it the primary infrastructure layer through which commodity intelligence routes. Apple’s distribution advantage remains real at the premium tier. Samsung’s distribution advantage remains real at the volume tier. But Google’s routing authority spans both.

Under concentration, Gemini capability dominates. If a small number of frontier providers retain persistent capability advantages — if performance gaps remain meaningful and developers gravitate toward the best models — Google’s Gemini position makes it the intelligence provider that every platform incumbent must engage. Apple already licensed Gemini for Siri. The licensing relationship gives Google intelligence-layer leverage over Apple’s interface — which is precisely the competitive geometry Apple’s drift-stable equilibrium is designed to avoid acknowledging.

The asymmetry operates at the level of strategic optionality, not just terminal outcomes. Apple must choose among three paths: internalization, managed dependency, or passive continuation. Samsung must choose among OS-layer defection, partial internalization, or constrained dependency. Google faces no equivalent forced choice, and it commits aggressively to Gemini investment precisely because no scenario penalizes that commitment.

The Apple-Gemini licensing relationship is the clearest observable signal of what the dual-position asymmetry produces in practice. Apple licensed Gemini for Siri from a competitor that simultaneously controls the operating system running on 83% of competing devices and is actively building toward the interface layer Apple controls. Standard partnership framing misses the structure: Apple’s Gemini licensing is not a technology partnership. It is a negotiated surrender of intelligence-layer independence to the one institution in the market that has a strategic incentive to use that position.

LIVE MARKET INTELLIGENCE — DUAL-POSITION ASYMMETRY CONFIRMED

Bloomberg reported that Google paid Samsung substantial sums for Gemini installs on Galaxy devices. The payment structure confirms the dual-position asymmetry at the operational level: Google is simultaneously embedding Gemini at the Android OS layer (platform-level coupling) and purchasing Samsung OEM distribution at the device layer (hardware-level coverage). The two channels are not alternatives; they are complements in a dual-channel default placement strategy that reaches users through both the software routing layer and the device purchase decision.

The Google-Samsung payment relationship also illuminates Samsung’s constraint in a way that the static OS dependency framing misses. Samsung is receiving substantial revenue from Google for Gemini distribution on its own devices, which means Samsung’s internalization path runs not just against Google’s technical OS infrastructure but against a direct financial incentive to distribute Google’s intelligence product. The 800 million Galaxy AI device target and the Google payment relationship are not in tension; they are the same constraint expressed at different layers of the stack.

AI STRATEGY PROFESSIONALS · The Gemini-Siri relationship is the cleanest example of CDT Foresight Simulation applied to real market structure. Apple’s behavioral grammar predicts managed dependency as the second-best response when internalization is not credibly available. Google’s grammar predicts acceptance of partnership terms that deepen Apple’s dependency while Google continues building toward Apple’s interface layer. Both grammars are executing simultaneously on a single contractual relationship. The relationship is not stable — it is a temporary equilibrium that each party is attempting to use as a platform for moving to a better position.

V. Why Samsung Cannot Simply Replicate Apple — Or Google

Samsung occupies the most structurally complex position in the three-institution system. Samsung has more distribution scale than Apple globally. Samsung has more independence from the intelligence layer than Apple has. Samsung has chip architecture that Apple-level hardware players would require years to replicate. And yet Samsung does not set the competitive equilibrium. Understanding why requires precision about what Samsung actually lacks.

Samsung cannot replicate Apple’s premium position. Apple’s ~55% US premium market share and the services revenue density that flows from it — app spending, subscription commissions, AI revenue — reflect lock-in that Samsung’s volume position cannot access. Samsung competes at price points where per-device services revenue is a fraction of Apple’s. The behavioral grammar Samsung would need to capture premium-tier lock-in is Apple’s brand-coherent, control-first grammar — which is structurally incompatible with Samsung’s conglomerate-distributed processing.

Samsung cannot replicate Google’s intelligence-layer position. Gemini is the product of sustained frontier model investment compounding over years. Samsung Research is real capability investment, but the gap between Samsung’s current intelligence capability and Gemini’s frontier position is not closeable through organic development alone within the relevant time horizon. Acquisition is the alternative path — but acquiring a frontier model provider at current valuations represents a capital commitment that competes directly with Samsung’s semiconductor and display business requirements.

What Samsung can do — and what the Partial Independence scenario at 30% captures — is reach a threshold of credible intelligence independence that changes Google’s incentive structure for Android partnership terms. Samsung does not need to fully internalize intelligence. Samsung needs to build enough intelligence capability that the threat of OS-layer defection becomes credible. A credible defection threat restructures the Android partnership negotiation — which is the strategic goal of the internalization path, not full independence itself.

The Samsung strategic problem is not capability. Samsung has enough capability to pursue every path that matters. The problem is commitment velocity. Conglomerate-distributed grammar produces parallel initiatives that advance without threshold-crossing commitment. Google’s Android investment deliberately raises the threshold for credible defection as high as possible. Samsung’s grammar produces movement toward the threshold; Google’s grammar raises the threshold simultaneously.
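The moving-threshold dynamic described above can be made concrete with a toy model: capability advances at one rate while the defection threshold rises at another, and the crossing point depends on the difference between the two. Everything in the sketch below is hypothetical, including the function name `months_to_credible_defection`, the unitless capability scale, and the rates. None of the numbers come from the series; they only illustrate the mechanism.

```python
def months_to_credible_defection(capability, threshold,
                                 capability_rate, threshold_rate,
                                 horizon=240):
    """Month at which capability first meets the threshold, or None.

    All quantities are in arbitrary, hypothetical units per month.
    """
    for month in range(horizon + 1):
        if capability >= threshold:
            return month
        capability += capability_rate   # Samsung moves toward the threshold
        threshold += threshold_rate     # Google raises the threshold
    return None


# Static threshold: the gap of 60 closes at 2 units per month.
print(months_to_credible_defection(40, 100, 2.0, 0.0))  # 30
# Rising threshold: the same gap closes at only 0.5 units per month.
print(months_to_credible_defection(40, 100, 2.0, 1.5))  # 120
# Threshold rises as fast as capability: no crossing within the horizon.
print(months_to_credible_defection(40, 100, 2.0, 2.0))  # None
```

The point of the toy model is that the crossing time scales with the gap divided by the net rate, so even steady capability growth yields no credible defection threat if the threshold rises at a matching pace.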

CORPORATE STRATEGY · Samsung’s strategic position is the most instructive case in this analysis for platform incumbents navigating the same governing variable. Samsung has real assets — distribution scale, chip independence, branded AI strategy — but its institutional grammar prevents the kind of unified commitment that would translate those assets into a decisive strategic move. The lesson for corporate strategists is not about Samsung specifically. The lesson is about how conglomerate-distributed processing handles threshold-crossing decisions: it doesn’t, by default. Crossing a strategic threshold requires grammar override — a forcing event, leadership intervention, or external pressure sufficient to unify parallel business unit initiatives into a single committed position.

VI. The Governing Variable Revisited — How Google and Samsung Change the Equilibrium

Installment I established that Apple’s passive continuation strategy casts a vote for concentration by reducing competitive pressure on frontier providers to commoditize. Installment II extends that logic into the three-institution frame.

Google’s dual-position strategy actively delays resolution of the governing variable. Under commoditization, Google profits from Android distribution. Under concentration, Google profits from Gemini capability. Google has no incentive to accelerate resolution in either direction: the longer the variable remains unresolved, the longer Google retains leverage under both scenarios simultaneously. Platform strategists who model Google as a commoditization pressure or as a concentration pressure each capture only half the picture, because Google is neither. Google monetizes unresolved equilibrium.

Samsung’s internalization strategy applies genuine commoditization pressure — but slowly and indirectly. Every dollar Samsung Research invests in on-device AI capability signals to the market that frontier model capability can be approximated at the device layer. Every Galaxy AI feature that executes on-device without cloud inference reduces the per-query revenue that frontier providers capture. Samsung’s internalization path, if successful, compresses the performance differential between frontier and device-level intelligence — which is the definition of commoditization pressure.

Apple’s passive continuation, as established in Installment I, votes for concentration by removing competitive pressure on providers. The three-institution frame reveals that Apple’s vote is now contextualized by two additional dynamics: Google’s ambiguity strategy, which removes Google as a commoditization pressure agent, and Samsung’s internalization pressure, which is the only genuine commoditization force in the current competitive system. Apple’s bet on commoditization depends structurally on Samsung’s internalization succeeding — and Samsung’s internalization path runs directly into Google’s Android infrastructure.

The governing variable, in the three-institution frame, is not resolving cleanly toward either outcome. Google monetizes the ambiguity. Samsung applies slow commoditization pressure. Apple waits. The equilibrium is more stable than any single-institution analysis suggests — and more dangerous for Apple, because the stability is not Apple’s stability. The equilibrium is Google’s stability, maintained by a dual-position hedge that Apple cannot replicate and Samsung cannot quickly acquire.

VII. Foresight Predictions — Google

Six predictions follow from the Google CDT Foresight Simulation. Each carries a defined time window, a causal mechanism rooted in Google’s behavioral profile, and observable signals that allow the prediction to be confirmed or falsified in real time.
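One way to keep the six predictions auditable is to record each as a small structured entry. The following is a minimal Python sketch, not part of the MindCast methodology: the `Prediction` class, its field names, and the `status` rule are illustrative assumptions. Only the titles, time windows, and the signals marked confirmed or forward are taken from the predictions in this section.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Prediction:
    """One foresight prediction (hypothetical record layout)."""
    number: int
    title: str
    window_months: Tuple[int, int]  # (earliest, latest), as stated in the text
    confirmed_signals: List[str] = field(default_factory=list)
    forward_signals: List[str] = field(default_factory=list)

    def status(self) -> str:
        # Assumed rule: a confirmed signal plus an open forward signal means
        # the prediction is being monitored toward its completion threshold.
        if self.confirmed_signals and self.forward_signals:
            return "active monitoring"
        if self.confirmed_signals:
            return "confirmed"
        return "forecast"


google_predictions = [
    Prediction(
        1, "Gemini Default Assistant Consolidation", (12, 18),
        confirmed_signals=[
            "Gemini integrated as system-level assistant on Android 14+",
            "Bloomberg-reported Google payments to Samsung for Gemini installs",
        ],
        forward_signals=[
            "Gemini displaces Google Assistant across major Android OEMs",
        ],
    ),
    Prediction(2, "Apple Bargaining Position Erosion", (18, 30)),
    Prediction(3, "Android Enterprise AI Capture", (12, 24)),
    Prediction(
        4, "Regulatory Constraint Activation", (18, 36),
        confirmed_signals=[
            "September 2025 U.S. antitrust ruling on Chrome and Android conduct",
        ],
        forward_signals=[
            "Formal complaint or consent decree on Gemini default placement",
            "Voluntary OEM AI assistant choice screen",
        ],
    ),
    Prediction(5, "Pixel as Intelligence Reference Accelerator", (12, 24)),
    Prediction(6, "Open-Weight Hedging Investment", (24, 36)),
]

for p in google_predictions:
    lo, hi = p.window_months
    print(f"Prediction {p.number}: {p.title} [{lo}-{hi} months] -> {p.status()}")
```

Recording the window and signals separately keeps the falsification test visible: a prediction with no confirmed signal at the end of its window has failed on its own terms.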

PREDICTION 1 GEMINI DEFAULT ASSISTANT CONSOLIDATION

Time Window: 12–18 Months

Google’s speed-dominant grammar and commitment-dominant game theory strategy predict that Gemini becomes the default assistant layer across Android within 12 to 18 months, embedded at the OS routing level rather than merely available as an optional download. The mechanism is the same grammar that drove Google Search default placement across browser and device agreements: invest in capability, then lock in distribution through default placement. Regulatory exposure from the DOJ Search defaults case provides the constraint that shapes the form but not the direction of the strategy. Market data confirms active materialization: Gemini is natively integrated into Android 14+ as the system-level assistant, accessible via home button, quick-settings panel, and notification layer, with on-device inference enabling sub-second responses. Bloomberg further reported that Google has paid Samsung substantial sums for Gemini installs across Galaxy devices, confirming a dual-channel default placement strategy: OS-layer embedding at the platform level and purchased OEM distribution at the device level. Both channels converge on the same intent-routing objective, and both developments advance the prediction from forecast to confirmed trajectory; the observable signals below mark the completion thresholds.

Observable Signals:

Confirmed: Gemini is integrated as the system-level assistant on Android 14+ devices via home button and quick-settings — OS-layer embedding, not optional download, with on-device sub-second inference already deployed.

Confirmed: Bloomberg-reported Google payments to Samsung for Gemini installs establish dual-channel default placement — OS coupling plus purchased OEM distribution — consistent with commitment-dominant game theory play.

Forward signal: Apple renegotiates or expands Gemini licensing terms for Siri in response to Gemini capability improvements, and Gemini formally displaces Google Assistant as the default system-level responder across all major Android OEM partners.

PREDICTION 2 APPLE BARGAINING POSITION EROSION

Time Window: 18–30 Months

The Gemini-Siri licensing relationship will shift from partnership to structural dependency within 18 to 30 months as Gemini capability compounds and Apple’s internal model development lags. Google’s CSGT Vision output identifies commitment-dominant play: Gemini integration deepens faster than Apple’s internalization path can close the gap. The bargaining power inflection that Installment I predicted for AI providers generally will arrive first through the Google channel specifically — because Gemini is simultaneously Apple’s licensed capability and Google’s primary competitive asset.

Observable Signals:

Google introduces tiered Gemini access pricing or capability differentiation that creates cost escalation for Apple’s Siri integration.

Apple’s public disclosures reference AI capability investment or acquisition activity that signals recognition of dependency risk.

Apple’s Siri feature roadmap delays trace to Gemini update schedules Google controls rather than Apple engineering timelines.

PREDICTION 3 ANDROID ENTERPRISE AI CAPTURE

Time Window: 12–24 Months

Google’s monetization tension — advertising revenue versus frontier AI margin — resolves toward enterprise AI as the margin-accretive channel. Enterprise AI deployments through Google Workspace, Google Cloud, and the Gemini Application Programming Interface (API) represent a monetization architecture that separates frontier capability revenue from advertising revenue, reducing the internal conflict between the two business models. The prediction: Google’s enterprise AI revenue becomes a structurally significant margin contributor within 24 months, reducing the existential pressure on advertising as the primary AI monetization pathway.

Observable Signals:

Google Cloud AI revenue surpasses a disclosed threshold that analysts identify as enterprise margin contribution rather than infrastructure cost.

Google introduces Gemini-for-Workspace tiers with AI capability pricing that exceeds advertising revenue per equivalent query volume.

Enterprise AI adoption metrics from Google Workspace show measurable displacement of Microsoft Copilot in competitive account reviews.

PREDICTION 4 REGULATORY CONSTRAINT ACTIVATION

Time Window: 18–36 Months

Google’s antitrust constraint is the primary risk to the Dual Dominance base case. The DOJ’s existing AI market investigation, combined with EU regulatory attention to Android bundling, creates a regulatory activation timeline that runs parallel to Google’s Gemini default consolidation strategy. The prediction: at least one jurisdiction imposes a structural constraint on Gemini-Android coupling before the 36-month mark, not prohibiting the relationship but requiring technical interoperability that reduces the default placement advantage. A September 2025 U.S. antitrust ruling addressing Google’s conduct across Chrome and Android has already crossed the prediction’s first observable threshold. The ruling does not structurally separate Gemini from Android, but it establishes the precedent and the judicial posture that make deeper Gemini-Android coupling the primary regulatory target for the next enforcement action. The prediction advances from forecast to active monitoring; the completion threshold is a formal constraint on Gemini default placement specifically.

Observable Signals:

Confirmed: September 2025 U.S. antitrust ruling on Google’s Chrome and Android conduct establishes judicial precedent directly applicable to Gemini-Android default placement — the first observable threshold of this prediction.

Forward signal: DOJ or EU issues a formal complaint or consent decree requirement specifically addressing Gemini’s default assistant placement on Android, distinct from the Chrome/Search remedies.

Forward signal: Google announces a voluntary OEM AI assistant choice screen as a preemptive regulatory management move — and Samsung, as the most structurally significant Android OEM, formally requests default assistant flexibility in partnership negotiations.

PREDICTION 5 PIXEL AS INTELLIGENCE REFERENCE ACCELERATOR

Time Window: 12–24 Months

Google’s Pixel strategy is not a hardware volume play. Pixel functions as a capability reference implementation — the device on which Gemini’s most advanced features debut before propagating to the broader Android ecosystem. The prediction: Pixel’s strategic role as a capability reference accelerates as Gemini capability accumulates, with Pixel becoming the primary device through which Google demonstrates intelligence-layer capabilities that justify enterprise and developer investment in Gemini.

Observable Signals:

Google increases Pixel-exclusive Gemini feature count in consecutive product cycles.

Pixel developer adoption metrics show disproportionate AI developer investment relative to device market share.

Google introduces Pixel enterprise tiers or managed device programs targeting corporate AI deployment use cases.

PREDICTION 6 OPEN-WEIGHT HEDGING INVESTMENT

Time Window: 24–36 Months

Google’s dual-position strategy predicts investment in open-weight model infrastructure as a hedge against the concentration scenario that benefits Gemini less than it might appear. If concentration produces a single dominant frontier provider that is not Google, Android distribution retains value only if that provider’s models route through Android. Google’s investment in open-weight model infrastructure — through Google DeepMind research releases, Tensor Processing Unit (TPU) access programs, and Android-level inference optimization — functions as a commoditization hedge that preserves Android’s routing authority regardless of which intelligence provider achieves frontier dominance.

Observable Signals:

Google releases open-weight model variants of Gemini capability that accelerate third-party developer adoption on Android.

Google announces TPU or inference infrastructure programs that reduce the per-query cost of running frontier models on Android devices.

Android inference optimization features appear in developer documentation that enable competitive model providers to achieve performance parity with Gemini on Android hardware.

VIII. Foresight Predictions — Samsung

Six predictions follow from the Samsung CDT Foresight Simulation, structured identically to the Google set: time window, causal mechanism, and observable signals for real-time confirmation or falsification.

PREDICTION 1 GALAXY AI ON-DEVICE EXPANSION

Time Window: 12–18 Months

Samsung’s conglomerate-distributed grammar and Becker Vision incentive analysis predict rapid Galaxy AI feature expansion as the lowest-cost path to hardware differentiation. On-device AI execution reduces per-query cloud inference cost and reduces Google dependency at the feature layer — two incentives that align with Samsung’s hardware margin optimization. The prediction: Galaxy AI feature density increases significantly within 12 to 18 months, with measurable on-device execution capability replacing cloud-dependent equivalents. Samsung has already confirmed the scale of this commitment: the company targets 800 million Galaxy AI-enabled devices by end-2026, doubling the 2025 base. The 800 million target is not a feature roadmap announcement — it is a capital commitment that operationalizes the Becker Vision incentive analysis. At 800 million devices, on-device AI inference represents a structural cost reduction in cloud dependency that directly improves Samsung’s per-device hardware margin. The prediction is confirmed at the commitment level; the observable signals below mark execution milestones.

Observable Signals:

Confirmed: Samsung’s 800 million Galaxy AI device target by end-2026 (doubling 2025 base) establishes scale of on-device AI deployment commitment — the primary capital signal of Samsung’s internalization trajectory.

Exynos chip roadmap disclosures reference neural processing unit (NPU) capability expansion specifically aligned with Galaxy AI on-device workload requirements, with Mamba and Mixture-of-Experts (MoE) model compression architectures reducing inference cost at the silicon layer.

Samsung Research publishes on-device model efficiency benchmarks showing performance parity with cloud inference on core Galaxy AI tasks — the technical completion threshold for the internalization path.

PREDICTION 2 ANDROID ALTERNATIVE CAPABILITY SIGNAL

Time Window: 18–30 Months

Samsung’s CSGT Vision output identifies delay-dominant play as the current equilibrium — but also identifies the conditions under which Samsung transitions to commitment-dominant. The prediction: Samsung deploys a credible Android alternative capability signal within 18 to 30 months, not necessarily as a full platform launch, but as a negotiating lever in Android partnership terms. The signal may take the form of a Tizen revival announcement, a partnership with a non-Android operating system provider, or a formal request for default assistant flexibility within Android.

Observable Signals:

Samsung announces a Tizen development revival, an alternative OS pilot, or a partnership with a non-Android platform for specific device categories.

Samsung formally requests default AI assistant flexibility in public Android partnership negotiations or regulatory submissions.

Samsung publicly references its right to modify the Android routing layer in device-level AI processing disclosures.

PREDICTION 3 AI PROVIDER DIVERSIFICATION

Time Window: 12–24 Months

Samsung’s FGR output establishes that the deepest moat Samsung holds is at the device distribution layer — not at the intelligence layer. Diversifying AI provider relationships is the rational strategy for an institution that needs to retain distribution dominance without depending on a single intelligence provider whose OS infrastructure Samsung does not control. The prediction: Samsung formally establishes AI provider relationships with at least two frontier providers — reducing Google dependency at the intelligence layer without requiring OS-layer defection.

Observable Signals:

Samsung announces a formal partnership with a non-Google frontier AI provider for Galaxy AI feature delivery.

Samsung Galaxy AI features begin routing inference through non-Gemini model providers for specific use cases or regions.

Samsung Research establishes a co-development agreement with an open-weight model provider that reduces Samsung’s dependence on Google’s closed model infrastructure.

PREDICTION 4 EXYNOS INTELLIGENCE INTEGRATION

Time Window: 18–36 Months

Exynos is Samsung’s most structurally significant asset in the intelligence internalization path. An independent chip architecture capable of running frontier-grade inference on-device at low cost would give Samsung genuine routing independence from Google’s cloud infrastructure — which is the layer at which Google’s intelligence leverage operates. The prediction: Exynos roadmap and Galaxy AI feature integration converge within 18 to 36 months, producing a chip-level intelligence execution architecture that Samsung controls independently of Google.

Observable Signals:

Exynos chip launch materials specifically reference AI inference capability benchmarks against Gemini cloud performance.

Samsung introduces Galaxy AI features that are exclusively available on Exynos-powered devices as a capability differentiation strategy.

Samsung Research and Exynos division announce a coordinated AI inference optimization program that targets on-device model execution at frontier-grade performance.

PREDICTION 5 CONGLOMERATE AI CAPITAL REALLOCATION

Time Window: 24–36 Months

Samsung’s primary structural constraint is conglomerate capital competition. AI investment will not reach the velocity required for threshold-crossing internalization without a formal capital reallocation decision that prioritizes AI over competing semiconductor or display business requirements. The prediction: within 24 to 36 months, a forcing event — competitive pressure from a Galaxy AI capability gap, a major OEM competitor AI announcement, or a significant Google Android policy change — triggers a formal Samsung capital reallocation toward AI investment.

Observable Signals:

Samsung announces an AI-specific investment program or fund that explicitly separates AI capital from semiconductor and display business allocation.

Samsung acquires an AI capability provider — model developer, inference infrastructure company, or specialized AI research organization — at a valuation that represents a threshold-crossing capital commitment.

Samsung leadership’s public statements shift from hardware-first AI framing to AI-first strategic commitment language.

PREDICTION 6 ENTERPRISE AI HARDWARE POSITIONING

Time Window: 12–24 Months

Samsung’s FGR output establishes distribution scale as the deepest existing moat. Enterprise AI hardware is the market segment where Samsung’s distribution advantage, chip independence, and on-device AI capability converge most naturally. Enterprise customers purchasing AI-capable devices at volume require on-device security, inference reliability, and OEM-level customization — all of which favor Samsung’s conglomerate asset base over Apple’s premium consumer positioning or Google’s cloud-first architecture.

Observable Signals:

Samsung launches enterprise-specific Galaxy AI device configurations with on-device AI capability marketed explicitly to IT procurement audiences.

Samsung announces AI partnerships with enterprise software providers that integrate Galaxy AI into existing workflow tools.

Samsung’s enterprise device sales metrics show disproportionate Galaxy AI feature adoption relative to consumer device equivalents.

IX. The Investor Implication — Cross-Institutional Value Accrual

The three-institution frame produces a specific investor implication that standard AI platform analysis misses: value accrual in the AI platform market is not a zero-sum competition between Apple, Google, and Samsung. Value accrual depends on which layer of the stack captures margin as the governing variable resolves — and each institution occupies a different layer.

Under Commoditization

Distribution layer captures margin. Google’s Android OS share, Apple’s premium ecosystem lock-in, and Samsung’s volume distribution each retain value — but at different densities. Apple’s per-device services margin is the highest; Samsung’s per-device services margin is the lowest; Google’s advertising margin is the most scalable. Under commoditization, the market does not pick a single winner — it stratifies by margin density across distribution layers.

Under Concentration

Intelligence layer captures margin. Google’s Gemini position is structurally advantaged. OpenAI, Anthropic, and other frontier providers capture margin that would otherwise flow to the distribution layer. Apple’s Services margin compresses at the AI revenue boundary. Samsung’s hardware margin avoids direct compression — hardware margin does not depend on intelligence layer ownership — but Samsung’s AI differentiation strategy weakens if frontier models are available across all devices at equivalent quality.

The Investor’s Governing Variable Bet

Every investor holding AI platform exposure is implicitly making a bet on the governing variable. Standard models do not make this bet explicit. Investors who do not explicitly model the commoditization-concentration variable are implicitly assuming one outcome or the other without pricing the scenario risk. The three-institution frame provides the analytical structure for making the bet explicit:

· Google is the only holding that retains value under both outcomes. Position accordingly.

· Apple’s Services margin trajectory is the cleanest real-time signal of which outcome is materializing. Track it as a governing variable indicator, not as a standalone financial metric.

· Samsung’s internalization velocity — measurable through Galaxy AI feature density, Exynos roadmap, and AI provider partnership announcements — is the cleanest real-time signal of commoditization pressure. Samsung building toward intelligence independence is the same as Samsung applying pressure on the concentration scenario.

PORTFOLIO CONSTRUCTION · The cross-institutional investor implication is not a rotation trade. It is a structural overlay. Investors modeling AI platform exposure should hold Google as a structural hedge against governing variable uncertainty, track Apple’s Services margin as a real-time governing variable indicator, and model Samsung’s internalization trajectory as a commoditization pressure signal — then adjust directional AI platform exposure based on which scenario the three leading indicators are converging toward. The system resolves at the layer that controls user intent routing.
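The overlay logic can be made explicit with a small scenario-weighting sketch. Every number in it is a hypothetical placeholder: the payoff scores are illustrative ordinal values chosen only to mirror the qualitative claims above (Google retains value under both outcomes, Apple depends on commoditization, Samsung's hardware margin avoids direct compression while its AI differentiation weakens under concentration). The names `PAYOFFS`, `expected_score`, and `worst_case` are assumptions, and `p_commoditization` is the investor's own subjective probability, not an MCAI output.

```python
# Hypothetical ordinal payoff scores (0 = weak, 1 = strong) per scenario.
# These are illustrative placeholders, not estimates from the analysis.
PAYOFFS = {
    "Google":  {"commoditization": 0.8, "concentration": 0.9},
    "Apple":   {"commoditization": 0.9, "concentration": 0.4},
    "Samsung": {"commoditization": 0.6, "concentration": 0.5},
}


def expected_score(holding: str, p_commoditization: float) -> float:
    """Probability-weighted score across the two governing-variable outcomes."""
    if not 0.0 <= p_commoditization <= 1.0:
        raise ValueError("p_commoditization must be between 0 and 1")
    scores = PAYOFFS[holding]
    return (p_commoditization * scores["commoditization"]
            + (1.0 - p_commoditization) * scores["concentration"])


def worst_case(holding: str) -> float:
    """Score under the less favorable outcome: a simple hedge-quality measure."""
    return min(PAYOFFS[holding].values())


# Compare holdings as the investor's belief shifts toward commoditization.
for p in (0.25, 0.50, 0.75):
    row = ", ".join(f"{h}={expected_score(h, p):.2f}" for h in PAYOFFS)
    print(f"p(commoditization)={p:.2f}: {row}")

# The holding with the best worst case is the structural hedge.
print("Structural hedge:", max(PAYOFFS, key=worst_case))
```

Making the probability an explicit input is the point of the exercise: an investor who never sets `p_commoditization` is still using a value for it, just an unexamined one.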

X. Closing — The Three-Bet Landscape and Installment III Preview

Apple, Google, and Samsung together represent the dominant hardware layer through which most consumers encounter artificial intelligence. Installment I established Apple’s equilibrium. Installment II has identified the two institutional grammars applying pressure to it from opposite sides of the stack.

The governing variable — commoditization versus concentration — remains unresolved. Google’s dual-position strategy actively sustains the ambiguity. Samsung’s internalization path applies slow commoditization pressure through on-device AI investment. Apple waits for the variable to resolve in its favor while passive continuation deepens the dependency that makes waiting more expensive.

The competitive geometry is not symmetric. Google occupies the structural high ground: intelligence ownership at the frontier, OS routing control at the distribution layer, and a behavioral grammar that processes the governing variable ambiguity as an asset rather than a risk. Samsung holds the largest distribution scale in the market and is running the most structurally interesting internalization bet, but the OS constraint places Google’s infrastructure between Samsung and full routing independence. Apple controls the most valuable interface in the premium tier and loses strategic optionality with each quarter of passive continuation.

The three-bet landscape resolves when the governing variable forces a threshold crossing. The institution that crosses first, whether through internalization, regulatory disruption, or interface displacement, sets the equilibrium for the others. Installment I predicted Apple would not cross voluntarily. Installment II predicts Google will not need to cross, because Google holds positions on both sides. Samsung may force the issue from below, either by achieving a credible OS-layer defection threat or by triggering a Google Android policy change that creates the forcing event Samsung’s grammar cannot generate internally.

Installment III Preview — The Intelligence Layer

OpenAI · Microsoft · Anthropic · Who Captures Value If Platform Incumbents Fail to Internalize

Installment III asks the question the three-institution frame makes inevitable: what happens when the intelligence layer stops behaving like infrastructure and starts behaving like a platform? The central structural claim: frontier model providers do not need to displace Apple, Google, or Samsung to capture their margin — they need only to hold the capability differential long enough that platform incumbents’ bargaining positions collapse. Installment III maps the institutional grammars, constraint stacks, and CDT Foresight Simulations for OpenAI, Microsoft, and Anthropic against precisely that threshold condition.

The analysis covers enterprise-to-consumer bleed, direct interface displacement dynamics, and the competitive geometry between OpenAI’s consumer ambition, Microsoft’s enterprise lock-in, and Anthropic’s structural positioning — each modeled as a CDT against the same governing variable that Installments I and II apply to the hardware layer.