MCAI Economics Vision: The Consumer AI Device Intelligence Layer

MindCast Consumer AI Device Series: Installment III | Value Capture Under Interface Drift | OpenAI, Microsoft, Anthropic, Google DeepMind

MindCast Consumer AI Device series publications: Installment I — The Intelligence Gap: Apple’s AI Strategy and the Commoditization Bet | Installment II — The Apple AI Challenger Framework: Google, Samsung, and the Intelligence Layer | Installment III — The Consumer AI Device Intelligence Layer: Value Capture Under Interface Drift | Installment IV How Cybernetic Feedback Latency, Loop Architecture, and Ashby’s Viability Condition Resolve Consumer AI Device Competition

Executive Summary

Intelligence is migrating from feature to governing layer. Device incumbents once controlled user access, monetization pathways, and developer routing. Frontier model providers now compete to absorb those functions directly. The governing variable remains unchanged: commoditization versus concentration. Installment III resolves where value accrues when device incumbents fail to internalize intelligence.

Six institutions define the intelligence layer competitive system: OpenAI, Microsoft, Anthropic, Google DeepMind, Meta, and Mistral. Each operates with a distinct objective function, constraint stack, and adaptation grammar. The interaction of these grammars determines whether intelligence becomes a new interface or collapses into infrastructure. MindCast AI Proprietary (MAP) Cognitive Digital Twin (CDT) execution across all six Cognitive Digital Twins produces a split coordination equilibrium. Microsoft captures enterprise coordination authority. OpenAI captures consumer interaction gravity. Google retains latent coordination through Android and search but faces regulatory drag and internal incentive conflict. Anthropic stabilizes enterprise trust demand without controlling distribution. Meta compresses pricing power through open-weight releases. Mistral fragments regional alignment and weakens global concentration.

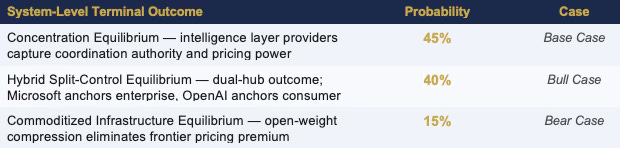

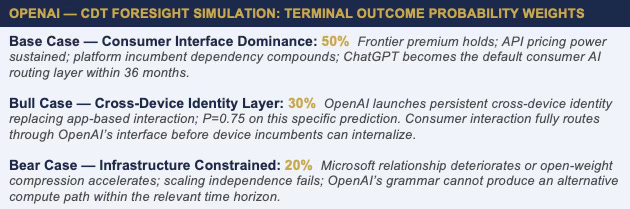

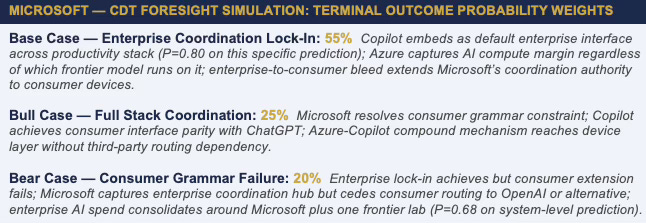

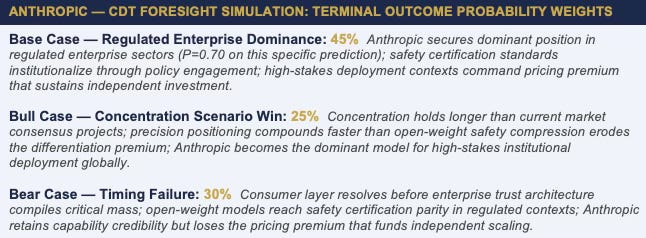

CDT Foresight Simulation assigns the following system-level terminal outcome probabilities:

Value capture concentrates at the intelligence layer under both the base and bull cases. Device incumbents lose margin control unless they internalize capability or reassert coordination authority before behavioral defaults at the enterprise and consumer layers compound into irreversibility. If the current structure persists, Microsoft and OpenAI consolidate coordination within 24 to 36 months; if device incumbents reassert interface control before that window closes, the model-layer attractor fails and the analysis is falsified.

FOR INVESTORS

The intelligence layer’s value capture architecture is not symmetric across providers. OpenAI’s consumption-scale position and Microsoft’s enterprise distribution channel each produce margin capture under concentration — but through different mechanisms with different risk profiles. OpenAI’s risk is structural: Microsoft controls the infrastructure OpenAI depends on, and that dependency is not priced into frontier model valuations. Microsoft’s risk is execution: the enterprise-to-consumer bleed is a real strategic trajectory, but consumer interface capture requires behavioral grammar changes that Microsoft’s ICG resists. Anthropic’s risk is timing: the safety-first grammar produces capability differentiation that is valuable under concentration — but only if concentration persists long enough for precision positioning to compound. Meta’s Llama strategy is the systematic risk none of these valuations correctly prices: open-weight commoditization does not appear in any intelligence layer provider’s discounted cash flow model as a primary scenario.

FOR CORPORATE STRATEGY

Platform incumbents reading Installments I and II as a competitive analysis of Apple, Google, and Samsung should read Installment III as the supply chain analysis of their own dependency. Every platform that licenses AI capability from OpenAI, Microsoft, or Anthropic is negotiating with an institution that has a structural interest in preventing internalization. The intelligence layer providers are not neutral infrastructure suppliers — each is running a defined strategy to deepen dependency, expand the interface boundary, and capture the margin that platform incumbents are currently leaving on the table. Corporate strategists modeling AI vendor relationships should apply CDT Foresight Simulation framing to those relationships: the behavioral grammar of the intelligence provider predicts how partnership terms will evolve, what the provider will request at each renegotiation, and where the dependency threshold crosses into irreversibility.

Framework Note

Readers of Installments I and II know the MAP CDT architecture. The compressed reference below is provided for Installment III readers entering the series here. The full framework architecture, Vision Function definitions, and behavioral profile methodology appear in the Installment I publication at www.mindcast-ai.com/p/apple-ai-strategy.

MAP CDT — MindCast AI Proprietary CDT Foresight Simulation

MAP CDT is a behavioral economics and game theory simulation engine. MAP CDT routes raw signals through a structured nine-step process whose stages include signal intake, hypothesis formation, causal inference, signal integrity validation, Vision Function routing, dominance resolution, and recursive foresight simulation. The process resolves institutional behavior into equilibrium-classified, falsifiable predictive outputs.

Cognitive Digital Twin (CDT)

MAP CDT models each institutional subject as a Cognitive Digital Twin (CDT): a dynamic behavioral replica encoding the institution’s objective function, constraint stack, adaptation velocity, and feedback sensitivity. The simulation stress-tests each CDT against multi-agent strategic interaction and bounded time horizons to generate forward predictions.
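As a reading aid, the four attributes a CDT encodes can be pictured as a simple record. The sketch below is a hypothetical illustration, not MindCast's implementation: the class name `CognitiveDigitalTwin`, its field names, and the decision to leave `feedback_sensitivity` unset are all assumptions, while the OpenAI values are quoted from the Section III profile.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CognitiveDigitalTwin:
    """Hypothetical record of the four attributes a CDT is said to encode."""
    institution: str
    objective_function: str
    constraint_stack: List[str] = field(default_factory=list)
    adaptation_velocity: Optional[float] = None   # AVS, where the text reports one
    feedback_sensitivity: Optional[float] = None  # not quantified in this installment


# Values quoted from the OpenAI profile in Section III.
openai_cdt = CognitiveDigitalTwin(
    institution="OpenAI",
    objective_function="frontier capability dominance plus consumption scale",
    constraint_stack=[
        "Microsoft infrastructure dependency",
        "Non-profit governance tension",
    ],
    adaptation_velocity=0.92,
)

print(openai_cdt.institution, openai_cdt.adaptation_velocity)
```

The record only fixes vocabulary; the stress-testing against multi-agent interaction described above is outside what this sketch attempts.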

The Governing Variable — Inverted Stakes at the Intelligence Layer

The governing variable — does artificial intelligence commoditize, or does it concentrate? — applies to every installment in this series with inverted stakes depending on which institutional layer is under analysis. Platform incumbents need commoditization to win: commoditization compresses the capability gap that makes them dependent on intelligence layer providers. Intelligence layer providers need concentration to hold: concentration preserves the capability advantage that gives them pricing power and dependency leverage over platform incumbents. Google’s structural asymmetry — identified in Installment II as the series’ central finding — means Google wins under either resolution. No intelligence layer provider holds the same dual position.
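The inverted stakes can be restated as a small lookup. This is a minimal sketch under the text's own framing; the names `Resolution`, `FAVORABLE`, and `benefits` are hypothetical, and the table encodes only which resolution each layer needs, not any probability.

```python
from enum import Enum


class Resolution(Enum):
    COMMODITIZATION = "commoditization"
    CONCENTRATION = "concentration"


# Which resolution of the governing variable each layer needs, per the text.
# Google is listed separately because Installment II finds it wins either way.
FAVORABLE = {
    "platform incumbents": {Resolution.COMMODITIZATION},
    "intelligence layer providers": {Resolution.CONCENTRATION},
    "Google": {Resolution.COMMODITIZATION, Resolution.CONCENTRATION},
}


def benefits(actor: str, outcome: Resolution) -> bool:
    """Return True if the given outcome is one the actor needs."""
    return outcome in FAVORABLE[actor]


for actor in FAVORABLE:
    row = {r.value: benefits(actor, r) for r in Resolution}
    print(actor, row)
```

Only the Google row is true in both columns, which is the dual position the paragraph says no intelligence layer provider shares.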

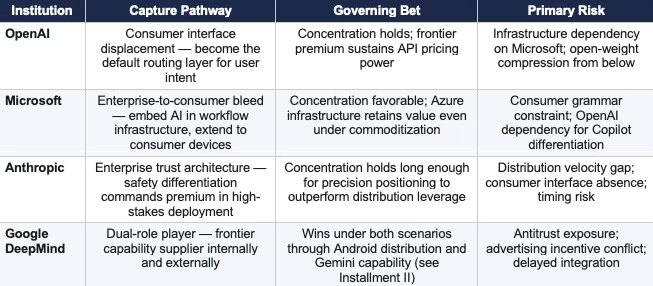

System Architecture — Anchors and Disruptors

Installment III profiles the intelligence layer as a competitive system, not a set of independent firms. Four actors function as primary CDTs (anchors) because they can capture coordination and margin: OpenAI through consumer interface displacement, Microsoft through an infrastructure-and-distribution hybrid, Anthropic through enterprise intelligence layer positioning, and Google DeepMind through its dual internal and external role. Two actors function as equilibrium disruptors because they reshape pricing and geography without consolidating coordination: Meta through open-weight commoditization shock, and Mistral through sovereign and regional fragmentation.

The system implication is precise: device incumbents are no longer primarily competing with each other. Coordination migrates to the intelligence layer. Pricing power follows coordination.

I. The Intelligence Layer Thesis

Installments I and II documented a structural drift pattern across the platform incumbent tier. Apple is drift-stable toward dependency. Samsung’s internalization path is constrained by the OS layer Google controls. Google’s dual position means Google wins regardless of how the governing variable resolves. The platform incumbent analysis produces a single forward-looking question: if Apple, Samsung, and every platform incumbent running a partnership-and-interface AI strategy collectively fail to internalize — if dependency becomes the structural norm at the platform layer — where does the margin go?

The answer is the intelligence layer. But the intelligence layer is not a monolith. Three distinct value capture architectures are operating simultaneously, each with different behavioral grammars, different constraint stacks, and different theories of how intelligence layer margin is extracted and defended. A fourth dynamic — Meta’s open-weight strategy — functions not as an intelligence layer institution but as the commoditization accelerant that determines whether the intelligence layer concentrates or dissolves.

The Three Capture Pathways

The Enterprise-to-Consumer Bleed

Enterprise-to-consumer bleed is not a description of companies selling to both markets. It is a description of a specific value capture mechanism: intelligence layer providers embedding AI capability so deeply into enterprise workflow infrastructure that the behavioral defaults that govern enterprise users carry over into consumer contexts — reducing the platform incumbent’s ability to set the default AI pathway at the consumer interface.

Microsoft is the primary institution executing this mechanism. GitHub Copilot captures developer behavior at the code layer. Office 365 Copilot captures knowledge worker behavior at the productivity layer. Azure AI captures enterprise infrastructure decisions at the compute layer. Each captures a behavioral default that was previously neutral with respect to the consumer AI interface. Once those defaults are set, the consumer device — whether Apple, Samsung, or Android — becomes a secondary interface for intelligence that is already routed through Microsoft’s enterprise stack.

OpenAI’s bleed mechanism runs in the opposite direction: consumer-to-enterprise. ChatGPT’s consumer penetration creates familiarity and behavioral defaults that carry into enterprise purchasing decisions. Anthropic’s grammar produces neither mechanism — a trade-off that is intentional but that narrows the value capture window relative to both competitors.

FOR INVESTORS

The enterprise-to-consumer bleed is the most underanalyzed value capture mechanism in the current AI platform market. Standard analyst models track enterprise AI revenue and consumer AI revenue as separate channels. The structural insight is that the channels are directionally connected. Microsoft’s enterprise-first bleed and OpenAI’s consumer-first bleed are both capturing behavioral defaults that reduce the platform incumbent’s ability to set the AI routing layer at the device. Every platform incumbent that fails to internalize the intelligence layer is ceding default-setting authority to one of these two mechanisms — and the cession is not reversible once behavioral defaults compound into institutional lock-in.

The Meta Variable: Commoditization From Below

Every value capture architecture in this analysis depends on the governing variable resolving toward concentration. Meta’s open-weight releases (Llama and its successors) are the systematic pressure against that resolution. Meta is the only actor in this system incentivized to destroy intelligence-layer margins rather than capture them, which sets its incentive structure apart from every other institution in this series. Meta does not need to capture intelligence layer margin; it needs to prevent any single intelligence layer provider from capturing enough margin to fund a competitive advertising platform. Open-weight releases serve that strategic objective by compressing the capability gap that gives frontier providers pricing power.

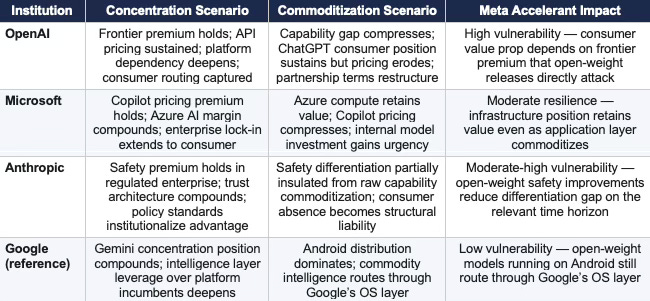

The structural finding: Microsoft’s infrastructure position is most resilient to open-weight commoditization because Azure retains value as compute infrastructure regardless of which model runs on it. OpenAI’s consumer position is most vulnerable because ChatGPT’s value proposition depends on frontier capability premium that open-weight models erode from below. Anthropic’s safety-differentiated positioning occupies an intermediate resilience: safety and alignment investment is not replicable through open-weight releases on the same timeline as raw capability, but the market must value safety differentiation enough to sustain pricing premium in a commoditizing capability environment.

CDT Foresight Simulation assigns Meta’s open-weight commoditization pressure a CSI score of 0.79 — the causal claim that open-weight models compress API pricing power clears the deployment threshold and is treated as a structurally confirmed input to every institution’s scenario analysis below. The Causation Vision validates five primary causal claims: intelligence displaces interface (CSI: 0.84), Microsoft captures enterprise margin (CSI: 0.88), OpenAI captures consumer routing (CSI: 0.86), Google fails to consolidate coordination dominance (CSI: 0.72), and open-weight models compress pricing (CSI: 0.79). All five exceed the deployment threshold. The analysis proceeds on this evidentiary foundation.
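The threshold test on the five causal claims can be sketched as a simple filter. The CSI values below are those reported in the text; the threshold value itself is not stated in this installment, so `DEPLOYMENT_THRESHOLD = 0.70` is a loudly labeled assumption, and the helper name `clears_threshold` is hypothetical.

```python
# Hypothetical threshold: the text says all five claims clear the deployment
# threshold but does not state its value, so 0.70 is an assumption.
DEPLOYMENT_THRESHOLD = 0.70

# CSI scores as reported in the text.
causal_claims = {
    "intelligence displaces interface": 0.84,
    "Microsoft captures enterprise margin": 0.88,
    "OpenAI captures consumer routing": 0.86,
    "Google fails to consolidate coordination dominance": 0.72,
    "open-weight models compress pricing": 0.79,
}


def clears_threshold(scores: dict, threshold: float) -> dict:
    """Map each claim to True if its CSI is at or above the threshold."""
    return {claim: csi >= threshold for claim, csi in scores.items()}


results = clears_threshold(causal_claims, DEPLOYMENT_THRESHOLD)
weakest_claim = min(causal_claims, key=causal_claims.get)

print(all(results.values()))
print(weakest_claim, causal_claims[weakest_claim])
```

Whatever the true threshold is, the Google claim at 0.72 is the binding one: it is the first input that would drop out if the bar were raised.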

FOR CORPORATE STRATEGY

Platform incumbents negotiating AI vendor relationships should model the Meta variable as a structural constraint on intelligence layer pricing power. If Llama’s open-weight releases continue compressing the capability gap, the negotiating leverage that OpenAI and Anthropic currently hold over platform incumbents decreases over time — and the internalization threshold decreases with it. Corporate strategists who model AI vendor dependency as a fixed constraint are missing the dynamic: the governing variable is not set. Meta’s strategy is actively pushing it toward commoditization. Platform incumbents with long-term AI vendor contracts should build optionality around the commoditization scenario, not assume the concentration scenario persists.

II. CDT Foresight Simulation — System Outputs

Before profiling each institution individually, MAP CDT resolves system-level outputs across all six CDTs simultaneously. The Vision Function rankings below establish the competitive geometry that each institutional CDT Foresight Simulation is stress-tested against. Scores are woven into each institution’s profile as evidential anchors; the full system ranking is presented here for cross-institutional reference.

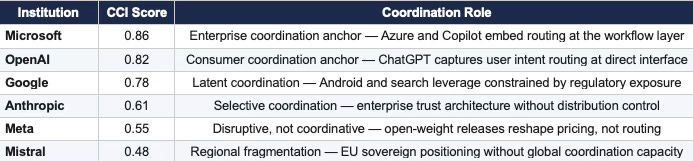

Coase Vision — Coordination Authority

The Coase Vision identifies the coordination hub — the node all other agents route through. In a split-hub equilibrium, two nodes share coordination authority across different layers of the stack. The Coordination Capacity Index (CCI) measures each institution’s structural ability to become the default routing node.

Coase Result: Dual-hub equilibrium. Enterprise hub: Microsoft (CCI: 0.86). Consumer hub: OpenAI (CCI: 0.82). Google retains latent coordination authority (CCI: 0.78) but cannot consolidate it under current regulatory constraints.
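The ranking behind the dual-hub reading can be reproduced from the three reported scores. This is a minimal sketch: treating the top two CCI scores as the hubs is an assumed simplification, since the text also attributes Google's position to regulatory constraints that a score ranking does not capture.

```python
# CCI scores as reported in the Coase result.
cci_scores = {"Microsoft": 0.86, "OpenAI": 0.82, "Google": 0.78}

# Assumed rule for illustration only: the two highest CCI scores are the hubs.
ranked = sorted(cci_scores.items(), key=lambda kv: kv[1], reverse=True)
hubs = [name for name, _ in ranked[:2]]
latent = ranked[2][0]

# Distance between the second hub and the latent coordinator, rounded
# to two decimals to suppress floating-point noise.
gap_to_latent = round(ranked[1][1] - ranked[2][1], 2)

print(hubs, latent, gap_to_latent)
```

The four-point gap between OpenAI and Google is the same size as the gap between Microsoft and OpenAI, which is why the text frames Google's authority as latent rather than absent.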

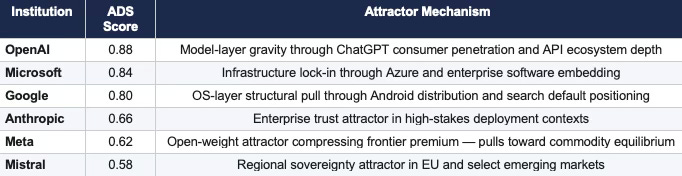

Field Geometry (FGR) — Attractor Shift

The FGR Vision maps switching costs, lock-in depth, and attractor states. The Attractor Dominance Score (ADS) measures which institutions are generating the structural pull that draws users, developers, and platform incumbents into their orbit.

FGR Result: Model-layer gravity exceeds device-layer gravity. Users increasingly route through intelligence interfaces rather than hardware-native surfaces. The geometry shift toward intelligence-layer dominance is the primary structural finding of the FGR Vision and the evidentiary foundation for the series’ closing thesis.

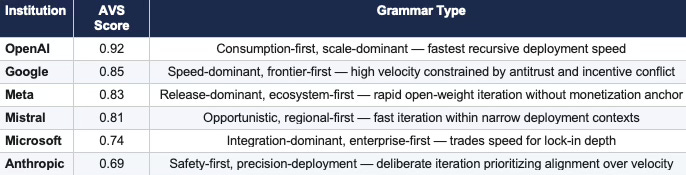

ICG Vision — Adaptation Velocity

The ICG Vision measures adaptation velocity, constraint rigidity, and identity preservation strength. Adaptation Velocity Score (AVS) captures how quickly each institution’s cognitive grammar processes and deploys responses to new competitive signals.

ICG Result: OpenAI leads in recursive deployment speed (AVS: 0.92). The velocity gap between OpenAI and Anthropic (0.92 versus 0.69) is analytically significant: at this differential, OpenAI compounds behavioral defaults faster than Anthropic’s trust architecture can accumulate enterprise lock-in — unless the concentration scenario holds long enough for precision positioning to close the velocity deficit through premium pricing rather than volume.
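The velocity differentials follow directly from the reported AVS values. The sketch below uses the OpenAI and Anthropic scores quoted above plus the Microsoft score from Section IV; the variable names are hypothetical and Google's AVS is omitted because the text does not state it.

```python
# Adaptation Velocity Scores reported in the text (Google's is not stated).
avs = {"OpenAI": 0.92, "Microsoft": 0.74, "Anthropic": 0.69}

leader = max(avs, key=avs.get)

# Gap between the leader and every other institution, rounded to two
# decimals to suppress floating-point noise.
gaps = {
    name: round(avs[leader] - score, 2)
    for name, score in avs.items()
    if name != leader
}

print(leader, gaps)
```

The 0.23 result is the "twenty-three points" cited in the Anthropic constraint stack.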

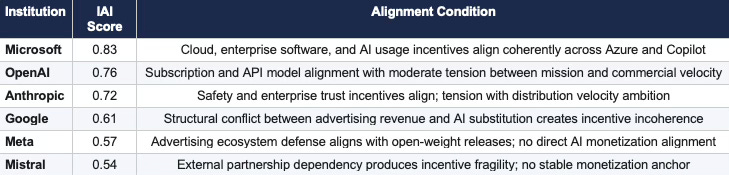

Becker Vision — Incentive Alignment

The Becker Vision maps where agent incentives align, diverge, and how misalignment accumulates into strategic pressure. The Incentive Alignment Index (IAI) measures coherence between the institution’s stated objective function and the actual incentive structures governing its deployment decisions.

Becker Result: Google’s IAI score of 0.61 is the lowest among anchor institutions — and the most consequential. Advertising revenue depends on high-volume query resolution; frontier AI capability cannibalizes the query surface that advertising monetizes. The incentive incoherence does not prevent Google from executing at the frontier; it prevents Google from executing decisively at the interface — which is precisely the layer the CDT Foresight Simulations identify as the primary value capture battleground.

CSGT Vision — Strategic Interaction

The Chicago Strategic Game Theory Vision identifies whether institutions are playing commitment-dominant or delay-dominant strategies and measures equilibrium persistence under competitive pressure.

CSGT Result: Resolving equilibrium with asymmetric commitment pressure. OpenAI and Microsoft pursue commitment-dominant strategies that foreclose platform incumbent options. Google’s delay index of 0.52 — the highest among anchor institutions — reflects the regulatory and incentive constraints documented in Installment II. Google holds latent coordination authority that commitment-dominant competitors are exploiting while Google’s grammar produces delay.

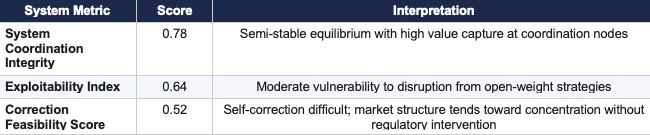

Chicago LBE Composite — System Equilibrium

The system trends toward a semi-stable equilibrium with high value capture at coordination nodes (Microsoft: enterprise, OpenAI: consumer) and moderate vulnerability to disruption from open-weight strategies. Correction feasibility at 0.52 indicates that the market structure, absent regulatory intervention or platform incumbent internalization, will not self-correct toward commoditization on the relevant time horizon.


III. OpenAI — Consumption Scale and the Dependency Architecture

OPENAI — Behavioral Profile (MAP CDT Layer)

MAP CDT models OpenAI as a consumption-dominant, partnership-leveraged, infrastructure-dependent institutional CDT. The behavioral profile CSI score resolves at 0.86 (High) — driven by strong signal alignment (ALI: 0.90), high causal model fit (CMF: 0.88), and reliable input signal integrity (RIS: 0.85). Vision Function dominance resolution: FGR (ADS: 0.88) over ICG (AVS: 0.92) is a split result; the FGR’s structural attractor position provides the primary predictive anchor over pure adaptation velocity, as structural position constrains grammar expression. OpenAI’s strategic position is simultaneously the most powerful and the most structurally exposed in the intelligence layer: the institution that platform incumbents license when internalization fails is itself dependent on infrastructure its primary investor controls.

Governing Objective Function

OpenAI optimizes for frontier capability dominance plus consumption scale — not for margin maximization in the near term. Revenue growth funds compute investment that sustains the capability lead that sustains pricing power. The objective function is recursive: frontier capability requires compute, compute requires revenue, revenue requires consumption scale, consumption scale requires frontier capability to remain premium. The loop holds as long as the governing variable resolves toward concentration. The loop breaks if open-weight models compress the capability gap faster than OpenAI’s compute investment widens it.
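The break condition can be made concrete with a toy model of the capability gap. Everything here is an assumption for illustration: the function names, the linear per-period dynamics, and the numeric inputs are hypothetical and are not MAP CDT outputs. The sketch shows only the stated logic, that the loop holds while investment widens the gap at least as fast as open-weight models compress it.

```python
def simulate_capability_gap(initial_gap, widen_per_period, compress_per_period, periods):
    """Track a toy frontier-versus-open-weight capability gap over time.

    All quantities are in arbitrary, hypothetical units. The gap is floored
    at zero, which stands for open-weight parity.
    """
    gap = initial_gap
    path = [gap]
    for _ in range(periods):
        gap = max(0.0, gap + widen_per_period - compress_per_period)
        path.append(gap)
    return path


def loop_holds(path):
    """The recursive funding loop is treated as intact while a gap remains."""
    return path[-1] > 0.0


# Compute investment outpaces compression: the premium persists.
holding = simulate_capability_gap(1.0, widen_per_period=0.5, compress_per_period=0.25, periods=4)

# Compression outpaces investment: the gap closes and the loop breaks.
breaking = simulate_capability_gap(1.0, widen_per_period=0.25, compress_per_period=0.5, periods=4)

print(holding, loop_holds(holding))
print(breaking, loop_holds(breaking))
```

A linear model is the simplest choice that exhibits the break; the recursive structure in the text would make widening depend on revenue, which in turn depends on the gap, so real dynamics would likely be steeper in both directions.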

Constraint Stack

Microsoft Infrastructure Dependency. Microsoft controls the Azure compute infrastructure that OpenAI’s training and inference operations depend on. The dependency is not incidental; it is structural. OpenAI cannot scale frontier model training without Azure, and Microsoft’s equity position and infrastructure control give Microsoft leverage over OpenAI’s strategic decisions that does not appear in any standard competitive analysis.

Monetization Balance. The balance between subscription, API, and enterprise channels creates a parallel tension: consumer pricing expectations conflict with enterprise contract structures, and both constrain OpenAI’s ability to maximize revenue without eroding one channel’s growth.

Non-Profit Governance Tension. OpenAI’s governance structure — a for-profit arm operating under a non-profit board with a capped-profit architecture — creates institutional tension that manifests as strategic inconsistency. Mission framing and commercial framing produce competing pressures on deployment velocity, pricing decisions, and partnership terms. The tension is structural and persists regardless of governance restructuring efforts.

Behavioral Signature

OpenAI exhibits four consistent behavioral patterns: capability release timed to competitive pressure rather than internal roadmap; partnership-as-distribution (licensing to Apple, Microsoft, enterprise customers) rather than organic channel build; product expansion into adjacent layers following capability establishment; and governance restructuring to reduce constraints on commercial velocity. The adaptation velocity score of 0.92 — the highest in the system — reflects this grammar’s recursive speed. The commitment score of 0.89 confirms that OpenAI’s deployment signaling is foreclosing competitor options at a faster rate than any other institution in this analysis.

Installed Cognitive Grammar — Consumption-First, Scale-Dominant

OpenAI processes strategic decisions through a consumption-first sequence: establish frontier capability, release through API and consumer product to maximize consumption scale, use consumption scale to fund next frontier investment, use frontier position to deepen platform incumbent dependency. The incentive alignment index of 0.76 reflects moderate tension between the subscription model’s consumer pricing expectations and the enterprise API model’s customization requirements — a tension that the grammar resolves through product differentiation rather than pricing coherence.

Failure Mode Sequence

Primary — Infrastructure Constraint. Microsoft’s compute infrastructure control limits OpenAI’s scaling independence. A deteriorating Microsoft relationship — whether through strategic divergence, commercial dispute, or governance conflict — would impose compute constraints that consumption-first grammar cannot route around on the relevant time horizon.

Secondary — Open-Weight Compression. Meta’s Llama strategy erodes the frontier capability premium that sustains ChatGPT’s pricing power. If open-weight models reach frontier-equivalent performance in the consumer use cases that drive ChatGPT adoption, OpenAI’s consumer-to-enterprise bleed mechanism loses the capability differentiation that justifies the pricing premium.

CDT COMPRESSION — OPENAI

Consumer-facing intelligence layer attempting to become the default routing surface for user intent — recursively self-funding through consumption scale while structurally exposed at the compute layer above and the capability compression layer below.

IV. Microsoft — Enterprise Infrastructure and the Consumer Bleed

MICROSOFT — Behavioral Profile (MAP CDT Layer)

MAP CDT models Microsoft as an infrastructure-dominant, integration-leveraged, enterprise-to-consumer-extending institutional CDT. The behavioral profile CSI score resolves at 0.88 (High) — the highest in the system — driven by very high signal alignment (ALI: 0.92), strong causal model fit (CMF: 0.89), and high input signal integrity (RIS: 0.88). Vision Function dominance resolution: FGR (ADS: 0.84) over ICG (AVS: 0.74) — structural lock-in position drives outcome over grammar velocity. Microsoft does not need to win the frontier model race. Microsoft needs the frontier model race to run on its infrastructure — which it already does.

Governing Objective Function

Microsoft optimizes for enterprise workflow lock-in extended into AI-native interaction — not for frontier capability leadership in the OpenAI sense. Azure is the margin engine: compute infrastructure that captures value regardless of which model runs on it. Copilot is the lock-in mechanism: embedding AI interaction so deeply into Office 365, Teams, and GitHub workflows that AI-powered productivity becomes inseparable from Microsoft’s software subscription. The objective function compounds: Azure revenue funds OpenAI compute, Copilot adoption funds Azure consumption growth, enterprise lock-in funds Copilot pricing power. The coordination capacity index of 0.86 — the highest in the system — reflects the structural coherence of this compounding mechanism.

Constraint Stack

OpenAI Dependency (Inverted). Microsoft’s Copilot strategy depends on OpenAI’s frontier capability to differentiate the product. If OpenAI’s capability lead erodes — through open-weight commoditization or competitive pressure from Anthropic or Google — Microsoft’s Copilot pricing premium compresses. Microsoft has invested in internal AI capability (Phi models, Azure AI Foundry) as an alternative, but those capabilities are not currently at frontier level for the use cases that drive Copilot’s enterprise differentiation.

Consumer Grammar Constraint. Microsoft’s institutional grammar is enterprise-first: products are built for enterprise deployment, pricing is structured for enterprise contracts, and distribution moves through enterprise sales channels. Extending that grammar to consumer interfaces (Copilot on Windows, Copilot in consumer Microsoft 365) requires behavioral changes that Microsoft’s ICG resists. Microsoft’s consumer AI track record (Cortana, Bing AI) reflects this constraint. The adaptation velocity score of 0.74, eighteen points below OpenAI’s 0.92 though above Anthropic’s 0.69, quantifies the grammar’s resistance to consumer-speed iteration.

Antitrust Exposure (Emerging). Microsoft’s equity position in OpenAI and simultaneous role as OpenAI’s primary infrastructure provider is under regulatory scrutiny in the EU and UK. The structural concern mirrors the Android-Gemini coupling concern that limits Google: a dominant infrastructure provider that also owns the primary application layer running on that infrastructure faces competition law risk at each coupling point.

Behavioral Signature

Microsoft exhibits three consistent behavioral patterns across technology transitions: acquisition or deep investment to access capability it cannot build organically at the required velocity; integration of acquired capability into the Office and Azure distribution infrastructure to convert capability into enterprise lock-in; and slow consumer extension of enterprise-first products, producing market presence without market leadership at the consumer interface. The incentive alignment index of 0.83 — the highest in the system — reflects the structural coherence of these patterns across cloud, enterprise software, and AI usage.

Installed Cognitive Grammar — Integration-Dominant, Enterprise-First

Microsoft processes new technology domains through an integration-dominant sequence: acquire or invest in frontier capability, integrate into existing enterprise software distribution, monetize through subscription and infrastructure pricing, extend to consumer interfaces as a secondary channel. The commitment score of 0.85 confirms that Microsoft is executing commitment-dominant strategy — locking in enterprise switching costs before competitors arrive at the workflow layer. The grammar predicts that Microsoft will deepen Copilot integration before expanding consumer distribution, and will absorb OpenAI dependency risk through internal model investment that reduces but does not eliminate frontier dependency.

Failure Mode Sequence

Primary — Over-Integration Rigidity. Integration depth that produces enterprise lock-in also produces adaptation rigidity. Microsoft’s grammar generates durable enterprise position but slow consumer adaptation velocity. If the consumer AI interface shifts faster than Microsoft’s ICG can respond — specifically, if OpenAI’s cross-device identity layer or an alternative consumer surface displaces Windows and Microsoft 365 as the default consumer AI routing point — Microsoft’s enterprise-to-consumer bleed mechanism loses its downstream extension.

Secondary — Frontier Capability Compression. Copilot’s pricing premium depends on OpenAI’s frontier capability differentiation. Open-weight compression that reduces the capability gap between frontier and open-weight models compresses Copilot’s premium while Azure’s infrastructure margin remains insulated. The failure mode is product-layer, not infrastructure-layer — which limits severity but constrains Microsoft’s consumer ambition.

CDT COMPRESSION — MICROSOFT

Infrastructure-backed coordination authority absorbing enterprise AI demand through workflow embedding, compounding Azure margin and Copilot lock-in simultaneously — the system’s highest-CSI institutional CDT.

V. Anthropic — Capability Differentiation and the Precision Positioning Path

ANTHROPIC — Behavioral Profile (MAP CDT Layer)

MAP CDT models Anthropic as a capability-differentiated, safety-constrained, enterprise-selective institutional CDT. The behavioral profile CSI score resolves at 0.72 (Moderate) — driven by moderate signal alignment (ALI: 0.78), constrained causal model fit (CMF: 0.70), and reduced input signal integrity (RIS: 0.68). Vision Function dominance resolution: FGR (ADS: 0.66) over ICG (AVS: 0.69) — a narrow split that reflects genuine uncertainty about whether Anthropic’s structural position or its grammar velocity produces the binding constraint on its value capture path. The CSI score of 0.72 is the lowest among anchor institutions and directly reflects the timing risk that is Anthropic’s primary structural vulnerability.

Governing Objective Function

Anthropic optimizes for safety-differentiated frontier capability — specifically, for the subset of enterprise use cases where alignment reliability and interpretability justify premium pricing over higher-consumption alternatives. The objective function is not margin maximization at scale. Anthropic’s grammar prioritizes the enterprise trust architecture that makes Claude the preferred model for high-stakes deployment contexts: legal, medical, financial, governmental. The incentive alignment index of 0.72 reflects coherence between safety investment and enterprise trust accumulation, with residual tension at the distribution velocity constraint where safety-first deployment pacing conflicts with the consumption scale required to generate enterprise behavioral defaults.

Constraint Stack

Amazon Infrastructure Dependency. Amazon’s investment gives Anthropic access to AWS compute at frontier training scale and creates a structural dependency parallel to OpenAI’s Microsoft exposure. Amazon’s Bedrock platform distributes Claude to enterprise AWS customers, embedding Anthropic’s model in Amazon’s cloud infrastructure stack. The dependency creates channel reach but reduces Anthropic’s pricing autonomy and strategic independence in proportion to AWS revenue concentration.

Distribution Velocity Constraint. Anthropic’s safety-first grammar produces slower deployment velocity than OpenAI’s consumption-first grammar. The adaptation velocity score of 0.69 — twenty-three points below OpenAI’s 0.92 — quantifies the velocity gap. Pre-deployment evaluation requirements, staged rollout protocols, and selective partnership criteria each reduce the speed at which Anthropic reaches consumption scale. The constraint is intentional and is the mechanism that produces safety differentiation — but it is also the mechanism that allows OpenAI to compound behavioral defaults faster.

Consumer Interface Absence. Anthropic has no consumer product at the scale of ChatGPT. The absence means Anthropic does not generate the consumer behavioral defaults that create enterprise pull — limiting the enterprise-to-consumer bleed to the Microsoft mechanism. Anthropic’s value capture path does not include consumer-to-enterprise bleed and depends entirely on enterprise trust accumulation without a consumer default-setting mechanism to accelerate it.

Behavioral Signature

Anthropic exhibits three consistent behavioral patterns: capability release timed to safety evaluation completion rather than competitive pressure (producing slower but more defensible deployment); enterprise partnership selection based on deployment context risk rather than revenue potential (producing higher-quality customer concentration); and policy engagement as a strategic positioning tool rather than a regulatory management afterthought. The commitment score of 0.71 reflects cautious but genuine commitment — Anthropic’s deployment signaling is not delay-dominant in the Google sense; it is precision-bounded by safety evaluation requirements.

Installed Cognitive Grammar — Safety-First, Precision-Deployment

Anthropic processes strategic decisions through a safety-first sequence: evaluate capability against alignment criteria, deploy selectively to enterprise contexts where alignment reliability commands premium pricing, use enterprise trust accumulation to expand the deployment envelope, engage policy processes to establish safety standards that institutionalize Anthropic’s positioning advantages. The grammar produces precision positioning over consumption scale. The policy engagement strategy has a specific structural function: if governments and enterprises adopt safety certification frameworks that open-weight models cannot meet at the required evaluation depth, the commoditization floor for high-stakes deployment rises — which is the structural outcome Anthropic’s policy strategy is designed to produce.

Failure Mode Sequence

Primary — Consumer Layer Missed. Anthropic’s grammar does not generate consumer behavioral defaults. If the value capture battleground moves to the consumer interface layer before Anthropic’s enterprise trust architecture compiles enough pricing premium to sustain independent investment, Anthropic’s path to value capture narrows to the regulated enterprise sector — a real but bounded market.

Secondary — Open-Weight Safety Compression. Open-weight model development increasingly incorporates alignment techniques (RLHF, constitutional AI approaches) that reduce the safety differentiation gap. If open-weight models reach safety certification parity in regulated enterprise contexts before Anthropic’s policy engagement institutionalizes higher certification standards, the precision positioning premium erodes from the same direction as the raw capability premium.

CDT COMPRESSION — ANTHROPIC

Trust-optimized intelligence provider without distribution control — building enterprise positioning through safety differentiation that commands premium pricing under concentration but narrows in value if commoditization arrives before the trust architecture generates irreversible enterprise lock-in.

VI. The Governing Variable Revisited — Intelligence Layer Stakes

Installment I established that Apple’s strategic choices feed back into the governing variable: passive continuation by platform incumbents signals that the platform layer will absorb dependency costs rather than contest them, which reduces competitive pressure on frontier providers to commoditize and increases the structural reward for concentration. Installment III adds the intelligence layer’s own feedback mechanism: intelligence layer providers’ competitive strategies actively shape whether the variable resolves toward concentration or commoditization.

OpenAI’s consumption scale strategy deepens platform incumbent dependency — which signals to the market that frontier model pricing power is sustainable. Microsoft’s enterprise infrastructure strategy creates a different feedback mechanism: Azure’s dominance as AI compute infrastructure means that open-weight model adoption still generates Azure revenue, partially neutralizing the commoditization scenario for Microsoft’s value capture. Anthropic’s policy engagement produces a third feedback effect: safety certification requirements that open-weight models cannot meet in regulated contexts raise the commoditization floor for high-stakes deployment.

VII. CDT Foresight Predictions — 12 to 36 Months

The following ten predictions are generated by MAP CDT execution across all six institutional CDTs simultaneously. Each prediction carries a probability assignment, a defined time window, and an observable falsification signal. Predictions one through four target system-level dynamics. Predictions five through ten target institution-specific behavioral sequences.

CDT FORESIGHT PREDICTIONS — 12 TO 36 MONTHS

1. ChatGPT session time exceeds the aggregate engagement time of the top five iOS apps for two consecutive quarters, marking the displacement of app-native surfaces as the primary consumer interaction model — P=0.82 | Observable: Sensor Tower or SimilarWeb data showing ChatGPT session time surpassing the top-5 iOS app aggregate in two sequential quarterly reports

2. Microsoft embeds Copilot as default enterprise interface across the full productivity stack — P=0.80 | Observable: Office 365 enterprise contracts include Copilot as non-optional default in new renewals

3. Device incumbents lose direct control over AI monetization pathway in at least two major product categories — P=0.76 | Observable: Apple or Samsung reports AI revenue attributed to third-party provider rather than platform-native model

4. OpenAI or Anthropic announces a flagship API tier price reduction exceeding 30 percent within 18 months, citing open-weight competitive pressure as the stated rationale — P=0.73 | Observable: Official pricing page update with stated percentage reduction and competitor-benchmarking language in the accompanying announcement

5. OpenAI launches persistent cross-device identity layer replacing app-based interaction as the primary interface model — P=0.75 | Observable: ChatGPT account becomes the authentication and routing layer across three or more non-OpenAI device surfaces

6. Meta drives model pricing toward marginal cost through sustained open-weight releases at frontier-approaching capability — P=0.78 | Observable: Enterprise AI RFP language cites Llama or open-weight alternatives as pricing floor in vendor negotiations

7. Anthropic secures dominant position in two or more regulated enterprise verticals before consumer AI layer resolves — P=0.70 | Observable: Anthropic announces exclusive or preferred provider contracts with two major regulated-sector institutions

8. Google restructures monetization architecture to reduce advertising dependence in AI-facing product lines — P=0.55 | Observable: Google reports AI subscription or API revenue exceeding 15 percent of total revenue in any quarter

9. Enterprise AI procurement consolidates so that Microsoft plus one frontier lab account for 60 percent or more of reported enterprise AI spend, as measured by two independent procurement surveys in the same calendar year — P=0.68 | Observable: Gartner or Forrester enterprise AI spend survey showing two-provider concentration at or above 60 percent threshold

10. Mistral anchors EU sovereign AI initiatives and secures preferred status in at least three member state AI programs — P=0.60 | Observable: EU member state government contract awards naming Mistral as primary or co-primary AI provider
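The prediction format above, a probability paired with an observable falsification signal, lends itself to simple forecast bookkeeping. The sketch below records the ten stated probabilities and shows how they could be scored once outcomes are known. Brier scoring is a standard forecast-evaluation technique used here for illustration, not a method the series describes; the `Prediction` class and the short labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Prediction:
    number: int
    label: str                      # hypothetical short label, not the series' wording
    probability: float              # stated probability from the list above
    outcome: Optional[bool] = None  # None until the observable resolves


PREDICTIONS = [
    Prediction(1, "ChatGPT engagement displacement", 0.82),
    Prediction(2, "Copilot default enterprise interface", 0.80),
    Prediction(3, "Device incumbents lose AI monetization control", 0.76),
    Prediction(4, "Flagship API price cut over 30 percent", 0.73),
    Prediction(5, "OpenAI cross-device identity layer", 0.75),
    Prediction(6, "Meta drives pricing toward marginal cost", 0.78),
    Prediction(7, "Anthropic regulated-vertical position", 0.70),
    Prediction(8, "Google monetization restructuring", 0.55),
    Prediction(9, "Enterprise procurement consolidation", 0.68),
    Prediction(10, "Mistral anchors EU sovereign AI", 0.60),
]


def brier_score(predictions):
    """Mean squared error between stated probability and resolved outcome (0 or 1).

    Unresolved predictions are skipped; returns None if nothing has resolved.
    Lower is better; always answering 0.5 would score 0.25.
    """
    resolved = [p for p in predictions if p.outcome is not None]
    if not resolved:
        return None
    return sum((p.probability - float(p.outcome)) ** 2 for p in resolved) / len(resolved)


def expected_hits(predictions):
    """Number of predictions expected to come true if the probabilities are calibrated."""
    return sum(p.probability for p in predictions)
```

With no outcomes recorded, `brier_score(PREDICTIONS)` returns `None`. `expected_hits(PREDICTIONS)` sums to roughly 7.17, so a well-calibrated forecast set at these probabilities would see about seven of the ten observables materialize within their windows.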

Closing — The Intelligence Layer’s Unresolved Geometry

Three installments have mapped the same governing variable across two tiers of the AI platform stack. At the platform incumbent tier, the finding is structural drift: Apple toward dependency, Samsung toward constrained internalization, Google toward structural asymmetry that makes resolution irrelevant. At the intelligence layer tier, the finding is competitive divergence: three distinct value capture architectures executing simultaneously, each betting that the governing variable resolves in the direction that favors its constraint stack.

The intelligence layer is no longer upstream support for devices. Intelligence is becoming the interaction surface itself. Coordination authority, not hardware distribution, determines value capture. The FGR Vision quantifies the geometry shift: model-layer attractor dominance scores (OpenAI: 0.88, Microsoft: 0.84) exceed any device-layer attractor that platform incumbents can currently deploy. The firms that control routing between user intent and computational execution absorb margin. The rest become infrastructure or distribution shells.

The series thesis resolves: the battle is not device versus device. The battle is interface versus intelligence. The CDT Foresight Simulations assign 85 percent probability to outcomes in which intelligence layer institutions — not device incumbents — capture the margin that the AI transition generates. The 15 percent commoditized infrastructure scenario is the only outcome in which device incumbents recover coordination authority — and it is the scenario that requires Meta’s open-weight strategy to succeed faster than any current trajectory projects.

The intelligence layer’s unresolved geometry is not a temporary condition. The geometry is the product of structural positions that each institution is actively reinforcing. OpenAI deepens platform incumbent dependency to make its API position more costly to replace. Microsoft embeds Copilot more deeply into enterprise workflows to make its infrastructure position more costly to exit. Anthropic engages policy processes to make safety certification more costly to circumvent. Each strategy, if successful, produces a different equilibrium at the intelligence layer — and the three equilibria are not compatible. The governing variable will resolve. The CDT Foresight Simulations provide the observable signals that indicate which resolution is compiling. No institution in the device layer currently exhibits the adaptation velocity or coordination leverage required to reverse the geometry shift within the modeled horizon.

FOR INVESTORS

The series’ three-installment arc produces a single structural insight for portfolio construction: the AI platform market is a layered system in which value capture architecture differs by tier. Platform incumbents (Apple, Samsung) are the dependency tier — value preservation depends on internalization velocity or commoditization. Intelligence layer providers (OpenAI, Microsoft, Anthropic) are the capture tier — value accrual depends on the governing variable’s resolution direction and each institution’s structural resilience to the adverse scenario. Google occupies both tiers simultaneously, which is why Installment II classified its dual-position asymmetry as the series’ central structural finding. Portfolio construction that treats platform incumbents and intelligence layer providers as the same competitive category is mispricing the layered structure that determines where margin accrues as the governing variable resolves.

FOR CORPORATE STRATEGY

The closing frame for corporate strategists is a threshold monitoring framework. Each CDT Foresight Simulation identifies the observable signals that indicate the internalization threshold is approaching irreversibility for a given platform incumbent. Corporate strategists at platform incumbents should map those signals against their own AI roadmap milestones and calculate the remaining window before the threshold is crossed. The signals are falsifiable: if they do not materialize on the predicted timeline, the threshold has not moved as projected and the internalization window remains open. If they do materialize, the behavioral grammar of the intelligence layer provider, not the platform incumbent’s strategy team, is setting the competitive geometry. Intelligence is winning.