MCAI Innovation Vision: The AI-Quantum Closed-Open-Hybrid Architecture Problem

How the United States Must Design Its AI Innovation System Now to Compete at the Quantum-AI Phase Boundary

Executive Summary

Stanford Institute for Human-Centered Artificial Intelligence Fellow Alvin Wang Graylin’s Beyond Rivalry: A US-China Policy Framework for the Age of Transformative AI provides a clear entry point into how emerging technologies scale and distribute power across platforms. His analysis captures the surface layer of competition in extended reality and artificial intelligence by focusing on control, interoperability, and ecosystem design. MindCast’s The AI Duel of America’s Chaotic Advantage vs. China’s Disciplined Coordination established that the competition ultimately turns on adaptability rather than scale — that America’s pluralist chaos generates discovery advantage, and China’s disciplined coordination generates deployment advantage, and neither dominates universally. The analysis here develops the architectural framework underneath that finding: specifically, how the United States must design its AI innovation system now while simultaneously building toward the quantum-AI coupling that will arrive within 3–5 years and require a fundamentally different architecture.

The United States does not have an architecture problem in AI research. It has an architecture problem in AI system design — specifically, the absence of a coherent framework that innovation leaders across government, capital, universities, and industry consortiums share for deciding when AI development should operate as an open system, when it should operate as a closed system, and when it requires a hybrid structure that converts discovery into deployment. The incoherence is manageable today. With quantum-AI coupling approaching, it becomes a phase-transition risk that determines competitive positioning at the next technology boundary.

Quantum-AI coupling is 3–5 years out. Architecture decisions made during the current AI phase will determine whether the United States enters that coupling with a coordinated hybrid system capable of managing the transition — or with a fragmented innovation landscape that China’s coordinated deployment architecture will outpace at every boundary.

Three variables determine optimal architecture at any moment: placement in the innovation stack (P), timing within the phase cycle (T), and innovation risk profile (R). Together they define system bias — the pull toward openness or closure — expressed as S = f(P, T, R). Open and closed systems are phase-specific tools; mis-sequencing them produces irreversible disadvantage. The United States currently operates with misaligned S values across its innovation ecosystem: open where coordination is needed, fragmented where hybrid aggregation would capture value, and absent at the control layer that quantum-AI integration will require. Correcting that misalignment requires different actions from three distinct audiences — federal policy makers, institutional innovation leaders, and capital allocators. Each section translates architecture into decisions for policy, capital, and institutional design.

One mechanism governs all three: feedback latency, the master variable behind every outcome described here. Systems that reduce feedback latency faster will dominate regardless of scale, capital, or initial capability, because the faster system converts discovery into deployment before the slower system can coordinate a response. Once capital intensity crosses the switching threshold, open systems cannot coordinate fast enough to retain advantage. The current window exists because infrastructure has not yet locked; once locked, architecture becomes constraint, not choice. When aggregation fails domestically, the United States’ open discovery system functions as an upstream input to China’s coordinated deployment system. That is a description of the present condition, not a forecast.

I. The US-China Architectural Asymmetry

Systems that misalign architecture at phase boundaries do not lose gradually — they lose irreversibly. Infrastructure positions established during the wrong phase persist. Coordination deficits compound. Discovery advantages that took decades to build become subsidies for competing deployment systems once the phase boundary arrives and the misaligned system cannot convert signal into action fast enough to hold its position. Architecture determines competitive outcome before capability does, and the United States and China are not in equivalent positions at the current AI phase boundary.

The US Innovation Architecture: Discovery Strength, Coordination Failure

American AI development operates as a distributed open system at the research layer and an uncoordinated collection of closed systems at the infrastructure layer, with no reliable hybrid mechanism connecting the two. MindCast’s prior analysis — America’s Chaotic Advantage vs. China’s Disciplined Coordination — documented how America’s pluralism converts disorder into discovery: universities generate foundational research under open-publication norms, startups iterate rapidly across application spaces with venture backing, and the collective signal output of this ecosystem exceeds what any centrally directed system could generate. Variance is high, experimentation is rapid, and talent self-selects toward the problems it finds most tractable.

The coordination layer is absent. Federal AI policy is distributed across the Department of Energy, the Department of Defense, the National Institute of Standards and Technology, the National Science Foundation, and the Office of Science and Technology Policy — each operating with its own framework, funding logic, and institutional priorities. No common architectural vocabulary exists across these agencies.

The National AI Research Resource — the most significant attempt to build a shared AI research infrastructure — operates as a data-sharing platform without the coordination logic that converts data sharing into deployment advantage. Industry consortiums apply incompatible models of what open, closed, and hybrid systems should look like in practice. The gap between these positions is not bridged by any systematic aggregation mechanism.

The United States generates more discovery-phase signal than any other national system. The problem is not signal generation — it is the absence of the hybrid aggregation architecture that converts signal into competitive advantage at the deployment layer.

The Chinese Innovation Architecture: Deployment Strength, Discovery Constraint

Chinese AI development operates as a coordinated deployment system with selective access to open discovery. The New Generation Artificial Intelligence Development Plan, issued by China’s State Council in 2017, established a staged roadmap to make China the primary global AI innovation center by 2030 — not through distributed experimentation but through state-directed capital concentration in infrastructure, industrial application, and manufacturing integration. When American researchers publish foundational architectures, Chinese deployment pipelines absorb those discoveries and scale them faster than fragmented American commercialization can match.

The structural constraint is discovery capacity. Centralized signal filtering reduces variance by design. Research directions that diverge from state-priority areas receive less funding and carry career risk. Export controls on advanced semiconductors and AI hardware have accelerated China’s domestic quantum and chip investment — but also narrowed the signal diversity available to Chinese researchers by reducing access to international collaboration. China’s architectural strength at the deployment layer does not compensate for this constraint as the frontier moves toward domains where discovery capacity determines who reaches the next phase boundary first.

The Asymmetry at the Quantum-AI Phase Boundary

Quantum-AI coupling amplifies the asymmetry in both directions simultaneously. American open-system strength at the quantum research layer — sustained through the National Quantum Initiative and its network of national laboratories, universities, and industry partners — gives the United States a discovery advantage that China cannot quickly replicate through state-directed investment alone. Quantum error correction, qubit architecture diversity, and AI-assisted quantum optimization all benefit from the high-variance experimentation that distributed open research produces.

Chinese coordinated deployment strength positions China to build the quantum-AI infrastructure layer faster once target architectures are established. China’s National Venture Capital Guidance Fund — a 1 trillion yuan ($138 billion) state-backed vehicle targeting quantum computing, AI, and semiconductors — demonstrates the scale differential between coordinated and fragmented infrastructure investment. The US-China Economic and Security Review Commission’s 2025 annual report warns explicitly that China’s state-supported quantum programs pose growing strategic risks and that early US action is needed to prevent long-term disadvantage.

The nation that couples quantum capability to AI inference infrastructure at scale captures the deployment advantage — and that coupling requires the coordination discipline the United States currently lacks. The United States currently subsidizes China’s deployment layer through uncoordinated openness at the discovery layer: American researchers generate the signal, Chinese deployment architecture closes the feedback loop faster and captures the value.

MindCast’s Global Innovation Trap quantifies this dynamic precisely: China’s national Coordination Capacity Coefficient (CCC) — a measure of how well policy intent, investment, and execution align — scores 0.63 against the US at 0.47. China converts leaked capability into deployable systems at 1.8–2.5x US speed, and advantage windows across AI and semiconductors have compressed from 8–10 years to 2–4 years through leakage channels that operate through remote compute access, talent mobility, and third-country routing — not espionage. The feedback latency gap between the two systems is the quantitative measure of how much advantage is transferring with each phase cycle.

II. The S = f(P, T, R) Framework: Reading the Current Position

Optimal system architecture is not a fixed choice between open and closed — it is a function of three variables that change as technology evolves. Placement in the innovation stack (P), timing within the phase cycle (T), and innovation risk profile (R) jointly determine system bias (S): the directional pull toward openness or closure at any moment. Misreading any of the three variables produces architecture misalignment — and misalignment at a phase boundary carries compounding costs.

S = f(P, T, R)

P — Placement: proximity to the research layer increases optimal openness; proximity to infrastructure increases optimal closure.

T — Timing: early-phase positioning favors open architectures that maximize signal diversity; late-phase positioning favors closed architectures that minimize coordination cost.

R — Risk: high uncertainty favors open exploration; high capital cost or strategic security constraints favor closed execution.

The operational rule follows directly: open weight increases with discovery value and uncertainty; closed weight increases with coordination cost and execution risk; hybrid structures emerge when signal must convert into decision under constraint. A system that applies closed logic during discovery suppresses variance and misses breakthroughs. A system that applies open logic during deployment fragments execution and loses infrastructure positions to better-coordinated competitors.

The switching condition that governs when system bias shifts is precise: system bias moves from open toward closed when coordination cost multiplied by capital intensity exceeds marginal discovery value. Below that threshold, openness compounds returns. Above it, openness compounds fragmentation. The United States currently operates above the threshold at the AI deployment layer and below it at the quantum research layer — which means the prescriptions for each layer are structurally different, not matters of preference.
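The switching condition can be written as a one-line comparison. The sketch below is illustrative only: the `LayerEstimate` type, the function names, and every number are assumptions introduced here, not values from MindCast’s analysis. It encodes only the stated rule that bias shifts toward closure when coordination cost multiplied by capital intensity exceeds marginal discovery value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerEstimate:
    """Hypothetical, unitless estimates for one layer of the innovation stack."""
    name: str
    coordination_cost: float         # cost of coordinating independent actors
    capital_intensity: float         # capital required per unit of progress
    marginal_discovery_value: float  # value of one more unit of open exploration


def switching_pressure(layer: LayerEstimate) -> float:
    """Left-hand side of the switching condition: coordination cost x capital intensity."""
    return layer.coordination_cost * layer.capital_intensity


def bias_direction(layer: LayerEstimate) -> str:
    """Return 'closed' when the switching condition is met, otherwise 'open'."""
    if switching_pressure(layer) > layer.marginal_discovery_value:
        return "closed"
    return "open"


# Illustrative numbers only; they are assumptions, not measurements from the paper.
ai_deployment = LayerEstimate("AI deployment", 0.8, 0.9, 0.4)
quantum_research = LayerEstimate("quantum research", 0.3, 0.5, 0.7)

for layer in (ai_deployment, quantum_research):
    print(f"{layer.name}: bias shifts toward {bias_direction(layer)}")
```

With these assumed inputs the AI deployment layer sits above the threshold and the quantum research layer below it, matching the qualitative reading in the text. The point of the sketch is that the two layers can receive opposite prescriptions from the same rule.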

Open systems maximize discovery surplus: in Coase’s terms, they reduce the transaction costs of combining distributed knowledge across institutional boundaries. Closed systems enforce incentive alignment: in Becker’s terms, they concentrate returns to produce coordinated action where coordination costs would otherwise prevent it. Hybrid systems enable institutional learning and correction: in Posner’s terms, they preserve the feedback mechanisms that allow architecture to update as conditions change. The S = f(P, T, R) framework operationalizes this intellectual lineage: discovery surplus, incentive alignment, and institutional learning each dominate a different phase of the innovation cycle, and the system that sequences them correctly wins. These frameworks converge on the same result: coordination failure emerges when no actor internalizes the cost of building the aggregation layer, and the system that builds it first captures the feedback loop advantage that compounds across every subsequent phase.

MindCast’s Chicago School Accelerated: The Integrated, Modernized Framework of Chicago Law and Behavioral Economics develops the Coase-Becker-Posner system as a composite analytical architecture, specifying the conditions under which each pillar’s predictions hold and when they fail under coordination-dependent markets. The Chicago School Accelerated applies that architecture directly to the AI infrastructure stack, establishing that coordination collapses at technology speed while doctrine corrects at litigation speed, the same temporal mismatch that generates the US hybrid aggregation deficit this paper analyzes. The Nash-Stigler Equilibria: A Dual-Termination Architecture and Runtime Geometry: A Framework for Predictive Institutional Economics formalize Nash equilibrium as the behavioral settlement condition and Stigler equilibrium as the inquiry sufficiency condition, together establishing when institutional systems lock and when they require external force to reset. The architecture problem this paper diagnoses is a Nash-Stigler equilibrium in institutional design: the hybrid aggregation deficit persists because no single actor can capture enough of its value to justify building it alone. The benefit is shared and the cost is private. No actor builds the aggregation layer because no actor can fully internalize its returns, and the absence of a shared architectural vocabulary prevents the coordination that would produce it.

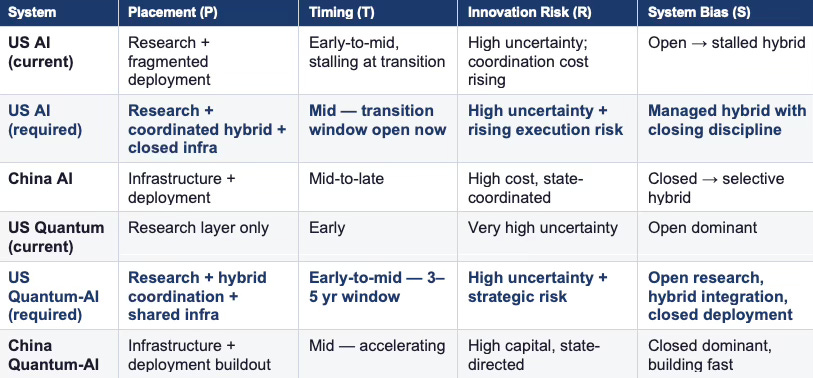

Current and Required US Positions

The bolded rows represent required positions — what US AI architecture and US quantum-AI architecture need to look like to compete effectively at the current phase boundary and at the quantum-AI coupling boundary 3–5 years out. At the AI layer, the US needs a managed hybrid with closing discipline — not full closure, but a coordinated aggregation mechanism that routes discovery-phase signals toward deployment-phase execution. At the quantum-AI layer, the US needs a three-zone architecture: open research preserved at the discovery layer, hybrid coordination built now for integration, and closed deployment infrastructure established before China locks in the infrastructure positions that quantum-AI coupling will require.

The system that closes feedback loops faster will dominate regardless of whether it is open or closed. Open systems generate signal. Closed systems execute decisions. Hybrid systems — when designed correctly — convert signal into decision faster than either pure structure can. That feedback loop speed is the single variable that determines outcome across US-China competition, AI ecosystem phase transitions, and quantum-AI infrastructure positioning simultaneously. Every recommendation in this paper traces back to the same imperative: close the feedback loops faster, or the architecture advantage compounds on the wrong side.

III. The Incoherence Problem: Why Innovation Leaders Cannot Agree

Fragmented federal policy and incompatible institutional frameworks are symptoms of a deeper problem: American innovation leaders do not share a common model of what open, closed, and hybrid systems are for, when each is appropriate, or how to sequence transitions between them. Without that shared framework, policy debates reduce to ideological positions — open-source advocates arguing for openness on principle, national security agencies arguing for closure on principle, and capital allocators optimizing for near-term returns without accounting for phase-transition costs.

Federal Policy Fragmentation

No federal agency owns the architecture question. The Department of Energy funds quantum research through national laboratories operating under open-science norms. The Department of Defense funds AI capability development under varying classification levels that create incompatible collaboration boundaries. NIST develops AI safety and standards frameworks without coordinating with DOE quantum investments. NSF funds foundational research with open-publication requirements that conflict with DOD’s classification logic. The Office of Science and Technology Policy (OSTP) attempts cross-agency coordination without enforcement authority.

Export controls illustrate the fragmentation most clearly. Controls on advanced AI chips and quantum computing components reflect closed-system logic applied at the technology transfer layer — a reasonable response to deployment-phase security concerns. The same controls reduce international research collaboration that serves open-system discovery. A coherent architecture framework would distinguish between controlling deployment-layer technology transfer and preserving discovery-layer collaboration. The NIST Post-Quantum Cryptography Standardization process illustrates what coordinated architecture execution looks like: an eight-year program that finalized FIPS 203, 204, and 205 in August 2024 through disciplined hybrid coordination across agencies and the global cryptographic community. That model has not been replicated at the AI innovation layer, where fragmentation persists.

Institutional Incoherence

Universities, industry consortiums, and innovation intermediaries apply incompatible frameworks because no common vocabulary exists for the architecture question. A university technology transfer office optimizing for open publication norms produces different architecture outcomes than a hyperscaler optimizing for closed infrastructure control, which produces different outcomes than a national laboratory optimizing for classified capability development, which produces different outcomes than a venture-backed startup optimizing for rapid open iteration followed by platform lock-in. Each logic is internally coherent. Collectively they produce an ecosystem where the same discovery can produce five different deployment outcomes depending on which institutional pathway captures it first.

The incoherence is not a policy failure that better regulation can fix — it is an architectural vocabulary failure. Innovation leaders cannot coordinate effectively around a framework they do not share. Establishing that shared framework is the prerequisite for every prescriptive action that follows.

IV. The Internet Precedent and Its Limits

Every major technology transition produces a playbook from the previous one. The internet precedent — open protocols enabling discovery, private-sector closure capturing deployment value, hybrid structures managing the transition — shapes how American innovation leaders think about AI architecture today. The playbook is instructive but incomplete, and applying it without modification to the quantum-AI coupling problem produces strategic errors at the most critical junctures.

The early internet, which grew out of ARPANET, was built on open protocols such as TCP/IP, SMTP, and later HTTP, because the primary constraint on early internet development was discovery: maximizing the number of compatible participants, applications, and use cases that could emerge from a shared technical foundation. That openness generated the variance that produced the commercial internet. As deployment scaled, private infrastructure enclosed the application layer, platform companies consolidated distribution, and cloud providers captured compute and storage. Closed systems accumulated above open foundations, and the economic value of the open layer was captured by the closed systems built on top of it.

The AI transition follows this pattern but compresses the timeline by an order of magnitude and raises the capital threshold by several orders. Open research protocols — published architectures, shared weights, academic collaboration — enabled the discovery phase. Capital intensity drove hybrid transition. Hyperscaler infrastructure is driving closed-system dominance at the deployment layer. American firms that built the open internet layer and then captured the closed deployment layer are replicating that structural play in AI, and doing it faster than internet-era incumbents moved.

The internet precedent breaks down at quantum-AI coupling for one critical reason: national security stakes did not apply at the same level during internet commercialization. Quantum-AI coupling involves cryptographic infrastructure that determines the security of military communications, financial systems, and critical infrastructure. Post-quantum encryption deployment, quantum-secured communication networks, and AI-accelerated cryptanalytic capability all carry national security implications that require state involvement in architecture decisions — not as a constraint on discovery, but as a coordination mechanism for the closed deployment layer that quantum-AI integration requires.

V. Phase Transition Dynamics: What the US Must Navigate

Three transitions determine competitive positioning at the quantum-AI coupling boundary, and the United States must navigate all three simultaneously within the same 3–5 year window. Each transition requires a different architectural response, and the responses must be sequenced correctly — building the hybrid aggregation layer before attempting deployment closure, establishing research openness protection before export controls narrow collaboration further, and creating the coordination infrastructure for quantum-AI integration before China locks in the infrastructure positions that coupling will require.

Transition One: AI Discovery to Hybrid Coordination

American AI development currently generates high-variance discovery output and exports much of the deployment value to better-coordinated systems. The National AI Research Resource represents an attempt at the hybrid aggregation function — launched as a pilot in January 2024 and now supporting more than 600 research projects across 50 states — but without a shared architectural framework across its institutional participants, it operates as a data-sharing infrastructure without the coordination logic that converts data sharing into deployment advantage.

Effective hybrid aggregation at the AI layer requires three components the United States has not yet assembled: a common evaluation framework that allows research outputs from universities, labs, and startups to be compared against consistent criteria; a routing mechanism that directs high-priority outputs toward capital and infrastructure partners without requiring bilateral negotiation at each handoff; and feedback channels that carry deployment-layer signals back to the discovery layer so that research priorities reflect where execution gaps exist.
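The three components can be sketched as a minimal pipeline. Everything below is hypothetical and introduced only for illustration: the criteria names, the weights, the 0.6 routing threshold, and the class and function names are assumptions, not a description of the National AI Research Resource or of any existing system.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ResearchOutput:
    title: str
    source: str               # e.g. "university", "lab", "startup"
    scores: dict[str, float]  # criterion name -> score in [0, 1]


# Component 1: a common evaluation framework (hypothetical criteria and weights).
CRITERIA_WEIGHTS = {"technical_readiness": 0.5, "deployment_relevance": 0.3, "novelty": 0.2}


def evaluate(output: ResearchOutput) -> float:
    """Score any output against the same weighted criteria, whatever its source."""
    return sum(w * output.scores.get(c, 0.0) for c, w in CRITERIA_WEIGHTS.items())


# Component 2: a routing mechanism that applies one fixed rule at every handoff,
# so no bilateral negotiation is needed. The threshold is an assumed value.
def route(output: ResearchOutput, threshold: float = 0.6) -> str:
    return "deployment_partner" if evaluate(output) >= threshold else "continued_research"


# Component 3: a feedback channel carrying deployment-layer gaps back to discovery.
@dataclass
class FeedbackChannel:
    gaps: dict[str, int] = field(default_factory=dict)

    def report_gap(self, topic: str) -> None:
        self.gaps[topic] = self.gaps.get(topic, 0) + 1

    def research_priorities(self) -> list[str]:
        """Topics ordered by how often deployment teams reported them."""
        return sorted(self.gaps, key=lambda t: -self.gaps[t])


strong = ResearchOutput("error-correction decoder", "university",
                        {"technical_readiness": 0.8, "deployment_relevance": 0.9, "novelty": 0.4})
weak = ResearchOutput("speculative architecture", "startup",
                      {"technical_readiness": 0.2, "deployment_relevance": 0.3, "novelty": 0.9})

channel = FeedbackChannel()
channel.report_gap("cryogenic control integration")
channel.report_gap("cryogenic control integration")
channel.report_gap("model evaluation")

print(route(strong), route(weak), channel.research_priorities())
```

The design choice worth noting is that evaluation and routing share one rule set across all sources, which is what removes the per-handoff negotiation, while the feedback channel runs in the opposite direction so that discovery priorities can track execution gaps.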

Transition Two: Hybrid AI to Quantum-AI Integration

Quantum-AI integration requires a hybrid architecture that does not yet exist at the required scale. AI-assisted quantum optimization — using machine learning to improve qubit calibration, error correction, and circuit design — is already producing results in laboratory settings. Scaling those results to production quantum hardware requires integration infrastructure that spans AI inference systems, quantum processors, classical computing, and cryogenic control systems. No single institution has the full stack.

The 3–5 year timeline for meaningful quantum-AI coupling means the hybrid coordination infrastructure for this transition must begin construction now. The National Quantum Initiative has established funding channels and research centers, but has not built the evaluation and routing mechanisms that convert research output into deployment-ready integration architecture. China’s 14th Five-Year Plan explicitly couples quantum and AI investment in a coordinated infrastructure buildout — expanding references to quantum from two appearances in the 13th Five-Year Plan to a central pillar of the 14th, with quantum computing, communications, and sensing integrated into the same policy framework as AI infrastructure.

Transition Three: Quantum-AI Deployment to Control Layer

Post-quantum cryptography deployment and quantum-secured communications represent the control-layer transition that the US national security architecture requires. NIST finalized FIPS 203, 204, and 205 in August 2024 — the culmination of an eight-year standardization process — and immediately urged system administrators to begin transitioning. Federal agencies operate on incompatible cryptographic systems with different migration timelines. Critical infrastructure operators have no coordinated upgrade mandate. The control-layer transition that quantum-AI coupling requires is not primarily a technical problem at this stage — US architectural incoherence makes it a coordination problem that grows systematically harder to solve.

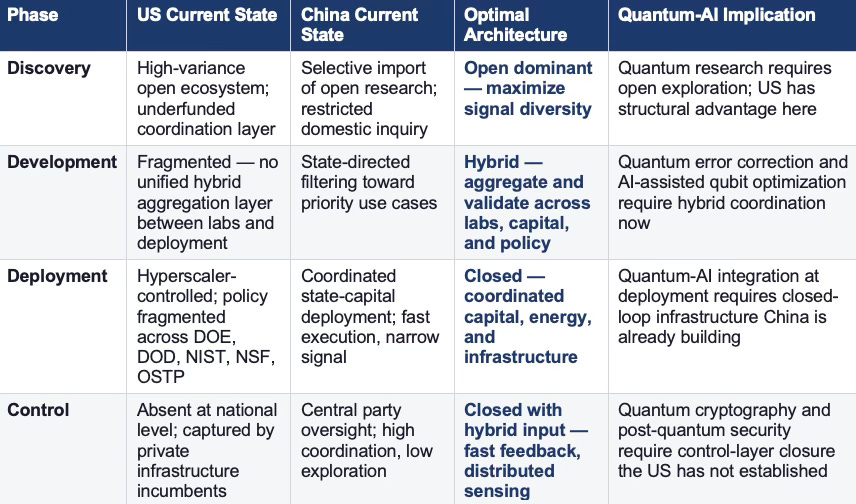

The table below maps all three transitions against the phase position of each national system:

VI. Failure Modes the US Must Avoid

Architectural incoherence at a phase boundary does not fail gradually — it fails catastrophically when the boundary arrives and the system discovers it cannot coordinate fast enough to hold its position. Three failure modes are particularly acute for the United States given its current architectural position, and each is already visible in nascent form.

Signal Saturation Without Aggregation

Open systems fail when signal volume overwhelms decision capacity and no aggregation mechanism exists to filter, prioritize, and route high-value signals toward productive development pathways. American AI research currently produces more publishable output than any institutional deployment pipeline can absorb. The response has been organic consolidation: a small number of well-capitalized labs capture the most commercially significant research outputs while the broader academic ecosystem generates signals that do not reliably connect to deployment infrastructure. Organic consolidation is not the same as designed aggregation. It produces closure without coordination: the system keeps paying the diversity costs of open systems while taking on the blind-spot risks of closed systems.

At the quantum-AI layer, signal saturation is not yet a problem because the research community is still small relative to the scope of open questions. Building the aggregation architecture before saturation arrives — rather than after, as happened in AI — is the correct sequencing. MindCast’s Bottleneck Hierarchy in US AI Data Centers established the causal logic: energy is the systemic determinant, networking sets the scale ceiling, and cooling is the execution filter — and firms that secure all three layers simultaneously outperform those that optimize any single layer in isolation. The same hierarchy applies to quantum-AI integration: quantum hardware, orchestration software, and cryogenic-to-digital interface infrastructure must be built as a coordinated system rather than sequentially, or the aggregation architecture arrives too late to shape the coupling.

Premature Closure at the Discovery Layer

Export controls, classification requirements, and national security frameworks create closed-system pressure at the discovery layer that suppresses the variance the United States most needs to preserve. Restricting international research collaboration in AI and quantum narrows the signal diversity that generates American discovery advantage. CSIS analysis of China’s quantum advancement documents that China’s response to export controls has been to accelerate domestic chip and quantum investment — with national laboratories, government-backed quantum computing centers, and a 1 trillion yuan venture fund all mobilized in response. MindCast’s Global Innovation Trap establishes the mechanism more precisely: the third-country leakage mesh — Malaysia, Thailand, Indonesia, UAE, Singapore — functions as a self-healing network that re-routes hardware and compute access within weeks when any single corridor tightens. Supply-side restriction stimulates parallel development more reliably than it retards overall progress, and access-layer controls targeting deployment-layer transfer are the only scalable defense — not discovery-layer closure.

MindCast’s Quantum Computing Sovereignty analysis identifies an additional mechanism at work: incumbents patent into forced engineering trajectories before entrants experience them as exclusion — a dynamic MindCast terms Forward Constraint Compounding (FCC). At the discovery layer, premature closure interacts with FCC to produce a compounding trap: export controls narrow the engineering trajectories available to US firms, incumbents patent into those narrowed trajectories, and entrants arrive at the coupling boundary to find both the physical pathway and the legal pathway pre-claimed. The correct architecture applies closed-system discipline at the deployment and control layers and preserves open-system architecture at the discovery layer — where variance is the primary asset and FCC has not yet activated.

Infrastructure Lock-In Without Architecture Alignment

In the absence of a coordinated national architecture, hyperscalers have set the deployment architecture by default. Their control of AI infrastructure is closed-system dominance at the deployment layer, and it arose not because hyperscalers designed it for national strategic purposes but because they were the only actors with the capital and execution capacity to build at scale when no coordination framework governed infrastructure investment. MindCast’s Quantum-Coupled AI Data Center Campus (2025–2035) established the stakes directly: by 2035, the corridors where AI computation meets quantum capability will function as the digital Suez Canals of the intelligence economy, and whoever controls the coupling layer controls the value flow. The result of current hyperscaler infrastructure decisions is a deployment layer that is efficient for today’s GPU-based workloads and misaligned with quantum-AI integration.

Quantum-classical hybrid processors require different infrastructure architectures than current GPU clusters. Cryogenic control systems impose physical proximity constraints, expressed as sub-4-microsecond latency thresholds, that cloud-distributed AI infrastructure does not accommodate. MindCast’s CDT simulation work on how quantum computing overcomes AI data center bottlenecks validated that hybrid quantum-classical architectures deliver 20–25% throughput gains and 25–35% energy savings, but only when power supply maintains consistent voltage profiles and quantum-classical separation stays within the coherence zone radius. Infrastructure built without those constraints in mind requires costly retrofitting rather than native integration.
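To make the proximity constraint concrete, the sketch below converts a round-trip latency budget into a maximum one-way fiber distance. It is a back-of-envelope physics check, not MindCast's coherence zone model: the fiber refractive index of 1.47 and the 3-microsecond overhead allowance are assumptions chosen for illustration, and the function name is hypothetical.

```python
# Back-of-envelope physics check; not a vendor specification.
SPEED_OF_LIGHT_M_PER_S = 299_792_458   # vacuum speed of light
FIBER_REFRACTIVE_INDEX = 1.47          # assumption: typical silica fiber


def max_one_way_distance_m(round_trip_budget_s: float,
                           fixed_overhead_s: float = 0.0) -> float:
    """Longest one-way fiber run that fits inside a round-trip latency budget.

    fixed_overhead_s is a hypothetical allowance for switching, serialization,
    and control electronics; whatever remains is available for propagation.
    """
    propagation_budget_s = round_trip_budget_s - fixed_overhead_s
    if propagation_budget_s <= 0:
        return 0.0
    signal_speed_m_per_s = SPEED_OF_LIGHT_M_PER_S / FIBER_REFRACTIVE_INDEX
    # Divide by two because the signal must travel out and back.
    return signal_speed_m_per_s * propagation_budget_s / 2


THRESHOLD_S = 4e-6  # the sub-4-microsecond threshold cited above

ideal_m = max_one_way_distance_m(THRESHOLD_S)
with_overhead_m = max_one_way_distance_m(THRESHOLD_S, fixed_overhead_s=3e-6)
print(f"propagation only: about {ideal_m:.0f} m")
print(f"with 3 us of assumed overhead: about {with_overhead_m:.0f} m")
```

Under these assumptions the budget allows roughly 400 meters of fiber with no overhead at all, and roughly 100 meters once most of the budget is spent on electronics. Either way the quantum and classical systems must share a building or campus, which is why region-scale cloud distribution cannot meet the threshold.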

MindCast’s Private Equity and Patent Litigation in AI Data Centers (2026–2028) documents that PE firms executing infrastructure roll-ups are already pricing this constraint into deal structures — incorporating freedom-to-operate opinions and defensive patent portfolio acquisitions as standard deal components, because the runtime causation timing is now widely enough understood that capital actors are pricing it even before the quantum-AI coupling boundary arrives. The United States has a 3–5 year window to establish quantum-AI infrastructure architecture before hyperscaler positions become so deeply embedded that quantum-AI integration must work around them rather than with them. Missing that window means inheriting an infrastructure constraint that China, building its quantum-AI integration infrastructure now with coupling in mind, will not face.

VII. Prescriptive Recommendations

Correcting the US architectural position requires coordinated action across three distinct audiences, each of which controls a different layer of the innovation system. Federal policy makers govern the coordination framework and the security architecture. Institutional innovation leaders — universities, national laboratories, industry consortiums, and research intermediaries — govern the hybrid aggregation layer. Capital allocators govern the infrastructure investment and deployment timing.

For Federal Policy Makers

Establish a shared architectural vocabulary across AI and quantum agencies: The Department of Energy, Department of Defense, NIST, NSF, and OSTP must operate from a common framework that distinguishes discovery-layer architecture decisions from deployment-layer architecture decisions. Export controls, classification requirements, and funding mandates should specify which layer they govern and apply closed-system logic only where coordination cost and security stakes justify it.

Separate export control logic by innovation layer: Controls on advanced AI chips and quantum computing components that target deployment-layer technology transfer are structurally sound. The same controls applied to discovery-layer research collaboration impose an unnecessary cost on America’s primary competitive advantage. MindCast’s Global Innovation Trap establishes the full policy lever architecture: compute-access licensing that regulates high-intensity AI training workloads rather than only hardware; identity-bound compute requiring verified workload identity for sensitive training runs; allied enforcement harmonizing export control and access governance with EU, Japan, Korea, and Taiwan; and beneficial ownership transparency mandating disclosure for distributors and joint-venture partners in third-country jurisdictions. These levers collectively reduce leakage at the deployment layer while preserving the discovery-layer openness that generates American advantage. A layered export control framework — separating deployment-layer transfer restriction from discovery-layer collaboration — captures the security objective without accepting the discovery cost.

Fund the hybrid aggregation infrastructure for quantum-AI integration now: The National Quantum Initiative and National AI Research Resource should be directed to build coordination protocols — evaluation frameworks, routing mechanisms, and feedback channels — that connect quantum research outputs to AI integration pipelines. The investment required is coordination infrastructure, not additional research funding. The 3–5 year quantum-AI coupling window requires this infrastructure be operational before coupling begins.

Establish a coordinated post-quantum migration mandate for federal systems and critical infrastructure: NIST’s Post-Quantum Cryptography standards — FIPS 203, 204, and 205 — exist. The deployment coordination architecture does not. A migration mandate with enforcement authority, coordinated timelines, and shared infrastructure investment mechanisms converts standards into deployed security infrastructure before quantum computing reaches cryptanalytic scale.
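The gap between published standards and deployed security can be illustrated with a minimal inventory sketch. The three FIPS numbers and their algorithm names (ML-KEM, ML-DSA, SLH-DSA) come from the NIST standards; the system names, the `SystemRecord` type, and the simplified role mapping are hypothetical and stand in for whatever inventory a real migration mandate would require.

```python
from dataclasses import dataclass

# NIST post-quantum standards by cryptographic role.
PQC_STANDARD = {
    "key_establishment": "ML-KEM (FIPS 203)",
    "signature": "ML-DSA (FIPS 204) or SLH-DSA (FIPS 205)",
}

# Widely deployed public-key algorithms that a large quantum computer running
# Shor's algorithm would break, keyed to the role they play.
QUANTUM_VULNERABLE = {
    "RSA-OAEP": "key_establishment",
    "ECDH": "key_establishment",
    "RSA-PSS": "signature",
    "ECDSA": "signature",
}


@dataclass(frozen=True)
class SystemRecord:
    name: str       # hypothetical system label
    algorithm: str  # public-key algorithm currently in use


def migration_plan(inventory):
    """Map each system to its replacement standard, or None if not flagged."""
    plan = {}
    for record in inventory:
        role = QUANTUM_VULNERABLE.get(record.algorithm)
        plan[record.name] = PQC_STANDARD[role] if role else None
    return plan


inventory = [
    SystemRecord("vpn-gateway", "ECDH"),
    SystemRecord("firmware-signing", "ECDSA"),
    SystemRecord("archive-encryption", "AES-256"),  # symmetric, not flagged here
]
plan = migration_plan(inventory)
for name, target in plan.items():
    print(f"{name}: {target or 'no public-key migration flagged'}")
```

The mapping itself is trivial; the hard part is the inventory, the timelines, and the enforcement authority, which is the coordination architecture the recommendation calls for.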

For Institutional Innovation Leaders

Adopt the S = f(P, T, R) framework as a shared architectural vocabulary for consortium decisions: Universities, national laboratories, and industry consortiums cannot coordinate effectively around incompatible architectural models. Adopting a common framework for deciding when research should be open, when it requires hybrid coordination, and when it requires closed execution reduces transaction costs at every collaboration boundary and enables coherent decisions about data sharing, compute access, talent mobility, and IP licensing.

Build hybrid aggregation infrastructure at the university-industry boundary: Technology transfer offices, joint research centers, and university-industry partnerships currently negotiate architecture decisions bilaterally and inconsistently. Standardized hybrid aggregation protocols — common evaluation criteria, transparent routing mechanisms, reciprocal feedback channels — allow research outputs to move from discovery to deployment faster without requiring full organizational integration or full IP disclosure at each handoff.

Establish quantum-AI integration working groups that span research and deployment institutions now: The institutions that will need to collaborate on quantum-AI integration — quantum hardware developers, AI infrastructure providers, national laboratories, and security agencies — do not currently share coordination frameworks. Building those frameworks during the discovery phase, while quantum hardware is still evolving and architectural options remain open, produces better integration architecture than building them after hardware consolidation has narrowed the option space.

Protect open-system architecture at the quantum research layer: Universities and national laboratories should resist pressure to classify or restrict quantum basic research that does not carry direct deployment-layer security implications. The discovery advantage that open international collaboration produces is the United States’ most durable competitive asset in the quantum-AI competition. Institutions should distinguish between protecting deployment-layer IP and preserving discovery-layer collaboration — and make that distinction explicit in partnership agreements and funding arrangements.

For Capital Allocators

Price the phase-transition risk in AI infrastructure investments: AI infrastructure built for current GPU-based workloads without quantum integration architecture carries phase-transition risk that current valuations do not reflect. MindCast’s AI Infrastructure Energy Patent Landscape established that FCC operates on a 12–36 month latency window — incumbents patent into forced engineering trajectories before entrants experience them as exclusion, and the filing happens in the open period while activation happens when capital becomes irreversible. Infrastructure allocators should require explicit quantum integration roadmaps from AI infrastructure investments before capital commits, not after the constraint field activates.

Fund the hybrid aggregation layer as infrastructure, not research: The coordination mechanisms connecting AI discovery to deployment are not research investments — they are the infrastructure layer that MindCast’s Quantum-Coupled AI Data Center Campus identified as the ‘connective frontier between classical and quantum intelligence.’ Venture capital captures the orchestration middleware layer. Infrastructure funds own the durable, quantum-ready assets with 15–25 year depreciation curves and embedded optionality. Sovereign and patient capital secures the algorithmic sovereignty positions that policy eventually codifies. Each capital type has a specific role in the integration stack, and treating all three as interchangeable research grants systematically underfunds the layer that produces the largest share of deployment-layer value.

Allocate across the quantum-AI integration stack, not just at hardware or software endpoints: MindCast’s Quantum Computing Sovereignty established that by 2028 compute access will hinge on three currencies: patent sovereignty, trade-secret trust, and pipeline continuity. Alpha concentrates in middleware firms developing quantum-AI orchestration software and compiler tools — currently structurally undervalued because they are priced as quantum software companies rather than as AI infrastructure companies. Temporal arbitrage exists in the lag between patent issuance and market adoption: investors who identify integration IP six to twelve months before major cloud platforms embed it into service offerings compound returns through early-stage exposure at pre-infrastructure valuations.

Treat China’s quantum-AI infrastructure buildout as a timeline constraint, not a distant risk: China’s 14th Five-Year Plan explicitly couples quantum and AI investment in an integrated infrastructure buildout. The 1 trillion yuan National Venture Capital Guidance Fund is funded, directed, and building. MindCast’s PE and Patent Litigation analysis projects that by 2028, control of AI infrastructure will consolidate around actors who integrate capital deployment and IP protection — and firms positioned before coupling architecture is established will carry structural advantage that late entrants cannot replicate by capital alone.

VIII. The Quantum-AI Investment Thesis: Firm-Level Architecture Map

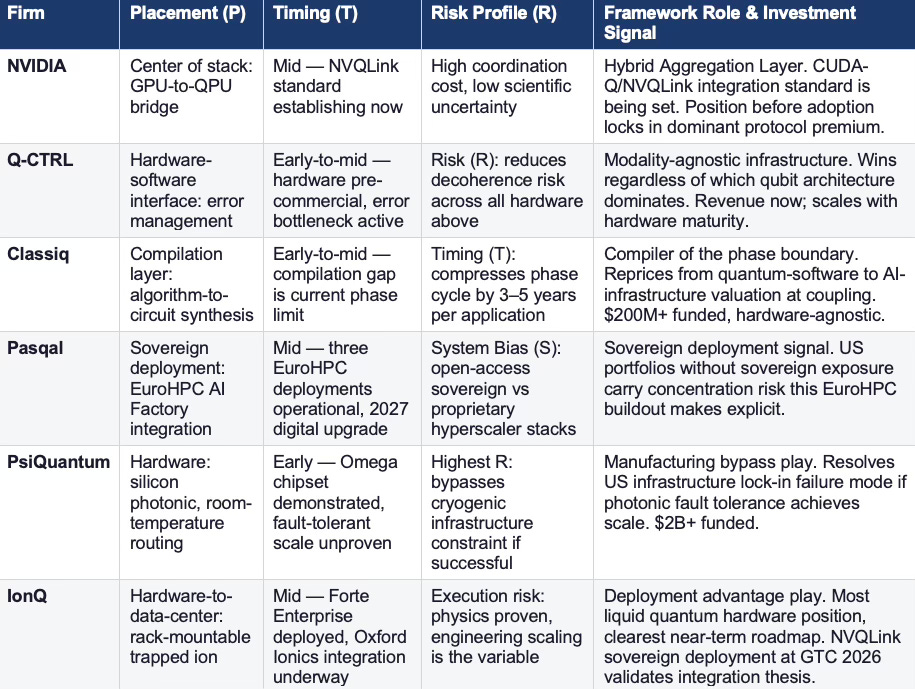

The architecture analysis in this paper is, at its core, an investment thesis, one that the quantum investment community has not yet fully priced. MindCast’s prior publication stack establishes the empirical foundation: The Quantum-Coupled AI Data Center Campus established that by 2035 competitive advantage flows to those who master quantum-coupled infrastructure as a unified system. How Quantum Computing Overcomes AI Data Center Bottlenecks validated 20–25% throughput gains and 25–35% energy savings through CDT simulation with 0.79 composite coherence. Quantum Computing Sovereignty established that by 2028 compute access hinges on patent sovereignty, trade-secret trust, and pipeline continuity. PE and Patent Litigation in AI Data Centers (2026–2028) established that capital and IP control are converging as dual expressions of foresight. Together these publications constitute a validated predictive stack that the current paper’s architecture framework synthesizes into actionable positioning logic.

Most quantum investment analysis surveys hardware providers and cloud giants. The S = f(P, T, R) framework demands a different analysis — one that maps firms against their placement in the integration stack, timing within the phase cycle, and the specific innovation risk profile they address. The governing mechanism is feedback loop capture: firms that sit at the layer where signal converts into decision fastest will capture disproportionate returns, regardless of whether they own the hardware underneath or the applications above. Applied at that resolution, the competition runs not primarily between IBM and Google, but between the firms controlling the orchestration layer, the error management layer, the compilation layer, the sovereign deployment layer, and the hardware manufacturing layer — and the dominant positions at each layer are not yet locked.

The Mispriced Layer: Integration Infrastructure

Control of the integration layer determines which system converts capability into advantage. Hardware produces capability. Applications consume it. The integration layer — orchestration, error management, compilation, scheduling — is where capability becomes deployment, and where feedback loops close fastest. That is the decisive layer, and it is currently underfunded, undominated, and open.

Current quantum investment concentrates at hardware endpoints — qubit counts, coherence times, gate fidelity — and at application software. Both layers are necessary. Neither is where the structural return opportunity concentrates in the 3–5 year coupling window. MindCast’s Quantum Computing Sovereignty identified middleware firms — quantum-AI orchestration software and compiler tools — as structurally undervalued, projecting 5–7x upside over five years as these firms capture licensing tolls from both hardware vendors and application developers. MindCast’s AI Infrastructure Energy Patent Landscape establishes precisely why the window is finite: FCC means incumbents patent into forced engineering trajectories before entrants experience them as exclusion. Positions established before that latency window closes are structurally more favorable than positions negotiated after enforcement activates.
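For readers who think in annual rates, the projected 5–7x multiple over five years can be converted into an implied compound annual growth rate. This is a minimal arithmetic sketch; the function name is hypothetical, and the calculation assumes smooth compounding, which the projection itself does not claim.

```python
def annualized_rate(multiple: float, years: float) -> float:
    """Compound annual growth rate implied by a total return multiple."""
    return multiple ** (1 / years) - 1


low = annualized_rate(5, 5)   # 5x over five years
high = annualized_rate(7, 5)  # 7x over five years
print(f"implied annual rate: {low:.1%} to {high:.1%}")
```

The range works out to roughly 38% to 48% per year, which is the return hurdle the middleware thesis implicitly sets.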

The integration layer between quantum hardware and AI inference is where the coordination premium will concentrate. It is currently underfunded, undominated, and open — and Forward Constraint Compounding guarantees that window is finite.

NVIDIA: The Hybrid Aggregation Layer — Placement (P)

NVIDIA is the most strategically critical position in the quantum-AI integration stack, not as a hardware provider but as the orchestration layer connecting GPU-driven AI training to quantum-accelerated inference. CUDA-Q is a QPU-agnostic (quantum processing unit-agnostic) platform integrating with 75% of all publicly available QPUs across all qubit modalities. NVQLink provides the first open, universal interconnect architecture supporting 17 quantum hardware builders at a round-trip latency of 3.96 microseconds, within the sub-4-microsecond coherence zone threshold MindCast’s CDT simulations validated. Adoption is accelerating: Oak Ridge National Laboratory’s (ORNL) GB200 NVL72 system, Japan’s Global Research and Development Center for Business by Quantum-AI Technology (G-QuAT), Singapore’s National Quantum Computing Hub, and Pasqal’s CUDA-Q integration via its Quantum Resource Management Interface (QRMI) at NVIDIA’s GTC developer conference in March 2026 all confirm that NVQLink is establishing itself as the integration standard now.

In S = f(P, T, R) terms: placement at the center of the innovation stack (P = mid-stack, bridging GPU infrastructure to quantum hardware); timing at the standard-setting transition point (T = mid, NVQLink launched October 2025, adoption consolidating); innovation risk characterized by high coordination cost rather than scientific uncertainty (R = coordination). MindCast’s NVIDIA NVQLink Validation, published before NVIDIA’s October 28, 2025 announcement, predicted sub-5 microsecond latency, 300+ Gb/s throughput, 6–8 US national laboratory coordination, and 12–15 quantum processor vendor support. NVIDIA delivered sub-4 microseconds, 400 Gb/s, 8 national labs, and 17 vendors. Counting the network-level orchestration architecture prediction, five of five predicted metrics were confirmed or exceeded, a validated track record that converts the architecture arguments in this paper from structural inference into empirically grounded prediction. NVIDIA is winning the integration standard race on current trajectory. The investment question is not whether CUDA-Q becomes the default; it is whether any position in the NVIDIA ecosystem captures the standard-setting premium before it is fully priced.
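The predicted and delivered figures quoted above can be checked mechanically. The sketch below encodes the four numeric predictions and tests each delivered value against the predicted bound; the `Prediction` type is hypothetical, ranges are represented by their lower bound, and the 3.96-microsecond figure is the NVQLink round-trip latency cited earlier in this section. The fifth prediction, network-level orchestration architecture, is qualitative and is not encoded here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    metric: str
    predicted_bound: float      # threshold or lower end of the predicted range
    delivered: float
    lower_is_better: bool = False

    def met(self) -> bool:
        """True when the delivered value meets or beats the predicted bound."""
        if self.lower_is_better:
            return self.delivered < self.predicted_bound
        return self.delivered >= self.predicted_bound


predictions = [
    Prediction("round-trip latency (microseconds)", 5.0, 3.96, lower_is_better=True),
    Prediction("throughput (Gb/s)", 300, 400),
    Prediction("US national laboratories", 6, 8),
    Prediction("quantum processor vendors", 12, 17),
]

for p in predictions:
    status = "met" if p.met() else "missed"
    print(f"{p.metric}: bound {p.predicted_bound}, delivered {p.delivered} ({status})")

confirmed = sum(p.met() for p in predictions)
print(f"{confirmed} of {len(predictions)} numeric predictions met")
```

Writing predictions as explicit bounds before the event is what makes them falsifiable; a delivered value on the wrong side of any bound would register as a miss rather than being reinterpreted afterward.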

Q-CTRL: Managing Innovation Risk — Risk (R)

Q-CTRL occupies the risk management layer — the position that directly addresses why quantum hardware cannot yet deliver consistent commercial value. Its Fire Opal platform applies AI-driven gate optimization and circuit-level error suppression to improve practical hardware performance without hardware modification. Integrated natively into IBM Quantum’s Pay-As-You-Go plan and available on Rigetti’s Ankaa-3 84-qubit system, Fire Opal demonstrated a 500,000x reduction in classical compute cost for layout ranking in collaboration with NVIDIA and OQC. Over $50M in 2025 sales and contract wins, partnerships with Lockheed Martin and Airbus in quantum sensing alongside IBM and Rigetti in quantum computing, and TIME Magazine Best Innovations recognition confirm commercial viability now — not contingent on fault-tolerant hardware maturity.

In S = f(P, T, R) terms: placement at the hardware-software interface (P = error management layer); timing early-to-mid where error suppression is the active bottleneck (T); innovation risk (R) is the defining variable — Q-CTRL’s entire architecture reduces R for every hardware investment above it in the stack. The structural characteristic that makes Q-CTRL’s position durable is modality independence: Fire Opal is hardware-agnostic, winning regardless of which qubit architecture achieves commercial dominance. Q-CTRL generates revenue from today’s noisy intermediate-scale hardware while building the infrastructure position that scales with improvement across all modalities.

Classiq: Accelerating the Phase Cycle — Timing (T)

Classiq occupies the compilation layer — the position determining how quickly the quantum-AI phase cycle advances from algorithm design to hardware execution. The platform translates high-level functional models into optimized quantum circuits with millions of gates using proprietary synthesis technology protected by over 60 granted or filed patents (100% acceptance rate). $200M in total funding — the largest cumulative raise for a quantum software company — with strategic investment from AMD Ventures, Qualcomm Ventures, IonQ, SoftBank, and HSBC confirms the compilation layer’s recognized strategic value. IonQ’s strategic investment in Classiq’s November 2025 up-round is particularly significant: the leading trapped-ion hardware provider investing in the leading quantum compilation platform signals that hardware-software coupling at the compilation layer is approaching the integration point where both sides have aligned incentives to accelerate standardization.

In S = f(P, T, R) terms: placement at the algorithm-to-hardware interface (P = compilation layer); timing is the primary framework role (T) — Classiq compresses the phase cycle by enabling developers without quantum specialization to build production-grade programs, accelerating the conversion of discovery-phase signal into deployment-phase applications. Hardware-agnostic deployment across AWS Braket, Microsoft Azure Quantum, Google Cloud, IBM, IonQ, and NVIDIA simulators positions Classiq as integration infrastructure rather than a modality bet. The repricing thesis: Classiq is currently valued as a quantum software company; it will reprice as AI infrastructure at coupling, when the compilation layer’s role in the integration stack becomes operationally visible.

Pasqal: Sovereign AI Deployment Architecture — System Bias (S)

Pasqal occupies the sovereign deployment position — the firm most directly embodying the S = f(P, T, R) system bias variable as applied to national infrastructure strategy. Three of the eight quantum computers deployed under the EuroHPC Joint Undertaking are Pasqal systems — including a 140-qubit neutral-atom system delivered to CINECA in Bologna in February 2026 for integration with Italy’s Leonardo pre-exascale supercomputer. Pasqal’s 2025 roadmap targets a 250-qubit QPU optimized for quantum advantage in optimization, simulation, and machine learning — the three problem classes where hybrid quantum-AI integration produces most immediate value — with a 2027 upgrade to hybrid analog/digital mode preserving hardware investment across the phase transition.

In S = f(P, T, R) terms: placement at the sovereign deployment layer (P); timing mid-transition with three active EuroHPC deployments (T); system bias (S) is the defining variable — Pasqal’s open-access, state-backed, multi-site deployment architecture represents the coordinated sovereign infrastructure model this paper argues the US has not built. The strategic signal for US investors: Pasqal’s EuroHPC position confirms MindCast’s Quantum Computing Sovereignty prediction that EU regions prioritizing open standards attract asymmetric capital inflows as regulatory certainty accelerates deployment. US quantum portfolios lacking sovereign deployment exposure carry geographic concentration risk that Europe’s coordinated buildout makes explicit.

PsiQuantum: Bypassing the Refrigeration Constraint — Placement (P)

PsiQuantum represents the most aggressive placement bet in the quantum hardware stack — a direct play on the proposition that photonic qubits manufactured using standard semiconductor fabrication can bypass the cryogenic constraints that make superconducting and trapped-ion systems incompatible with conventional data center infrastructure. The Omega chipset, announced in February 2025 and detailed in Nature, is manufactured at GlobalFoundries’ Fab 8 in Malta, NY — a high-volume commercial semiconductor foundry. Over $2 billion in total funding including a $1 billion Series E in September 2025 makes PsiQuantum the most heavily capitalized pure-play quantum hardware company in existence.

In S = f(P, T, R) terms: placement at the hardware manufacturing layer with a bet on bypassing the cryogenic infrastructure constraint (P); timing early — Omega demonstrated in high-volume manufacturing but fault-tolerant scale unproven (T); innovation risk is the highest of any firm in this analysis (R = high). The investment thesis is not near-term revenue but strategic infrastructure position: if photonic fault tolerance achieves semiconductor manufacturing scale, it establishes the only quantum hardware architecture deployable in conventional data centers without cryogenic retrofit. That would resolve the infrastructure lock-in failure mode this paper identifies as the US’s most acute quantum-AI coupling risk. The Omega chipset’s high-volume manufacturing demonstration is the first credible evidence this architecture can exit the laboratory — making PsiQuantum the highest-risk, highest-potential-return position in the quantum hardware stack.

IonQ: Deployment Advantage Through Chip-Based Trapped Ion — Placement (P) + Timing (T)

IonQ occupies the deployment advantage position in the trapped-ion hardware stack — moving from physics demonstration to commercial data center integration faster than any trapped-ion competitor. The Forte Enterprise system is a rack-mountable, data-center-compatible trapped-ion quantum computer designed for production integration alongside GPU servers. The acquisition of Oxford Ionics in September 2025 for $1.075 billion added chip-based 2D ion trap technology with up to 300x higher qubit density than 1D systems, targeting 10,000 physical qubits on a single chip by 2027 and 20,000 across two entangled chips by 2028. At GTC 2026, IonQ and South Korea’s KISTI announced a partnership to plug IonQ’s trapped-ion hardware directly into South Korea’s national supercomputing backbone using NVIDIA NVQLink — the first sovereign Quantum-HPC ecosystem built on the NVQLink standard, validating both the integration architecture and the sovereign deployment model simultaneously.

In S = f(P, T, R) terms: placement at the hardware-to-data-center interface (P); timing mid-transition with Forte Enterprise deployed and Oxford Ionics integration underway (T); innovation risk characterized primarily by execution risk rather than scientific uncertainty (R = execution). IonQ is the most liquid quantum hardware position with the clearest near-term deployment roadmap — and the most exposed to the execution risks that accompany that clarity. The 2028 targets require Oxford Ionics 2D trap technology and Lightsynq photonic interconnects to perform at scale simultaneously. Watch the 2026–2027 Tempo deployment data as the leading indicator of whether the 2027–2028 roadmap milestones are achievable.

The Integrated Firm Map: S = f(P, T, R) Applied
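The per-firm S = f(P, T, R) readings given in the profiles above can be collected into a single structure. The following is a minimal sketch that renders them as a plain-text table; the field names and the `render_table` helper are hypothetical, each entry paraphrases the corresponding profile, and "not stated" marks a variable the profile does not characterize explicitly.

```python
HEADERS = ("Firm", "Layer / placement (P)", "Timing (T)", "Risk (R)", "Defining variable")

# One row per firm profile in this section.
FIRM_MAP = [
    ("NVIDIA", "orchestration, mid-stack", "mid", "coordination", "P"),
    ("Q-CTRL", "error management", "early-to-mid", "reduces R for hardware above it", "R"),
    ("Classiq", "compilation", "compresses the phase cycle", "not stated", "T"),
    ("Pasqal", "sovereign deployment", "mid-transition", "not stated", "S"),
    ("PsiQuantum", "hardware manufacturing", "early", "high", "P"),
    ("IonQ", "hardware-to-data-center interface", "mid-transition", "execution", "P + T"),
]


def render_table(headers, rows):
    """Return the rows as a left-aligned plain-text table."""
    table = [headers, *rows]
    widths = [max(len(row[i]) for row in table) for i in range(len(headers))]
    return "\n".join(
        "  ".join(cell.ljust(width) for cell, width in zip(row, widths)).rstrip()
        for row in table
    )


print(render_table(HEADERS, FIRM_MAP))
```

Laid side by side, the rows show that the six firms occupy six different layers, which is the basis for the claim that the competition runs between layers rather than between hardware vendors.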

Fund Cycle Alignment and Geographic Concentration Risk

Series B rounds for quantum-AI integration companies represent the current high-value window: Classiq (compilation layer, $200M+ in funding), Q-CTRL (error management layer, over $50M in 2025 sales and contract wins), and orchestration middleware firms building on the CUDA-Q/NVQLink standard. MindCast’s PE and Patent Litigation analysis projects PE consolidation in power corridors at 75% confidence. Sovereign capital that funds coordination infrastructure before PE consolidation closes the position window establishes the standard that PE subsequently acquires at a premium. Infrastructure and project finance capital should assess data center retrofit requirements against the coherence zone map MindCast’s bottleneck analysis established: Seattle–Portland, San Jose–Sacramento–Reno, and the Boston–NYC–DC corridor each offer distinct fiber density, energy infrastructure, and regulatory alignment advantages.

Portfolios concentrated in US hardware plays carry geographic concentration risk. China’s 1 trillion yuan National Venture Capital Guidance Fund is funded and building. Pasqal’s EuroHPC position, IonQ’s South Korea Quantum-HPC partnership, and SandboxAQ’s DoW CIO five-year agreement all represent the sovereign infrastructure positions that capital allocators must map explicitly. The portfolio hedge is structural: allocating to the quantum-AI integration layer — orchestration (NVIDIA), error management (Q-CTRL), compilation (Classiq) — provides exposure to the coupling event regardless of which national hardware program reaches fault-tolerant scale first.

IX. The Genesis Mission as Implementation Architecture

Any system that cannot integrate open discovery, hybrid aggregation, and closed deployment will fail at the phase boundary, not because of capability gaps but because the feedback loop cannot close across the transition. The Genesis Mission implements the architecture this paper prescribes; it does not define it. The prescriptions derive from the structural logic of phase alignment, feedback latency, and the S = f(P, T, R) switching condition. The Genesis Mission builds the system those prescriptions require: open signal intake at the discovery layer, hybrid aggregation at the simulation layer, and closed-loop discipline at the deployment layer. The distinction matters for credibility: the architecture is validated by the analysis, not by the existence of the system. See MindCast’s White House Genesis Mission x MindCast National Innovation Behavioral Economics.

The open discovery layer of the Genesis Mission preserves maximum signal diversity across the research inputs that feed its predictive models. Institutions, markets, regulators, and technology systems generate signals continuously, and capturing that signal diversity requires the same open-architecture logic that governs discovery-phase research. Closing the signal intake layer — restricting which inputs the system considers, applying premature filters based on prior model assumptions — produces the same blind-spot failure mode that afflicts closed research systems.

The MAP CDT — MindCast AI Proprietary Cognitive Digital Twin — Foresight Simulation operates at the hybrid aggregation layer. Discovery signals enter the system and must be converted into structured, actionable intelligence without losing the signal quality that makes them valuable. The CDT framework applied in MindCast’s quantum-AI bottleneck analysis achieved 0.79 composite coherence across computing, energy, and latency domains — a score high enough to confirm the simulation correctly modeled the structural forces driving hybrid quantum-AI infrastructure deployment. The NVIDIA NVQLink Validation publication establishes the most direct evidence of MAP CDT predictive accuracy: five published predictions — latency threshold, throughput requirement, national lab coordination count, quantum vendor ecosystem size, and network-level orchestration architecture — confirmed against NVIDIA’s October 2025 announcement with zero prior knowledge of the product specification. That pre-announcement validation record is the empirical foundation for every probability-weighted forecast in this paper’s appendix. The recursive advantage is structural: each confirmed prediction recalibrates confidence weightings across related forecasts, compounding the framework’s accuracy over successive simulation cycles.

Capital coordination and deployment recommendations operate under closed-loop discipline. At the deployment layer, the feedback requirements change: reliability, accountability, and speed matter more than variance. Decisions that carry high execution cost and long time horizons require closed-loop control over the inputs, models, and feedback channels that produce them.

The Genesis Mission implements the architecture this paper prescribes: open signal intake at the discovery layer, hybrid aggregation through the MAP CDT Foresight Simulation, and closed-loop discipline at the deployment layer — with feedback channels connecting all three to minimize latency across the full arc from discovery to decision.

Applied to the quantum-AI coupling problem, the Genesis Mission produces the forward detection capability the prescriptive recommendations above require. Modeling China’s quantum-AI infrastructure buildout, US federal coordination dynamics, hyperscaler infrastructure investment patterns, and the patent constraint field forming across AI infrastructure as interacting feedback loops produces probability-weighted projections of where the coupling boundary will arrive, which institutional positions will carry structural advantage at that boundary, and which coordination failures are most likely to produce irreversible competitive losses before the boundary is reached.

The Genesis Mission’s recommendations implement this paper’s prescriptions at the level of specific decisions: which hybrid aggregation investments to prioritize, which discovery-layer collaborations to protect, which infrastructure positions to establish before quantum-AI coupling architecture is locked in. The digital Suez Canal that controls the intelligence economy is not yet built. The Genesis Mission is mapping where it will run — and who will own it.

X. Conclusion: The Architecture Problem Has a Solution Window

The United States faces an architecture problem, not a capability problem. American AI research capacity exceeds China’s at the discovery layer. American quantum research leads at the basic science layer. The competitive deficit is structural: no coherent framework governs when US innovation should operate as an open system, when it should operate as a closed system, and how to manage the transitions between them. That deficit is exploitable by any coordinated competitor — and China’s deployment architecture is designed precisely to exploit it.

The solution window is 3–5 years — and the window is structural, not speculative. Infrastructure decisions made now lock in constraints that cannot be reversed at the quantum-AI boundary. Once hyperscaler AI infrastructure positions consolidate, once integration standards lock, once sovereign deployment architectures are established, the options available to late entrants narrow to working around those positions rather than displacing them. Building the hybrid aggregation layer that connects AI discovery to deployment, protecting open-system architecture at the quantum research layer, establishing quantum-AI integration coordination infrastructure, and deploying post-quantum security architecture are all achievable within that window — but only if federal policy makers, institutional innovation leaders, and capital allocators operate from a shared architectural framework rather than incompatible institutional logics.

The S = f(P, T, R) framework provides that shared vocabulary. System bias — the pull toward openness or closure — is a function of placement in the stack, timing in the phase cycle, and innovation risk profile. Every architecture decision the United States needs to make in the next 3–5 years can be evaluated against those three variables and sequenced correctly relative to the quantum-AI coupling transition.

The uncomfortable claim this paper makes is precise: the United States currently subsidizes China’s deployment layer through uncoordinated openness at the discovery layer. American researchers generate the signal. Chinese deployment architecture closes the feedback loop faster and captures the value. That dynamic describes the present condition at the AI layer. Quantum-AI coupling will replicate it at higher strategic stakes — cryptographic infrastructure, materials discovery, AI-accelerated optimization — unless the hybrid aggregation layer is built before the coupling boundary arrives.

Phase-aligned architecture is not a competitive luxury. Systems that misalign architecture at phase boundaries do not recover — they inherit the infrastructure constraints their misalignment produced and compete from behind for every subsequent phase. The United States has the research capacity, the capital, and the institutional talent to build the hybrid aggregation layer that closes the feedback loops faster. What it currently lacks is the architectural vocabulary to coordinate that construction. The vocabulary is here. The window is open. Whether it gets used is the only remaining question.

Appendix: MindCast AI Proprietary Cognitive Digital Twin Foresight Simulation Outputs

The following quantitative outputs derive from MindCast AI Proprietary Cognitive Digital Twin (MAP CDT) Foresight Simulation runs across national systems, firm-level integration stacks, capital allocation patterns, and institutional actors. Composite scoring uses a 0–1 alignment scale. Probability-weighted forecasts carry explicit falsification conditions — if predicted patterns fail to materialize within specified windows, the model requires revision. All outputs are simulation-based foresight, not empirical measurements.

Baseline Architecture Alignment Indices

Composite scoring across alignment, feedback latency, and coordination capacity:

United States current architecture: 0.58 (high discovery signal, low coordination capacity)

China current architecture: 0.66 (moderate discovery, high deployment coordination)

Hybrid optimal architecture target: 0.78–0.85

Variance bands: systems below 0.65 experience compounding coordination drag at phase boundaries. Systems above 0.75 achieve self-reinforcing feedback dominance. The 25–40% performance divergence between systems that successfully implement hybrid aggregation layers and those that remain fragmented at phase transition boundaries is the quantitative expression of the architecture problem this paper diagnoses. The switching condition fires at 0.65 — below that threshold, coordination cost multiplied by capital intensity exceeds marginal discovery value, and the system bias S shifts irreversibly toward closure without coordination.
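The band logic above can be sketched in a few lines of Python. This is a minimal illustration, not the MAP CDT scoring method: the function names and band labels are hypothetical, and the text does not characterize scores between 0.65 and 0.75, so the "intermediate" label is an assumption. Only the two thresholds, the baseline scores, and the lower edge of the hybrid target come from the figures above.

```python
# Thresholds quoted in the text above; band names are illustrative labels.
DRAG_THRESHOLD = 0.65        # below this: compounding coordination drag
DOMINANCE_THRESHOLD = 0.75   # above this: self-reinforcing feedback dominance


def classify_alignment(score: float) -> str:
    """Map a 0-1 composite alignment score onto the bands described above."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("alignment score must lie in [0, 1]")
    if score < DRAG_THRESHOLD:
        return "coordination drag"
    if score > DOMINANCE_THRESHOLD:
        return "feedback dominance"
    # The text is silent on 0.65-0.75; "intermediate" is an assumed label.
    return "intermediate"


def gap_to_target(score: float, target_low: float = 0.78) -> float:
    """Distance from a score to the lower edge of the hybrid target range."""
    return round(max(0.0, target_low - score), 2)


# Baseline indices as reported in this appendix.
baseline = {"United States": 0.58, "China": 0.66}
for system, score in baseline.items():
    print(system, classify_alignment(score), gap_to_target(score))
```

On the reported baselines, the United States falls in the drag band and China sits just above the switching threshold, with both short of the hybrid target range.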

The predictive accuracy of these simulation outputs rests on a documented validation record. MindCast’s NVIDIA NVQLink Validation publication, timestamped before NVIDIA’s October 28, 2025 announcement, confirmed that all five predicted infrastructure metrics were met or exceeded: sub-4 microsecond latency (predicted sub-5), 400 Gb/s throughput (predicted 300+), 8 national lab partners (predicted 6–8), 17 quantum vendors (predicted 12–15, so the outcome exceeded the forecast range), and network-level orchestration architecture over physical co-location (predicted from physics constraints). That track record converts the probability-weighted forecasts below from structural inference into outputs from a methodology with a confirmed empirical baseline. The same document also projected an accelerated deployment timeline: commercial hybrid quantum-AI workloads operational by 2026–2027, compressing the original 2028–2030 forecast by 18–24 months. That acceleration dynamic drives the 3–5 year urgency framing throughout this paper.

National Systems Simulation

US Feedback Latency Index: 1.8–2.4 (high latency — discovery and deployment operating on incompatible clocks)

China Feedback Latency Index: 1.1–1.4 (moderate latency — deployment coordination functioning, discovery constrained)

Synchronization Integrity Score: US 0.52, China 0.71

Probability-weighted forecasts for federal policy makers: 0.70 probability (12–24 months) of increased coordination rhetoric without structural integration — the Nash-Stigler equilibrium default, where no single actor has enough to gain from building the aggregation layer alone to justify the cost. 0.60 probability (18–36 months) of a layered export control regime separating discovery and deployment layers. 0.30 probability (24–48 months) of a formal national aggregation layer with routing authority across agencies.

Firm-Level Integration Stack Simulation

Integration-layer firms expected value multiple: 1.8–2.5x relative to hardware-only peers

Feedback capture rate differential: +35–60% vs endpoint firms

0.75 probability (12–24 months): orchestration-layer firms outperform hardware-only firms as CUDA-Q/NVQLink adoption consolidates the integration standard. 0.55 probability (18–36 months): a dominant integration standard emerges, locking in a coordination premium for early position holders.

Capital Allocation Simulation

Current allocation imbalance: ~65% capital to hardware endpoints, <20% to integration layer

Expected correction window: 12–30 months

0.75 probability: integration-layer portfolios (Classiq, Q-CTRL, orchestration middleware) outperform hardware-concentrated portfolios as coupling architecture consolidates. 0.65 probability: sovereign capital influences infrastructure standards before private capital fully prices the transition.

Institutional Coordination Simulation

US Institutional Update Velocity: 0.48 — below required threshold of 0.70+ for coordination architecture to self-reinforce

Delay Propagation Index: US 1.9 (high), China 1.2 (moderate)

0.70 probability: regulatory coordination improves in language only, consistent with the Nash-Stigler equilibrium, the stable state in which no single actor can improve outcomes by unilaterally building the coordination infrastructure. 0.55 probability: post-quantum security becomes the coordination stress test that forces agency synchronization, when migration deadlines for FIPS 203, 204, and 205 (the three federal post-quantum cryptography standards finalized by NIST in August 2024) fall simultaneously across agencies operating on incompatible timelines.

Program and Consortium Simulation

Current Aggregation Efficiency Score: 0.42–0.55 across major programs

Required threshold for deployment conversion: 0.70+

0.75 probability: structured aggregation outperforms open collaboration models once capital intensity crosses the switching condition threshold — programs with explicit evaluation, routing, and feedback mechanisms convert research outputs to deployment pilots at 25%+ higher rates than open collaboration structures without aggregation discipline.

Genesis Mission Simulation

Projected Feedback Latency Reduction: 30–50% versus fragmented baseline

Coordination Efficiency Gain: 25–40% versus single-layer systems

0.80 probability: integrated simulation systems — those combining open signal intake, hybrid aggregation, and closed-loop validation — outperform standalone platforms on decision latency and prediction accuracy. The Genesis Mission’s structural advantage compounds through exactly this mechanism: the recursive validation loop that produced the NVQLink prediction accuracy recalibrates subsequent forecasts, widening the performance differential with each confirmed prediction.

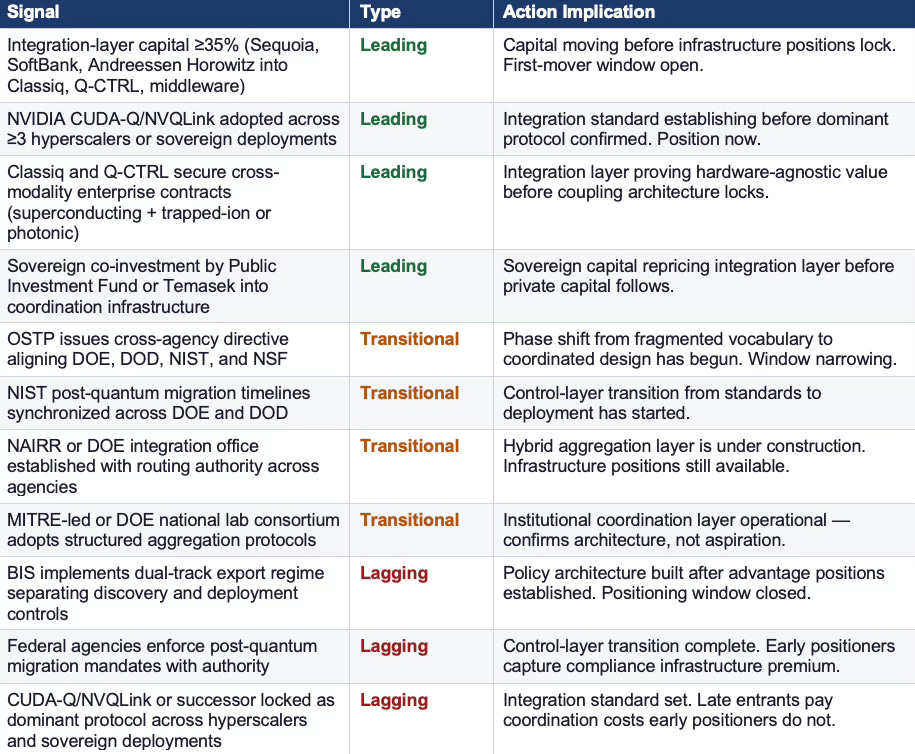

Monitoring Triggers and Phase Reclassification Thresholds

Forecasts become actionable when tied to observable triggers and named actors. The following indicators function as a live monitoring system for phase transitions. When three or more triggers in a domain cross thresholds within a 6–12 month window, reclassify that domain’s phase from development to deployment. If latency metrics fail to improve while capital intensity rises, expect forced closure and consolidation. If integration-layer capital share exceeds 35% before hardware milestones, expect early standard lock-in.
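The three reclassification rules above can be expressed as a small checker. This is a sketch under stated assumptions: the function names and inputs are hypothetical, the window defaults to 365 days (the upper end of the 6–12 month range), and the example dates are invented for illustration rather than drawn from any observed trigger.

```python
from datetime import date


def crossings_in_window(crossing_dates, window_days=365, minimum=3):
    """True if at least `minimum` triggers crossed within any `window_days` span."""
    dates = sorted(crossing_dates)
    for i in range(len(dates) - minimum + 1):
        # Compare the first and last date in each run of `minimum` crossings.
        if (dates[i + minimum - 1] - dates[i]).days <= window_days:
            return True
    return False


def assess_domain(crossing_dates, latency_improving, capital_intensity_rising,
                  integration_share, hardware_milestones_met):
    """Apply the three rules from the text and return the resulting expectations."""
    findings = []
    if crossings_in_window(crossing_dates):
        findings.append("reclassify: development -> deployment")
    if capital_intensity_rising and not latency_improving:
        findings.append("expect forced closure and consolidation")
    if integration_share > 0.35 and not hardware_milestones_met:
        findings.append("expect early standard lock-in")
    return findings


# Hypothetical trigger-crossing dates for one domain.
example_dates = [date(2026, 1, 15), date(2026, 6, 1), date(2026, 11, 20)]
print(assess_domain(example_dates, latency_improving=True,
                    capital_intensity_rising=True,
                    integration_share=0.22, hardware_milestones_met=False))
```

Keeping each rule as an independent check means a domain can be flagged for reclassification and for lock-in risk at the same time, which matches how the indicators are described.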

National Systems Triggers

Federal Coordination Mandate (binary): OSTP issues cross-agency directive aligning DOE, DOD, NIST, and NSF around discovery vs. deployment architecture. Currently absent — the most structurally significant trigger in the national systems domain.

Coordination Budget (threshold >$5B): Congress appropriates a dedicated line item administered jointly by DOE/NSF with routing authority — not distributed across existing agency budgets but concentrated in a single coordination vehicle.

Standards Synchronization (variance <6 months): NIST’s three post-quantum cryptography standards (FIPS 203, 204, and 205) adopted in lockstep across DOE, DOD, and civilian agencies. Adoption is currently fragmented by agency; synchronization is the observable signal that the control-layer architecture is being built.
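One way to make the standards synchronization trigger measurable is to read "variance" as the spread between the earliest and latest agency adoption dates. That reading is an assumption, as are the function names, the average-month conversion, and the adoption dates below, which are hypothetical and for illustration only.

```python
from datetime import date

SYNC_LIMIT_MONTHS = 6     # threshold quoted in the trigger
DAYS_PER_MONTH = 30.44    # average month length, an assumed conversion


def adoption_spread_months(adoption_dates):
    """Months between the earliest and latest agency adoption dates."""
    dates = list(adoption_dates.values())
    return (max(dates) - min(dates)).days / DAYS_PER_MONTH


def is_synchronized(adoption_dates):
    """True when the adoption spread is under the six-month limit."""
    return adoption_spread_months(adoption_dates) < SYNC_LIMIT_MONTHS


# Hypothetical adoption dates, not reported figures.
example = {
    "DOE": date(2027, 1, 1),
    "DOD": date(2027, 3, 1),
    "Civilian agencies": date(2027, 10, 1),
}
print(round(adoption_spread_months(example), 1), is_synchronized(example))
```

In this invented case the spread is roughly nine months, so the trigger would not fire.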

Integration-Layer Firm Triggers

Orchestration Standard (≥3 platforms): NVIDIA CUDA-Q/NVQLink adopted across at least three of AWS, Microsoft Azure, Google Cloud, or sovereign deployments including EuroHPC sites. Currently at early adoption — three-platform confirmation is the standard-setting threshold.

Compiler and Error Management (≥2 modalities): Classiq and Q-CTRL secure enterprise contracts spanning at least two hardware modalities — superconducting plus trapped-ion, or photonic plus neutral-atom. Cross-modality contracts confirm the integration layer’s hardware-agnostic value proposition.

Latency Threshold (production pilots): IonQ with NVQLink partners or national labs demonstrates round-trip latency within coherence bounds in production pilots — not laboratory demonstrations but production-environment validation. The NVQLink validation’s geographic constraint analysis established that the sub-4-microsecond threshold limits quantum-to-GPU separation to 200–300 kilometers, concentrating viable deployment in the San Francisco Bay Area, Research Triangle, Northeastern Corridor (Boston-NYC-DC), Chicago, Seattle, and sites near national laboratories. Production pilot confirmation within these coherence zones is the observable signal that geographic infrastructure positions are locking.

Capital Allocation Triggers

Deal Mix Shift (integration ≥35%): Integration-layer investments reach ≥35% of total quantum-AI capital. Currently below 20% — crossing 35% is the leading signal that capital has recognized the mispricing.

Stage Signal (ratio >1.2): Series B and infrastructure rounds led by SoftBank Vision Fund, Sequoia, or Andreessen Horowitz outnumber seed deals for integration firms by a ratio above 1.2. Stage mix shift precedes valuation repricing by 6–12 months.

Sovereign Co-Investment (≥2 funds): Co-investments by sovereign funds including Public Investment Fund and Temasek Holdings into coordination infrastructure. Sovereign entry signals that the integration layer has been recognized as strategic infrastructure, not speculative technology.
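The three capital allocation triggers reduce to simple arithmetic on deal data. The sketch below assumes a hypothetical input shape (capital totals, round counts, and a count of sovereign co-investors); the thresholds are the ones stated above, and the example figures are invented to resemble the current sub-20% integration share.

```python
def capital_triggers(integration_capital, total_capital,
                     later_stage_rounds, seed_rounds, sovereign_coinvestors):
    """Evaluate the three capital allocation triggers against their thresholds."""
    share = integration_capital / total_capital
    if seed_rounds > 0:
        ratio = later_stage_rounds / seed_rounds
    else:
        # With no seed deals, any later-stage round trivially dominates.
        ratio = float("inf") if later_stage_rounds > 0 else 0.0
    return {
        "deal_mix_shift": share >= 0.35,               # integration >= 35% of capital
        "stage_signal": ratio > 1.2,                   # later-stage to seed ratio
        "sovereign_co_investment": sovereign_coinvestors >= 2,
    }


# Hypothetical figures (capital in $B) for illustration only.
status = capital_triggers(integration_capital=1.8, total_capital=10.0,
                          later_stage_rounds=5, seed_rounds=6,
                          sovereign_coinvestors=1)
print(status, sum(status.values()), "of 3 triggers crossed")
```

Counting the crossed triggers gives the input needed for the three-or-more rule in the monitoring framework.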

Regulatory and Institutional Triggers

Dual-Track Export Controls (binary): Bureau of Industry and Security implements policies that explicitly separate discovery-layer collaboration from deployment-layer technology transfer restrictions. Currently absent — a single-layer control regime is the status quo.

Aggregation Entity (binary): Creation of a routing-capable body — expansion of NAIRR or a DOE-led integration office — with authority to direct research outputs toward deployment pipelines across agencies. The structural trigger that converts vocabulary into architecture.

Update Velocity (≥0.65): Measurable increase in cross-agency program alignment across DOE, DOD, NSF, and NIST over a 12-month window, bringing institutional update velocity from its simulated 0.48 to at least 0.65, the trigger level on the way to the 0.70+ that the Institutional Coordination Simulation requires for coordination architecture to self-reinforce.

Signal Classification: Leading, Transitional, Lagging

Signals vary in timing value. Leading signals indicate where advantage is forming — act now. Transitional signals confirm the phase shift has begun — the window is narrowing. Lagging signals confirm advantage has already been captured — late entry faces structural disadvantage.