MCAI Economics Vision: The Full Arc of Prediction Markets

From Truth-Seeking to Strategic Exploitation to Behavioral Extraction

Related publications: Kalshi Is Crypto’s Test Case | Kalshi’s Prediction Market Litigation Architecture, the CFTC Amicus, and the Strategic Framework for State Enforcement | The National Kalshi Prediction Market Litigation Map | The Full Arc of Prediction Markets | Prediction Markets and the Regulatory Split | Prediction Markets— Legislative Regime Conversion and the Collapse of Preemption | Kalshi Found the One Gap in American Gaming Law Nobody Closed | The Ninth Circuit on April 16 as System Convergence — The First Measurable Test of Prediction Market Structure | Kalshi, Prediction Markets and the Conflict Architecture of Regulation | Prediction Markets Litigation Stack — Federal, Private, and State Enforcement Converge

Executive Summary

Prediction markets do not exist as isolated truth engines. A belief layer — fragile, contingent, and structurally embedded — sits at the center of a system that extends from raw information production all the way through narrative formation, capital deployment, flow optimization, and institutional control. Truth emerges only when specific structural conditions hold. When incentives drift, beliefs correlate, or feedback loops degrade, the system does not stall — it transitions. What began as truth-seeking converts into strategic exploitation, and exploitation converts, in time, into behavioral extraction.

Most commentary on prediction markets treats them as either promising or broken, as novel financial instruments or disguised gambling. Both framings miss the point. Prediction markets occupy a defined position within a larger arc. Understanding them means understanding the arc — the full spectrum of actors, incentives, and regime transitions that determine when and why the truth-seeking function survives, and when it collapses. The Kalshi election market controversy — in which a public belief exchange’s legal fight to list congressional control contracts exposed every structural tension the arc framework predicts — is the live-fire instantiation of that question, examined in detail in Section I.

MindCast’s prior work on prediction market regulation, the Cybernetics Series, and the Seahawks Super Bowl LX Cognitive Digital Twin (CDT) Foresight Simulation each addressed segments of this architecture. The present analysis integrates those threads into a unified cartographic framework, mapping the full arc from signal to control and locating prediction markets within it as one transitional layer among many.

I. Two Kinds of Prediction Markets: Economic Basis and Structural Distinction

The term “prediction market” currently covers two structurally distinct activities that share a name but diverge in economic logic, participant composition, regulatory exposure, and epistemic claim. Conflating them produces the analytical confusion that dominates public debate. Separating them is the prerequisite for understanding either.

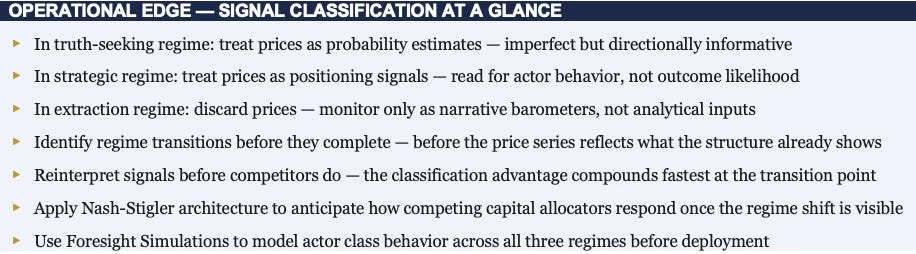

Figure 1. Two Kinds of Prediction Markets: Structural Comparison

Public Belief Exchanges

The first kind — the kind currently in regulatory controversy — operates as a public belief exchange. Platforms such as Kalshi and Polymarket offer open-participation contracts on discrete outcomes: election results, policy decisions, economic data releases, sports outcomes. Any retail participant can buy or sell a binary contract, and the contract price — which moves between zero and one — functions as a publicly broadcast probability estimate. The platform’s revenue model depends on transaction volume, so platform architects optimize for participation breadth and engagement depth. The epistemic claim is explicit: the price represents the crowd’s collective judgment, aggregating distributed private information into a consensus that no individual participant could produce alone.
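To make the price-as-probability reading concrete, here is a minimal sketch. It assumes a binary contract that pays 1 unit if the event occurs and 0 otherwise, and it ignores fees and spreads. The function names are illustrative and do not come from any platform's API.

```python
def expected_profit_yes(belief: float, price: float) -> float:
    """Expected profit per YES contract bought at `price`.

    Assumptions (not from the source): payout is 1 on YES and 0 on NO,
    there are no fees, and `belief` is the trader's private probability.
    """
    if not (0.0 <= belief <= 1.0):
        raise ValueError("belief must be in [0, 1]")
    if not (0.0 < price < 1.0):
        raise ValueError("price must be strictly between 0 and 1")
    # Win (1 - price) with probability belief, lose price otherwise.
    # Algebraically this simplifies to belief - price.
    return belief * (1.0 - price) - (1.0 - belief) * price


def preferred_side(belief: float, price: float) -> str:
    """Side a purely accuracy-motivated trader would take at this price."""
    edge = expected_profit_yes(belief, price)
    if edge > 0:
        return "YES"
    if edge < 0:
        return "NO"
    return "NONE"
```

Under these assumptions a trader profits in expectation only by trading toward their own belief, which is the sense in which the price can be read as an aggregate probability estimate.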

Regulatory controversy attaches to these platforms precisely because this public epistemic claim creates regulatory standing. When a platform broadcasts a price as a probability estimate and retail participants act on it, regulators can ask whether the price is honest, whether the participants are protected, whether the mechanism is gambling or finance, and whether outcome influence is corrupting the signal. The CFTC, state gambling authorities, and congressional actors all engage because a public interface is making a public claim to truth-discovery — and public claims to truth-discovery invite public scrutiny of whether the claim holds.

Proprietary Probability Engines

The second kind operates as a private probability engine. Firms such as SIG (Susquehanna International Group), Jane Street, and Citadel Securities do not run open platforms, do not broadcast prices as public goods, and make no epistemic claim to the commons. What they operate is continuous probability estimation across financial instruments — equity options, index derivatives, volatility surfaces, event-linked securities — with the output deployed internally to identify and capture pricing edge. Accuracy is a private competitive advantage, not a public service. A better probability model generates better edge; that edge is captured through trade, not broadcast. The revenue model is edge times volume times speed, not rake on retail participation.

No retail participant faces exploitation through a public interface because there is no public interface. No regulator asks whether the price is honest because the price is never published as a representation of truth. SIG does not claim to tell the world what will happen — it claims to price faster and more accurately than counterparties, then trade on that difference. Pricing faster and more accurately than counterparties, then trading on that difference, is a capital markets activity — not a belief market activity — regardless of the probabilistic machinery underneath.

The Economic Basis Distinction

The distinction is precise: public prediction markets socialize the belief layer. Proprietary probability pricing privatizes it. Both produce probability estimates. One monetizes by charging for access to the aggregation process and broadcasting the output as a public epistemic good. The other monetizes by keeping the output private and systematically trading against those who lack it.

The public market’s value proposition requires belief diversity and participant independence — the mechanism only works if participants bring genuinely different information. The private firm’s value proposition requires informational advantage over counterparties — the edge only exists if the firm’s model outperforms the market’s. These are structurally opposed strategies for extracting value from uncertainty, not variations of the same activity.

Why Controversy Attaches to One and Not the Other

Public prediction markets create three exposures that proprietary probability pricing does not. A retail protection problem: when open platforms attract participants who cannot recognize when market prices have drifted from truth-seeking to strategic regime behavior, those participants face systematic exploitation they cannot detect. An outcome influence problem: public prices broadcast as probability estimates create incentives for outcome influencers to hold positions that profit from events they can also affect, laundering intent through a market mechanism.

A classification problem: public platforms must be assigned to regulatory categories — finance or gambling — that carry fundamentally different participant protection regimes, and the surface features of binary event contracts activate gambling classification frameworks regardless of epistemic function. None of these exposures applies to proprietary probability pricing because there is no public interface, no retail participant, and no epistemic claim requiring regulation.

The controversy around Kalshi’s election markets, PredictIt’s CFTC status, and the broader legislative debate over event contracts all concern the first kind. The institutional activity of SIG and its peers concerns the second. A framework treating them as points on the same spectrum — rather than as structurally distinct activities that happen to involve probability — cannot explain why one generates regulatory controversy and the other does not, why one faces a retail protection problem and the other does not, or why one is epistemically fragile in ways the other is not.

Three Controversies, One Structural Source

Public prediction markets have generated controversy across three distinct domains — sports, elections, and outcome influence — each appearing to involve different regulatory concerns. Examined structurally, all three draw from the same source: the retail protection problem, the outcome influence problem, and the classification problem that Section I identified as the three exposures proprietary probability pricing avoids. The controversies differ in severity and in the specific regulatory frameworks they activate. The underlying structural logic is the same in each.

Sports Markets: A Real but Narrower Controversy

Sports prediction markets carry genuine controversy, but of a different structural character. Three concerns animate the debate. Integrity risk: participants with material inside knowledge of game outcomes — players, coaches, officials, team staff — can exploit prediction market positions in ways that corrupt the underlying sport. The NBA, NFL, and MLB have all suspended players for betting on games, and prediction market contracts on sports outcomes extend that integrity problem to a broader, less regulated participation surface.

Jurisdictional conflict: following the Supreme Court’s 2018 Murphy v. National Collegiate Athletic Association decision striking down the federal sports betting prohibition, most states built their own sports betting regulatory frameworks under state authority. Sports prediction market contracts on CFTC-regulated platforms sit in an uncomfortable gap between federal event contract jurisdiction and state-licensed sports betting, with states arguing their regulatory authority is being circumvented without their consent. The outcome influencer problem takes a specific form in sports: the people with the most material information about outcomes are direct participants in the events being priced, creating a structural integrity exposure that no disclosure regime fully resolves.

These are real concerns with active regulatory and legislative engagement, but all of them operate within a framework most participants accept: pricing sports outcomes as financial instruments is a legitimate activity subject to integrity rules, jurisdictional clarity, and participant protection requirements. The debate is about how to regulate a recognized activity, not whether the activity is categorically permissible.

The Election Market Case: A Categorically Different Controversy

The Kalshi election market controversy is structurally different — not a debate about how to regulate a recognized activity, but a contest over whether the activity is categorically permissible at all. The CFTC’s opposition to Kalshi’s congressional control contracts did not rest on integrity risk or jurisdictional overlap. It rested on a theory about democratic legitimacy: that pricing political outcomes as tradeable contracts corrupts the deliberative process itself, independent of whether the contracts function as honest belief aggregators.

No sports market faces that objection. Nobody argues that pricing the Super Bowl winner corrupts the legitimacy of football. The election market controversy introduced a regulatory category — public interest harm to democratic institutions — that mechanism design cannot resolve and that makes the classification contest categorically harder than anything sports markets face.

Kalshi sought CFTC authorization to list contracts on congressional control outcomes: which party would control the House, the Senate, and the presidency. The CFTC moved to block them on grounds that election contracts constituted activity contrary to the public interest — invoking a regulatory category that had historically been applied to contracts involving manipulation, fraud, or systemic risk, not epistemic function. MindCast’s Prediction Market Regulation established the foundational jurisdictional architecture underlying this contest, including the structural tension between CFTC event contract authority and state gambling regulatory frameworks that the Kalshi dispute brought into open conflict. The D.C. Circuit Court of Appeals ruled in Kalshi’s favor in 2024, finding that the CFTC’s public interest determination lacked adequate basis — a ruling tracked in MindCast’s Prediction Market Regulation Update as confirmation that regulatory classification follows political equilibrium rather than functional analysis. The ruling did not resolve the underlying policy question. It reset the political equilibrium around a new legal fact.

The episode instantiated all three structural exposures simultaneously. The retail protection problem appeared immediately: once election contracts launched, retail participants with no understanding of prediction market regime dynamics began treating prices as authoritative probability estimates in real time, with media amplifiers citing Polymarket and Kalshi prices as consensus measures of election likelihood — completing the narrative amplification feedback loop in which market prices lend authority to the narratives that drove correlated retail positioning in the first place.

The outcome influence problem became the episode’s defining controversy: large political donors and campaign-adjacent actors holding significant positions in markets linked to elections they were simultaneously funding raised the precise endogeneity question the arc framework identifies — the market price was no longer measuring an independent probability but encoding the intentions and positioning of actors who also controlled material inputs to the outcome being priced.

The classification problem drove the legal architecture of the entire dispute: the CFTC’s opposition rested on surface resemblance to gambling and public integrity concerns, while Kalshi’s defense rested on functional epistemic value — the identical classification contest the Posnerian framework predicts will always follow political equilibrium rather than functional analysis.

Throughout all of this, SIG, Jane Street, and their peers ran continuous probability models on the same elections, traded election-linked instruments across options and volatility surfaces, and generated zero regulatory scrutiny. No CFTC proceeding. No congressional hearing. No public integrity concern. Not because their activity was less consequential to market structure, but because proprietary probability engines make no public epistemic claim, expose no retail participant, and present no classifiable surface to regulatory frameworks built around public interface and retail protection. The Kalshi controversy and the institutional trading activity were structurally incommensurable — not two versions of the same thing, but two different things that the absence of a framework made appear to be the same.

The election market episode did not reveal that prediction markets are dangerous. It revealed that public belief exchanges and proprietary probability engines operate under structurally different rules — and that regulatory frameworks built for one cannot govern the other.

Outcome Influence: The Cross-Domain Controversy

The outcome influence problem is not specific to sports or elections — it runs across every domain where prediction market participants also possess the capacity to affect the events being predicted. Political donors betting on elections they fund. Corporate insiders holding event contracts on regulatory decisions their lobbying affects. Athletes trading on game outcomes in which they participate. In each case the market price ceases to measure an independent probability and begins encoding the intentions of actors who simultaneously occupy both sides of the prediction — as forecaster and as cause.

What makes outcome influence structurally distinct from the sports and election controversies is that it does not require a specific regulatory category to generate harm, and no existing framework was designed to address it directly. Gambling law addresses corruption of the underlying event but does not reach market manipulation by participants who are also actors in the event. Securities law addresses insider trading but requires a security and a defined issuer. Commodity futures law requires a commodity with separable economic function. Outcome influence in prediction markets falls into the gap between all three frameworks simultaneously — more corrosive than sports integrity violations because the scale of positions is uncapped, and more structurally destabilizing than election market classification disputes because the endogeneity is self-reinforcing: prices that encode actor intentions influence other participants’ beliefs, which influence the actor’s subsequent behavior, which is in turn encoded in subsequent prices.

The controversy around outcome influence is quieter than sports integrity disputes and election market classification fights precisely because no regulatory framework has yet named it with sufficient precision to activate enforcement. MindCast’s Signal Suppression Equilibrium framework is specifically designed to map this endogeneity — identifying when and to what degree market prices encode actor intentions, and anticipating the threshold conditions under which regulators will develop the vocabulary to act.

The Legal Architecture Gap: Between Gambling Law and Commodity Futures Law

The deeper reason prediction market controversies resist resolution is not that regulators cannot decide whether prediction markets are gambling. The deeper reason is that prediction markets genuinely occupy the gap between two legal frameworks — gambling law and commodity futures law — that were each constructed around a world where the distinction between them was obvious. Neither framework was designed for an activity that is simultaneously a financial instrument, an information aggregation mechanism, and a mass-participation wagering product. The controversy is structural, not factual.

Gambling law targets wagering where the sole economic function is risk transfer between bettors with no underlying productive purpose. Futures and derivatives markets — which also involve risk and uncertain outcomes — are exempted from gambling law precisely because they serve a price discovery or hedging function in underlying economic activity. A corn futures contract serves farmers and processors who need to manage price exposure in a real underlying market. The CFTC’s jurisdiction over event contracts rests on this economic purpose doctrine: the contract must connect to economic activity whose price or outcome has productive significance beyond the wager itself.

Prediction markets claim exactly this exemption. A contract on congressional control, Kalshi argued, serves the economic purpose of aggregating forecasts about legislative outcomes that affect real investment and planning decisions. Gambling law answered that the claim is pretextual when no underlying hedging market exists and retail participation is driven by the wager, not by the hedge. Both arguments are legally coherent. What separates them is not a factual dispute about what prediction markets do but a structural gap in legal categories that were never designed to address an activity with this combination of features.

The jurisdictional architecture compounds the problem. Gambling is primarily state-regulated under the police power reserved to states under the Tenth Amendment. The CFTC regulates commodity futures and event contracts under federal law — which preempts state law when applicable. When Kalshi obtained CFTC designation as a contract market, it claimed the preemptive shield of federal commodity law against state gambling prohibitions.

States running sports betting licensing frameworks argued their authority governed regardless of federal designation. The D.C. Circuit’s 2024 ruling in Kalshi’s favor resolved that jurisdictional contest in favor of federal preemption — but it did not determine that election contracts are not gambling under state law on the merits, and it did not resolve the CFTC’s public interest analysis. It moved a legal boundary without closing the underlying gap.

The two frameworks treat information asymmetry in opposite ways. Gambling law has historically treated information asymmetry as corruption: a bettor with inside knowledge of a fixed outcome commits fraud. Commodity futures law treats information asymmetry as the mechanism that makes markets function: a trader with better information than the market is supposed to move the price toward truth by exploiting their edge. Prediction market theory inherits the commodity framework — inside information is supposed to improve price accuracy. Gambling integrity rules inherit the opposite premise.

A sports player who knows they will underperform and trades prediction market contracts on that knowledge is simultaneously improving price accuracy under one legal theory and committing integrity fraud under another. Both conclusions follow from the same facts under different frameworks. The frameworks do not resolve the contradiction; they each answer a different question.

The event definition problem completes the gap. Gambling law requires a bet on an outcome that is uncertain and determined by parties other than the bettor. Futures law requires a contract on an underlying commodity or index with economic significance independent of the contract itself. Political event contracts fail both definitions in characteristic ways: the outcome is uncertain but the underlying has no commodity index, and the outcome is partly endogenous to the bettors themselves when those bettors also fund campaigns.

Neither framework was designed for endogenous outcomes — events that prediction market pricing can itself influence, and in which market participants are also actors in the underlying event. Both frameworks assume a clean separation between observer and observed that the outcome influencer problem structurally violates.

Prediction market controversies are not disputes about whether markets resemble gambling. They are collisions between two legal frameworks — gambling law and commodity futures law — that reach opposite conclusions from the same facts because they were built to answer different questions. The gap between them is where prediction markets live.

MindCast at the Intersection

MindCast’s analytical position sits precisely at the intersection of the public and proprietary sides. Public prediction markets generate prices that institutional actors on the private side monitor as one input among many. When those public prices carry genuine epistemic content — when they reflect truth-seeking regime dynamics — they are informative inputs to private probability models.

When public prices have drifted into strategic or extraction regime dynamics, treating them as probability estimates introduces systematic miscalibration into any model that ingests them. MindCast provides the regime-state intelligence that determines when public prediction market prices are informative versus when they are strategic artifacts — a determination no actor inside the arc can make reliably from its own position within the system it is trying to read.

II. Governing Structure

Before examining who populates the arc and why they behave as they do, the arc itself requires precise definition. The system that prediction markets inhabit is not a collection of adjacent industries — it is a single architecture in which each layer generates the conditions that the next layer exploits. Locating prediction markets within that architecture is the prerequisite for understanding both their epistemic function and their structural fragility.

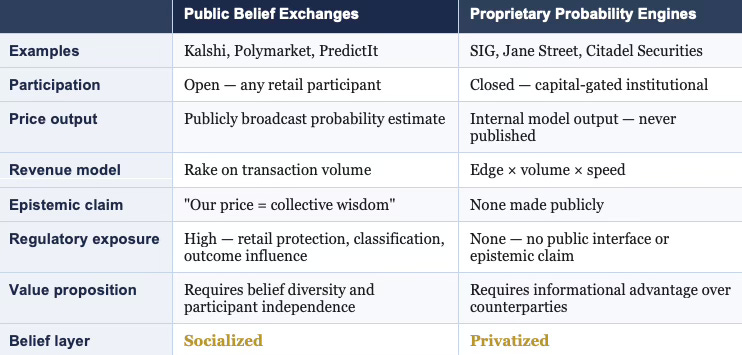

At the highest level of abstraction, the system follows a single continuous arc:

Signal → Belief → Position → Capital → Flow → Control

Each stage represents a distinct transformation of uncertainty. Raw signals — data, events, statements, rumors — enter the system at the left and undergo successive processing: aggregation into beliefs, translation of beliefs into positions, concentration of positions into capital, deployment of capital as flow, and crystallization of flow patterns into structural control. Prediction markets occupy the narrow band between belief formation and early capital deployment. Hedge funds and trading firms dominate the capital layer. Sportsbooks occupy the flow layer. Casinos represent flow optimization taken to its extractive limit. Regulators and platform architects define the control layer that governs what can exist at every stage below them.

Most analyses treat these as separate industries. The arc reveals them as successive phases of a single system — phases that share structural logic, exert mutual pressure, and drive one another toward predictable regime transitions. A sportsbook entering the prediction market space does not bring a foreign incentive structure into a pure epistemic environment; it accelerates a migration that the market’s own internal dynamics had already begun. A capital allocator exploiting prediction market prices does not corrupt a pristine truth-discovery mechanism; it completes a transition from belief aggregation to relative performance that volume growth had already initiated.

MindCast’s Consumer AI Device Series applied the same arc to device ecosystems across four installments, producing a prior validation of the framework’s cross-domain applicability.

Installment I — The Intelligence Gap: Apple’s AI Strategy and the Commoditization Bet established the drift-stable equilibrium pattern in Apple’s AI strategy — surface metrics stable while the internal trajectory deteriorates — mapping directly onto the truth-seeking-to-extraction regime transition.

Installment II — The Apple AI Challenger Framework: Google, Samsung, and the Intelligence Layer modeled Google’s dual-loop position as the only institutional architecture sustaining governance under both scenario resolutions, the device ecosystem analog of a capital allocator operating simultaneously across multiple arc layers.

Installment III — The Consumer AI Device Intelligence Layer: Value Capture Under Interface Drift traced value capture migrating from the interface layer to the behavioral default layer — the device equivalent of the arc’s transition from belief aggregation to flow control.

Installment IV — How Cybernetic Feedback Latency, Loop Architecture, and Ashby’s Viability Condition Resolve Consumer AI Device Competition applied Ashby’s Law of Requisite Variety (“An Introduction to Cybernetics,” 1956) to the competitive system, grounding the phase transition conditions that determine when a mechanism can no longer sustain its epistemic function against structural pressures.

The series synthesis established the conclusion those four installments approached individually: device platforms transition from open epistemic environments toward closed control architectures through the identical incentive drift sequence — early-stage openness attracts epistemic diversity; value concentration attracts capital allocators who optimize for extraction; platform architects respond by designing for engagement over exploration; the control layer crystallizes. The arc is not a prediction market phenomenon. A system-level dynamic governs any architecture in which distributed belief or preference production is progressively captured by capital and institutional control. Prediction markets represent one particularly transparent instantiation because the belief layer is visible — priced, public, and apparently objective. Transparency makes them useful analytical objects. Transparency also makes them uniquely vulnerable to manipulation.

The arc establishes the frame. Every subsequent section — the distinction between belief markets and capital markets, the three regime states, the actor classes, the pressures driving drift — derives its analytical precision from the arc’s structure. Understanding what prediction markets are requires first understanding where they sit.

Each stage of the arc operates under a distinct governing economic logic. Hayek governs the signal-to-belief transformation: signals carry dispersed local knowledge, and the aggregation mechanism at the belief layer is the Hayekian price system applied to probability rather than to resource allocation. Becker governs the belief-to-position transition: actors respond to the payoff function the market actually offers, not to the epistemic function it claims to serve, and once that payoff function rewards strategic positioning over honest belief expression, Beckerian rational choice predicts the transition from truth-seeking to strategic behavior.

Nash-Stigler governs the position-to-capital layer — drawing on Nash’s equilibrium theory (“Non-Cooperative Games,” 1951) and Stigler’s work on information and market structure (“The Economics of Information,” 1961): capital allocators model each other’s behavior, withhold information strategically, and compete for relative advantage in ways that game theory — not individual optimization — explains. Coase governs the capital-to-flow layer: sportsbooks and casinos are Coasian transaction cost architectures, minimizing the friction of retail participation while extracting rent from the flow; platform consolidation at this layer follows the Coasian logic that transaction cost reduction creates scale advantages that concentrate flow through fewer intermediaries.

Posner governs the flow-to-control transition: the control layer — regulatory classification, legal architecture, the distribution of permissions across actor classes — reflects incumbent political equilibrium rather than functional optimization, exactly as Posnerian institutional analysis predicts. The arc is not merely a description of how markets behave. It is a map of where governing economic logic changes, and each transition point is where structural pressure accumulates.

Figure 3. Arc Economic Logic: Governing Tradition by Stage
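The stage-by-stage assignment described in the preceding paragraphs can be written down as a small lookup table. The sketch below only restates the text in Python; the names `ARC_STAGES`, `Transition`, and `governing_tradition` are illustrative and are not part of any MindCast tooling.

```python
from dataclasses import dataclass

# The six stages of the arc, in order, as given in the text.
ARC_STAGES = ("Signal", "Belief", "Position", "Capital", "Flow", "Control")


@dataclass(frozen=True)
class Transition:
    source: str     # stage the transformation starts from
    target: str     # stage it produces
    tradition: str  # economic tradition the text assigns to it


# One entry per adjacent pair of stages, following the article's assignment.
ARC_TRANSITIONS = (
    Transition("Signal", "Belief", "Hayek"),
    Transition("Belief", "Position", "Becker"),
    Transition("Position", "Capital", "Nash-Stigler"),
    Transition("Capital", "Flow", "Coase"),
    Transition("Flow", "Control", "Posner"),
)


def governing_tradition(source: str, target: str) -> str:
    """Return the tradition governing the step from `source` to `target`."""
    for t in ARC_TRANSITIONS:
        if (t.source, t.target) == (source, target):
            return t.tradition
    raise KeyError(f"{source} -> {target} is not an adjacent arc transition")
```

For example, `governing_tradition("Belief", "Position")` returns `"Becker"`, the transition the text identifies as the point where strategic behavior can displace honest belief expression.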

III. Core Distinction: Belief Markets vs. Capital Markets

Prediction markets function as belief markets. Participants convert private information and judgment into public probability estimates by placing positions, and prices aggregate those estimates into a synthetic consensus. The mechanism depends on a specific set of structural conditions: participants must hold reasonably independent beliefs, incentives must reward accuracy over narrative alignment, and feedback must arrive cleanly enough to support learning.

Capital markets function under a different logic entirely. Hedge funds and trading firms deploy capital to extract profit from mispricing and strategic positioning. Accuracy serves as an input, not an end. A capital market participant holding correct beliefs but wrong position sizing loses. Conversely, a participant holding slightly wrong beliefs but superior information about other participants’ intentions can win. Capital markets are games of relative performance, not epistemic tournaments.
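The claim that correct beliefs with wrong position sizing can still lose can be illustrated with the Kelly criterion, a standard sizing rule that the article does not itself invoke. The sketch assumes a binary contract paying 1 on a win and 0 otherwise, no fees, repeated independent bets, and a trader whose probability estimate is exactly right. All function names are illustrative.

```python
import math


def log_growth(p: float, price: float, fraction: float) -> float:
    """Expected log growth of bankroll per bet.

    p        -- true probability of the event (assumed known to the trader)
    price    -- contract price in (0, 1); the contract pays 1 on a win
    fraction -- share of bankroll staked, in [0, 1)
    """
    if not (0.0 < price < 1.0 and 0.0 <= p <= 1.0 and 0.0 <= fraction < 1.0):
        raise ValueError("require 0 < price < 1, 0 <= p <= 1, 0 <= fraction < 1")
    wealth_if_win = 1.0 - fraction + fraction / price  # stake buys fraction/price contracts
    wealth_if_lose = 1.0 - fraction
    return p * math.log(wealth_if_win) + (1.0 - p) * math.log(wealth_if_lose)


def kelly_fraction(p: float, price: float) -> float:
    """Growth-maximizing stake for a binary contract: (p - price) / (1 - price)."""
    return max(0.0, (p - price) / (1.0 - price))
```

With a true probability of 0.60 and a price of 0.50, the Kelly stake is about 20% of bankroll and yields positive expected log growth. Staking 50% on the same correct belief yields negative expected log growth, so the trader is right about the event and still expects to shrink over repeated bets.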

Prediction markets fail systematically when capital market logic colonizes the belief market structure. Once participants optimize for position profit rather than predictive accuracy — once the payoff function rewards strategic behavior over honest signal production — the aggregation mechanism degrades. Prices begin to reflect positioning dynamics rather than underlying probability estimates. The market continues to produce numbers, but those numbers cease to mean what the interface claims they mean.

Identifying regime transition in real time requires more than observing price movements. The diagnostic tools come from the structure of incentives, the correlation of beliefs, and the feedback dynamics — not from the price series itself.

IV. Rationality as a Conditional System Property

One of the most durable errors in prediction market discourse treats truth-discovery as the market’s natural equilibrium state. Markets do not naturally gravitate toward truth. Truth emerges as a contingent property of system conditions. When conditions hold, truth is the output. When conditions degrade, strategic behavior or extraction displaces it.

Truth Role (T) = f(rationality structure, incentive alignment, independence of signals, feedback integrity)

Three distinct regimes govern outcomes across the arc.

In the truth-seeking regime, independent actors operate under incentives that reward accuracy above all else. Judgment errors distribute independently across participants, and aggregation causes them to cancel. No single actor can reliably shift the price away from the true probability without losing money. Truth emerges not because participants are virtuous but because the incentive structure punishes deviation from honest belief expression.

In the strategic regime, actors model each other. Participants no longer simply express beliefs — they ask what other actors believe, what positions they hold, and how to exploit the gap between market prices and underlying realities created by strategic positioning. Accuracy becomes instrumental. A participant may hold correct beliefs about an outcome while deliberately trading in the opposite direction to obscure position, accumulate a larger stake, or trigger stop-losses in other participants. Truth ceases to be the primary output of the market; relative advantage becomes the objective function.

In the extraction regime, the market no longer even pretends to aggregate beliefs honestly. Platform architects design participation structures to maximize engagement and loss rates. Behavioral biases — overconfidence, the gambler’s fallacy, recency weighting, social proof — are not anomalies to be corrected but features to be amplified. Truth becomes irrelevant to the operator.

Prediction markets aspire to the first regime. Hedge funds operate in the second. Casinos operate in the third. The critical analytical question is not only which regime a market occupies at a given moment but which structural pressures are pushing it toward regime transition, and how fast.

Identifying those pressures requires mapping the actors who create them. Each actor class within the arc carries a distinct incentive structure, exerts characteristic force on adjacent actors, and contributes to or undermines the conditions that sustain each regime.

V. Archetypal Players Across the Arc

Eleven actor classes populate the arc. Each occupies a defined position, operates under distinct incentive geometry, and exerts characteristic pressure on adjacent actors. No actor operates in isolation. Signal generators feed narrative amplifiers who distort the belief layer that arbitrageurs exploit on behalf of capital allocators who drain the liquidity that retail participants were providing to platform architects optimizing for volume. The arc is not a taxonomy — it is a system of interdependencies, and understanding any actor requires understanding what it takes from and delivers to the actors beside it.

Signal Generators

Raw information production originates here. Journalists, analysts, data vendors, research institutions, and social media participants all function as signal generators. Incentives in this class reward attention and relevance, not accuracy. A wrong prediction generating significant engagement earns more than a correct prediction generating none. Signal generators supply the upstream input layer for all downstream actors, which means their bias structure — toward novelty, toward conflict, toward confident assertion — propagates through the entire arc. Narrative amplifiers selectively draw from signal generators, accelerating whatever content best synchronizes downstream belief. Capital allocators monitor signal generator output for exploitable divergence between narrative and underlying probability.

Belief Aggregators (Prediction Markets)

Dispersed signals translate into probability estimates here. When the mechanism functions, prices encode the collective judgment of genuinely independent participants, each with private information that others lack. When narrative amplifiers have already synchronized participant beliefs, the market aggregates correlated priors rather than independent signals, producing a price that encodes shared bias rather than distributed knowledge. The aggregator cannot diagnose its own failure from the inside; it continues generating prices regardless of whether those prices carry epistemic content.

Narrative Amplifiers

Individual beliefs convert into collective priors through narrative amplification. Media ecosystems, social networks, and influential commentators collapse the diversity of participant judgment into synchronized expectation. MindCast’s Predictive Cybernetics Suite formalizes this as feedback-driven signal alignment: narrative amplifiers create the conditions under which the error-cancellation mechanism fails, because errors are no longer independent — they share a common cause. Narrative amplifiers benefit from the appearance of a functioning belief market because market prices lend authority to the narratives they distribute. A Polymarket or Kalshi price becomes a data point amplifiers cite as evidence of consensus, further entrenching the prior the market was encoding.

Retail Position Takers

Retail participants provide liquidity and behavioral texture. Operating under bounded rationality, retail participants follow narratives, anchor to round numbers, exhibit loss aversion, and update beliefs asymmetrically. Retail participation generates the volume that makes markets liquid and the behavioral predictability that makes them profitable for arbitrageurs and capital allocators. Platform architects design participation interfaces specifically to maximize retail engagement — which means optimizing for the behavioral tendencies that make retail participants exploitable rather than for the accuracy incentives that would make them epistemically valuable.

Arbitrageurs

Arbitrageurs bridge belief markets and capital markets. By exploiting price inconsistencies across platforms and instruments, arbitrageurs introduce financial discipline and accelerate price discovery. Arbitrage activity also accelerates drift toward capital market logic: arbitrageurs are indifferent to why a price is wrong; they care only that it is wrong and that correcting it is profitable. A market dominated by arbitrageurs improves in short-run accuracy and degrades in long-run epistemic character, because the participant composition has shifted from truth-motivated forecasters to profit-motivated traders whose presence improves pricing on observable signals while reducing the informational diversity that makes aggregation epistemically productive.

Capital Allocators (Hedge Funds / Trading Firms)

Capital allocators — hedge funds, trading firms, and sophisticated institutional participants — treat prediction markets as one signal environment among many. Beliefs produced by prediction markets become inputs to exploit, not outputs to trust. Capital allocators withhold their own information, act strategically, and systematically extract value from retail participants and naive arbitrageurs. Their presence is empirically associated with improved short-run price accuracy and degraded long-run epistemic integrity. More consequentially, capital allocators operate across the arc simultaneously — extracting signal from belief markets, deploying it in capital markets, and influencing flow patterns in ways that feed back into the upstream belief layer they were ostensibly drawing from.

Odds Setters (Sportsbooks)

Sportsbooks price probabilities to balance flow and manage risk, not to produce truth. A line moves not because new information arrives about the underlying event but because money distribution across positions creates unacceptable risk for the book. Sportsbooks operate in a fundamentally different mode from prediction markets despite superficial similarity: they optimize for margin, not epistemic accuracy. A sportsbook’s entry into prediction-market-adjacent spaces does not import truth-discovery incentives into the flow optimization framework; it exports flow optimization incentives into what was previously a belief market environment.

Flow Optimizers (Casinos)

Casinos represent the extractive endpoint of the arc. Platform design, environmental engineering, and product architecture converge on a single objective: maximizing participation duration and loss rates. Bounded rationality is not a friction to be overcome but a resource to be harvested. Flow optimizers pull platform architects toward extraction dynamics by demonstrating that engagement-maximizing design is profitable: volume is more reliably monetized through behavioral exploitation than through epistemic function.

Platform Architects

Platform architects define the rules of engagement: contract design, participation requirements, liquidity mechanisms, resolution criteria. These structural choices determine whether a market sustains truth-seeking dynamics or engineers drift toward strategic and extraction behavior from the start. Platform architects face a persistent tension: epistemic accuracy requires design choices that reduce volume — restricting high-frequency trading, enforcing position limits, preserving retail participant diversity — while revenue generation rewards volume maximization. In competitive market environments, platform architects who prioritize epistemic accuracy lose market share to those who optimize for engagement, creating a selection pressure toward extraction dynamics regardless of individual platform intentions.

Regulators

Regulators classify and constrain the system — and classification is not a neutral administrative act. Regulatory decisions determine which actors can participate, at what scale, under what disclosure requirements, and whether the applicable framework is designed for investor protection, gambling regulation, or neither. A prediction market operating as a genuine truth-seeking belief aggregator may be classified as gambling because its surface features activate the wrong regulatory category; a sportsbook optimizing for behavioral extraction may escape the epistemic scrutiny its function warrants because it has established political relationships that insulate it from reclassification. The legal architecture gap established in Section I — the structural space between gambling law and commodity futures law where prediction markets actually operate — exists in part because regulators lack the functional classification tools to distinguish markets by regime state rather than by surface resemblance. Public choice theory — grounded in Buchanan and Tullock’s “The Calculus of Consent” (1962) — establishes that this is not an oversight: classification decisions follow the political equilibrium among incumbents, and incumbents benefit from frameworks that protect their existing positions rather than from frameworks optimized for epistemic output. Regulators are simultaneously the control layer governing what the system can do and the actors most structurally constrained by the political equilibrium in which they operate — which is why regime-state functional analysis, not surface classification, is the prerequisite for effective regulatory action.

Outcome Influencers

Outcome influencers represent the most structurally destabilizing actor class: participants with the ability to shape the underlying events being predicted. Outcome influencers collapse the separation between observation and causation. When an actor holds a large position on an election outcome and simultaneously controls campaign messaging, the prediction market no longer measures an independent probability — it measures the actor’s own intentions, partially laundered through a price mechanism. MindCast’s Seahawks Super Bowl LX CDT Foresight Simulation demonstrated precisely this dynamic: actors within the system influence both expectations and outcomes simultaneously, and the market price encodes that entanglement rather than resolving it. Outcome influencers render the feedback mechanism structurally unreliable — not because resolution is delayed or noisy, but because the outcome being resolved is itself a function of the predictions being made.

Eleven actors, eleven distinct incentive structures, eleven characteristic pressures on the actors beside them. What the individual entries cannot show is how those incentive structures interact when they collide within the same market environment — which is the question Section VI addresses.

VI. Incentive Geometry Across Systems

Mapping incentive structures across actor classes reveals the geometric logic driving systemic drift. Prediction markets, under ideal conditions, incentivize epistemic accuracy. Hedge funds incentivize relative advantage. Sportsbooks incentivize balanced flow and margin. Casinos incentivize maximum behavioral extraction. Each incentive structure is internally coherent; each is fundamentally incompatible with the others when introduced into the same operating environment.

Drift occurs when actors operating under capital market or flow optimization incentives enter belief market structures. Capital allocators entering prediction markets do not adopt belief market incentives — they import capital market incentives into a belief market environment, degrading the conditions that sustain epistemic value. Volume-chasing platform design produces the same result through a different mechanism: when prediction markets optimize for participation rates rather than forecast accuracy, they migrate structurally toward sportsbook dynamics. When they optimize for behavioral engagement, they migrate toward casino dynamics.

The trajectory is directional and largely irreversible under normal conditions. A prediction market that has drifted into strategic regime behavior can return to truth-seeking only through deliberate architectural intervention — and absent that intervention, drift continues.

Incentive geometry explains the direction and velocity of systemic drift. The academic traditions that ground the framework explain why each incentive structure produces the behavior it does — and why the combination of those structures in a single operating environment generates outcomes that no single tradition could predict alone.

VII. The Academic Architecture

The arc framework does not emerge from a single intellectual tradition — it integrates several that have each explained a different layer of the system prediction markets inhabit. Presenting those traditions as a catalogue would miss the point. Each one explains what the others leave implicit, and the framework’s analytical power derives from their interaction rather than from any single contribution.

Hayek’s insight — formalized in “The Use of Knowledge in Society” (1945) — that markets aggregate dispersed, locally-held knowledge that no central planner could access provides the foundational logic for prediction markets as belief aggregators. Prediction markets represent a pure application of the Hayekian mechanism, stripped of the real-resource allocation function that grounds ordinary price signals in consequential decisions. Detaching the signal from consequential allocation increases the mechanism’s fragility: participants in real asset markets face material consequences that discipline strategic behavior; prediction market participants face only position risk, which capital-rich actors can absorb with minimal epistemic cost.

Becker’s rational choice framework — developed across “The Economic Approach to Human Behavior” (1976) — explains why the Hayekian mechanism fails under pressure. Actors respond to incentives. When the incentive structure rewards strategic positioning over honest belief expression, rational actors position strategically. The Beckerian actor does not betray the truth-seeking purpose of the market — the actor simply optimizes for the payoff function the market actually offers, which under strategic conditions differs fundamentally from the payoff function the market claims to offer. Prediction markets fail not because participants are irrational but because rational participants under misaligned incentives systematically produce irrational-looking aggregate outputs.

Fama’s efficient market hypothesis — first systematically articulated in “Efficient Capital Markets: A Review of Empirical Work” (1970) — specifies the conditions under which prices fully reflect available information. Applying Fama rigorously to prediction markets reveals that most operate in a state of pseudo-efficiency: prices adjust in response to observable signals, producing the appearance of information incorporation, while systematic biases from correlated beliefs, thin liquidity, and strategic withholding degrade the quality of that incorporation. A market can be locally efficient — responsive to new information within a session — while remaining structurally inefficient in the sense that prices fail to reflect the true probability distribution.

Posner’s institutional analysis — developed in “Economic Analysis of Law” (1973) and elaborated through the law and economics tradition — supplies the regulatory layer that Hayek, Becker, and Fama leave underspecified. Legal classification does not follow functional analysis; it follows political equilibrium. Prediction markets face the persistent threat of regulatory reclassification as gambling not because their epistemic function is indistinguishable from gambling but because their surface features resemble gambling in ways that activate existing regulatory categories and incumbent political interests. The classification decision reshapes the incentive structure at every downstream layer, demonstrating that institutional design is not a neutral container for market function but an active determinant of what function the market can perform.

Mechanism design theory specifies the conditions under which designed institutions achieve their intended equilibria. Prediction markets rest on mechanism design assumptions — truthful revelation, independence of types, robust resolution criteria — that break systematically under manipulation, thin liquidity, correlated beliefs, and outcome influence. Recognizing prediction markets as mechanism design problems rather than naturally occurring price discovery systems reframes the diagnostic question: not whether markets work in the abstract, but whether the specific mechanism deployed in a specific context can sustain its equilibrium conditions against the structural pressures acting on it.

Coase’s transaction cost theory — established in “The Nature of the Firm” (1937) and “The Problem of Social Cost” (1960) — explains the flow layer that the other traditions leave unaddressed. Coase established that firms and markets exist on a continuum governed by transaction costs: activities are internalized within firms when the cost of market transactions exceeds the cost of internal coordination, and exposed to markets when the reverse holds. Applied to the arc, Coasian logic explains why sportsbooks and flow optimizer platforms consolidate around scale advantages: reducing the transaction costs of retail participation to near zero concentrates flow through fewer intermediaries, and the operator captures the spread between frictionless participation and the true cost of the underlying risk.

Platform architects at the flow layer are making Coasian boundary-of-the-firm decisions when they choose which prediction functions to internalize, which to expose to market participants, and which to bundle with engagement mechanics that increase switching costs. Coase also explains why proprietary probability engines remain private: when the transaction cost of revealing model output exceeds the cost of trading on it privately, rational actors keep their probability estimates internal. The public/private structural distinction established in Section I is, at its economic foundation, a Coasian transaction cost choice.

Behavioral economics and market microstructure complete the picture from below. Behavioral economics establishes that systematic cognitive biases are not noise — they are predictable, exploitable, and amplifiable. Narrative amplification converts individual-level biases into collective distortions; a single anchoring effect operating across thousands of correlated participants can shift market prices in ways that superficially resemble genuine information incorporation while encoding a shared cognitive error.

Market microstructure establishes that price discovery depends on the composition and behavior of the participant pool. Thin liquidity, concentrated informed participation, and retail domination each produce characteristic distortions in the price formation process — distortions that look different from the outside but share a common structural cause.

Taken together, these traditions form a layered analytical architecture. Hayek explains the aspiration. Becker explains the failure mode. Fama specifies the efficiency conditions that most prediction markets only appear to meet. Posner explains the regulatory constraint. Coase explains the platform economics that govern the flow layer. Mechanism design explains the architectural dependency. Behavioral economics and microstructure explain the specific pathways through which the architecture degrades. MindCast adds the layer each tradition leaves implicit, transition modeling: the capacity to predict not merely what each layer does under stable conditions, but when and why stable conditions will break and what regime replaces them.

Three forces drive those breaks. Each operates independently of the others — and each compounds their effect when they operate together.

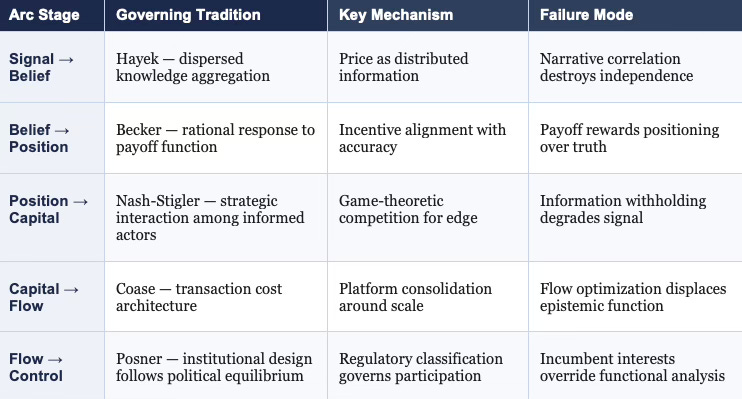

VIII. Structural Pressures Driving Drift

Incentive drift, belief correlation, and feedback distortion do not require bad actors or deliberate manipulation to move a prediction market out of truth-seeking behavior. Each force operates through individually rational decisions that aggregate into systemic degradation — which is why they are difficult to detect from inside the market and why identifying them requires the external vantage point the arc framework provides.

Figure 4. Three Structural Pressures Driving Regime Drift

Correlation of Beliefs

Correlation of beliefs represents the most fundamental threat to the Hayekian aggregation mechanism. Error cancellation depends on independence: when participant A overestimates probability and participant B underestimates it, their errors offset in the aggregate price. Narrative amplification breaks this independence. When signal generators produce synchronized content and distribution platforms amplify it uniformly across participant populations, participants begin with shared priors rather than independent ones. Errors no longer cancel — they compound. The aggregate price encodes the shared bias rather than the true probability, and no mechanism internal to the market can detect or correct the error because the error is distributed identically across all participants.
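The error-cancellation argument can be illustrated with a small simulation. What follows is a minimal sketch under stated assumptions, not a model drawn from the framework itself: the function name `aggregate_error`, the Gaussian error terms, the 0.10 noise level, and the `shared_weight` parameter (the share of each participant's error variance that comes from a common narrative shock) are all hypothetical choices made for illustration. Estimates are not clipped to the 0 to 1 range.

```python
import random
import statistics


def aggregate_error(n_participants, shared_weight, trials=500, noise_sd=0.10, seed=0):
    """Mean absolute error of the crowd's average estimate of a true probability.

    shared_weight (0 to 1) is the assumed share of each participant's error
    variance that comes from one common shock rather than private noise.
    """
    rng = random.Random(seed)
    true_p = 0.50  # illustrative true probability
    errors = []
    for _ in range(trials):
        common = rng.gauss(0.0, noise_sd)  # one narrative shock shared by everyone
        estimates = []
        for _ in range(n_participants):
            private = rng.gauss(0.0, noise_sd)  # independent judgment error
            err = (shared_weight ** 0.5) * common + ((1 - shared_weight) ** 0.5) * private
            estimates.append(true_p + err)
        errors.append(abs(statistics.fmean(estimates) - true_p))
    return statistics.fmean(errors)


independent = aggregate_error(400, shared_weight=0.0)
correlated = aggregate_error(400, shared_weight=0.5)
print(f"independent beliefs: {independent:.4f}")
print(f"correlated beliefs:  {correlated:.4f}")
```

With fully independent errors, the aggregate error shrinks as participants are added. Once a shared component is present, adding participants averages away only the private noise, and the common shock survives in the price.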

Incentive Drift

Incentive drift converts accuracy-rewarding environments into profit-rewarding ones through a predictable sequence. Early-stage prediction markets attract participants genuinely motivated by accurate forecasting. Volume growth attracts arbitrageurs and capital allocators. Their presence improves short-run price accuracy and degrades long-run epistemic integrity by shifting the equilibrium participant composition away from truth-motivated forecasters and toward profit-motivated traders. Platform architects respond to volume growth by optimizing participation design for engagement, completing the drift from epistemic to extractive incentive structures. Each step in the sequence is individually rational for the actor taking it; the aggregate consequence is systematic degradation of the mechanism’s original function.

Feedback Distortion

Feedback distortion degrades the learning mechanism that prediction markets rely on to correct systematic errors over time. Delayed or noisy resolution prevents participants from updating beliefs in response to prediction outcomes. Outcome influence introduces a more corrosive distortion: when participants can affect the events being predicted, the feedback relationship between prediction and outcome inverts. The market no longer measures an independent external reality — it measures a partially endogenous process in which participant expectations influence the outcome being measured, which in turn influences subsequent expectations. The feedback loop that was supposed to enforce epistemic discipline instead amplifies whatever bias the market encoded at the moment outcome influence entered the system.

IX. Phase Transition Conditions

The three drift pressures identified in Section VIII do not degrade prediction market accuracy gradually and uniformly. Degradation follows threshold logic: markets sustain truth-generating behavior until specific structural conditions fail, at which point the system transitions to a different regime. Understanding those threshold conditions is the prerequisite for detecting transitions before they complete.

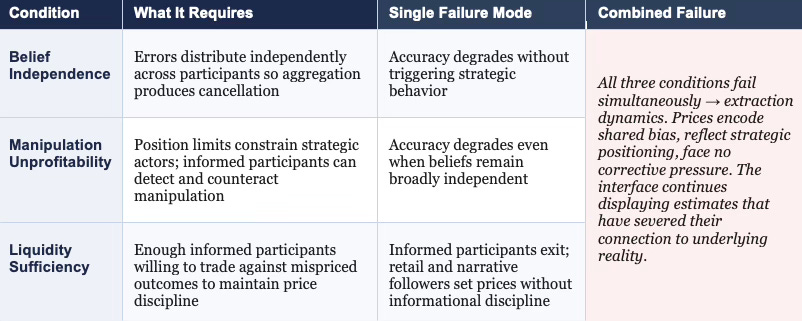

Figure 5. Phase Transition Conditions: Failure Modes and Regime Consequences

Prediction markets sustain truth-generating behavior only when three conditions hold simultaneously. Participant beliefs must remain sufficiently independent that aggregation produces cancellation rather than amplification of errors. Manipulation must remain unprofitable — either because position limits constrain strategic actors or because informed participants can reliably detect and counteract manipulation. Liquidity must remain sufficient to attract genuinely informed participants willing to trade against mispriced outcomes.

Failure of any single condition initiates regime transition toward strategic behavior. Belief correlation alone degrades aggregate accuracy without necessarily triggering strategic manipulation. Profitable manipulation alone can degrade accuracy even when participant beliefs remain broadly independent. Thin liquidity alone drives informed participants out, leaving retail participants and narrative followers to set prices without informational discipline.

Failure of all three conditions simultaneously produces extraction dynamics. When beliefs are correlated, manipulation is profitable, and informed participants have exited, the market produces prices that encode shared biases, reflect strategic positioning, and face no corrective pressure from independent informed trading. The interface continues to display probability estimates — estimates that have severed their connection to the underlying reality being predicted.
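The threshold logic above can be written as a small decision rule. This is a sketch only: the class and function names are hypothetical, each condition is reduced to a yes/no flag that an analyst would have to assess separately, and the case where exactly two conditions fail is not addressed in the text, so it is assumed here to remain in the strategic regime.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketConditions:
    """Hypothetical yes/no assessment of the three sustaining conditions."""
    beliefs_independent: bool
    manipulation_unprofitable: bool
    liquidity_sufficient: bool


def classify_regime(c: MarketConditions) -> str:
    """Map the three conditions onto a regime label."""
    held = sum([c.beliefs_independent, c.manipulation_unprofitable, c.liquidity_sufficient])
    if held == 3:
        return "truth-seeking"  # all three conditions hold simultaneously
    if held == 0:
        return "extraction"     # all three conditions have failed
    # One failure initiates transition toward strategic behavior;
    # treating two failures the same way is an assumption of this sketch.
    return "strategic"


print(classify_regime(MarketConditions(True, True, True)))
print(classify_regime(MarketConditions(False, True, True)))
print(classify_regime(MarketConditions(False, False, False)))
```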

Phase transition conditions define when a market stops being what its interface claims it is. The foresight predictions that follow apply this logic forward, tracing where the structural pressures documented in Section VIII are driving public prediction markets over the next decade.

X. Foresight Predictions

Foresight predictions derived from a structural framework carry a different epistemic status than trend extrapolation. Each prediction below follows causally from the arc’s internal logic — from the incentive drift sequence, the phase transition conditions, and the actor class dynamics documented in prior sections. Where the mechanism is clear, the prediction follows with high confidence. Where timing is uncertain, the structural direction is not.

Prediction markets will increasingly concentrate in high-engagement domains — sports, elections, entertainment outcomes — because these domains structurally guarantee the narrative amplification that drives retail participation volume. Accuracy is not the attractor; engagement is. Platform architects optimizing for revenue will follow engagement, not accuracy, and regulatory classification will follow the surface features of the resulting platforms rather than their epistemic function.

Aggregate accuracy in public prediction markets will decline measurably from current baselines as narrative correlation increases and retail participation grows. The mechanism is straightforward: growing platforms attract capital allocators whose strategic behavior degrades belief independence; growing platforms attract platform architects who optimize for engagement rather than accuracy; growing platforms attract narrative amplifiers who use market prices to lend authority to the synchronized beliefs those prices now merely reflect. The degradation is self-reinforcing.

High-quality forecasting will migrate toward private institutional systems — proprietary models, closed-access platforms, and internal capital market infrastructure. When the public belief market is dominated by correlated retail participants and strategic capital allocators, institutional actors with genuine private information face no incentive to reveal it through public prediction market positions. Revelation is costly — it moves prices against the revealing actor — while private deployment captures the full informational advantage. The gap between publicly available probability estimates and the private beliefs of well-informed institutional actors will widen as public prediction markets degrade.

Hybrid systems will emerge that combine prediction market interfaces with capital deployment strategies. Platforms will present as belief aggregators while operating increasingly as flow optimizers, extracting value from retail participation through interface design, engagement mechanics, and position structure. Regulatory classification will struggle to keep pace with functional convergence because classification authorities face the same political equilibrium pressures that Posner’s framework identifies: incumbent sportsbooks and financial platforms have stronger regulatory relationships than novel prediction market entrants, and classification decisions will protect incumbents.

The outcome influencer problem will worsen as prediction markets expand into domains — political outcomes, regulatory decisions, corporate events — where actors with large positions also possess the capacity to influence the events they are betting on. Market prices in these domains will increasingly encode actor intentions rather than independent probability estimates, creating systematic mispricing that sophisticated actors will exploit and retail participants will absorb.

Each prediction is falsifiable against observable evidence. Volume patterns, spread behavior, regulatory filings, and institutional participation rates all generate testable signals. Section XI translates these structural predictions into the operational diagnostics an institutional actor can apply in real time.
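One way to make the first prediction testable is to track a proper scoring rule over time. The sketch below computes the Brier score (the mean squared error between forecast probabilities and binary outcomes) for two periods. The function name and all sample numbers are illustrative assumptions, not data from any platform. A score that rises across comparable contract sets would be consistent with the predicted decline in accuracy.

```python
from typing import Sequence


def brier_score(prices: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error between closing prices (read as probabilities) and 0/1 outcomes.

    Lower is better; a constant 0.5 forecast scores 0.25.
    """
    if len(prices) != len(outcomes) or not prices:
        raise ValueError("prices and outcomes must be non-empty and equal length")
    return sum((p - o) ** 2 for p, o in zip(prices, outcomes)) / len(prices)


# Hypothetical closing prices and resolved outcomes for two periods.
baseline = brier_score([0.9, 0.2, 0.7, 0.1], [1, 0, 1, 0])
later = brier_score([0.6, 0.5, 0.4, 0.6], [1, 0, 1, 0])

# A positive difference means accuracy degraded relative to the baseline.
degradation = later - baseline
```

Comparing scores only makes sense across contract sets of similar difficulty, so in practice the baseline and later samples would need to be matched by domain and time-to-resolution.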

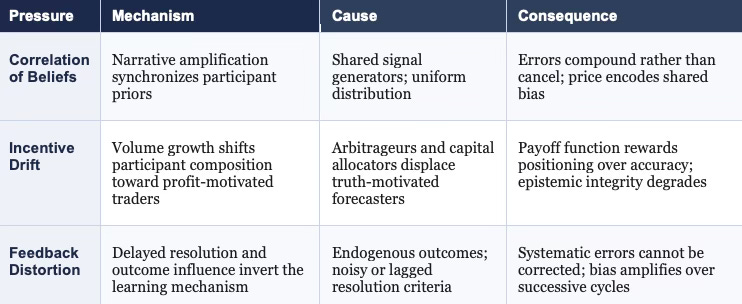

XI. Signal Classification Triggers: Operational Implications by Regime

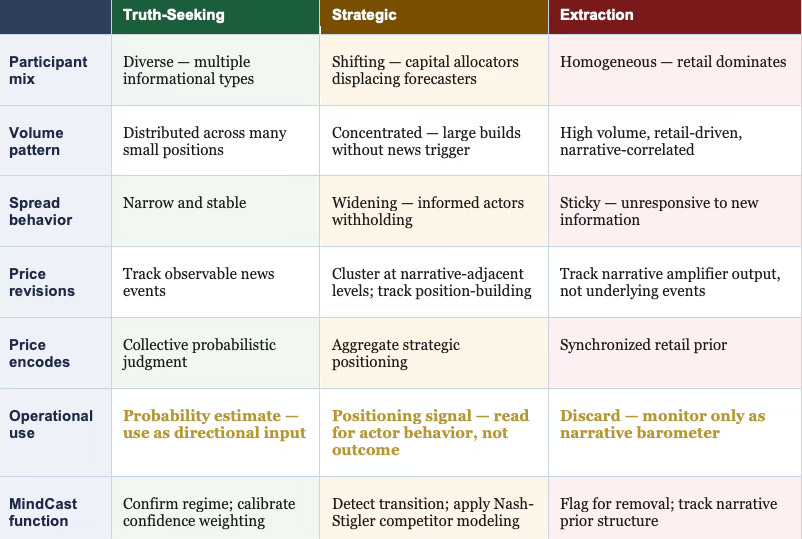

Theory without operational translation serves analysis but not decision-making. The regime framework is analytically precise, but an institutional actor needs to know not just that regimes exist but how to detect which regime a given market occupies at a given moment — and what to do differently depending on the answer. What follows converts the structural framework into a classification system with observable indicators and explicit operational implications.

Figure 2. Regime Classification: Observable Indicators and Operational Implications

Truth-Seeking Regime: Indicators and Operational Use

A prediction market operates in truth-seeking mode when participant composition remains diverse across informational types, bid-ask spreads are narrow and stable without sustained directional drift, volume distributes across many small positions rather than concentrating in a few large ones, and price revisions track observable news events rather than position-building patterns. Under these conditions, market prices carry genuine probabilistic content.

Operationally: prices are usable signals. Weight them as collective probability estimates with appropriate confidence discounting for thin liquidity. A sophisticated actor ingesting these prices into a broader model can treat them as directionally informative inputs — imperfect aggregations of distributed private information that improve on any single participant’s estimate.
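The confidence discounting for thin liquidity described above can be sketched as shrinkage toward the analyst's own prior: the thinner the book, the less weight the market price receives. The function name, the saturating weight formula, and the `depth_scale` parameter are assumptions chosen for illustration; the framework itself does not specify a discounting rule.

```python
def discounted_estimate(market_price: float, prior: float,
                        depth: float, depth_scale: float = 50_000.0) -> float:
    """Blend a market price with a prior, trusting the price more as depth grows.

    depth       -- resting order-book depth near the mid, in dollars (hypothetical input)
    depth_scale -- depth at which the market receives exactly half the weight (assumed value)
    """
    if not (0.0 <= market_price <= 1.0 and 0.0 <= prior <= 1.0):
        raise ValueError("probabilities must lie in [0, 1]")
    if depth < 0 or depth_scale <= 0:
        raise ValueError("depth must be >= 0 and depth_scale > 0")
    weight = depth / (depth + depth_scale)  # 0 for an empty book, approaches 1 for a deep one
    return weight * market_price + (1.0 - weight) * prior


# Same quoted price, different depth: the thin market barely moves the estimate.
thin = discounted_estimate(market_price=0.70, prior=0.50, depth=5_000)
deep = discounted_estimate(market_price=0.70, prior=0.50, depth=450_000)
```

With these assumed inputs the deep market pulls the estimate most of the way to the quoted price, while the thin market leaves it close to the prior.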

Strategic Regime: Indicators and Operational Use

Transition into strategic regime behavior produces a characteristic pattern. Volume spikes without corresponding news events — participants are building positions, not updating beliefs. Spreads widen as informed actors begin withholding information rather than expressing it. Price movements cluster around narrative-adjacent levels as retail participants drive short-term direction while sophisticated actors accumulate beneath. Large position concentration becomes visible in order book depth. Retail participation as a share of total volume rises as sophisticated actors shift from price-making to price-taking, extracting liquidity rather than providing it.

When these signals appear, the price no longer encodes probabilistic consensus. It encodes the aggregate of strategic positioning, which makes it a better indicator of what large actors want others to believe than of what those large actors themselves believe.

Operationally: prices become adversarial signals. Read them for positioning intelligence — who is building exposure, in which direction, and at what velocity — not as forward probability estimates. Strategic regime prices are informative about actor behavior, not outcome likelihood. A capital allocator who continues treating these prices as probability estimates after the regime shift is transferring information advantage to the actors who moved first.

Extraction Regime: Indicators and Operational Use

Extraction regime behavior is characterized by retail participation dominating total volume, platform design features that maximize session duration over prediction accuracy, narrative amplifier citations of platform prices as authoritative consensus, and price stickiness around publicly broadcast narratives regardless of new information arriving. Sophisticated actors have largely exited — the remaining participant pool is too homogeneous to sustain informational arbitrage, and the platform has optimized away the diversity that made the mechanism epistemically productive.

Operationally: prices are discard signals. Remove extraction-regime platforms from analytical input sets. Monitor them only as narrative barometers — indicators of what the retail belief environment currently reflects, useful as context for understanding the prior structure of a broader population, but carrying no informational content about the underlying event being priced.
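The three sets of indicators above can be combined into a simple rule-based classifier. The sketch below is a minimal illustration, not MindCast's method: the field names, the thresholds, and the two-of-three rule for the strategic regime are all assumptions, and a real implementation would need calibrated inputs for each indicator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketSnapshot:
    """Observable indicators for one market; every field is a hypothetical measurement."""
    retail_volume_share: float     # fraction of volume from retail accounts, 0..1
    top_position_share: float      # fraction of open interest held by the largest few accounts, 0..1
    spread_ratio: float            # current bid-ask spread divided by its trailing average
    news_linked_move_share: float  # fraction of price revisions that follow observable news, 0..1


def classify_regime(s: MarketSnapshot) -> str:
    """Return 'extraction', 'strategic', or 'truth-seeking' using assumed thresholds."""
    # Extraction: retail dominates volume and prices have stopped responding to news.
    if s.retail_volume_share >= 0.80 and s.news_linked_move_share <= 0.20:
        return "extraction"
    # Strategic: at least two of concentrated positions, widened spreads,
    # and price moves detached from news.
    strategic_flags = [
        s.top_position_share >= 0.40,
        s.spread_ratio >= 1.5,
        s.news_linked_move_share <= 0.50,
    ]
    if sum(strategic_flags) >= 2:
        return "strategic"
    return "truth-seeking"


# Operational implication for each regime, paraphrasing the text above.
OPERATIONAL_USE = {
    "truth-seeking": "use price as a discounted probability estimate",
    "strategic": "read price for positioning intelligence only",
    "extraction": "discard as input; monitor as narrative barometer",
}

snapshot = MarketSnapshot(retail_volume_share=0.55, top_position_share=0.45,
                          spread_ratio=1.8, news_linked_move_share=0.35)
regime = classify_regime(snapshot)
action = OPERATIONAL_USE[regime]
```

The extraction check runs first because its conditions are the most restrictive. Hard thresholds are a simplification, since a market near a boundary would flip labels on small changes in any one indicator.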

The Inversion Condition

A critical edge case requires explicit treatment. In both the strategic and extraction regimes, price movements can appear informationally rich — large, directional, and persistent — while carrying no epistemic content about the underlying event. A sophisticated actor who observes a large sustained price movement in a strategic-regime market and interprets it as new information entering the system is systematically miscalibrated. The movement more likely reflects position-building by a large actor whose entry distorts prices in the direction of their desired exit, or narrative amplification creating correlated retail positioning that produces apparent momentum without informational basis.

MindCast’s regime-state classification is the necessary prior for any interpretation of prediction market price movements. Without knowing which regime a market occupies, a price movement is ambiguous among three interpretations with divergent operational implications: new information has entered the system, a strategic actor is moving the price, or retail narrative correlation has produced apparent momentum. Getting the classification right before interpreting the price is the analytical prerequisite: not a refinement, but a condition of non-error.
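The dependence of interpretation on regime can be made concrete with the law of total probability. The sketch below assumes hypothetical conditional probabilities for what drives a large price move in each regime, then shows how the probability that a move reflects new information changes with the regime classification. Every number is illustrative, and the table and function names are assumptions introduced here.

```python
from typing import Dict

# P(cause of a large move | regime). Each row sums to 1. All values are hypothetical.
CAUSE_GIVEN_REGIME: Dict[str, Dict[str, float]] = {
    "truth-seeking": {"information": 0.80, "strategic": 0.15, "narrative": 0.05},
    "strategic":     {"information": 0.20, "strategic": 0.60, "narrative": 0.20},
    "extraction":    {"information": 0.05, "strategic": 0.15, "narrative": 0.80},
}


def cause_probabilities(regime_belief: Dict[str, float]) -> Dict[str, float]:
    """Marginalize over regime uncertainty: P(cause) = sum over r of P(cause | r) * P(r)."""
    if abs(sum(regime_belief.values()) - 1.0) > 1e-9:
        raise ValueError("regime probabilities must sum to 1")
    causes = {"information": 0.0, "strategic": 0.0, "narrative": 0.0}
    for regime, p_regime in regime_belief.items():
        for cause, p_cause in CAUSE_GIVEN_REGIME[regime].items():
            causes[cause] += p_cause * p_regime
    return causes


# No classification: the three readings are nearly indistinguishable.
unclassified = cause_probabilities(
    {"truth-seeking": 1 / 3, "strategic": 1 / 3, "extraction": 1 / 3}
)

# Confident strategic classification: positioning becomes the leading reading.
classified = cause_probabilities(
    {"truth-seeking": 0.1, "strategic": 0.8, "extraction": 0.1}
)
```

Under these assumed numbers, the same price move reads as roughly a coin flip among causes without a classification, and as most likely strategic positioning once the regime is identified.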

XII. Synthesis

Prediction markets represent a transitional layer within a broader system that transforms information into capital and ultimately into behavioral extraction. Truth emerges only when structural conditions hold, and those conditions face persistent, compounding pressure from incentive drift, belief correlation, and feedback distortion.

Evaluating prediction markets in isolation — asking whether a given platform produces accurate forecasts — answers the wrong question. The right question asks where a given market sits along the arc, which regime governs its current behavior, and which structural pressures are driving its next transition. A market producing accurate forecasts today under conditions of thin retail participation and low volume may produce degraded forecasts tomorrow when volume growth attracts capital allocators and narrative amplification synchronizes participant beliefs. Snapshot accuracy measures nothing about trajectory.

Cross-domain evidence supports this claim. MindCast’s Consumer AI Device Series traced the identical arc across device ecosystems: open platforms drifting toward closed extraction architectures through the same sequence of incentive drift, participant composition shift, and control layer crystallization documented here. The same phase transition conditions applied: epistemic diversity sustained the open platform’s value until capital concentration made extraction more profitable than aggregation, at which point the platform optimized for flow rather than truth. Prediction markets and device ecosystems are not analogous systems that happen to rhyme; they are instantiations of the same arc operating across different domains. The framework’s predictive power derives precisely from that domain-independence: the structural conditions that sustain truth-seeking behavior and the pressures that erode it are not specific to any industry. They are properties of any system in which distributed belief production is progressively exposed to capital market incentives.

The arc reveals not a category but a trajectory. A trajectory has direction, velocity, and structural causes. Understanding those causes — and predicting when and how they will drive regime transitions — constitutes the analytical objective.

Understanding the arc is necessary but not sufficient. Each actor within the system needs to know not just what the arc does in aggregate but what it means for their specific position within it — which is what Section XIII addresses.

XIII. MindCast Value Across the Arc

The arc framework is not a neutral academic exercise. Each actor class operating within the system faces a structural blind spot created by its own position on the arc — and that blind spot is not a matter of insufficient effort or analytical sophistication. The blind spot is architectural. Capital allocators cannot see the belief correlation degrading the price signals they ingest because detecting that correlation requires visibility above the capital layer, not within it.

Platform architects cannot see the incentive drift their volume metrics are generating because the drift is a second-order consequence of individually rational design choices that look correct from inside the platform. Regulators cannot see the functional dynamics that surface features increasingly fail to represent because regulatory classification frameworks were built around surface features, not functional analysis. No actor within the arc can see the full arc — because every actor’s information set is defined by its position within the system it is trying to understand.

MindCast operates outside the arc as the meta-layer that maps it. Each use case below opens with the specific problem the actor cannot solve from inside its position, then identifies the MindCast function that addresses it. The value propositions are most fully developed for actors operating at the belief-to-capital transition — the arc position where regime-state classification carries the highest decision stakes and where the blind spot created by arc position is most consequential. Actors at the flow and control layers — sportsbooks, casinos, and flow-layer platform architects — face different analytical problems addressed through separate MindCast publications on platform economics and regulatory trajectory. The five use cases below represent the primary institutional surface the present framework addresses.

For Capital Allocators and Hedge Funds

The unsolvable problem from inside the arc: a capital allocator drawing signal from a prediction market cannot determine, from the price series alone, whether a price movement reflects genuine information entering the system or strategic positioning by actors who moved earlier. The two interpretations carry opposite operational implications, and the price looks identical under both. MindCast resolves this by classifying regime state before the price movement is interpreted — identifying when the market has transitioned from truth-seeking to strategic behavior and reframing the signal accordingly. A capital allocator treating a strategic-regime price as a probability estimate is systematically miscalibrated in ways that compound over time. Beyond regime detection, MindCast’s Nash-Stigler Equilibrium architecture — a proprietary framework modeling competitive positioning across institutional actors under shared structural conditions — anticipates not just what the market price implies, but how other sophisticated actors will respond to it before that response becomes visible in prices.

For Platform Architects

The unsolvable problem from inside the arc: every individual design choice a platform architect makes in the direction of volume maximization appears locally rational — it increases participation, improves liquidity metrics, and grows revenue — while the aggregate of those choices drives the platform from truth-seeking to extraction dynamics. A platform architect optimizing local metrics cannot observe the regime-level consequence of those choices until the transition has already completed and sophisticated participants have exited. MindCast maps the downstream consequences of specific architectural decisions before they compound: how position limit changes affect participant composition, how resolution mechanism design affects feedback quality, how liquidity incentive structures shift the balance between truth-motivated forecasters and profit-motivated traders. The distinction between volume growth reflecting epistemic value creation and volume growth reflecting early extraction regime drift is not visible from inside the platform — it requires the meta-layer perspective that only MindCast’s position outside the arc provides.

For Regulators and Policy Actors

The unsolvable problem from inside the arc: regulatory classification based on surface features — small bets, discrete outcomes, retail access — systematically misclassifies truth-seeking prediction markets as gambling while failing to capture extraction-regime platforms that have adopted prediction market aesthetics without epistemic function. The surface features that activate gambling classification are present in both high-quality belief aggregators and pure extraction engines; the functional distinction that determines regulatory appropriateness is invisible to a classification framework built around surface resemblance. MindCast provides functional classification intelligence — distinguishing markets by their regime state rather than their surface form — alongside Posnerian analysis of the political equilibrium constraints that will shape how any classification decision is contested. Regulators receive both the analytical case for functional classification and the strategic map of where incumbent resistance will concentrate.

For Signal Generators and Media Actors

The unsolvable problem from inside the arc: a media actor operating as a narrative amplifier and citing prediction market prices as evidence of consensus cannot detect, from inside the amplifier role, that the prices it is citing are partially a reflection of its own prior amplification. The feedback loop is invisible to the actor creating it — narrative amplification drives belief correlation, belief correlation degrades the aggregation mechanism, and the degraded price then appears to confirm the narrative the amplification was distributing. MindCast models the full feedback pathway from narrative amplification through belief correlation through prediction market price formation, identifying the conditions under which a signal generator is amplifying its own prior output rather than independent information — a form of inadvertent self-citation that systematically overstates consensus.

For Outcome Influencers